Event camera

An event camera, also known as a neuromorphic camera,[1] silicon retina[2] or dynamic vision sensor,[3] is an imaging sensor that responds to local changes in brightness. Event cameras do not capture images using a shutter as conventional (frame) cameras do. Instead, each pixel inside an event camera operates independently and asynchronously, reporting changes in brightness as they occur, and staying silent otherwise.

Functional description

Event camera pixels independently respond to changes in brightness as they occur.[4] Each pixel stores a reference brightness level, and continuously compares it to the current brightness level. If the difference in brightness exceeds a threshold, that pixel resets its reference level and generates an event: a discrete packet that contains the pixel address and timestamp. Events may also contain the polarity (increase or decrease) of a brightness change, or an instantaneous measurement of the illumination level[5], depending on the specific sensor model. Thus, event cameras output an asynchronous stream of events triggered by changes in scene illumination.
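The per-pixel behaviour described above can be illustrated with a toy model. This is a minimal sketch, not a model of any particular sensor: the names `Event` and `EventPixel`, the threshold value, and the choice to compare log-brightness rather than raw brightness are all assumptions made for the example.

```python
import math
from dataclasses import dataclass
from typing import Optional


@dataclass
class Event:
    x: int         # pixel column (address)
    y: int         # pixel row (address)
    t: float       # timestamp in seconds
    polarity: int  # +1 = brightness increased, -1 = brightness decreased


class EventPixel:
    """Toy model of one event-camera pixel (hypothetical, for illustration)."""

    def __init__(self, x: int, y: int, initial_brightness: float,
                 threshold: float = 0.2):
        self.x = x
        self.y = y
        self.threshold = threshold  # assumed contrast threshold (log units)
        # Assumption: the reference level is stored as log-brightness.
        self.reference = math.log(initial_brightness)

    def observe(self, brightness: float, t: float) -> Optional[Event]:
        """Compare the current brightness with the stored reference.

        Returns an Event and resets the reference if the change exceeds
        the threshold; otherwise the pixel stays silent (returns None).
        """
        current = math.log(brightness)
        diff = current - self.reference
        if abs(diff) > self.threshold:
            self.reference = current  # reset the reference level
            return Event(self.x, self.y, t, 1 if diff > 0 else -1)
        return None


if __name__ == "__main__":
    pixel = EventPixel(x=3, y=5, initial_brightness=100.0)
    samples = [(0.001, 105.0), (0.002, 130.0), (0.003, 128.0), (0.004, 90.0)]
    for t, brightness in samples:
        event = pixel.observe(brightness, t)
        if event is not None:
            print(event)
```

With these sample values, only the jumps to 130 and to 90 exceed the assumed threshold, so the pixel emits one positive and one negative event and stays silent for the small changes in between.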

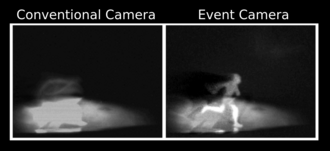

Event cameras typically report timestamps with microsecond resolution, offer a dynamic range of about 120 dB, and suffer less from under/overexposure and motion blur[4][6] than frame cameras. This allows them to track object and camera movement (optical flow) more accurately. They yield grey-scale information. Initially (2014), resolution was limited to 100 pixels. A later entry reached 640×480 resolution in 2019. Because individual pixels fire independently, event cameras appear suitable for integration with asynchronous computing architectures such as neuromorphic computing. Pixel independence allows these cameras to cope with scenes containing both brightly and dimly lit regions without having to average across them.[7] Although the camera reports events with microsecond resolution, the actual temporal resolution (equivalently, the sensing bandwidth) is on the order of tens of microseconds to a few milliseconds, depending on signal contrast, lighting conditions and sensor design.[8]

| Sensor | Dynamic range (dB) | Equivalent framerate (fps) | Spatial resolution (MP) | Power consumption (mW) |
|---|---|---|---|---|
| Human eye | 30–40 | 200–300* | - | 10[9] |
| High-end DSLR camera (Nikon D850) | 44.6[10] | 120 | 2–8 | - |
| Ultrahigh-speed camera (Phantom v2640)[11] | 64 | 12,500 | 0.3–4 | - |
| Event camera[12] | 120 | 50,000–300,000** | 0.1–1 | 30 |

* Indicates human perception temporal resolution, including cognitive processing time. **Refers to change recognition rates, and varies according to signal and sensor model.

Types

Temporal contrast sensors (such as DVS

Retinomorphic sensors

Another class of event sensors are so-called retinomorphic sensors. While the term retinomorphic has been used to describe event sensors generally,[15][16] in 2020 it was adopted as the name for a specific sensor design based on a resistor and photosensitive capacitor in series.[17] These capacitors are distinct from photocapacitors, which are used to store solar energy,[18] and are instead designed to change capacitance under illumination. They charge/discharge slightly when the capacitance is changed, but otherwise remain in equilibrium. When a photosensitive capacitor is placed in series with a resistor, and an input voltage is applied across the circuit, the result is a sensor that outputs a voltage when the light intensity changes, but otherwise does not.
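The behaviour of such a series circuit can be sketched numerically. The example below is an idealised model under stated assumptions, not a description of the published device: it assumes the output is the voltage across the resistor, that illumination raises the capacitance instantaneously from `c_dark` to `c_light`, and that all component values (chosen only for illustration) are constant otherwise. With charge q on the capacitor, Kirchhoff's voltage law gives dq/dt = (V_in − q/C)/R, which is integrated here with a simple forward-Euler step.

```python
def simulate_retinomorphic(v_in=1.0, resistance=1e6,
                           c_dark=1e-9, c_light=2e-9,
                           t_on=0.02, t_end=0.05, dt=1e-5):
    """Simulate a resistor in series with a light-dependent capacitor.

    Returns (times, v_out), where v_out is the voltage across the resistor.
    All parameter names and values are hypothetical.
    """
    steps = int(round(t_end / dt))
    charge = c_dark * v_in  # start in equilibrium in the dark
    times, v_out = [], []
    for i in range(steps):
        t = i * dt
        # Assumption: light switches on at t_on and changes C instantly.
        capacitance = c_light if t >= t_on else c_dark
        # Kirchhoff: V_in = V_R + q / C, so V_R = V_in - q / C.
        v_r = v_in - charge / capacitance
        times.append(t)
        v_out.append(v_r)
        # Current through the resistor charges the capacitor: dq/dt = V_R / R.
        charge += dt * v_r / resistance
    return times, v_out


if __name__ == "__main__":
    times, v_out = simulate_retinomorphic()
    peak = max(v_out)
    print(f"peak output: {peak:.3f} V at t = {times[v_out.index(peak)]:.4f} s")
    print(f"final output: {v_out[-1]:.2e} V")
```

In this model the output is zero while the illumination is constant, jumps when the capacitance changes, and then decays back towards zero with time constant R·C, which matches the qualitative description above.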

Unlike other event sensors, which typically combine a photodiode with additional circuit elements, these sensors produce the signal inherently. They can hence be considered a single device that produces the same result as a small circuit in other event cameras. Retinomorphic sensors have to date only been studied in a research environment.[19][20][21][22]

Algorithms

Image reconstruction

Image reconstruction from events has the potential to create images and video with high dynamic range, high temporal resolution and reduced motion blur. Image reconstruction can be achieved using temporal smoothing, e.g. a high-pass or complementary filter.[23] Alternative methods include optimization[24] and gradient estimation[25] followed by Poisson integration.
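As an illustration of the temporal-smoothing idea, the sketch below keeps a per-pixel log-intensity estimate that is incremented by each event and decays exponentially between events, which acts as a per-pixel high-pass filter. It is a simplified example and not the cited authors' implementation; the class name `HighPassReconstructor`, the contrast step and the cutoff frequency are assumptions.

```python
import math


class HighPassReconstructor:
    """Per-pixel high-pass filter over an event stream (illustrative sketch)."""

    def __init__(self, width, height, contrast=0.2, cutoff_hz=5.0):
        self.contrast = contrast                  # assumed log-intensity step per event
        self.decay = 2.0 * math.pi * cutoff_hz    # decay rate in rad/s
        self.log_image = [[0.0] * width for _ in range(height)]
        self.last_update = [[0.0] * width for _ in range(height)]

    def process(self, x, y, t, polarity):
        """Update one pixel with an event (polarity is +1 or -1)."""
        dt = t - self.last_update[y][x]
        # Let the old estimate decay, then add the new brightness step.
        self.log_image[y][x] *= math.exp(-self.decay * dt)
        self.log_image[y][x] += polarity * self.contrast
        self.last_update[y][x] = t

    def snapshot(self, t):
        """Return the estimated log-intensity image at time t."""
        return [
            [value * math.exp(-self.decay * (t - last))
             for value, last in zip(value_row, time_row)]
            for value_row, time_row in zip(self.log_image, self.last_update)
        ]


if __name__ == "__main__":
    recon = HighPassReconstructor(width=4, height=3)
    events = [(1, 2, 0.010, +1), (1, 2, 0.012, +1), (3, 0, 0.015, -1)]
    for x, y, t, polarity in events:
        recon.process(x, y, t, polarity)
    for row in recon.snapshot(t=0.020):
        print(["%+.3f" % v for v in row])
```

Because each pixel is updated only when it receives an event, the state can be refreshed asynchronously and an image read out at any time, at the cost of static scene content fading away; a complementary filter would additionally fuse conventional frames to retain it.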

Spatial convolutions

The concept of spatial event-driven convolution was postulated in 1999

Motion detection and tracking

Segmentation and detection of moving objects viewed by an event camera can seem to be a trivial task, as it is done by the sensor on-chip. However, these tasks are difficult, because events carry little information[31] and do not contain useful visual features like texture and color.[32] They become even more challenging with a moving camera,[31] because events are then triggered everywhere on the image plane, by both moving objects and the static scene (whose apparent motion is induced by the camera's ego-motion). Recent approaches to this problem include motion-compensation models[33][34] and traditional clustering algorithms.[35][36][32][37]

Potential applications

Potential applications include most tasks classically handled by conventional cameras, with an emphasis on machine vision tasks such as object recognition, autonomous vehicles, and robotics.[21] The US military is considering infrared and other event cameras because of their lower power consumption and reduced heat generation.[7]

Given these advantages over conventional image sensors, the event camera is considered well suited to applications that require low power consumption and low latency, or in which the camera's line of sight is difficult to stabilize. These applications include the aforementioned autonomous systems, as well as space imaging, security, defense and industrial monitoring. Although research into color sensing with event cameras is underway,[38] event cameras are not yet convenient for applications requiring color sensing.

References

- PMID 28611582.

- S2CID 228375825.

- S2CID 2283149. Retrieved 27 June 2021.

- S2CID 6119048. Archived from the original (PDF) on 2021-05-03. Retrieved 2019-12-06.

- S2CID 21317717.

- Longinotti, Luca. "Product Specifications". iniVation. Archived from the original on 2019-04-02. Retrieved 2019-04-21.

- ISSN 0013-0613. Retrieved 2022-02-02.

- Retrieved 2024-04-08.

- S2CID 9340738.

- DxO. "Nikon D850 : Tests and Reviews | DxOMark". www.dxomark.com. Retrieved 2019-04-22.

- "Phantom v2640". www.phantomhighspeed.com. Retrieved 2019-04-22.

- Longinotti, Luca. "Product Specifications". iniVation. Archived from the original on 2019-04-02. Retrieved 2019-04-22.

- S2CID 6686013.

- ISSN 0018-9200.

- S2CID 62609792.

- S2CID 11513955.

- S2CID 230546095.

- ISSN 0003-6951.

- "Perovskite sensor sees more like the human eye". Physics World. 2021-01-18. Retrieved 2021-10-28.

- "Simple Eyelike Sensors Could Make AI Systems More Efficient". Inside Science. 8 December 2020. Retrieved 2021-10-28.

- Hambling, David. "AI vision could be improved with sensors that mimic human eyes". New Scientist. Retrieved 2021-10-28.

- "An eye for an AI: Optic device mimics human retina". BBC Science Focus Magazine. Retrieved 2021-10-28.

- S2CID 53182986.

- S2CID 53749928.

- S2CID 59619729.

- ISSN 1057-7122.

- S2CID 6537174.

- S2CID 8287877.

- S2CID 23238741.

- S2CID 170040.

- S2CID 234740723.

- S2CID 238227007, via IEEE Xplore.

- S2CID 3845250.

- S2CID 91183976.

- S2CID 310741.

- ISSN 0197-6729.

- S2CID 237420620.

- "CED: Color Event Camera Dataset". rpg.ifi.uzh.ch. Retrieved 2024-04-08.