Publication bias

In published academic research, publication bias occurs when the outcome of an experiment or research study biases the decision to publish or otherwise distribute it. Publishing only results that show a significant finding disturbs the balance of findings in favor of positive results.[1] The study of publication bias is an important topic in metascience.

Despite similar quality of execution and design,[2] papers with statistically significant results are three times more likely to be published than those with null results.[3] This creates an undue incentive for researchers to manipulate their practices, for example through data dredging, to obtain statistically significant results.[4]

Many factors contribute to publication bias.

Attempts to find unpublished studies often prove difficult or are unsatisfactory.

Other proposed strategies to detect and control for publication bias

Definition

Publication bias occurs when the publication of research results depends not just on the quality of the research but also on the hypothesis tested and on the significance and direction of the effects detected.[10] The subject was first discussed in 1959 by the statistician Theodore Sterling, who described fields in which "successful" research is more likely to be published. As a result, "the literature of such a field consists in substantial part of false conclusions resulting from errors of the first kind in statistical tests of significance".[11] In the worst case, false conclusions could become canonized as established fact if the publication rate of negative results is too low.[12]
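Sterling's point can be illustrated with a small simulation. The setup is hypothetical and not taken from any cited study:

- Every study tests a true effect of zero.
- Each study has 20 participants per group, so the standardized estimate has standard error sqrt(2/20).
- Only results that are significant in the positive direction (z > 1.96) are published.

The function name `simulate_studies` and all parameter values are illustrative assumptions.

```python
import math
import random
import statistics


def simulate_studies(n_studies=10_000, true_effect=0.0, n_per_group=20,
                     z_crit=1.96, seed=1):
    """Simulate many two-group studies and 'publish' only significant positive ones.

    Illustrative assumptions (not from the source): each study estimates a
    standardized mean difference whose standard error is sqrt(2 / n_per_group),
    and a study leaves the file drawer only if estimate / se > z_crit.
    """
    rng = random.Random(seed)
    se = math.sqrt(2 / n_per_group)
    all_estimates, published = [], []
    for _ in range(n_studies):
        estimate = rng.gauss(true_effect, se)   # sampling error around the true effect
        all_estimates.append(estimate)
        if estimate / se > z_crit:              # the publication filter
            published.append(estimate)
    return all_estimates, published


if __name__ == "__main__":
    everything, published = simulate_studies()
    print(f"mean of all studies:       {statistics.mean(everything):.3f}")
    print(f"mean of published studies: {statistics.mean(published):.3f}")
    print(f"share published:           {len(published) / len(everything):.3f}")
```

In this toy model the true effect is zero, so every published result is a Type I error. Yet the published studies agree on a sizeable positive effect, while the full set of studies averages close to zero.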

Publication bias is sometimes called the file-drawer effect, or file-drawer problem. This term suggests that results not supporting the hypotheses of researchers often go no further than the researchers' file drawers, leading to a bias in published research.[13] The term "file drawer problem" was coined by psychologist Robert Rosenthal in 1979.[14]

Positive-results bias, a type of publication bias, occurs when authors are more likely to submit, or editors are more likely to accept, positive results than negative or inconclusive results.[15] Outcome reporting bias occurs when multiple outcomes are measured and analyzed, but the reporting of these outcomes depends on the strength and direction of their results. A generic term coined to describe such post-hoc choices is HARKing ("Hypothesizing After the Results are Known").[16]
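Outcome reporting bias can be quantified with a simple calculation. Suppose there are k independent outcomes, none with a true effect, each tested at level alpha. The chance that at least one comes out significant is 1 - (1 - alpha)^k.

The sketch below computes that probability and checks it by simulation. The function names are hypothetical. The independence assumption is ours; correlated outcomes inflate the rate less.

```python
import random


def prob_any_significant(k_outcomes, alpha=0.05):
    """Probability that at least one of k independent null outcomes has p < alpha."""
    return 1 - (1 - alpha) ** k_outcomes


def simulate_best_outcome(k_outcomes, alpha=0.05, n_studies=20_000, seed=2):
    """Monte Carlo check: report only the smallest of k null p-values.

    Assumes (for illustration) that the k outcomes are independent.
    """
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_studies):
        # Under a true null hypothesis a continuous test's p-value is Uniform(0, 1).
        p_values = [rng.random() for _ in range(k_outcomes)]
        if min(p_values) < alpha:  # the "best" outcome is the one that gets reported
            hits += 1
    return hits / n_studies


if __name__ == "__main__":
    for k in (1, 5, 10):
        print(k, round(prob_any_significant(k), 3), round(simulate_best_outcome(k), 3))
```

With alpha = 0.05 the formula gives about 0.05 for one outcome, 0.23 for five and 0.40 for ten. A study that reports only its "best" of ten null outcomes therefore has roughly a 40% chance of presenting a false positive.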

Evidence

There is extensive

The presence of publication bias was investigated in

Meta-analyses (reviews) have been performed in the field of ecology and environmental biology. In a study of 100 meta-analyses in ecology, only 49% tested for publication bias.[25] Although multiple tests have been developed to detect publication bias, most perform poorly in ecology because the data are highly heterogeneous and observations are often not fully independent.[26]

As of 1998, "No trial published in China or Russia/USSR found a test treatment to be ineffective."[27]

Impact on meta-analysis

Where publication bias is present, published studies are no longer a representative sample of the available evidence. This bias distorts the results of meta-analyses and systematic reviews. That matters because fields such as evidence-based medicine increasingly rely on meta-analysis to assess evidence.

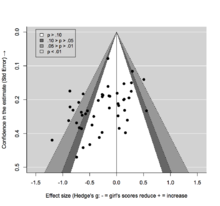

Meta-analyses and systematic reviews can account for publication bias by including evidence from unpublished studies and the grey literature. The presence of publication bias can also be explored by constructing a funnel plot in which the estimate of the reported effect size is plotted against a measure of precision or sample size. The premise is that the scatter of points should reflect a funnel shape, indicating that the reporting of effect sizes is not related to their statistical significance.[29] However, when small studies are predominantly in one direction (usually the direction of larger effect sizes), asymmetry will ensue and this may be indicative of publication bias.[30]
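The quantities behind a funnel plot are easy to compute. The sketch below is a minimal illustration built on two assumptions:

- The pooled estimate is a fixed-effect (inverse-variance) estimate.
- The funnel edges are pseudo 95% limits of pooled ± 1.96 × SE.

It returns the funnel edges and counts how many of the less precise half of studies fall on each side of the pooled estimate. That count is only a rough visual aid, not a formal test. The function names and the example data are hypothetical.

```python
def fixed_effect_estimate(effects, ses):
    """Inverse-variance weighted (fixed-effect) pooled estimate (assumed model)."""
    weights = [1 / se ** 2 for se in ses]
    return sum(w * y for w, y in zip(weights, effects)) / sum(weights)


def funnel_lines(pooled, max_se, steps=5, z=1.96):
    """Pseudo 95% limits pooled ± z*SE at evenly spaced SEs; they trace the funnel's edges."""
    lines = []
    for i in range(steps + 1):
        se = max_se * i / steps
        lines.append((se, pooled - z * se, pooled + z * se))
    return lines


def small_study_sides(effects, ses, pooled):
    """Count the less precise half of studies falling left/right of the pooled estimate."""
    by_precision = sorted(zip(ses, effects))          # most precise (smallest SE) first
    imprecise = by_precision[len(by_precision) // 2:]
    left = sum(1 for _, y in imprecise if y < pooled)
    right = sum(1 for _, y in imprecise if y > pooled)
    return left, right


if __name__ == "__main__":
    # Hypothetical data: small (high-SE) studies report larger effects.
    effects = [0.10, 0.12, 0.08, 0.25, 0.40, 0.55]
    ses = [0.05, 0.06, 0.07, 0.15, 0.20, 0.25]
    pooled = fixed_effect_estimate(effects, ses)
    for se, lo, hi in funnel_lines(pooled, max(ses)):
        print(f"SE={se:.2f}: funnel from {lo:.3f} to {hi:.3f}")
    print("small studies left/right of pooled:", small_study_sides(effects, ses, pooled))
```

In the made-up data the three least precise studies all lie to the right of the pooled estimate. That one-sided scatter of small studies is the pattern that can signal publication bias.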

Because an inevitable degree of subjectivity exists in the interpretation of funnel plots, several tests have been proposed for detecting funnel plot asymmetry.[29][31][32] These are often based on linear regression, including the popular Egger's regression test,[33] and may adopt a multiplicative or additive dispersion parameter to adjust for the presence of between-study heterogeneity. Some approaches may even attempt to compensate for the (potential) presence of publication bias,[23][34][35] which is particularly useful to explore the potential impact on meta-analysis results.[36][37][38]
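The sketch below implements Egger's regression test in its usual formulation. Each study's standardized effect (effect / SE) is regressed on its precision (1 / SE) by ordinary least squares, and the intercept is examined. The intercept should be near zero when the funnel is symmetric.

Estimating the residual variance from the data in this way acts, in effect, as a multiplicative dispersion parameter. The function name `eggers_test` and the example data are hypothetical. A p-value would come from a t distribution with n − 2 degrees of freedom (for example via `scipy.stats.t.sf`); that step is left out to keep the block dependency-free.

```python
import math


def eggers_test(effects, ses):
    """Egger-style regression: standardized effect (y/se) on precision (1/se) by OLS.

    Returns (intercept, slope, t_intercept, df). An intercept far from zero
    suggests funnel-plot asymmetry. Illustrative sketch, not a library API.
    """
    x = [1 / se for se in ses]                       # precision
    z = [y / se for y, se in zip(effects, ses)]      # standardized effect
    n = len(x)
    if n < 3:
        raise ValueError("need at least 3 studies")
    x_bar = sum(x) / n
    z_bar = sum(z) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("all studies have the same precision")
    sxz = sum((xi - x_bar) * (zi - z_bar) for xi, zi in zip(x, z))
    slope = sxz / sxx
    intercept = z_bar - slope * x_bar
    residuals = [zi - (intercept + slope * xi) for xi, zi in zip(x, z)]
    s2 = sum(r ** 2 for r in residuals) / (n - 2)    # residual variance
    se_intercept = math.sqrt(s2 * (1 / n + x_bar ** 2 / sxx))
    if se_intercept > 0:
        t_stat = intercept / se_intercept
    else:                                            # perfect fit: no residual scatter
        t_stat = math.copysign(math.inf, intercept)
    return intercept, slope, t_stat, n - 2


if __name__ == "__main__":
    # Hypothetical data built as 0.1 + 1.5 * SE plus small perturbations.
    ses = [0.05, 0.08, 0.10, 0.15, 0.20, 0.25, 0.30]
    noise = [0.01, -0.01, 0.02, -0.02, 0.01, -0.01, 0.0]
    effects = [0.1 + 1.5 * se + e for se, e in zip(ses, noise)]
    print(eggers_test(effects, ses))
```

Rewriting the model as effect = slope + intercept × SE shows that the slope estimates the effect of a hypothetical, infinitely precise study. This is the idea behind regression-based corrections such as PET (precision-effect test). In the example, the intercept comes out near 1.4 and the slope near 0.11, close to the values used to build the data.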

In ecology and environmental biology, a study found that publication bias impacted the effect size, statistical power, and magnitude. The prevalence of publication bias distorted confidence in meta-analytic results, with 66% of initially statistically significant meta-analytic means becoming non-significant after correcting for publication bias.[39] Ecological and evolutionary studies consistently had low statistical power (15%) with a 4-fold exaggeration of effects on average (Type M error rates = 4.4).
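Statistical power and Type M (magnitude) error can be computed directly for a single study. The sketch below makes three assumptions:

- The estimate is normally distributed around a positive true effect.
- The standard error is known.
- The test is two-sided at alpha = 0.05.

It returns the power and the expected exaggeration ratio among significant results, that is, the mean of |estimate| / true effect conditional on significance, using truncated-normal moments. The function name and inputs are illustrative. This calculation does not reproduce the cited ecology figures.

```python
from statistics import NormalDist


def power_and_type_m(true_effect, se, alpha=0.05):
    """Power and expected exaggeration ratio (Type M error) of a two-sided z-test.

    Illustrative assumptions: estimate ~ Normal(true_effect, se**2), true_effect > 0.
    """
    if true_effect <= 0 or se <= 0:
        raise ValueError("true_effect and se must be positive")
    std = NormalDist()
    c = std.inv_cdf(1 - alpha / 2) * se      # significance threshold on the estimate scale
    hi = (c - true_effect) / se              # standardized upper cut-off
    lo = (-c - true_effect) / se             # standardized lower cut-off
    power = (1 - std.cdf(hi)) + std.cdf(lo)
    # E[|estimate|; estimate significant], from truncated-normal moments:
    # E[X; X > c] = mu * (1 - Phi(hi)) + sigma * phi(hi)
    # E[-X; X < -c] = -mu * Phi(lo) + sigma * phi(lo)
    upper_part = true_effect * (1 - std.cdf(hi)) + se * std.pdf(hi)
    lower_part = -true_effect * std.cdf(lo) + se * std.pdf(lo)
    type_m = (upper_part + lower_part) / (power * true_effect)
    return power, type_m


if __name__ == "__main__":
    power, type_m = power_and_type_m(true_effect=0.1, se=0.1)
    print(f"power = {power:.3f}, exaggeration ratio = {type_m:.2f}")
```

A true effect equal to one standard error gives power of roughly 17%. The estimates that reach significance overstate the true effect about 2.5-fold on average.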

Time-lag bias tests can also detect publication bias. Time-lag bias occurs when larger or statistically significant effects are published more quickly than smaller or non-significant effects, and it can manifest as a decline in the magnitude of the overall effect over time. The key feature of time-lag bias tests is that, as more studies accumulate, the mean effect size is expected to converge on its true value.[26]
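A cumulative meta-analysis is a simple way to look for the decline that time-lag bias can produce. Studies are added in order of publication, and the pooled estimate is recomputed after each one.

The sketch assumes a fixed-effect (inverse-variance) pooled estimate. It also assumes a hypothetical input format of (year, effect, SE) tuples. A random-effects model would be the more usual choice when studies are heterogeneous.

```python
def cumulative_meta_analysis(studies):
    """Cumulative inverse-variance pooled estimate, adding studies in publication order.

    `studies` is a list of (year, effect, se) tuples (hypothetical format).
    Returns a list of (year, pooled_effect) after each study is added.
    """
    sum_w = 0.0
    sum_wy = 0.0
    trajectory = []
    for year, effect, se in sorted(studies):  # tuples sort by year first
        w = 1 / se ** 2                       # fixed-effect weight (assumed model)
        sum_w += w
        sum_wy += w * effect
        trajectory.append((year, sum_wy / sum_w))
    return trajectory


if __name__ == "__main__":
    # Hypothetical data: early, small studies report large effects.
    studies = [(2001, 0.60, 0.25), (2003, 0.45, 0.20), (2006, 0.30, 0.12),
               (2010, 0.15, 0.08), (2014, 0.12, 0.05)]
    for year, pooled in cumulative_meta_analysis(studies):
        print(year, round(pooled, 3))
```

In the made-up data the pooled estimate falls from 0.60 to about 0.17 as later, more precise studies arrive. A downward drift like this is consistent with time-lag bias but does not prove it, since early studies can also differ in design or population.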

Compensation examples

Two meta-analyses of the efficacy of reboxetine as an antidepressant demonstrated attempts to detect publication bias in clinical trials. Based on positive trial data, reboxetine was originally approved as a treatment for depression in many countries in Europe and the UK in 2001 (though in practice it is rarely used for this indication). A 2010 meta-analysis concluded that reboxetine was ineffective and that the preponderance of positive-outcome trials reflected publication bias, mostly due to trials published by the drug manufacturer Pfizer. A subsequent meta-analysis published in 2011, based on the original data, found flaws in the 2010 analyses and suggested that the data indicated reboxetine was effective in severe depression (see Reboxetine § Efficacy). Examples of publication bias are given by Ben Goldacre[40] and Peter Wilmshurst.[41]

In the social sciences, a study of published papers exploring the relationship between corporate social and financial performance found that "in economics, finance, and accounting journals, the average correlations were only about half the magnitude of the findings published in Social Issues Management, Business Ethics, or Business and Society journals".[42]

One example cited as an instance of publication bias is the refusal by The Journal of Personality and Social Psychology (the original publisher of Bem's article) to publish attempted replications of Bem's work claiming evidence for precognition.[43]

An analysis[44] comparing studies of gene-disease associations originating in China to those originating outside China found that those conducted within the country reported a stronger association and a more statistically significant result.[45]

Risks

John Ioannidis argues that "claimed research findings may often be simply accurate measures of the prevailing bias."[46] He lists the following factors as those that make a paper with a positive result more likely to enter the literature and suppress negative-result papers:

- The studies conducted in a field have small sample sizes.

- The effect sizes in a field tend to be smaller.

- There is both a greater number and lesser preselection of tested relationships.

- There is greater flexibility in designs, definitions, outcomes, and analytical modes.

- There are prejudices (financial interest, political, or otherwise).

- The scientific field is hot and there are more scientific teams pursuing publication.
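Ioannidis's argument rests on a formula for the positive predictive value (PPV) of a claimed finding. As we understand it, the formula uses four quantities:

- R is the pre-study odds that a tested relationship is true.
- 1 − β is the power.
- α is the significance level.
- u is the proportion of analyses that would not have been findings but are reported as such because of bias.

With these, PPV = ((1 − β)R + uβR) / (R + α − βR + u − uα + uβR).

Mapping the list onto these parameters is our own reading. Small samples and small effects lower power, many tested relationships with little preselection lower R, and flexibility and prejudice raise u. The function below simply evaluates the formula; its name is hypothetical.

```python
def ppv(R, power, alpha=0.05, u=0.0):
    """Post-study probability that a claimed (significant) finding is true.

    R: pre-study odds that a probed relationship is true.
    power: 1 - beta, the probability of detecting a true relationship.
    alpha: significance level.
    u: share of analyses that would not have been findings but are reported
       as such because of bias (u = 0 means no bias).
    Hypothetical helper evaluating the formula stated above.
    """
    beta = 1 - power
    numerator = (1 - beta) * R + u * beta * R
    denominator = R + alpha - beta * R + u - u * alpha + u * beta * R
    return numerator / denominator


if __name__ == "__main__":
    print(round(ppv(R=1.0, power=0.8), 3))          # well-powered, plausible hypothesis
    print(round(ppv(R=0.1, power=0.2, u=0.2), 3))   # underpowered, long-shot, biased
```

With R = 0.1, power 0.2 and u = 0.2, fewer than one in seven claimed findings would be true (PPV ≈ 0.13).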

Other factors include

Remedies

Publication bias can be contained through better-powered studies, enhanced research standards, and careful consideration of true and non-true relationships.[46] Better-powered studies refer to large studies that deliver definitive results or test major concepts and lead to low-bias meta-analysis. Enhanced research standards such as the pre-registration of protocols, the registration of data collections and adherence to established protocols are other techniques. To avoid false-positive results, the experimenter must consider the chances that they are testing a true or non-true relationship. This can be undertaken by properly assessing the false positive report probability based on the statistical power of the test[47] and reconfirming (whenever ethically acceptable) established findings of prior studies known to have minimal bias.
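The false positive report probability can be written in a common Bayesian form as FPRP = α(1 − π) / [α(1 − π) + (1 − β)π]. Here α is the significance level (or observed p-value) used to declare a positive result, 1 − β is the power, and π is the prior probability that the relationship is true.

We assume this standard form; the cited work may parametrize it differently. The function name and example values are illustrative.

```python
def fprp(alpha, power, prior):
    """False positive report probability in a standard Bayesian form (assumed here).

    alpha: significance level (or observed p-value) used to call a result positive.
    power: probability of a positive result if the relationship is true (1 - beta).
    prior: prior probability that the tested relationship is true (pi).
    """
    false_pos = alpha * (1 - prior)   # positives arising from non-true relationships
    true_pos = power * prior          # positives arising from true relationships
    return false_pos / (false_pos + true_pos)


if __name__ == "__main__":
    for prior in (0.5, 0.1, 0.01):
        print(prior, round(fprp(alpha=0.05, power=0.8, prior=prior), 3))
```

With α = 0.05 and power 0.8, a positive report on a relationship with a 1% prior chance of being true is still false about 86% of the time. With a 50% prior, the probability drops to about 6%.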

Study registration

In September 2004, editors of prominent medical journals (including the

The World Health Organization (WHO) agreed that basic information about all clinical trials should be registered at the study's inception, and that this information should be publicly accessible through the WHO International Clinical Trials Registry Platform. Additionally, it is becoming more common for complete study protocols to be made publicly available alongside reports of trials.[50]

Megastudies

In a megastudy, a large number of treatments are tested simultaneously. Because a megastudy includes many different interventions, its publication likelihood depends less on whether any specific treatment has a statistically significant effect, so it has been suggested that megastudies may be less prone to publication bias.[51] For example, an intervention found to be ineffective would be easier to publish as one of many interventions studied in a megastudy, whereas it might go unreported because of the file-drawer problem if it were the sole focus of a planned paper. For the same reason, the megastudy design may encourage researchers to study not only the interventions they consider likely to be effective but also those they are less certain about and would not choose as the sole focus of a study because of the perceived high risk of a null effect.

See also

- Academic bias

- Adversarial collaboration – research collaboration by scientists with opposing views

- Confirmation bias – Bias confirming existing attitudes

- Conflicts of interest in academic publishing

- Counternull – the effect size that is just as well supported by the data as the null hypothesis

- Funding bias – Tendency of a scientific study to support the interests of its funder

- FUTON bias – Tendency of scholars to cite journals with open access

- Proteus phenomenon – Phenomenon in scientific publishing

- Replication crisis – Observed inability to reproduce scientific studies

- Selection bias – Bias in a statistical analysis due to non-random selection

- White hat bias – Type of bias in public health research

- Woozle effect – False credibility due to quantity of citations

References

- PMID 20181324.

- S2CID 36570135.

- PMID 3442991.

- .

- H. Rothstein, A. J. Sutton and M. Borenstein (2005). Publication bias in meta-analysis: prevention, assessment and adjustments. Chichester, England; Hoboken, NJ: Wiley.

- ISSN 0013-0133.

- PMID 22342262.

- .

- S2CID 8505270.

- PMID 2406472.

- JSTOR 2282137.

- PMID 27995896.

- Bibcode:1999physics...9033S.

- S2CID 36070395.

- PMID 447779.

- PMID 15647155.

- PMID 25636259.

- PMID 8306005.

- PMID 15967761.

- PMID 19941636.

- PMID 15681569.

- PMID 24311990.

- S2CID 25560005.

- PMID 24363797.

- S2CID 254466150.

- S2CID 241159497.

- PMID 9551280.

- .

- PMID 28975717.

- OCLC 1036880624.

- S2CID 12341436.

- S2CID 24560718.

- PMID 9310563.

- .

- S2CID 123680599.

- PMID 27694467.

- .

- PMID 25168036.

- PMID 37013585.

- Goldacre, Ben (June 2012). What doctors don't know about the drugs they prescribe (Speech). TEDMED 2012. Retrieved 3 February 2020.

- S2CID 26915448. Archived from the original on 21 May 2013.

- S2CID 147466849. Archived from the original (PDF) on 25 January 2018.

- Goldacre, Ben (23 April 2011). "Backwards step on looking into the future". The Guardian. Retrieved 11 April 2017.

- PMID 16285839.

- PMID 16363911.

- PMID 16060722.

- PMID 15026468.

- Vedantam, Shankar (9 September 2004). "Journals Insist Drug Manufacturers Register All Trials". Washington Post. Retrieved 3 February 2020.

- "Instructions for Trials authors — Study protocol". 15 February 2009. Archived from the original on 2 August 2007.

- PMID 22179297.

- Tkachenko, Y.; Jedidi, K. (2023). "A megastudy on the predictability of personal information from facial images: Disentangling demographic and non-demographic signals". Sci Rep 13, 21073. https://doi.org/10.1038/s41598-023-42054-9

External links

- Lehrer, Jonah (13 December 2010). "The Truth Wears Off". The New Yorker. Retrieved 30 January 2020.

- Register of clinical trials conducted in the US and around the world, maintained by the National Library of Medicine, Bethesda

- Skeptic's Dictionary: positive outcome bias.

- Skeptic's Dictionary: file-drawer effect.

- Journal of Negative Results in Biomedicine

- The All Results Journals

- Journal of Articles in Support of the Null Hypothesis

- Psychfiledrawer.org: Archive for replication attempts in experimental psychology