History of artificial intelligence

The history of artificial intelligence (AI) began in

The field of AI research was founded at a workshop held on the campus of Dartmouth College, USA during the summer of 1956.[1] Those who attended would become the leaders of AI research for decades. Many of them predicted that a machine as intelligent as a human being would exist in no more than a generation, and they were given millions of dollars to make this vision come true.[2]

Eventually, it became obvious that researchers had grossly underestimated the difficulty of the project.

Investment and interest in

Precursors

Mythical, fictional, and speculative precursors

Myth and legend

In Greek mythology,

Pygmalion was a legendary king and sculptor of Greek mythology, famously represented in Ovid's Metamorphoses. In the 10th book of Ovid's narrative poem, Pygmalion becomes disgusted with women when he witnesses the way in which the Propoetides prostitute themselves.[7] Despite this, he makes offerings at the temple of Venus asking the goddess to bring to him a woman just like a statue he carved.

Medieval legends of artificial beings

In Of the Nature of Things, the Swiss alchemist Paracelsus describes a procedure that he claims can fabricate an "artificial man". If the "sperm of a man" is placed in horse dung and fed the "Arcanum of Mans blood" after 40 days, the concoction will become a living infant.[8]

The earliest written account of golem-making is found in the writings of Eleazar ben Judah of Worms in the early 13th century.[9][10] During the Middle Ages, it was believed that a Golem could be animated by inserting a piece of paper bearing any of God’s names into the mouth of the clay figure.[11] Unlike legendary automata like Brazen Heads,[12] a Golem was unable to speak.[13]

In Faust: The Second Part of the Tragedy by Johann Wolfgang von Goethe, an alchemically fabricated homunculus, destined to live forever in the flask in which he was made, endeavors to be born into a full human body. Upon the initiation of this transformation, however, the flask shatters and the homunculus dies.[15]

Modern fiction

By the 19th century, ideas about artificial men and thinking machines were developed in fiction, as in

Automata

Realistic humanoid

The oldest known automata were the sacred statues of ancient Egypt and Greece.[25] The faithful believed that craftsmen had imbued these figures with very real minds, capable of wisdom and emotion; Hermes Trismegistus wrote that "by discovering the true nature of the gods, man has been able to reproduce it".[26][27] English scholar

During the early modern period, these legendary automata were said to possess the magical ability to answer questions put to them. The late medieval alchemist and proto-protestant

Formal reasoning

Artificial intelligence is based on the assumption that the process of human thought can be mechanized. The study of mechanical—or "formal"—reasoning has a long history.

Spanish philosopher

In the 17th century,

In the 20th century, the study of

Their answer was surprising in two ways. First, they proved that there were, in fact, limits to what mathematical logic could accomplish. But second (and more important for AI) their work suggested that, within these limits, any form of mathematical reasoning could be mechanized. The

Computer science

Calculating machines were designed or built in antiquity and throughout history by many people, including

The first modern computers were the massive machines of the

Birth of artificial intelligence (1941–56)

The earliest research into thinking machines was inspired by a confluence of ideas that became prevalent in the late 1930s, 1940s, and early 1950s. Recent research in

In the 1940s and 50s, a handful of scientists from a variety of fields (mathematics, psychology, engineering, economics and political science) explored several research directions that would be vital to later AI research.[53] Alan Turing, who developed the theory of computation, was among the first people to seriously investigate the theoretical possibility of "machine intelligence".[54] The field of "artificial intelligence research" was founded as an academic discipline in 1956.[55]

Turing Test

Alan Turing was thinking about machine intelligence at least as early as 1941, when he circulated a paper on machine intelligence that may be the earliest paper in the field of AI, though it is now lost.[54] In 1950 Turing published a landmark paper "

Artificial neural networks

Cybernetic robots

Experimental robots such as

Game AI

In 1951, using the

Symbolic reasoning and the Logic Theorist

When access to

In 1955, Allen Newell and (future Nobel Laureate) Herbert A. Simon created the "Logic Theorist" (with help from J. C. Shaw). The program would eventually prove 38 of the first 52 theorems in Russell and Whitehead's Principia Mathematica, and find new and more elegant proofs for some.[67] Simon said that they had "solved the venerable

Dartmouth Workshop

The Dartmouth workshop of 1956 was a pivotal event that marked the formal inception of AI as an academic discipline.[70] It was organized by Marvin Minsky, John McCarthy and two senior scientists: Claude Shannon and Nathan Rochester of IBM. Its primary objective was to explore the possibility of creating machines capable of simulating human intelligence;[71] the proposal for the conference stated that the organizers intended to test the assertion that "every aspect of learning or any other feature of intelligence can be so precisely described that a machine can be made to simulate it".[72]

The term "Artificial Intelligence" itself was officially introduced by John McCarthy at the workshop.[73] McCarthy chose the term to avoid associations with cybernetics and the influence of Norbert Wiener.[74]

The participants included Ray Solomonoff, Oliver Selfridge, Trenchard More, Arthur Samuel, Allen Newell and Herbert A. Simon, all of whom would create important programs during the first decades of AI research.[75] At the workshop Newell and Simon debuted the "Logic Theorist".[76]

The 1956 Dartmouth workshop was the moment that AI gained its name, its mission, its first major success and its key players, and is widely considered the birth of AI.[77]

Cognitive revolution

In the fall of 1956, Newell and Simon also presented the Logic Theorist at a meeting of the Special Interest Group in Information Theory at the Massachusetts Institute of Technology. At the same meeting, Noam Chomsky discussed his generative grammar, and George Miller described his landmark paper "The Magical Number Seven, Plus or Minus Two". Miller wrote "I left the symposium with a conviction, more intuitive than rational, that experimental psychology, theoretical linguistics, and the computer simulation of cognitive processes were all pieces from a larger whole."[78]

This meeting was the beginning of the "

The cognitive approach allowed researchers to consider "mental objects" like thoughts, plans, goals, facts or memories, often analyzed using high level symbols in functional networks. These objects had been forbidden as "unobservable" by earlier paradigms such as behaviorism. Symbolic mental objects would become the major focus of AI research and funding for the next several decades.

Early successes (1956–1974)

The programs developed in the years after the Dartmouth Workshop were, to most people, simply "astonishing":[79] computers were solving algebra word problems, proving theorems in geometry and learning to speak English. Few at the time would have believed that such "intelligent" behavior by machines was possible at all.[80] Researchers expressed an intense optimism in private and in print, predicting that a fully intelligent machine would be built in less than 20 years.[81] Government agencies like DARPA poured money into the new field.[82] Artificial intelligence laboratories were set up at a number of British and US universities in the late 1950s and early 1960s.[54]

Approaches

There were many successful programs and new directions in the late 1950s and 1960s. Among the most influential were these:

Reasoning as search

Many early AI programs used the same basic

The principal difficulty was that, for many problems, the number of possible paths through the "maze" was simply astronomical (a situation known as a "

Neural networks

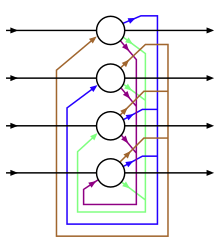

The McCulloch and Pitts paper (1943) inspired efforts to build computing hardware that realized the neural network approach to AI. The most influential was the effort led by Frank Rosenblatt to build Perceptron machines (1957-1962) of up to four layers. He was primarily funded by the Office of Naval Research.[88] Bernard Widrow and his student Ted Hoff built ADALINE (1960) and MADALINE (1962), which had up to 1000 adjustable weights.[89] A group at Stanford Research Institute led by Charles A. Rosen and Alfred E. (Ted) Brain built two neural network machines named MINOS I (1960) and II (1963), mainly funded by the U.S. Army Signal Corps. MINOS II[90] had 6600 adjustable weights,[91] and was controlled with an SDS 910 computer in a configuration named MINOS III (1968), which could classify symbols on army maps and recognize hand-printed characters on Fortran coding sheets.[92][93][94]

Most neural network research during this early period involved building and using bespoke hardware, rather than simulation on digital computers. The hardware diversity was particularly clear in the different technologies used to implement the adjustable weights. The perceptron machines and the SNARC used potentiometers moved by electric motors. ADALINE used memistors adjusted by electroplating, though its builders also used simulations on an IBM 1620. The MINOS machines used ferrite cores with multiple holes in them that could be individually blocked, with the degree of blockage representing the weights.[95]

Though there were multi-layered neural networks, most neural networks during this period had only one layer of adjustable weights. There were empirical attempts at training more than a single layer, but they were unsuccessful. Backpropagation did not become prevalent for neural network training until the 1980s.[95]
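To make "one layer of adjustable weights" concrete, the following is a minimal software sketch of the classic perceptron learning rule. It is an illustration only: the machines described above implemented their weights in hardware, and the function names, learning rate and the AND/XOR training sets here are assumptions chosen for the example, not details taken from the sources.

```python
def train_perceptron(samples, epochs=20, lr=1.0):
    """Train a single layer of adjustable weights with the perceptron rule.

    samples: list of (inputs, target) pairs with target 0 or 1.
    Names and defaults are hypothetical, chosen for illustration.
    """
    n_inputs = len(samples[0][0])
    weights = [0.0] * n_inputs
    bias = 0.0
    for _ in range(epochs):
        errors = 0
        for inputs, target in samples:
            activation = sum(w * x for w, x in zip(weights, inputs)) + bias
            output = 1 if activation > 0 else 0
            delta = target - output
            if delta != 0:
                # Nudge each weight toward the correct answer.
                errors += 1
                weights = [w + lr * delta * x for w, x in zip(weights, inputs)]
                bias += lr * delta
        if errors == 0:
            break  # every sample is classified correctly
    return weights, bias


def predict(weights, bias, inputs):
    """Threshold unit: fire (1) when the weighted sum exceeds zero."""
    return 1 if sum(w * x for w, x in zip(weights, inputs)) + bias > 0 else 0


AND_SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR_SAMPLES = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

weights, bias = train_perceptron(AND_SAMPLES)
xor_weights, xor_bias = train_perceptron(XOR_SAMPLES)
```

A single threshold unit of this kind can learn a linearly separable function such as AND, but no setting of its weights classifies every XOR case correctly, which is one reason training more than a single layer mattered.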

Natural language

An important goal of AI research is to allow computers to communicate in

A

Micro-worlds

In the late 60s,

This paradigm led to innovative work in

Automata

In Japan, Waseda University initiated the WABOT project in 1967, and in 1972 completed the WABOT-1, the world's first full-scale "intelligent" humanoid robot,[102][103] or android. Its limb control system allowed it to walk with the lower limbs, and to grip and transport objects with hands, using tactile sensors. Its vision system allowed it to measure distances and directions to objects using external receptors, artificial eyes and ears. And its conversation system allowed it to communicate with a person in Japanese, with an artificial mouth.[104][105][106]

Optimism

The first generation of AI researchers made these predictions about their work:

- 1958, H. A. Simon and Allen Newell: "within ten years a digital computer will be the world's chess champion" and "within ten years a digital computer will discover and prove an important new mathematical theorem."[107]

- 1965, H. A. Simon: "machines will be capable, within twenty years, of doing any work a man can do."[108]

- 1967, Marvin Minsky: "Within a generation ... the problem of creating 'artificial intelligence' will substantially be solved."[109]

- 1970, Marvin Minsky (in Life Magazine): "In from three to eight years we will have a machine with the general intelligence of an average human being."[110]

Financing

In June 1963,

The money was proffered with few strings attached:

First AI winter (1974–1980)

In the 1970s, AI was subject to critiques and financial setbacks. AI researchers had failed to appreciate the difficulty of the problems they faced. Their tremendous optimism had raised public expectations impossibly high, and when the promised results failed to materialize, funding targeted at AI nearly disappeared.

Problems

In the early seventies, the capabilities of AI programs were limited. Even the most impressive could only handle trivial versions of the problems they were supposed to solve; all the programs were, in some sense, "toys".[121] AI researchers had begun to run into several fundamental limits that could not be overcome in the 1970s. Although some of these limits would be conquered in later decades, others still stymie the field to this day.[122]

- Limited computer power: There was not enough memory or processing speed to accomplish anything truly useful. For example, Ross Quillian's successful work on natural language was demonstrated with a vocabulary of only twenty words, because that was all that would fit in memory.[123] Hans Moravec argued in 1976 that computers were still millions of times too weak to exhibit intelligence. He suggested an analogy: artificial intelligence requires computer power in the same way that aircraft require horsepower. Below a certain threshold, it's impossible, but, as power increases, eventually it could become easy.[124] With regard to computer vision, Moravec estimated that simply matching the edge and motion detection capabilities of the human retina in real time would require a general-purpose computer capable of 10^9 operations/second (1000 MIPS).[125] As of 2011, practical computer vision applications require 10,000 to 1,000,000 MIPS. By comparison, the fastest supercomputer in 1976, the Cray-1 (retailing at $5 million to $8 million), was only capable of around 80 to 130 MIPS, and a typical desktop computer at the time achieved less than 1 MIPS.

- exponential time (in the size of the inputs). Finding optimal solutions to these problems requires unimaginable amounts of computer time except when the problems are trivial. This almost certainly meant that many of the "toy" solutions used by AI would probably never scale up into useful systems.[126]

- Commonsense knowledge and reasoning. Many important artificial intelligence applications like vision or natural language require simply enormous amounts of information about the world: the program needs to have some idea of what it might be looking at or what it is talking about. This requires that the program know most of the same things about the world that a child does. Researchers soon discovered that this was a truly vast amount of information. No one in 1970 could build a database so large and no one knew how a program might learn so much information.[127]

- Moravec's paradox: Proving theorems and solving geometry problems is comparatively easy for computers, but a supposedly simple task like recognizing a face or crossing a room without bumping into anything is extremely difficult. This helps explain why research into vision and robotics had made so little progress by the mid-1970s.[128]

- The frame and qualification problems. AI researchers (like John McCarthy) who used logic discovered that they could not represent ordinary deductions that involved planning or default reasoning without making changes to the structure of logic itself. They developed new logics (like non-monotonic logics and modal logics) to try to solve the problems.[129]
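The point about exponential time in the list above can be illustrated with a small sketch. Subset sum is used here purely as an assumed example of a problem whose brute-force solution examines every subset of its input; it is not a problem named in the sources, and the function name is hypothetical.

```python
from itertools import combinations


def subset_sum_brute_force(numbers, target):
    """Search every subset of `numbers` for one that sums to `target`.

    Returns (subset, subsets_examined); subset is None when no subset works.
    In the worst case all 2**len(numbers) subsets are examined.
    """
    examined = 0
    for size in range(len(numbers) + 1):
        for subset in combinations(numbers, size):
            examined += 1
            if sum(subset) == target:
                return subset, examined
    return None, examined


# An unreachable target forces the worst case.
_, examined_4 = subset_sum_brute_force([3, 5, 8, 13], target=100)
_, examined_10 = subset_sum_brute_force(list(range(1, 11)), target=1000)
```

Each additional input doubles the worst-case work: 4 numbers require 16 subsets and 10 numbers require 1,024, so a "toy" instance gives little indication of how the same method behaves on realistic inputs.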

End of funding

The agencies which funded AI research (such as the

The end of funding occurred even earlier for neural network research, partly due to lack of results and partly due to competition from

Hans Moravec blamed the crisis on the unrealistic predictions of his colleagues. "Many researchers were caught up in a web of increasing exaggeration."[134] However, there was another issue: since the passage of the

Critiques from across campus

Several philosophers had strong objections to the claims being made by AI researchers. One of the earliest was

These critiques were not taken seriously by AI researchers, often because they seemed so far off the point. Problems like

Weizenbaum began to have serious ethical doubts about AI when Kenneth Colby wrote a "computer program which can conduct psychotherapeutic dialogue" based on ELIZA.[144] Weizenbaum was disturbed that Colby saw a mindless program as a serious therapeutic tool. A feud began, and the situation was not helped when Colby did not credit Weizenbaum for his contribution to the program. In 1976, Weizenbaum published Computer Power and Human Reason which argued that the misuse of artificial intelligence has the potential to devalue human life.[145]

Perceptrons and the attack on connectionism

A

Of the main efforts towards neural networks, Rosenblatt attempted to gather funds for building larger perceptron machines, but died in a boating accident in 1971. Minsky (of SNARC) became a staunch objector to pure connectionist AI. Widrow (of ADALINE) turned to adaptive signal processing, using techniques based on the

Logic at Stanford, CMU and Edinburgh

Logic was introduced into AI research as early as 1959, by

Critics of the logical approach noted, as Dreyfus had, that human beings rarely used logic when they solved problems. Experiments by psychologists like Peter Wason, Eleanor Rosch, Amos Tversky, Daniel Kahneman and others provided proof.[150] McCarthy responded that what people do is irrelevant. He argued that what is really needed are machines that can solve problems—not machines that think as people do.[151]

MIT's "anti-logic" approach

Among the critics of

In 1975, in a seminal paper,

The emergence of non-monotonic logics

The logicians rose to the challenge. Pat Hayes claimed that "most of 'frames' is just a new syntax for parts of first-order logic." But he noted that "there are one or two apparently minor details which give a lot of trouble, however, especially defaults".[155] In the meanwhile, Ray Reiter admitted that "conventional logics, such as first-order logic, lack the expressive power to adequately represent the knowledge required for reasoning by default".

The closed world assumption, as formulated by Reiter, "is not a first-order notion. (It is a meta notion.)"[156] However, Keith Clark showed that negation as finite failure can be understood as reasoning implicitly with definitions in first-order logic including a unique name assumption that different terms denote different individuals.[157]

During the late 1970s and throughout the 1980s, a variety of logics and extensions of first-order logic were developed both for negation as failure in logic programming and for default reasoning more generally. Collectively, these logics have become known as non-monotonic logics.
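A minimal sketch may help show why such reasoning is called non-monotonic. It assumes a toy knowledge base of ground facts and a single default rule ("a bird flies unless it is known to be abnormal"). The bird example, the fact encoding and the function name are illustrative assumptions, not a reconstruction of any particular logic discussed above.

```python
def can_fly(facts, individual):
    """Apply the default rule: a bird flies unless it is provably abnormal.

    "Provably" is read as negation as failure: a fact that is absent from
    the knowledge base is treated as false (closed world assumption).
    """
    is_bird = ("bird", individual) in facts
    known_abnormal = ("abnormal", individual) in facts
    return is_bird and not known_abnormal


facts = {("bird", "tweety")}
before = can_fly(facts, "tweety")   # conclusion drawn by default

facts.add(("abnormal", "tweety"))   # new knowledge arrives
after = can_fly(facts, "tweety")    # the earlier conclusion is withdrawn
```

In classical first-order logic, adding a premise can never invalidate a conclusion. Here the added fact retracts one, which is the behavior these logics were designed to capture.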

Boom (1980–1987)

In the 1980s, a form of AI program called "

Rise of expert systems

An

Expert systems restricted themselves to a small domain of specific knowledge (thus avoiding the

In 1980, an expert system called

Knowledge revolution

The power of expert systems came from the expert knowledge they contained. They were part of a new direction in AI research that had been gaining ground throughout the 70s. "AI researchers were beginning to suspect—reluctantly, for it violated the scientific canon of

The 1980s also saw the birth of Cyc, the first attempt to attack the commonsense knowledge problem directly, by creating a massive database that would contain all the mundane facts that the average person knows. Douglas Lenat, who started and led the project, argued that there is no shortcut: the only way for machines to know the meaning of human concepts is to teach them, one concept at a time, by hand. The project was not expected to be completed for many decades.[167]

Chess playing programs HiTech and Deep Thought defeated chess masters in 1989. Both were developed by Carnegie Mellon University; Deep Thought development paved the way for Deep Blue.[168]

Money returns: Fifth Generation project

In 1981, the

Other countries responded with new programs of their own. The UK began the £350 million Alvey project. A consortium of American companies formed the Microelectronics and Computer Technology Corporation (or "MCC") to fund large scale projects in AI and information technology.[171][172] DARPA responded as well, founding the Strategic Computing Initiative and tripling its investment in AI between 1984 and 1988.[173]

Revival of neural networks

In 1982, physicist

Around the same time,

Starting with the 1986 publication of the

The development of

A landmark publication in the field was the 1989 book Analog VLSI Implementation of Neural Systems by Carver A. Mead and Mohammed Ismail.[177]

Bust: second AI winter (1987–1993)

The business community's fascination with AI rose and fell in the 1980s in the classic pattern of an economic bubble. As dozens of companies failed, the perception was that the technology was not viable.[178] However, the field continued to make advances despite the criticism. Numerous researchers, including robotics developers Rodney Brooks and Hans Moravec, argued for an entirely new approach to artificial intelligence.

AI winter

The term "AI winter" was coined by researchers who had survived the funding cuts of 1974 when they became concerned that enthusiasm for expert systems had spiraled out of control and that disappointment would certainly follow.[179] Their fears were well founded: in the late 1980s and early 1990s, AI suffered a series of financial setbacks.

The first indication of a change in weather was the sudden collapse of the market for specialized AI hardware in 1987. Desktop computers from

Eventually the earliest successful expert systems, such as

In the late 1980s, the Strategic Computing Initiative cut funding to AI "deeply and brutally". New leadership at DARPA had decided that AI was not "the next wave" and directed funds towards projects that seemed more likely to produce immediate results.[182]

By 1991, the impressive list of goals penned in 1981 for Japan's

Over 300 AI companies had shut down, gone bankrupt, or been acquired by the end of 1993, effectively ending the first commercial wave of AI.[185] In 1994, HP Newquist stated in The Brain Makers that "The immediate future of artificial intelligence—in its commercial form—seems to rest in part on the continued success of neural networks."[185]

Nouvelle AI and embodied reason

In the late 1980s, several researchers advocated a completely new approach to artificial intelligence, based on robotics.[186] They believed that, to show real intelligence, a machine needs to have a body — it needs to perceive, move, survive and deal with the world. They argued that these sensorimotor skills are essential to higher level skills like commonsense reasoning and that abstract reasoning was actually the least interesting or important human skill (see Moravec's paradox). They advocated building intelligence "from the bottom up."[187]

The approach revived ideas from

In his 1990 paper "Elephants Don't Play Chess,"

AI (1993–2011)

The field of AI, now more than half a century old, finally achieved some of its oldest goals. It began to be used successfully throughout the technology industry, although somewhat behind the scenes. Some of the success was due to increasing computer power and some was achieved by focusing on specific isolated problems and pursuing them with the highest standards of scientific accountability. Still, the reputation of AI, in the business world at least, was less than pristine.[192] Inside the field there was little agreement on the reasons for AI's failure to fulfill the dream of human level intelligence that had captured the imagination of the world in the 1960s. Together, all these factors helped to fragment AI into competing subfields focused on particular problems or approaches, sometimes even under new names that disguised the tarnished pedigree of "artificial intelligence".[193] AI was both more cautious and more successful than it had ever been.

Milestones and Moore's law

On 11 May 1997,

In 2005, a Stanford robot won the

These successes were not due to some revolutionary new paradigm, but mostly to the tedious application of engineering skill and to the tremendous increase in the speed and capacity of computers by the 1990s.

Intelligent agents

A new paradigm called "

An

The paradigm gave researchers license to study isolated problems and find solutions that were both verifiable and useful. It provided a common language to describe problems and share their solutions with each other, and with other fields that also used concepts of abstract agents, like economics and

Probabilistic reasoning and greater rigor

AI researchers began to develop and use sophisticated mathematical tools more than they ever had in the past.[206] There was a widespread realization that many of the problems that AI needed to solve were already being worked on by researchers in fields like mathematics, electrical engineering, economics or operations research. The shared mathematical language allowed both a higher level of collaboration with more established and successful fields and the achievement of results which were measurable and provable; AI had become a more rigorous "scientific" discipline.

AI behind the scenes

Algorithms originally developed by AI researchers began to appear as parts of larger systems. AI had solved a lot of very difficult problems[209] and their solutions proved to be useful throughout the technology industry,[210] such as data mining,

The field of AI received little or no credit for these successes in the 1990s and early 2000s. Many of AI's greatest innovations have been reduced to the status of just another item in the tool chest of computer science.[215] Nick Bostrom explains "A lot of cutting edge AI has filtered into general applications, often without being called AI because once something becomes useful enough and common enough it's not labeled AI anymore."[216]

Many researchers in AI in the 1990s deliberately called their work by other names, such as

Deep learning, big data (2011–2020)

In the first decades of the 21st century, access to large amounts of data (known as "big data"), cheaper and faster computers and advanced machine learning techniques were successfully applied to many problems throughout the economy. The McKinsey Global Institute estimated in its paper "Big data: The next frontier for innovation, competition, and productivity" that "by 2009, nearly all sectors in the US economy had at least an average of 200 terabytes of stored data".

By 2016, the market for AI-related products, hardware, and software reached more than 8 billion dollars, and the New York Times reported that interest in AI had reached a "frenzy".

The first global

Deep learning

Deep learning is a branch of machine learning that models high level abstractions in data by using a deep graph with many processing layers.[224] According to the universal approximation theorem, depth is not necessary for a neural network to be able to approximate arbitrary continuous functions. Even so, there are many problems that are common to shallow networks (such as overfitting) that deep networks help avoid.[228] As such, deep neural networks are able to realistically generate much more complex models than their shallow counterparts.

However, deep learning has problems of its own. A common problem for recurrent neural networks is the vanishing gradient problem, in which gradients passed between layers gradually shrink and eventually vanish as they are rounded off to zero. Many methods have been developed to address this problem, such as long short-term memory units.
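The shrinking of gradients can be shown numerically with a deliberately simplified sketch. It assumes a chain of sigmoid units that all have the same pre-activation and weight, so each layer multiplies the backward signal by the same factor. Real networks vary from layer to layer, and the function names and defaults here are hypothetical.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def sigmoid_derivative(x):
    s = sigmoid(x)
    return s * (1.0 - s)  # never larger than 0.25


def gradient_through_layers(depth, pre_activation=0.0, weight=1.0):
    """Magnitude of a unit gradient after passing back through `depth` layers.

    Simplifying assumption: every layer has the same pre-activation and weight.
    """
    grad = 1.0
    for _ in range(depth):
        grad *= weight * sigmoid_derivative(pre_activation)
    return grad


shallow = gradient_through_layers(1)
deep = gradient_through_layers(10)
very_deep = gradient_through_layers(600)
```

Because the sigmoid's derivative is at most 0.25, the signal shrinks by at least a factor of four per layer under these assumptions. After enough layers it falls below the smallest value a 64-bit float can represent and becomes exactly zero.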

State-of-the-art deep neural network architectures can sometimes even rival human accuracy in fields like computer vision, specifically on benchmarks such as the MNIST database and traffic sign recognition.[229]

Language processing engines powered by smart search engines (such as IBM Watson) can easily beat humans at answering general trivia questions, and recent developments in deep learning have produced astounding results in competing with humans at games such as Go and Doom (which, being a first-person shooter game, has sparked some controversy).[230][231][232][233]

Big data

Big data refers to a collection of data that cannot be captured, managed, and processed by conventional software tools within a certain time frame. Such data requires new processing models before it can yield decision-making, insight, and process optimization capabilities. In Big Data, written by Viktor Mayer-Schönberger and Kenneth Cukier, big data means that instead of random sampling (sample surveys), all the data is used for analysis. The 5V characteristics of big data (proposed by IBM) are Volume, Velocity, Variety,[234] Value,[235] and Veracity.[236]

The strategic significance of big data technology lies not in possessing huge amounts of data, but in the specialized processing of the data that is meaningful. In other words, if big data is likened to an industry, the key to realizing profitability in this industry is to increase the "

Large language models, AI era (2020–present)

The AI boom started with the initial development of key architectures and algorithms such as the

Large language models

In 2017, the

Models such as

In 2023, Microsoft Research tested the GPT-4 large language model with a large variety of tasks, and concluded that "it could reasonably be viewed as an early (yet still incomplete) version of an artificial general intelligence (AGI) system".[243]

See also

- History of artificial neural networks

- History of knowledge representation and reasoning

- History of natural language processing

- Outline of artificial intelligence

- Progress in artificial intelligence

- Timeline of artificial intelligence

- Timeline of machine learning

Notes

- S2CID 158433736.

- ^ Newquist 1994, pp. 143–156.

- ^ Newquist 1994, pp. 144–152.

- ^ The Talos episode in Argonautica 4

- ^ Bibliotheke 1.9.26

- OCLC 811491744.

- OCLC 1102437035.

- OCLC 51210362.

- ^ Kressel M (1 October 2015). "36 Days of Judaic Myth: Day 24, The Golem of Prague". Matthew Kressel. Retrieved 15 March 2020.

- ^ Newquist 1994, p. [page needed].

- ^ "GOLEM". www.jewishencyclopedia.com. Retrieved 15 March 2020.

- ^ Newquist 1994, p. 38.

- ^ "Sanhedrin 65b". www.sefaria.org. Retrieved 15 March 2020.

- ^ O'Connor KM (1994). "The alchemical creation of life (takwin) and other concepts of Genesis in medieval Islam". Dissertations Available from ProQuest: 1–435.

- ^ Goethe JW (1890). Faust; a tragedy. Translated, in the original metres ... by Bayard Taylor. Authorised ed., published by special arrangement with Mrs. Bayard Taylor. With a biographical introd. London Ward, Lock.

- ^ McCorduck 2004, pp. 17–25.

- ^ Butler 1863.

- ^ Newquist 1994, p. 65.

- S2CID 150700981.

- ^ Needham 1986, p. 53.

- ^ McCorduck 2004, p. 6.

- ^ Nick 2005.

- ^ McCorduck 2004, p. 17.

- ^ Levitt 2000.

- ^ Newquist 1994, p. 30.

- ^ Quoted in McCorduck 2004, p. 8. Crevier 1993, p. 1 and McCorduck 2004, pp. 6–9 discusses sacred statues.

- Leonardo Torres y Quevedo (McCorduck 2004, pp. 59–62)

- ISBN 978-0-19-884666-6. Retrieved 2 May 2023.

- OCLC 5063114.

- ISBN 978-0-313-32801-5. Retrieved 15 May 2023.

- OCLC 638953.

- ^ a b c d Berlinski 2000.

- ^ Cfr. Carreras Artau, Tomás y Joaquín. Historia de la filosofía española. Filosofía cristiana de los siglos XIII al XV. Madrid, 1939, Volume I

- ^ Bonner, Anthony, The Art and Logic of Ramón Llull: A User's Guide, Brill, 2007.

- ^ Anthony Bonner (ed.), Doctor Illuminatus. A Ramon Llull Reader (Princeton University 1985). Vid. "Llull's Influence: The History of Lullism" at 57–71

- ^ 17th-century mechanism and AI:

- McCorduck 2004, pp. 37–46

- Russell & Norvig 2003, p. 6

- Buchanan 2005, p. 53

- ^ Hobbes and AI:

- McCorduck 2004, p. 42

- Hobbes 1651, chapter 5

- ^ Leibniz and AI:

- McCorduck 2004, p. 41

- Russell & Norvig 2003, p. 6

- Berlinski 2000, p. 12

- Buchanan 2005, p. 53

- Lisp (the most important programming language used in AI). (Crevier 1993, pp. 190 196, 61)

- ^ The original photo can be seen in the article: Rose A (April 1946). "Lightning Strikes Mathematics". Popular Science: 83–86. Retrieved 15 April 2012.

- ^ Newquist 1994, p. 56.

- ^ The Turing machine: McCorduck 2004, pp. 63–64, Crevier 1993, pp. 22–24, Russell & Norvig 2003, p. 8 and see Turing 1936–1937

- ^ Couturat 1901.

- ^ Russell & Norvig 2021, p. 15.

- ^ Russell & Norvig (2021, p. 15); Newquist (1994, p. 67)

- ^ Randall (1982, pp. 4–5); Byrne (2012); Mulvihill (2012)

- ^ Randall (1982, pp. 6, 11–13); Quevedo (1914); Quevedo (1915)

- ^ Randall 1982, pp. 13, 16–17.

- ^ Quoted in Russell & Norvig (2021, p. 15)

- ^ Menabrea & Lovelace 1843.

- ^ a b Russell & Norvig 2021, p. 14.

- ^ McCorduck 2004, pp. 76–80.

- ^ McCorduck 2004, pp. 51–57, 80–107, Crevier 1993, pp. 27–32, Russell & Norvig 2003, pp. 15, 940, Moravec 1988, p. 3, Cordeschi 2002, Chap. 5.

- ^ ISBN 0-19-825079-7.

- ^ McCorduck 2004, pp. 111–136, Crevier 1993, pp. 49–51, Russell & Norvig 2003, p. 17, Newquist 1994, pp. 91–112 and Kaplan A. "Artificial Intelligence, Business and Civilization - Our Fate Made in Machines". Retrieved 11 March 2022.

- ^ McCorduck 2004, pp. 70–72, Crevier 1993, pp. 22−25, Russell & Norvig 2003, pp. 2–3 and 948, Haugeland 1985, pp. 6–9, Cordeschi 2002, pp. 170–176. See also Turing 1950

- ^ Newquist 1994, pp. 92–98.

- ^ Russell & Norvig (2003, p. 948) claim that Turing answered all the major objections to AI that have been offered in the years since the paper appeared.

- ISSN 1522-9602.

- S2CID 10442035.

- ^ McCorduck 2004, pp. 51–57, 88–94, Crevier 1993, p. 30, Russell & Norvig 2003, pp. 15−16, Cordeschi 2002, Chap. 5 and see also McCulloch & Pitts 1943

- ^ McCorduck 2004, p. 102, Crevier 1993, pp. 34–35 and Russell & Norvig 2003, p. 17

- ^ McCorduck 2004, p. 98, Crevier 1993, pp. 27–28, Russell & Norvig 2003, pp. 15, 940, Moravec 1988, p. 3, Cordeschi 2002, Chap. 5.

- ^ See "A Brief History of Computing" at AlanTuring.net.

- ISBN 978-0-387-76575-4. Chapter 6.

- ^ McCorduck 2004, pp. 137–170, Crevier 1993, pp. 44–47

- ^ McCorduck 2004, pp. 123–125, Crevier 1993, pp. 44–46 and Russell & Norvig 2003, p. 17

- ^ Quoted in Crevier 1993, p. 46 and Russell & Norvig 2003, p. 17

- ^ Russell & Norvig 2003, pp. 947, 952

- ^ McCorduck 2004, pp. 111–136, Crevier 1993, pp. 49–51, Russell & Norvig 2003, p. 17 and Newquist 1994, pp. 91–112

- S2CID 238670666.

- ^ See McCarthy et al. 1955. Also, see Crevier 1993, p. 48 where Crevier states "[the proposal] later became known as the 'physical symbol systems hypothesis'". The physical symbol system hypothesis was articulated and named by Newell and Simon in their paper on GPS. (Newell & Simon 1963) It includes a more specific definition of a "machine" as an agent that manipulates symbols. See the philosophy of artificial intelligence.

- ^ "I won't swear and I hadn't seen it before," McCarthy told Pamela McCorduck in 1979. (McCorduck 2004, p. 114) However, McCarthy also stated unequivocally "I came up with the term" in a CNET interview. (Skillings 2006)

- ^ McCarthy J (1988). "Review of The Question of Artificial Intelligence". Annals of the History of Computing. 10 (3): 224–229., collected in McCarthy J (1996). "10. Review of The Question of Artificial Intelligence". Defending AI Research: A Collection of Essays and Reviews. CSLI., p. 73 "[O]ne of the reasons for inventing the term "artificial intelligence" was to escape association with "cybernetics". Its concentration on analog feedback seemed misguided, and I wished to avoid having either to accept Norbert (not Robert) Wiener as a guru or having to argue with him."

- ^ McCorduck (2004, pp. 129–130) discusses how the Dartmouth conference alumni dominated the first two decades of AI research, calling them the "invisible college".

- ^ McCorduck 2004, p. 125.

- ^ Crevier (1993, p. 49) writes "the conference is generally recognized as the official birthdate of the new science."

- PMID 12639696.

- ^ Russell and Norvig write "it was astonishing whenever a computer did anything remotely clever." Russell & Norvig 2003, p. 18

- ^ Crevier 1993, pp. 52–107, Moravec 1988, p. 9 and Russell & Norvig 2003, pp. 18−21

- ^ McCorduck 2004, p. 218, Newquist 1994, pp. 91–112, Crevier 1993, pp. 108–109 and Russell & Norvig 2003, p. 21

- ^ Crevier 1993, pp. 52–107, Moravec 1988, p. 9

- ^ Means-ends analysis, reasoning as search: McCorduck 2004, pp. 247–248. Russell & Norvig 2003, pp. 59–61

- ^ Heuristic: McCorduck 2004, p. 246, Russell & Norvig 2003, pp. 21–22

- ^ GPS: McCorduck 2004, pp. 245–250, Crevier 1993, p. GPS?, Russell & Norvig 2003, p. GPS?

- ^ Crevier 1993, pp. 51–58, 65–66 and Russell & Norvig 2003, pp. 18–19

- ^ McCorduck 2004, pp. 268–271, Crevier 1993, pp. 95–96, Newquist 1994, pp. 148–156, Moravec 1988, pp. 14–15

- ^ Rosenblatt, Frank. Principles of neurodynamics: Perceptrons and the theory of brain mechanisms. Vol. 55. Washington, DC: Spartan books, 1962.

- S2CID 195704643.

- ^ Rosen, Charles A., Nils J. Nilsson, and Milton B. Adams. "A research and development program in applications of intelligent automata to reconnaissance-phase I." Proposal for Research SRI No. ESU 65-1, 8 January 1965.

- ^ Nilsson, Nils J. The SRI Artificial Intelligence Center: A Brief History. Artificial Intelligence Center, SRI International, 1984.

- ISSN 2371-9621.

- ISBN 978-0-521-11639-8.

- ^ ISBN 978-0-9745208-0-3.

- ^ a b c d Olazaran Rodriguez, Jose Miguel. A historical sociology of neural network research. PhD Dissertation. University of Edinburgh, 1991. See especially Chapter 2 and 3.

- ^ McCorduck 2004, p. 286, Crevier 1993, pp. 76–79, Russell & Norvig 2003, p. 19

- ^ Crevier 1993, pp. 79–83

- ^ Crevier 1993, pp. 164–172

- ^ McCorduck 2004, pp. 291–296, Crevier 1993, pp. 134–139

- ^ McCorduck 2004, pp. 299–305, Crevier 1993, pp. 83–102, Russell & Norvig 2003, p. 19 and Copeland 2000

- ^ McCorduck 2004, pp. 300–305, Crevier 1993, pp. 84–102, Russell & Norvig 2003, p. 19

- ^ "Humanoid History -WABOT-".

- ISBN 978-3-319-22368-1 – via Google Books.

- ^ "Historical Android Projects". androidworld.com.

- ^ Robots: From Science Fiction to Technological Revolution, page 130

- ISBN 978-1-4200-6352-3 – via Google Books.

- ^ Simon & Newell 1958, pp. 7−8 quoted in Crevier 1993, p. 108. See also Russell & Norvig 2003, p. 21

- ^ Simon 1965, p. 96 quoted in Crevier 1993, p. 109

- ^ Minsky 1967, p. 2 quoted in Crevier 1993, p. 109

- ^ Minsky strongly believes he was misquoted. See McCorduck 2004, pp. 272–274, Crevier 1993, p. 96 and Darrach 1970.

- ^ Crevier 1993, pp. 64–65

- ^ Crevier 1993, p. 94

- ^ Howe 1994

- ^ McCorduck 2004, p. 131, Crevier 1993, p. 51. McCorduck also notes that funding was mostly under the direction of alumni of the Dartmouth workshop of 1956.

- ^ Crevier 1993, p. 65

- ^ Crevier 1993, pp. 68–71 and Turkle 1984

- ^ Crevier 1993, pp. 100–144 and Russell & Norvig 2003, pp. 21–22

- ^ McCorduck 2004, pp. 104–107, Crevier 1993, pp. 102–105, Russell & Norvig 2003, p. 22

- ^ Crevier 1993, pp. 163–196

- ISSN 0001-0782.

- ^ Crevier 1993, p. 146

- ^ Russell & Norvig 2003, pp. 20–21, Newquist 1994, p. 336

- ^ Crevier 1993, pp. 146–148, see also Buchanan 2005, p. 56: "Early programs were necessarily limited in scope by the size and speed of memory"

- SAIL. He states "I would say that 50 years ago, the machine capability was much too small, but by 30 years ago, machine capability wasn't the real problem." in a CNET interview. (Skillings 2006)

- ^ Hans Moravec, ROBOT: Mere Machine to Transcendent Mind

- ^ Russell & Norvig 2003, pp. 9, 21–22 and Lighthill 1973

- ^ McCorduck 2004, pp. 300 & 421; Crevier 1993, pp. 113–114; Moravec 1988, p. 13; Lenat & Guha 1989, (Introduction); Russell & Norvig 2003, p. 21

- ^ McCorduck 2004, p. 456, Moravec 1988, pp. 15–16

- ^ McCarthy & Hayes 1969, Crevier 1993, pp. 117–119

- ^ McCorduck 2004, pp. 280–281, Crevier 1993, p. 110, Russell & Norvig 2003, p. 21 and NRC 1999 under "Success in Speech Recognition".

- ^ Crevier 1993, p. 117, Russell & Norvig 2003, p. 22, Howe 1994 and see also Lighthill 1973.

- ^ Russell & Norvig 2003, p. 22, Lighthill 1973, John McCarthy wrote in response that "the combinatorial explosion problem has been recognized in AI from the beginning" in Review of Lighthill report

- ^ Crevier 1993, pp. 115–116 (on whom this account is based). Other views include McCorduck 2004, pp. 306–313 and NRC 1999 under "Success in Speech Recognition".

- ^ Crevier 1993, p. 115. Moravec explains, "Their initial promises to DARPA had been much too optimistic. Of course, what they delivered stopped considerably short of that. But they felt they couldn't in their next proposal promise less than in the first one, so they promised more."

- ^ NRC 1999 under "Shift to Applied Research Increases Investment." While the autonomous tank was a failure, the battle management system (called "DART") proved to be enormously successful, saving billions in the first Gulf War, repaying the investment and justifying DARPA's pragmatic policy, at least as far as DARPA was concerned.

- ^ Lucas and Penrose's critique of AI: Crevier 1993, p. 22, Russell & Norvig 2003, pp. 949–950, Hofstadter 1999, pp. 471–477 and see Lucas 1961

- present-at-hand.) (Dreyfus & Dreyfus 1986)

- Dreyfus' critique of artificial intelligence: McCorduck 2004, pp. 211–239, Crevier 1993, pp. 120–132, Russell & Norvig 2003, pp. 950–952 and see Dreyfus 1965, Dreyfus 1972, Dreyfus & Dreyfus 1986

- ^ Searle's critique of AI: McCorduck 2004, pp. 443–445, Crevier 1993, pp. 269–271, Russell & Norvig 2003, pp. 958–960 and see Searle 1980

- ^ Quoted in Crevier 1993, p. 143

- ^ Quoted in Crevier 1993, p. 122

- ^ Newquist 1994, p. 276

- ^ "I became the only member of the AI community to be seen eating lunch with Dreyfus. And I deliberately made it plain that theirs was not the way to treat a human being." Joseph Weizenbaum, quoted in Crevier 1993, p. 123.

- chatterbot-like "computer simulations of paranoid processes (PARRY)" to "make intelligible paranoid processes in explicit symbol processing terms." (Colby 1974, p. 6)

- ^ Weizenbaum's critique of AI: McCorduck 2004, pp. 356–373, Crevier 1993, pp. 132–144, Russell & Norvig 2003, p. 961 and see Weizenbaum 1976

- ^ McCorduck 2004, p. 51, Russell & Norvig 2003, pp. 19, 23

- ^ McCorduck 2004, p. 51, Crevier 1993, pp. 190–192

- ^ Crevier 1993, pp. 193–196

- ^ Crevier 1993, pp. 145–149, 258–63

- ^ Wason & Shapiro (1966) showed that people do poorly on completely abstract problems, but if the problem is restated to allow the use of intuitive social intelligence, performance dramatically improves. (See Wason selection task) Kahneman, Slovic & Tversky (1982) have shown that people are terrible at elementary problems that involve uncertain reasoning. (See list of cognitive biases for several examples). Eleanor Rosch's work is described in Lakoff 1987

- ^ An early example of McCarthy's position was in the journal Science where he said "This is AI, so we don't care if it's psychologically real" (Kolata 1982), and he recently reiterated his position at the AI@50 conference where he said "Artificial intelligence is not, by definition, simulation of human intelligence" (Maker 2006).

- ^ Crevier 1993, p. 175

- ^ Neat vs. scruffy: McCorduck 2004, pp. 421–424 (who picks up the state of the debate in 1984). Crevier 1993, p. 168 (who documents Schank's original use of the term). Another aspect of the conflict was called "the procedural/declarative distinction" but did not prove to be influential in later AI research.

- ^ McCorduck 2004, pp. 305–306, Crevier 1993, pp. 170–173, 246 and Russell & Norvig 2003, p. 24. Minsky's frame paper: Minsky 1974.

- ^ Hayes P (1981). "The logic of frames". In Kaufmann M (ed.). Readings in artificial intelligence. pp. 451–458.

- ^ a b Reiter R (1978). "On reasoning by default". American Journal of Computational Linguistics: 29–37.

- ISBN 978-1-4684-3386-9.

- ^ Newquist 1994, pp. 189–192

- ^ McCorduck 2004, pp. 327–335 (Dendral), Crevier 1993, pp. 148–159, Newquist 1994, p. 271, Russell & Norvig 2003, pp. 22–23

- ^ Crevier 1993, pp. 158–159 and Russell & Norvig 2003, pp. 23−24

- ^ Crevier 1993, p. 198

- ^ Newquist 1994, p. 259

- ^ McCorduck 2004, pp. 434–435, Crevier 1993, pp. 161–162, 197–203, Newquist 1994, p. 275 and Russell & Norvig 2003, p. 24

- ^ McCorduck 2004, p. 299

- ^ McCorduck 2004, p. 421

- ^ Knowledge revolution: McCorduck 2004, pp. 266–276, 298–300, 314, 421, Newquist 1994, pp. 255–267, Russell & Norvig 2003, pp. 22–23

- ^ Cyc: McCorduck 2004, p. 489, Crevier 1993, pp. 239–243, Newquist 1994, pp. 431–455, Russell & Norvig 2003, pp. 363−365 and Lenat & Guha 1989

- ^ "Chess: Checkmate" (PDF). Archived from the original (PDF) on 8 October 2007. Retrieved 1 September 2007.

- ^ McCorduck 2004, pp. 436–441, Newquist 1994, pp. 231–240, Crevier 1993, p. 211, Russell & Norvig 2003, p. 24 and see also Feigenbaum & McCorduck 1983

- ^ Crevier 1993, p. 195

- ^ Crevier 1993, p. 240.

- ^ a b c Russell & Norvig 2003, p. 25

- ^ McCorduck 2004, pp. 426–432, NRC 1999 under "Shift to Applied Research Increases Investment"

- ISBN 978-0-262-03803-4.

- ^ Crevier 1993, pp. 214–215.

- ^ Crevier 1993, pp. 215–216.

- ISBN 978-1-4613-1639-8.

- ^ Newquist 1994, p. 501

- ^ Crevier 1993, p. 203. AI winter was first used as the title of a seminar on the subject for the Association for the Advancement of Artificial Intelligence.

- ^ Newquist 1994, pp. 359–379, McCorduck 2004, p. 435, Crevier 1993, pp. 209–210

- ^ McCorduck 2004, p. 435 (who cites institutional reasons for their ultimate failure), Newquist 1994, pp. 258–283 (who cites limited deployment within corporations), Crevier 1993, pp. 204–208 (who cites the difficulty of truth maintenance, i.e., learning and updating), Lenat & Guha 1989, Introduction (who emphasizes the brittleness and the inability to handle excessive qualification.)

- ^ McCorduck 2004, pp. 430–431

- ^ a b McCorduck 2004, p. 441, Crevier 1993, p. 212. McCorduck writes "Two and a half decades later, we can see that the Japanese didn't quite meet all of those ambitious goals."

- ^ Newquist 1994, p. 476

- ^ a b Newquist 1994, p. 440

- ^ McCorduck 2004, pp. 454–462

- ^ Moravec (1988, p. 20) writes: "I am confident that this bottom-up route to artificial intelligence will one day meet the traditional top-down route more than half way, ready to provide the real world competence and the commonsense knowledge that has been so frustratingly elusive in reasoning programs. Fully intelligent machines will result when the metaphorical golden spike is driven uniting the two efforts."

- ^ Crevier 1993, pp. 183–190.

- ^ Brooks 1990, p. 3

- ^ See, for example, Lakoff & Johnson 1999

- ^ Newquist 1994, p. 511

- ^ McCorduck (2004, p. 424) discusses the fragmentation and the abandonment of AI's original goals.

- ^ McCorduck 2004, pp. 480–483

- ^ "Deep Blue". IBM Research. Retrieved 10 September 2010.

- ^ "DARPA Grand Challenge – home page". Archived from the original on 31 October 2007.

- ^ "Welcome". Archived from the original on 5 March 2014. Retrieved 25 October 2011.

- ^ Markoff J (16 February 2011). "On 'Jeopardy!' Watson Win Is All but Trivial". The New York Times.

- ^ Kurzweil 2005, p. 274 writes that the improvement in computer chess, "according to common wisdom, is governed only by the brute force expansion of computer hardware."

- gigaflops (and this does not even take into account Deep Blue's special-purpose hardware for chess). Very approximately, these differ by a factor of 10^7.

- ^ McCorduck 2004, pp. 471–478, Russell & Norvig 2003, p. 55, where they write: "The whole-agent view is now widely accepted in the field". The intelligent agent paradigm is discussed in major AI textbooks, such as: Russell & Norvig 2003, pp. 32–58, 968–972, Poole, Mackworth & Goebel 1998, pp. 7–21, Luger & Stubblefield 2004, pp. 235–240

- The Society of Mind (Minsky 1986) used the word "agent". Other "modular" proposals included Rodney Brooks' subsumption architecture, object-oriented programming and others.

- ^ a b Russell & Norvig 2003, pp. 27, 55

- ^ This is how the most widely accepted textbooks of the 21st century define artificial intelligence. See Russell & Norvig 2003, p. 32 and Poole, Mackworth & Goebel 1998, p. 1

- ^ McCorduck 2004, p. 478

- ^ McCorduck 2004, pp. 486–487, Russell & Norvig 2003, pp. 25–26

- ^ Pearl 1988

- ^ Russell & Norvig 2003, pp. 25−26

- ^ See Applications of artificial intelligence § Computer science

- ^ NRC 1999 under "Artificial Intelligence in the 90s", and Kurzweil 2005, p. 264

- ^ Russell & Norvig 2003, p. 28

- ^ For the new state of the art in AI based speech recognition, see The Economist (2007)

- ^ a b "AI-inspired systems were already integral to many everyday technologies such as internet search engines, bank software for processing transactions and in medical diagnosis." Nick Bostrom, quoted in CNN 2006

- ^ Olsen (2004), Olsen (2006)

- ^ McCorduck 2004, p. 423, Kurzweil 2005, p. 265, Hofstadter 1999, p. 601 and Newquist 1994, p. 445

- ^ CNN 2006

- ^ Markoff 2005

- ^ The Economist 2007

- ^ Tascarella 2006

- ^ Newquist 1994, p. 532

- ^ Steve Lohr (17 October 2016), "IBM Is Counting on Its Bet on Watson, and Paying Big Money for It", New York Times

- ISSN 1540-9309.

- ^ "How Big Data is Changing Economies | Becker Friedman Institute". bfi.uchicago.edu. Archived from the original on 18 June 2018. Retrieved 9 June 2017.

- ^ S2CID 3074096.

- ^ Milmo D (3 November 2023). "Hope or Horror? The great AI debate dividing its pioneers". The Guardian Weekly. pp. 10–12.

- ^ "The Bletchley Declaration by Countries Attending the AI Safety Summit, 1-2 November 2023". GOV.UK. 1 November 2023. Archived from the original on 1 November 2023. Retrieved 2 November 2023.

- ^ "Countries agree to safe and responsible development of frontier AI in landmark Bletchley Declaration". GOV.UK (Press release). Archived from the original on 1 November 2023. Retrieved 1 November 2023.

- ^ Baral C, Fuentes O, Kreinovich V (June 2015). "Why Deep Neural Networks: A Possible Theoretical Explanation". Departmental Technical Reports (Cs). Retrieved 9 June 2017.

- S2CID 2161592.

- ISSN 0362-4331. Retrieved 10 June 2017.

- ^ "AlphaGo: Mastering the ancient game of Go with Machine Learning". Research Blog. Retrieved 10 June 2017.

- ^ "Innovations of AlphaGo | DeepMind". DeepMind. 10 April 2017. Retrieved 10 June 2017.

- ^ University CM. "Computer Out-Plays Humans in "Doom"-CMU News - Carnegie Mellon University". www.cmu.edu. Retrieved 10 June 2017.

- ^ Laney D (2001). "3D data management: Controlling data volume, velocity and variety". META Group Research Note. 6 (70).

- ^ Marr, Bernard (6 March 2014). "Big Data: The 5 Vs Everyone Must Know".

- ^ Goes PB (2014). "Design science research in top information systems journals". MIS Quarterly: Management Information Systems. 38 (1).

- ^ Marr B. "Beyond The Hype: What You Really Need To Know About AI In 2023". Forbes. Retrieved 27 January 2024.

- ^ "The Era of AI: 2023's Landmark Year". CMSWire.com. Retrieved 28 January 2024.

- ^ "How the era of artificial intelligence will transform society?". PocketConfidant AI. 15 June 2018. Retrieved 28 January 2024.

- ^ "This year signaled the start of a new era". www.linkedin.com. Retrieved 28 January 2024.

- ^ Lee A (23 January 2024). "UT Designates 2024 'The Year of AI'". UT News. Retrieved 28 January 2024.

- ^ Murgia M (23 July 2023). "Transformers: the Google scientists who pioneered an AI revolution". www.ft.com. Retrieved 10 December 2023.

- arXiv:2303.12712 [cs.CL].

References

- OCLC 46890682.

- Buchanan BG (Winter 2005), "A (Very) Brief History of Artificial Intelligence" (PDF), AI Magazine, pp. 53–60, archived from the original (PDF) on 26 September 2007, retrieved 30 August 2007.

- Butler S (13 June 1863), "Darwin Among the Machines", The Press, Christchurch, New Zealand, retrieved 10 October 2008.

- Byrne JG (8 December 2012). "The John Gabriel Byrne Computer Science Collection" (PDF). Archived from the original on 16 April 2019. Retrieved 8 August 2019.

- "AI set to exceed human brain power", CNN.com, 26 July 2006, retrieved 16 October 2007.

- Colby KM, Watt JB, Gilbert JP (1966), "A Computer Method of Psychotherapy: Preliminary Communication", The Journal of Nervous and Mental Disease, vol. 142, no. 2, pp. 148–152, S2CID 36947398.

- Colby KM (September 1974), Ten Criticisms of Parry (PDF), Stanford Artificial Intelligence Laboratory, REPORT NO. STAN-CS-74-457, retrieved 17 June 2018.

- Couturat L (1901), La Logique de Leibniz

- Copeland J (2000), Micro-World AI, retrieved 8 October 2008.

- Cordeschi R (2002), The Discovery of the Artificial, Dordrecht: Kluwer.

- ISBN 0-465-02997-3.

- Darrach B (20 November 1970), "Meet Shaky, the First Electronic Person", Life Magazine, pp. 58–68.

- Doyle J (1983), "What is rational psychology? Toward a modern mental philosophy", AI Magazine, vol. 4, no. 3, pp. 50–53.

- Dreyfus H (1965), Alchemy and AI, RAND Corporation Memo.

- Dreyfus H (1972), OCLC 5056816.

- ISBN 978-0-02-908060-3. Retrieved 22 August 2020.

- The Economist (7 June 2007), "Are You Talking to Me?", The Economist, retrieved 16 October 2008.

- ISBN 978-0-7181-2401-4.

- ISBN 978-0-262-08153-5.

- OCLC 61273290.

- OCLC 48871099.

- Hewitt C, Bishop P, Steiger R (1973), A Universal Modular Actor Formalism for Artificial Intelligence (PDF), IJCAI, archived from the original (PDF) on 29 December 2009

- Hobbes T (1651), Leviathan.

- OCLC 225590743.

- Howe J (November 1994), Artificial Intelligence at Edinburgh University: a Perspective, retrieved 30 August 2007.

- S2CID 143452957.

- Kaplan A, Haenlein M (2018), "Siri, Siri in my Hand, who's the Fairest in the Land? On the Interpretations, Illustrations and Implications of Artificial Intelligence", Business Horizons, 62: 15–25, S2CID 158433736.

- Kolata G (1982), "How can computers get common sense?", Science, 217 (4566): 1237–1238, PMID 17837639.

- OCLC 71826177.

- ISBN 978-0-226-46804-4.

- Lakoff G, Johnson M (1999). Philosophy in the flesh: The embodied mind and its challenge to western thought. Basic Books. ISBN 978-0-465-05674-3.

- OCLC 19981533.

- Levitt GM (2000), The Turk, Chess Automaton, Jefferson, N.C.: McFarland, ISBN 978-0-7864-0778-1.

- Science Research Council

- S2CID 55408480

- Luger G, ISBN 978-0-8053-4780-7. Retrieved 17 December 2019.

- Maker MH (2006), AI@50: AI Past, Present, Future, Dartmouth College, archived from the original on 8 October 2008, retrieved 16 October 2008

- Markoff J (14 October 2005), "Behind Artificial Intelligence, a Squadron of Bright Real People", The New York Times, retrieved 16 October 2008

- McCarthy J, Minsky M, Rochester N, Shannon C (31 August 1955), A Proposal for the Dartmouth Summer Research Project on Artificial Intelligence, archived from the original on 30 September 2008, retrieved 16 October 2008

- McCarthy J, Mitchie D (eds.), Machine Intelligence 4, Edinburgh University Press, pp. 463–502, retrieved 16 October 2008

- McCorduck P (2004), Machines Who Think (2nd ed.), Natick, MA: A. K. Peters, Ltd., OCLC 52197627.

- Menabrea LF, Lovelace A (1843), "Sketch of the Analytical Engine Invented by Charles Babbage", Scientific Memoirs, 3, retrieved 29 August 2008. With notes upon the Memoir by the Translator.

- Minsky M (1967), Computation: Finite and Infinite Machines, Englewood Cliffs, N.J.: Prentice-Hall

- OCLC 16924756

- Minsky M (1974), A Framework for Representing Knowledge, retrieved 16 October 2008

- OCLC 223353010

- Minsky M (2001), It's 2001. Where Is HAL?, Dr. Dobb's Technetcast, retrieved 8 August 2009

- Moravec H (1976), The Role of Raw Power in Intelligence, archived from the original on 3 March 2016, retrieved 16 October 2008

- Moravec H (1988), Mind Children, Harvard University Press, OCLC 245755104

- Mulvihill M (17 October 2012). "1907: was the first portable computer design Irish?". Ingenious Ireland.

- Needham J (1986). Science and Civilization in China: Volume 2. Taipei: Caves Books Ltd.

- OCLC 246968117

- OCLC 313139906

- OCLC 246584055

- Nick M (2005), Al Jazari: The Ingenious 13th Century Muslim Mechanic, Al Shindagah, retrieved 16 October 2008.

- O'Connor KM (1994), The alchemical creation of life (takwin) and other concepts of Genesis in medieval Islam, University of Pennsylvania, pp. 1–435, retrieved 10 January 2007

- Olsen S (10 May 2004), Newsmaker: Google's man behind the curtain, CNET, retrieved 17 October 2008.

- Olsen S (18 August 2006), Spying an intelligent search engine, CNET, retrieved 17 October 2008.

- OCLC 249625842.

- Poole D, Mackworth A, Goebel R (1998), Computational Intelligence: A Logical Approach, Oxford University Press, ISBN 978-0-19-510270-3.

- Quevedo LT (1914), "Revista de la Academia de Ciencias Exacta", Ensayos sobre Automática – Su definicion. Extension teórica de sus aplicaciones, vol. 12, pp. 391–418

- Quevedo LT (1915), "Revue Génerale des Sciences Pures et Appliquées", Essais sur l'Automatique - Sa définition. Etendue théorique de ses applications, vol. 2, pp. 601–611

- Randall B (1982), "From Analytical Engine to Electronic Digital Computer: The Contributions of Ludgate, Torres, and Bush", fano.co.uk, retrieved 29 October 2018

- ISBN 0-13-790395-2.

- LCCN 20190474.

- S2CID 2126705, archived from the original on 3 March 2016, retrieved 20 August 2007.

- doi:10.1017/S0140525X00005756, archived from the original on 10 December 2007, retrieved 13 May 2009.

- Simon HA (1965), The Shape of Automation for Men and Management, New York: Harper & Row.

- Skillings J (2006), Newsmaker: Getting machines to think like us, CNET, retrieved 8 October 2008.

- Tascarella P (14 August 2006), "Robotics firms find fundraising struggle, with venture capital shy", Pittsburgh Business Times, retrieved 15 March 2016.

- Turing A (1936–1937), "On Computable Numbers, with an Application to the Entscheidungsproblem", Proceedings of the London Mathematical Society, 2, 42 (42): 230–265, S2CID 73712, retrieved 8 October 2008.

- ISSN 0026-4423.

- Turkle S (1984). The second self: computers and the human spirit. Simon and Schuster. OCLC 895659909.

- Wason PC, Shapiro D (1966). "Reasoning". In Foss, B. M. (ed.). New horizons in psychology. Harmondsworth: Penguin. Retrieved 18 November 2019.

- OCLC 10952283.