Logarithm


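In mathematics, the logarithm of a number is the exponent to which a fixed base must be raised to produce that number. For example, the logarithm of 1000 to base 10 is 3, because $10^3 = 1000$.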

The logarithm base 10 is called the decimal or common logarithm and is commonly used in science and engineering. The natural logarithm has the number e ≈ 2.718 as its base; its use is widespread in mathematics and physics, because of its very simple derivative. The binary logarithm uses base 2 and is frequently used in computer science.

Logarithms were introduced by John Napier in 1614 as a means of simplifying calculations.[1] They were rapidly adopted by navigators, scientists, engineers, surveyors and others to perform high-accuracy computations more easily. Using logarithm tables, tedious multi-digit multiplication steps can be replaced by table look-ups and simpler addition. This is possible because the logarithm of a product is the sum of the logarithms of the factors:

$$\log_b(xy) = \log_b x + \log_b y,$$

provided that b, x and y are all positive and b ≠ 1.

The concept of logarithm as the inverse of exponentiation extends to other mathematical structures as well. However, in general settings, the logarithm tends to be a multi-valued function. For example, the complex logarithm is the multi-valued inverse of the complex exponential function. Similarly, the discrete logarithm is the multi-valued inverse of the exponential function in finite groups; it has uses in public-key cryptography.

Motivation

Addition, multiplication, and exponentiation are three of the most fundamental arithmetic operations. The inverse of addition is subtraction, and the inverse of multiplication is division. Similarly, a logarithm is the inverse operation of exponentiation. Exponentiation is when a number b, the base, is raised to a certain power y, the exponent, to give a value x; this is denoted $b^y = x$.

The logarithm of base b is the inverse operation, which provides the output y from the input x. That is, $y = \log_b x$ is equivalent to $x = b^y$ if b is a positive real number. (If b is not a positive real number, both exponentiation and logarithm can be defined but may take several values, which makes definitions much more complicated.)

One of the main historical motivations for introducing logarithms is the formula

$$\log_b(xy) = \log_b x + \log_b y,$$

which allowed multiplication to be reduced to addition and table look-ups before the invention of computers.

Definition

Given a positive real number b such that b ≠ 1, the logarithm of a positive real number x with respect to base b[nb 1] is the exponent by which b must be raised to yield x. In other words, the logarithm of x to base b is the unique real number y such that $b^y = x$.[3]

The logarithm is denoted "$\log_b x$" (pronounced as "the logarithm of x to base b", "the base-b logarithm of x", or most commonly "the log, base b, of x").

An equivalent and more succinct definition is that the function $\log_b$ is the inverse function to the function $x \mapsto b^x$.

Examples

- $\log_2 16 = 4$, since $2^4 = 2 \times 2 \times 2 \times 2 = 16$.

- Logarithms can also be negative: $\log_2 \tfrac{1}{2} = -1$, since $2^{-1} = \tfrac{1}{2^1} = \tfrac{1}{2}$.

- $\log_{10} 150$ is approximately 2.176, which lies between 2 and 3, just as 150 lies between $10^2 = 100$ and $10^3 = 1000$.

- For any base b, $\log_b b = 1$ and $\log_b 1 = 0$, since $b^1 = b$ and $b^0 = 1$, respectively.

Logarithmic identities

Several important formulas, sometimes called logarithmic identities or logarithmic laws, relate logarithms to one another.[4]

Product, quotient, power, and root

The logarithm of a product is the sum of the logarithms of the numbers being multiplied; the logarithm of the ratio of two numbers is the difference of the logarithms. The logarithm of the p-th power of a number is p times the logarithm of the number itself; the logarithm of a p-th root is the logarithm of the number divided by p. The following table lists these identities with examples. Each of the identities can be derived after substitution of the logarithm definitions $x = b^{\log_b x}$ or $y = b^{\log_b y}$ in the left-hand sides.

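| | Formula | Example |
|---|---|---|
| Product | $\log_b(xy) = \log_b x + \log_b y$ | $\log_3 243 = \log_3(9 \cdot 27) = \log_3 9 + \log_3 27 = 2 + 3 = 5$ |
| Quotient | $\log_b \frac{x}{y} = \log_b x - \log_b y$ | $\log_2 16 = \log_2 \frac{64}{4} = \log_2 64 - \log_2 4 = 6 - 2 = 4$ |
| Power | $\log_b(x^p) = p \log_b x$ | $\log_2 64 = \log_2(2^6) = 6 \log_2 2 = 6$ |
| Root | $\log_b \sqrt[p]{x} = \frac{\log_b x}{p}$ | $\log_{10} \sqrt{1000} = \frac{1}{2} \log_{10} 1000 = \frac{3}{2} = 1.5$ |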
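The four identities can be spot-checked numerically. The following is a minimal sketch, not part of the original text; the helper name `log_b` and the sample values are illustrative assumptions, and floating-point results are compared with a tolerance rather than for exact equality.

```python
import math

def log_b(x: float, b: float) -> float:
    """Logarithm of x to base b (assumes x > 0, b > 0, b != 1)."""
    return math.log(x) / math.log(b)

# Illustrative sample values (any positive x, y and base b != 1 would do).
b, x, y, p = 3.0, 9.0, 27.0, 4.0

product_ok = math.isclose(log_b(x * y, b), log_b(x, b) + log_b(y, b))
quotient_ok = math.isclose(log_b(x / y, b), log_b(x, b) - log_b(y, b))
power_ok = math.isclose(log_b(x ** p, b), p * log_b(x, b))
root_ok = math.isclose(log_b(x ** (1 / p), b), log_b(x, b) / p)

print(product_ok, quotient_ok, power_ok, root_ok)
```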

Change of base

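The logarithm $\log_b x$ can be computed from the logarithms of x and b with respect to an arbitrary base k using the following formula:[nb 2]

$$\log_b x = \frac{\log_k x}{\log_k b}.$$

Typical scientific calculators calculate the logarithms to bases 10 and e. Logarithms with respect to any base b can be determined using either of these two logarithms by the previous formula:

$$\log_b x = \frac{\log_{10} x}{\log_{10} b} = \frac{\log_e x}{\log_e b}.$$

Given a number x and its logarithm $y = \log_b x$ to an unknown base b, the base is given by:

$$b = x^{\frac{1}{y}},$$

which can be seen from taking the defining equation $x = b^{\log_b x} = b^y$ to the power of $\tfrac{1}{y}$.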
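As an illustration of the change-of-base relation, the sketch below computes a logarithm to an arbitrary base from common logarithms and recovers an unknown base from x and y. The function names `log_base` and `recover_base` are hypothetical names chosen for this example.

```python
import math

def log_base(x: float, b: float) -> float:
    """log_b(x) obtained from base-10 logarithms via the change-of-base formula."""
    return math.log10(x) / math.log10(b)

def recover_base(x: float, y: float) -> float:
    """Base b such that log_b(x) = y, that is b = x**(1/y) (assumes y != 0)."""
    return x ** (1.0 / y)

print(log_base(8.0, 2.0))      # close to 3
print(recover_base(8.0, 3.0))  # close to 2
```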

Particular bases

Among all choices for the base, three are particularly common. These are b = 10, b = e (the irrational mathematical constant e ≈ 2.71828183), and b = 2 (the binary logarithm). In mathematical analysis, the logarithm base e is widespread because of analytical properties explained below. On the other hand, base 10 logarithms (the common logarithm) are easy to use for manual calculations in the decimal number system:[6]

$$\log_{10}(10x) = \log_{10} 10 + \log_{10} x = 1 + \log_{10} x.$$

Thus, log10 (x) is related to the number of decimal digits of a positive integer x: the number of digits is the smallest integer strictly greater than log10 (x).
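The link between the common logarithm and the number of decimal digits can be sketched as follows. The helper name `decimal_digits` is an assumption for this example, and because `math.log10` works in floating point, the result may be unreliable for very large integers that sit right next to a power of ten.

```python
import math

def decimal_digits(n: int) -> int:
    """Number of decimal digits of a positive integer n, via floor(log10(n)) + 1."""
    if n <= 0:
        raise ValueError("n must be a positive integer")
    return math.floor(math.log10(n)) + 1

print(decimal_digits(5986))  # log10(5986) is about 3.78, so the count is 4
```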

Many disciplines write log x as an abbreviation for logb x when the intended base can be inferred based on the context or discipline (or when the base is indeterminate or immaterial). In computer science, log usually refers to log2, and in mathematics log usually refers to loge .[10] In other contexts, log often means log10 .[11] The following table lists common notations for logarithms to these bases and the fields where they are used. The "ISO notation" column lists designations suggested by the International Organization for Standardization.[12]

| Base b | Name for logb x | ISO notation | Other notations | Used in |
|---|---|---|---|---|
| 2 | binary logarithm | lb x[13] | ld x, log x, lg x,[14] log2 x | computer science, information theory, bioinformatics, music theory, photography |
| e | natural logarithm | ln x[nb 3] | log x (in mathematics[18] and many programming languages[nb 4]), loge x | mathematics, physics, chemistry, statistics, economics, information theory, and engineering |
| 10 | common logarithm | lg x | log x, log10 x (in engineering, biology, astronomy) | various engineering fields (see decibel and see below), logarithm tables, handheld calculators, spectroscopy |
| b | logarithm to base b | logb x | | mathematics |

History

The history of logarithms in seventeenth-century Europe saw the discovery of a new function that extended the realm of analysis beyond the scope of algebraic methods. The method of logarithms was publicly propounded by John Napier in 1614, in a book titled Mirifici Logarithmorum Canonis Descriptio (Description of the Wonderful Canon of Logarithms).[19][20] Prior to Napier's invention, there had been other techniques of similar scopes, such as the prosthaphaeresis or the use of tables of progressions, extensively developed by Jost Bürgi around 1600.[21][22] Napier coined the term for logarithm in Middle Latin, "logarithmus," derived from the Greek, literally meaning, "ratio-number," from logos "proportion, ratio, word" + arithmos "number".

The common logarithm of a number is the index of that power of ten which equals the number.[23] Speaking of a number as requiring so many figures is a rough allusion to common logarithm, and was referred to by Archimedes as the "order of a number".[24] The first real logarithms were heuristic methods to turn multiplication into addition, thus facilitating rapid computation. Some of these methods used tables derived from trigonometric identities.[25] Such methods are called prosthaphaeresis.

Invention of the

Before Euler developed his modern conception of complex natural logarithms, Roger Cotes had a nearly equivalent result when he showed in 1714 that[27]

$$\log(\cos\theta + i\sin\theta) = i\theta.$$

Logarithm tables, slide rules, and historical applications

By simplifying difficult calculations before calculators and computers became available, logarithms contributed to the advance of science, especially astronomy. They were critical to advances in surveying, celestial navigation, and other domains. Pierre-Simon Laplace called logarithms

- "...[a]n admirable artifice which, by reducing to a few days the labour of many months, doubles the life of the astronomer, and spares him the errors and disgust inseparable from long calculations."[28]

As the function $f(x) = b^x$ is the inverse function of $\log_b x$, it has been called an antilogarithm.[29] Nowadays, this function is more commonly called an exponential function.

Log tables

A key tool that enabled the practical use of logarithms was the table of logarithms.

Greater accuracy can be obtained by interpolation:

The value of $10^x$ can be determined by reverse look up in the same table, since the logarithm is a monotonic function.

Computations

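The product and quotient of two positive numbers c and d were routinely calculated as the sum and difference of their logarithms. The product cd or quotient c/d came from looking up the antilogarithm of the sum or difference, via the same table:

$$cd = 10^{\log_{10} c}\,10^{\log_{10} d} = 10^{\log_{10} c + \log_{10} d}$$

and

$$\frac{c}{d} = c\,d^{-1} = 10^{\log_{10} c - \log_{10} d}.$$

For manual calculations that demand any appreciable precision, performing the lookups of the two logarithms, calculating their sum or difference, and looking up the antilogarithm is much faster than performing the multiplication by earlier methods such as prosthaphaeresis, which relies on trigonometric identities.

Calculations of powers and roots are reduced to multiplications or divisions and lookups by

$$c^d = \left(10^{\log_{10} c}\right)^d = 10^{d \log_{10} c}$$

and

$$\sqrt[d]{c} = c^{\frac{1}{d}} = 10^{\frac{1}{d}\log_{10} c}.$$

Trigonometric calculations were facilitated by tables that contained the common logarithms of trigonometric functions.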
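The table-based procedure can be imitated in a few lines. This is only a sketch under stated assumptions: `table_log10` stands in for a printed four-place table by rounding `math.log10`, and the antilogarithm is taken directly with `10 ** x` instead of a reverse table look-up. The function names are hypothetical.

```python
import math

def table_log10(x: float, places: int = 4) -> float:
    """Stand-in for a printed table: log10(x) rounded to a fixed number of places."""
    return round(math.log10(x), places)

def multiply_with_logs(c: float, d: float, places: int = 4) -> float:
    """Product c*d obtained by adding table logarithms and taking the antilogarithm."""
    return 10 ** (table_log10(c, places) + table_log10(d, places))

def root_with_logs(c: float, d: float, places: int = 4) -> float:
    """d-th root of c obtained by dividing the table logarithm by d."""
    return 10 ** (table_log10(c, places) / d)

approx = multiply_with_logs(3.542, 2.718)
exact = 3.542 * 2.718
print(approx, exact)  # the two agree to roughly the precision of the table
```

The limited number of table places bounds the accuracy of the result, which is the trade-off the historical method accepted in exchange for speed.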

Slide rules

Another critical application was the slide rule, a pair of logarithmically divided scales used for calculation.

For example, adding the distance from 1 to 2 on the lower scale to the distance from 1 to 3 on the upper scale yields a product of 6, which is read off at the lower part. The slide rule was an essential calculating tool for engineers and scientists until the 1970s, because it allows, at the expense of precision, much faster computation than techniques based on tables.[32]

Analytic properties

A deeper study of logarithms requires the concept of a function. A function is a rule that, given one number, produces another number.[33] An example is the function producing the x-th power of b from any real number x, where the base b is a fixed number. This function is written as $f(x) = b^x$. When b is positive and unequal to 1, we show below that f is invertible when considered as a function from the reals to the positive reals.

Existence

Let b be a positive real number not equal to 1 and let $f(x) = b^x$.

It is a standard result in real analysis that any continuous strictly monotonic function is bijective between its domain and range. This fact follows from the intermediate value theorem.[34] Now, f is strictly increasing (for b > 1), or strictly decreasing (for 0 < b < 1),[35] is continuous, has domain $\mathbb{R}$, and has range $\mathbb{R}_{>0}$. Therefore, f is a bijection from $\mathbb{R}$ to $\mathbb{R}_{>0}$. In other words, for each positive real number y, there is exactly one real number x such that $b^x = y$.

We let $\log_b \colon \mathbb{R}_{>0} \to \mathbb{R}$ denote the inverse of f. That is, $\log_b y$ is the unique real number x such that $b^x = y$. This function is called the base-b logarithm function or logarithmic function (or just logarithm).

Characterization by the product formula

The function $\log_b x$ can also be essentially characterized by the product formula

$$\log_b(xy) = \log_b x + \log_b y.$$

Graph of the logarithm function

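As discussed above, the function $\log_b$ is the inverse to the exponential function $x \mapsto b^x$. Therefore, their graphs correspond to each other upon exchanging the x- and the y-coordinates (or upon reflection at the diagonal line x = y).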

Derivative and antiderivative

Analytic properties of functions pass to their inverses.[34] Thus, as $f(x) = b^x$ is a continuous and differentiable function, so is $\log_b y$. Roughly, a continuous function is differentiable if its graph has no sharp "corners". Moreover, as the derivative of f(x) evaluates to $\ln(b)\,b^x$ by the properties of the exponential function, the chain rule implies that the derivative of $\log_b x$ is given by[35][37]

$$\frac{d}{dx}\log_b x = \frac{1}{x \ln b}.$$

The derivative of ln(x) is 1/x; this implies that ln(x) is the unique antiderivative of 1/x that has the value 0 for x = 1. This very simple formula is what motivated calling the natural logarithm "natural"; it is also one of the main reasons for the importance of the constant e.

The derivative with a generalized functional argument f(x) is

$$\frac{d}{dx}\ln f(x) = \frac{f'(x)}{f(x)}.$$

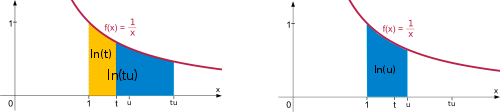

Integral representation of the natural logarithm

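The natural logarithm of t can be defined as the definite integral

$$\ln t = \int_1^t \frac{1}{x}\,dx.$$

This definition does not rely on the exponential function. The expression on the right-hand side satisfies the fundamental properties of a logarithm; for example, the product formula ln(tu) = ln(t) + ln(u) can be deduced as

$$\ln(tu) = \int_1^{tu} \frac{1}{x}\,dx \ \overset{(1)}{=}\ \int_1^t \frac{1}{x}\,dx + \int_t^{tu} \frac{1}{x}\,dx \ \overset{(2)}{=}\ \ln(t) + \int_1^u \frac{1}{w}\,dw = \ln(t) + \ln(u).$$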

The equality (1) splits the integral into two parts, while the equality (2) is a change of variable (w = x/t). In the illustration below, the splitting corresponds to dividing the area into the yellow and blue parts. Rescaling the left hand blue area vertically by the factor t and shrinking it by the same factor horizontally does not change its size. Moving it appropriately, the area fits the graph of the function f(x) = 1/x again. Therefore, the left hand blue area, which is the integral of f(x) from t to tu is the same as the integral from 1 to u. This justifies the equality (2) with a more geometric proof.

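The power formula $\ln(t^r) = r \ln(t)$ may be derived in a similar way:

$$\ln(t^r) = \int_1^{t^r} \frac{1}{x}\,dx = \int_1^t \frac{1}{w^r}\left(r w^{r-1}\,dw\right) = r \int_1^t \frac{1}{w}\,dw = r \ln(t).$$

The second equality uses the change of variable $w = x^{1/r}$.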
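The integral representation lends itself to a quick numerical check. The sketch below approximates the integral of 1/x from 1 to t with the midpoint rule (a choice made for this example, not something the text prescribes) and compares the result with the product formula; the name `ln_by_integral` and the step count are assumptions.

```python
import math

def ln_by_integral(t: float, steps: int = 100_000) -> float:
    """Approximate ln(t) as the integral of 1/x from 1 to t (midpoint rule)."""
    if t <= 0:
        raise ValueError("t must be positive")
    h = (t - 1.0) / steps  # negative when t < 1, which yields a negative logarithm
    return h * sum(1.0 / (1.0 + (i + 0.5) * h) for i in range(steps))

t, u = 2.0, 3.0
lhs = ln_by_integral(t * u)
rhs = ln_by_integral(t) + ln_by_integral(u)
print(lhs, rhs, math.log(6.0))  # all three values should agree closely
```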

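The sum over the reciprocals of natural numbers,

$$1 + \frac{1}{2} + \frac{1}{3} + \cdots + \frac{1}{n} = \sum_{k=1}^{n} \frac{1}{k},$$

is called the harmonic series. It is closely tied to the natural logarithm: as n tends to infinity, the difference

$$\sum_{k=1}^{n} \frac{1}{k} - \ln(n)$$

converges to a number known as the Euler–Mascheroni constant γ = 0.5772....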

Transcendence of the logarithm

Real numbers that are not algebraic are called transcendental;[43] for example, π and e are such numbers, but $\sqrt{2 - \sqrt{3}}$ is not. Almost all real numbers are transcendental. The logarithm is an example of a transcendental function. The Gelfond–Schneider theorem asserts that logarithms usually take transcendental, i.e. "difficult" values.[44]

Calculation

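Logarithms are easy to compute in some cases, such as log10 (1000) = 3. In general, logarithms can be calculated using power series or the arithmetic–geometric mean, or be retrieved from a precalculated logarithm table that provides a fixed precision.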

Power series

Taylor series

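For any real number z that satisfies 0 < z ≤ 2, the following formula holds:[nb 5][50]

$$\ln(z) = \frac{(z-1)^1}{1} - \frac{(z-1)^2}{2} + \frac{(z-1)^3}{3} - \frac{(z-1)^4}{4} + \cdots = \sum_{k=1}^{\infty} (-1)^{k+1} \frac{(z-1)^k}{k}.$$

Equating the function ln(z) to this infinite sum (series) is shorthand for saying that the function can be approximated to a more and more accurate value by the following expressions (known as partial sums):

$$(z-1), \quad (z-1) - \frac{(z-1)^2}{2}, \quad (z-1) - \frac{(z-1)^2}{2} + \frac{(z-1)^3}{3}, \quad \ldots$$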

For example, with z = 1.5 the third approximation yields 0.4167, which is about 0.011 greater than ln(1.5) = 0.405465, and the ninth approximation yields 0.40553, which is only about 0.0001 greater. The nth partial sum can approximate ln(z) with arbitrary precision, provided the number of summands n is large enough.
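The partial sums quoted for z = 1.5 can be reproduced with a short script. This is a minimal sketch; the function name `ln_taylor` is an assumption made for this example.

```python
import math

def ln_taylor(z: float, n: int) -> float:
    """n-th partial sum of the Taylor series of ln(z) at z = 1 (for 0 < z <= 2)."""
    if not 0 < z <= 2:
        raise ValueError("the series converges only for 0 < z <= 2")
    u = z - 1.0
    return sum((-1) ** (k + 1) * u ** k / k for k in range(1, n + 1))

third = ln_taylor(1.5, 3)
ninth = ln_taylor(1.5, 9)
print(third, ninth, math.log(1.5))
```

Convergence slows markedly as z approaches 2, which is one reason other series are preferred in practice.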

In elementary calculus, the series is said to converge to the function ln(z), and the function is the limit of the series. It is the Taylor series of the natural logarithm at z = 1. The Taylor series of ln(z) provides a particularly useful approximation to ln(1 + z) when z is small, |z| < 1, since then

$$\ln(1+z) = z - \frac{z^2}{2} + \frac{z^3}{3} - \cdots \approx z.$$

For example, with z = 0.1 the first-order approximation gives ln(1.1) ≈ 0.1, which is less than 5% off the correct value 0.0953.

Inverse hyperbolic tangent

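Another series is based on the inverse hyperbolic tangent function:

$$\ln(z) = 2 \operatorname{artanh} \frac{z-1}{z+1} = 2\left(\frac{z-1}{z+1} + \frac{1}{3}\left(\frac{z-1}{z+1}\right)^3 + \frac{1}{5}\left(\frac{z-1}{z+1}\right)^5 + \cdots\right),$$

for any real number z > 0.[nb 6]

A closely related method can be used to compute the logarithm of integers. Putting $z = \frac{n+1}{n}$ in the above series, it follows that:

$$\ln(n+1) = \ln(n) + 2 \sum_{k=0}^{\infty} \frac{1}{2k+1} \left(\frac{1}{2n+1}\right)^{2k+1}.$$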
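Both forms of the inverse hyperbolic tangent series can be sketched in code. The series used is ln(z) = 2(u + u³/3 + u⁵/5 + ...) with u = (z − 1)/(z + 1), and for integers u = 1/(2n + 1). The function names and the fixed number of terms are assumptions for this example; a production routine would choose the number of terms from the required precision.

```python
import math

def ln_artanh(z: float, terms: int = 30) -> float:
    """ln(z) for z > 0 from the series 2 * sum(u**(2k+1) / (2k+1)), u = (z-1)/(z+1)."""
    if z <= 0:
        raise ValueError("z must be positive")
    u = (z - 1.0) / (z + 1.0)
    return 2.0 * sum(u ** (2 * k + 1) / (2 * k + 1) for k in range(terms))

def ln_next_integer(n: int, ln_n: float, terms: int = 30) -> float:
    """ln(n + 1) computed from a known value of ln(n), using u = 1 / (2n + 1)."""
    u = 1.0 / (2 * n + 1)
    return ln_n + 2.0 * sum(u ** (2 * k + 1) / (2 * k + 1) for k in range(terms))

ln2 = ln_next_integer(1, 0.0)  # ln(1) = 0, so this gives ln(2)
ln3 = ln_next_integer(2, ln2)
print(ln2, ln3, ln_artanh(1.5))
```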

Arithmetic–geometric mean approximation

The arithmetic–geometric mean yields high-precision approximations of the natural logarithm. Sasaki and Kanada showed in 1982 that it was particularly fast for precisions between 400 and 1000 decimal places, while Taylor series methods were typically faster when less precision was needed. In their work ln(x) is approximated to a precision of $2^{-p}$ (or p precise bits) by the following formula (due to Carl Friedrich Gauss):[51][52]

$$\ln(x) \approx \frac{\pi}{2\,M\!\left(1, 2^{2-m}/x\right)} - m \ln(2).$$

Here M(x, y) denotes the arithmetic–geometric mean of x and y. It is obtained by repeatedly calculating the average (x + y)/2 (arithmetic mean) and $\sqrt{xy}$ (geometric mean) of x and y, then letting those two numbers become the next x and y. The two numbers quickly converge to a common limit which is the value of M(x, y). m is chosen such that

$$x\,2^m > 2^{p/2}$$

to ensure the required precision. A larger m makes the M(x, y) calculation take more steps (the initial x and y are farther apart so it takes more steps to converge) but gives more precision. The constants π and ln(2) can be calculated with quickly converging series.
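A double-precision sketch of the arithmetic–geometric mean approach is given below. It uses the approximation ln(x) ≈ π / (2 M(1, 2^(2−m)/x)) − m ln 2 with m chosen so that x·2^m exceeds 2^(p/2). The function names, the default p = 53, and the use of `math.pi` and `math.log(2.0)` for the two constants are assumptions made to keep the example short; an arbitrary-precision implementation would compute those constants by series.

```python
import math

def agm(a: float, b: float) -> float:
    """Arithmetic-geometric mean of two positive numbers."""
    for _ in range(100):  # convergence is quadratic, so far fewer steps are needed
        if abs(a - b) <= 1e-15 * a:
            break
        a, b = (a + b) / 2.0, math.sqrt(a * b)
    return a

def ln_agm(x: float, p: int = 53) -> float:
    """Approximate ln(x) to about p bits with the AGM formula (illustrative only)."""
    if x <= 0:
        raise ValueError("x must be positive")
    m = 0
    while x * 2.0 ** m <= 2.0 ** (p / 2):  # pick m with x * 2**m > 2**(p/2)
        m += 1
    return math.pi / (2.0 * agm(1.0, 2.0 ** (2 - m) / x)) - m * math.log(2.0)

print(ln_agm(10.0), math.log(10.0))
```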

Feynman's algorithm

While at Los Alamos National Laboratory working on the Manhattan Project, Richard Feynman developed a bit-processing algorithm to compute the logarithm that is similar to long division and was later used in the Connection Machine. The algorithm relies on the fact that every real number x where 1 < x < 2 can be represented as a product of distinct factors of the form $1 + 2^{-k}$. The algorithm sequentially builds that product P, starting with P = 1 and k = 1: if $P \cdot (1 + 2^{-k}) < x$, then it changes P to $P \cdot (1 + 2^{-k})$. It then increases k by one regardless. The algorithm stops when k is large enough to give the desired accuracy. Because log(x) is the sum of the terms of the form $\log(1 + 2^{-k})$ corresponding to those k for which the factor $1 + 2^{-k}$ was included in the product P, log(x) may be computed by simple addition, using a table of $\log(1 + 2^{-k})$ for all k. Any base may be used for the logarithm table.[53]
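The steps described above can be sketched as follows. This is an illustration of the described procedure, not a reconstruction of any historical implementation: the function name `feynman_log`, the number of bits, and the use of `math.log` to fill the table of log(1 + 2^−k) values are assumptions, and the multiplications are done in floating point rather than with shifts and adds.

```python
import math

def feynman_log(x: float, bits: int = 50) -> float:
    """Natural logarithm of x for 1 < x < 2, built from factors (1 + 2**-k)."""
    if not 1.0 < x < 2.0:
        raise ValueError("x must satisfy 1 < x < 2")
    # Stand-in for a precomputed table of log(1 + 2**-k), k = 1..bits.
    table = [math.log(1.0 + 2.0 ** -k) for k in range(1, bits + 1)]
    product, result = 1.0, 0.0
    for k in range(1, bits + 1):
        candidate = product * (1.0 + 2.0 ** -k)
        if candidate < x:           # keep the factor only if the product stays below x
            product = candidate
            result += table[k - 1]  # the logarithm is accumulated by addition alone
    return result

print(feynman_log(1.5), math.log(1.5))
```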

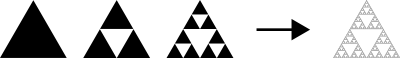

Applications

Logarithms have many applications inside and outside mathematics. Some of these occurrences are related to the notion of scale invariance. For example, each chamber of the shell of a nautilus is an approximate copy of the next one, scaled by a constant factor. This gives rise to a logarithmic spiral.[54] Benford's law on the distribution of leading digits can also be explained by scale invariance.[55] Logarithms are also linked to self-similarity. For example, logarithms appear in the analysis of algorithms that solve a problem by dividing it into two similar smaller problems and patching their solutions.[56] The dimensions of self-similar geometric shapes, that is, shapes whose parts resemble the overall picture are also based on logarithms. Logarithmic scales are useful for quantifying the relative change of a value as opposed to its absolute difference. Moreover, because the logarithmic function log(x) grows very slowly for large x, logarithmic scales are used to compress large-scale scientific data. Logarithms also occur in numerous scientific formulas, such as the Tsiolkovsky rocket equation, the Fenske equation, or the Nernst equation.

Logarithmic scale

Scientific quantities are often expressed as logarithms of other quantities, using a logarithmic scale. For example, the

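The strength of an earthquake is measured by taking the common logarithm of the energy emitted at the quake. This is used in the moment magnitude scale or the Richter magnitude scale.

In chemistry, pH is the decimal cologarithm (the negative of the decimal logarithm) of the activity of hydronium ions (the form hydrogen ions H+ take in water).[63] The activity of hydronium ions in neutral water is $10^{-7}$ mol·L$^{-1}$, hence a pH of 7.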

Psychology

Logarithms occur in several laws describing human perception.

Psychological studies found that individuals with little mathematics education tend to estimate quantities logarithmically, that is, they position a number on an unmarked line according to its logarithm, so that 10 is positioned as close to 100 as 100 is to 1000. Increasing education shifts this to a linear estimate (positioning 1000 10 times as far away) in some circumstances, while logarithms are used when the numbers to be plotted are difficult to plot linearly.[71][72]

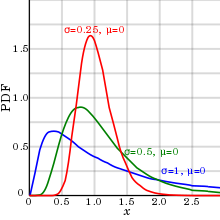

Probability theory and statistics

Logarithms arise in probability theory: the law of large numbers dictates that, for a fair coin, as the number of coin-tosses increases to infinity, the observed proportion of heads approaches one-half. The fluctuations of this proportion about one-half are described by the law of the iterated logarithm.[73]

Logarithms also occur in log-normal distributions. When the logarithm of a random variable has a normal distribution, the variable is said to have a log-normal distribution.[74] Log-normal distributions are encountered in many fields, wherever a variable is formed as the product of many independent positive random variables, for example in the study of turbulence.[75]

Logarithms are used for

Benford's law describes the occurrence of digits in many data sets, such as heights of buildings. According to Benford's law, the probability that the first decimal-digit of an item in the data sample is d (from 1 to 9) equals log10 (d + 1) − log10 (d), regardless of the unit of measurement.[77] Thus, about 30% of the data can be expected to have 1 as first digit, 18% start with 2, etc. Auditors examine deviations from Benford's law to detect fraudulent accounting.[78]
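The leading-digit probabilities follow directly from the formula log10 (d + 1) − log10 (d). A minimal sketch, with the function name `benford_probability` chosen for this example:

```python
import math

def benford_probability(d: int) -> float:
    """Probability that the first decimal digit equals d (1..9) under Benford's law."""
    if not 1 <= d <= 9:
        raise ValueError("d must be a digit from 1 to 9")
    return math.log10(d + 1) - math.log10(d)

probabilities = {d: benford_probability(d) for d in range(1, 10)}
for d, prob in probabilities.items():
    print(d, round(prob, 3))
```

The nine probabilities sum to 1 because the sum telescopes to log10 (10) − log10 (1).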

The

Computational complexity

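For example, to find a number in a sorted list, the binary search algorithm checks the middle entry and proceeds with the half before or after the middle entry if the number is still not found. This algorithm requires, on average, log2 (N) comparisons, where N is the list's length.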
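The logarithmic cost of searching a sorted list can be observed by counting how many middle entries a binary search inspects. The sketch below is a standard textbook binary search with a probe counter added; the function name and the sample data are assumptions for this example.

```python
import math

def binary_search(items, target):
    """Return (index, probes): position of target in sorted items (or -1)
    and the number of middle entries that were inspected."""
    lo, hi, probes = 0, len(items) - 1, 0
    while lo <= hi:
        mid = (lo + hi) // 2
        probes += 1
        if items[mid] == target:
            return mid, probes
        if items[mid] < target:
            lo = mid + 1
        else:
            hi = mid - 1
    return -1, probes

data = list(range(0, 2_000_000, 2))  # 1,000,000 sorted even numbers
index, probes = binary_search(data, 1_234_568)
bound = math.floor(math.log2(len(data))) + 1
print(index, probes, bound)  # probes never exceeds floor(log2(N)) + 1
```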

A function f(x) is said to grow logarithmically if f(x) is (exactly or approximately) proportional to the logarithm of x.

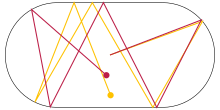

Entropy and chaos

Lyapunov exponents use logarithms to gauge the degree of chaoticity of a dynamical system. For example, for a particle moving on an oval billiard table, even small changes of the initial conditions result in very different paths of the particle. Such systems are chaotic in a deterministic way, because small measurement errors of the initial state predictably lead to largely different final states.[86] At least one Lyapunov exponent of a deterministically chaotic system is positive.

Fractals

Logarithms occur in definitions of the dimension of fractals.

Music

Logarithms are related to musical tones and intervals. In equal temperament, the frequency ratio depends only on the interval between two tones, not on the specific frequency, or pitch, of the individual tones. For example, the note A has a frequency of 440 Hz and B-flat has a frequency of 466 Hz. The interval between A and B-flat is a semitone, as is the one between B-flat and B (frequency 493 Hz). Accordingly, the frequency ratios agree:

$$\frac{466}{440} \approx \frac{493}{466} \approx 1.059 \approx \sqrt[12]{2}.$$

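| Interval (the two tones are played at the same time) | 1/12 tone | Semitone | Just major third | Major third | Tritone | Octave |
|---|---|---|---|---|---|---|
| Frequency ratio r | $2^{1/72} \approx 1.0097$ | $2^{1/12} \approx 1.0595$ | $\tfrac{5}{4} = 1.25$ | $2^{4/12} = \sqrt[3]{2} \approx 1.2599$ | $2^{6/12} = \sqrt{2} \approx 1.4142$ | $2^{12/12} = 2$ |
| Corresponding number of semitones, $\log_{\sqrt[12]{2}}(r) = 12 \log_2(r)$ | 1/6 | 1 | ≈ 3.8631 | 4 | 6 | 12 |
| Corresponding number of cents, $\log_{\sqrt[1200]{2}}(r) = 1200 \log_2(r)$ | 16 2/3 | 100 | ≈ 386.31 | 400 | 600 | 1200 |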
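Interval sizes can be computed from a frequency ratio r as 12 log2 (r) semitones or 1200 log2 (r) cents. The following sketch applies this to the ratios mentioned in this section; the function names are assumptions for this example.

```python
import math

def semitones(ratio: float) -> float:
    """Size of an interval in equal-tempered semitones: 12 * log2(ratio)."""
    return 12.0 * math.log2(ratio)

def cents(ratio: float) -> float:
    """Size of an interval in cents: 1200 * log2(ratio)."""
    return 1200.0 * math.log2(ratio)

print(semitones(466 / 440))  # A to B-flat, close to one semitone
print(cents(5 / 4))          # just major third, about 386 cents
print(semitones(2.0))        # octave, 12 semitones
```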

Number theory

Natural logarithms are closely linked to counting prime numbers (2, 3, 5, 7, 11, ...), an important topic in number theory. For any integer x, the quantity of prime numbers less than or equal to x is denoted π(x). The prime number theorem asserts that π(x) is approximately given by

$$\frac{x}{\ln(x)},$$

in the sense that the ratio of π(x) and that fraction approaches 1 when x tends to infinity.
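The approximation can be compared with an exact count for moderate x. The sketch below counts primes with a sieve of Eratosthenes (an implementation choice for this example, with the hypothetical name `prime_count`) and prints the ratio of π(x) to x / ln(x), which drifts slowly toward 1.

```python
import math

def prime_count(x: int) -> int:
    """pi(x): number of primes <= x, counted with a sieve of Eratosthenes."""
    if x < 2:
        return 0
    sieve = bytearray([1]) * (x + 1)
    sieve[0] = sieve[1] = 0
    for i in range(2, math.isqrt(x) + 1):
        if sieve[i]:
            # Cross out every multiple of i starting at i*i.
            sieve[i * i :: i] = bytearray(len(range(i * i, x + 1, i)))
    return sum(sieve)

for x in (10**3, 10**4, 10**5, 10**6):
    ratio = prime_count(x) / (x / math.log(x))
    print(x, prime_count(x), round(ratio, 4))
```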

The logarithm of n factorial, n! = 1 · 2 · ... · n, is given by

$$\ln(n!) = \ln(1) + \ln(2) + \cdots + \ln(n).$$

Generalizations

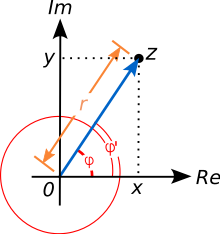

Complex logarithm

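All the complex numbers a that solve the equation

$$e^a = z$$

are called complex logarithms of z, when z is (considered as) a complex number. A complex number is commonly represented as z = x + iy, where x and y are real numbers and i is an imaginary unit, the square of which is −1. The polar form encodes a non-zero complex number z by its absolute value, that is, the (positive, real) distance r to the origin, and an angle φ between the real (x) axis and the line passing through both the origin and z. This angle is called the argument of z.

The absolute value r of z is given by

$$r = \sqrt{x^2 + y^2}.$$

Using the geometrical interpretation of sine and cosine and their periodicity in 2π, any complex number z may be denoted as

$$z = x + iy = r(\cos\varphi + i\sin\varphi) = r(\cos(\varphi + 2k\pi) + i\sin(\varphi + 2k\pi))$$

for any integer number k. Evidently the argument of z is not uniquely specified: both φ and φ' = φ + 2kπ are valid arguments of z for all integers k, because adding 2kπ radians or k⋅360°[nb 7] to φ corresponds to "winding" around the origin counter-clockwise by k turns. The resulting complex number is always z. One may select exactly one of the possible arguments of z as the so-called principal argument, denoted Arg(z), with a capital A, by requiring φ to belong to one conveniently selected turn, e.g. −π < φ ≤ π or 0 ≤ φ < 2π.

Euler's formula connects the trigonometric functions sine and cosine to the complex exponential:

$$e^{i\varphi} = \cos\varphi + i\sin\varphi.$$

Using this formula, and again the periodicity, the following identities hold:[94]

$$z = r(\cos\varphi + i\sin\varphi) = r(\cos(\varphi + 2k\pi) + i\sin(\varphi + 2k\pi)) = r e^{i(\varphi + 2k\pi)} = e^{\ln(r)} e^{i(\varphi + 2k\pi)} = e^{\ln(r) + i(\varphi + 2k\pi)} = e^{a_k},$$

where ln(r) is the unique real natural logarithm, $a_k$ denote the complex logarithms of z, and k is an arbitrary integer. Therefore, the complex logarithms of z, which are all those complex values $a_k$ for which the $a_k$-th power of e equals z, are the infinitely many values

$$a_k = \ln(r) + i(\varphi + 2k\pi), \quad \text{for arbitrary integers } k.$$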

Taking k such that φ + 2kπ is within the defined interval for the principal arguments, then ak is called the principal value of the logarithm, denoted Log(z), again with a capital L. The principal argument of any positive real number x is 0; hence Log(x) is a real number and equals the real (natural) logarithm. However, the above formulas for logarithms of products and powers do not generalize to the principal value of the complex logarithm.[95]
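The family of complex logarithms and the principal value can be explored with Python's standard `cmath` module. In this sketch the function name `complex_logs` and the range of k are assumptions; `cmath.polar` returns the absolute value together with an argument in the interval [−π, π], and `cmath.log` returns the principal value.

```python
import cmath
import math

def complex_logs(z: complex, ks=range(-2, 3)) -> dict:
    """Several complex logarithms a_k = ln(r) + i*(phi + 2*k*pi) of a non-zero z."""
    if z == 0:
        raise ValueError("the logarithm of 0 is undefined")
    r, phi = cmath.polar(z)  # r = |z|, phi = argument of z in [-pi, pi]
    return {k: complex(math.log(r), phi + 2 * math.pi * k) for k in ks}

z = complex(-1.0, 1.0)
logs = complex_logs(z)
for k, a in logs.items():
    print(k, a, cmath.exp(a))  # every a_k is mapped back to z by the exponential
```

The entry for k = 0 coincides with the principal value returned by `cmath.log(z)`.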

The illustration at the right depicts Log(z), confining the arguments of z to the interval (−π, π]. This way the corresponding branch of the complex logarithm has discontinuities all along the negative real x axis, which can be seen in the jump in the hue there. This discontinuity arises from jumping to the other boundary in the same branch, when crossing a boundary, i.e. not changing to the corresponding k-value of the continuously neighboring branch. Such a locus is called a branch cut.

Inverses of other exponential functions

Exponentiation occurs in many areas of mathematics and its inverse function is often referred to as the logarithm. For example, the

In the context of finite groups exponentiation is given by repeatedly multiplying one group element b with itself. The discrete logarithm is the integer n solving the equation

$$b^n = x,$$

where x is an element of the group.
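For a concrete finite group, the integers modulo a prime p under multiplication, the discrete logarithm can be found by brute force when p is small. The sketch below is illustrative only: the function name `discrete_log` is an assumption, and exhaustive search is not how cryptographic-size instances are approached.

```python
def discrete_log(b: int, x: int, p: int):
    """Smallest n >= 0 with b**n congruent to x modulo p, or None if none exists.
    Exhaustive search, suitable only for small p."""
    value = 1 % p
    target = x % p
    for n in range(p):
        if value == target:
            return n
        value = (value * b) % p
    return None

n = discrete_log(3, 13, 17)
print(n, pow(3, n, 17))  # pow(b, n, p) performs the forward exponentiation
```

The forward direction, `pow(b, n, p)`, is fast even for very large numbers, whereas no comparably fast general method is known for the inverse direction in suitably chosen groups.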

Further logarithm-like inverse functions include the double logarithm ln(ln(x)), the super- or hyper-4-logarithm (a slight variation of which is called iterated logarithm in computer science), the Lambert W function, and the logit. They are the inverse functions of the double exponential function, tetration, of $f(w) = we^w$,[101] and of the logistic function, respectively.[102]

Related concepts

From the perspective of

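The polylogarithm is the function defined by

$$\operatorname{Li}_s(z) = \sum_{k=1}^{\infty} \frac{z^k}{k^s}.$$

It is related to the natural logarithm by $\operatorname{Li}_1(z) = -\ln(1 - z)$.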

See also

- Decimal exponent (dex)

- Exponential function

- Index of logarithm articles

Notes

- ^ The restrictions on x and b are explained in the section "Analytic properties".

- ^ Proof: Taking the logarithm to base k of the defining identity $x = b^{\log_b x}$, one gets $\log_k x = \log_k\left(b^{\log_b x}\right) = \log_b x \cdot \log_k b$. The formula follows by solving for $\log_b x$.

- ^ Some mathematicians disapprove of this notation. In his 1985 autobiography, Paul Halmos criticized what he considered the "childish ln notation", which he said no mathematician had ever used.[15] The notation was invented by the 19th century mathematician I. Stringham.[16][17]

- ^ For example BASIC.

- ^ The same series holds for the principal value of the complex logarithm for complex numbers z satisfying |z − 1| < 1.

- ^ The same series holds for the principal value of the complex logarithm for complex numbers z with positive real part.

- ^ See radian for the conversion between 2π and 360 degrees.

References

- ^ Hobson, Ernest William (1914), John Napier and the invention of logarithms, 1614; a lecture, University of California Libraries, Cambridge : University Press

- OCLC 21118309

- ISBN 978-81-8431-755-8, chapter 1

- ^ All statements in this section can be found in Douglas Downing 2003, p. 275 or Kate & Bhapkar 2009, p. 1-1, for example.

- ISBN 978-0-07-005023-5, p. 21

- ^

Downing, Douglas (2003). Algebra the Easy Way. Barron's Educational Series. Hauppauge, NY: Barron's. chapter 17, p. 275. ISBN 978-0-7641-1972-9.

- ^

Wegener, Ingo (2005). Complexity Theory: Exploring the limits of efficient algorithms. Berlin, DE / New York, NY: ISBN 978-3-540-21045-0.

- ^

van der Lubbe, Jan C.A. (1997). Information Theory. Cambridge University Press. p. 3. ISBN 978-0-521-46760-5.

- ^

Allen, Elizabeth; Triantaphillidou, Sophie (2011). The Manual of Photography. Taylor & Francis. p. 228. ISBN 978-0-240-52037-7.

- ^

Goodrich, Michael T.; Tamassia, Roberto (2002). Algorithm Design: Foundations, analysis, and internet examples. John Wiley & Sons. p. 23.

One of the interesting and sometimes even surprising aspects of the analysis of data structures and algorithms is the ubiquitous presence of logarithms ... As is the custom in the computing literature, we omit writing the base b of the logarithm when b = 2 .

- ^

Parkhurst, David F. (2007). Introduction to Applied Mathematics for Environmental Science (illustrated ed.). Springer Science & Business Media. p. 288. ISBN 978-0-387-34228-3.

- ^

"Part 2: Mathematics". [title not cited]. Quantities and units (Report). ISO 80000-2.ISO 80000-2.

- ^

Gullberg, Jan (1997). Mathematics: From the birth of numbers. New York, NY: W.W. Norton & Co. ISBN 978-0-393-04002-9.

- ^ Perl, Yehoshua; Reingold, Edward M. (December 1977). "Understanding the complexity of interpolation search".

- ^

ISBN 978-0-387-96078-4.

- ^

Stringham, I. (1893). Uniplanar Algebra. The Berkeley Press. p. xiii.

Being part I of a propædeutic to the higher mathematical analysis

- ^

Freedman, Roy S. (2006). Introduction to Financial Technology. Amsterdam: Academic Press. p. 59. ISBN 978-0-12-370478-8.

- ^

Rudin, Walter (1984). "Theorem 3.29". Principles of Mathematical Analysis (3rd ed., International student ed.). Auckland, NZ: McGraw-Hill International. ISBN 978-0-07-085613-4.

- ^ Napier, John (1614), Mirifici Logarithmorum Canonis Descriptio [The Description of the Wonderful Canon of Logarithms] (in Latin), Edinburgh, Scotland: Andrew Hart The sequel ... Constructio was published posthumously: Napier, John (1619), Mirifici Logarithmorum Canonis Constructio [The Construction of the Wonderful Rule of Logarithms] (in Latin), Edinburgh: Andrew Hart Ian Bruce has made an annotated translation of both books (2012), available from 17centurymaths.com.

- ^ Hobson, Ernest William (1914), John Napier and the invention of logarithms, 1614, Cambridge: The University Press

- S2CID 119326088

- ^ O'Connor, John J.; Robertson, Edmund F., "Jost Bürgi (1552 – 1632)", MacTutor History of Mathematics Archive, University of St Andrews

- ^ William Gardner (1742) Tables of Logarithms

- JSTOR 3026878

- ISBN 978-0-387-92153-2

- American Mathematical Monthly 20: 5, 35, 75, 107, 148, 173, 205.

- ^ Stillwell, J. (2010), Mathematics and Its History (3rd ed.), Springer

- ^ Bryant, Walter W. (1907), A History of Astronomy, London: Methuen & Co, p. 44

- ISBN 978-0-486-61272-0, section 4.7., p. 89

- ISBN 978-0-19-850841-0, section 2

- ISBN 978-0-07-145227-4, p. 264

- ISBN 978-0-691-14134-3

- ISBN 978-1-58488-449-1, or see the references in function

- ^ MR 1476913, section III.3

- ^ a b Lang 1997, section IV.2

- ^ Dieudonné, Jean (1969), Foundations of Modern Analysis, vol. 1, Academic Press, p. 84 item (4.3.1)

- ^ "Calculation of d/dx(Log(b,x))", Wolfram Alpha, Wolfram Research, retrieved 15 March 2011

- ISBN 978-0-486-40453-0, p. 386

- ^ "Calculation of Integrate(ln(x))", Wolfram Alpha, Wolfram Research, retrieved 15 March 2011

- ^ Abramowitz & Stegun, eds. 1972, p. 69

- MR 1009558, section III.6

- ISBN 978-0-691-09983-5, sections 11.5 and 13.8

- ISBN 978-0-8218-0445-2

- ISBN 978-0-521-20461-3, p. 10

- ISBN 978-0-8176-4372-0, sections 4.2.2 (p. 72) and 5.5.2 (p. 95)

- ^ Hart; Cheney; Lawson; et al. (1968), Computer Approximations, SIAM Series in Applied Mathematics, New York: John Wiley, section 6.3, pp. 105–11

- ISSN 1350-2387, section 1 for an overview

- S2CID 19387286

- ^ Kahan, W. (20 May 2001), Pseudo-Division Algorithms for Floating-Point Logarithms and Exponentials

- ^ a b Abramowitz & Stegun, eds. 1972, p. 68

- ^ Sasaki, T.; Kanada, Y. (1982), "Practically fast multiple-precision evaluation of log(x)", Journal of Information Processing, 5 (4): 247–50, retrieved 30 March 2011

- ISBN 978-3-540-65691-3

- doi:10.1063/1.881196

- ^ Maor 2009, p. 135

- ISBN 978-0-596-10164-0, chapter 6, section 64

- ISBN 978-0-7190-2671-3, p. 21, section 1.3.2

- ISBN 978-0-387-30446-5, section 23.0.2

- ISBN 978-0-470-31983-3

- ISBN 978-0-89871-384-8

- ISBN 978-0-547-15669-9, section 4.4.

- ISBN 978-0-521-53551-9, section 8.3, p. 231

- PMID 10637613.

- ISBN 978-0-9678550-9-7

- ISBN 978-0-7506-4992-6, section 34

- ISBN 978-1-4129-4081-8, pp. 355–56

- ISBN 978-0-415-04406-6, p. 48

- OCLC 219156, p. 61

- PMID 1402698, retrieved 30 March 2011

- OCLC 33860167

- ISBN 978-0-470-01619-0, lemmas Psychophysics and Perception: Overview

- ^

Siegler, Robert S.; Opfer, John E. (2003), "The Development of Numerical Estimation. Evidence for Multiple Representations of Numerical Quantity" (PDF), Psychological Science, 14 (3): 237–43, S2CID 9583202, archived from the original (PDF) on 17 May 2011, retrieved 7 January 2011

- PMID 18511690

- ISBN 978-0-89871-296-4, section 12.9

- OCLC 301100935

- ^

Jean Mathieu and Julian Scott (2000), An introduction to turbulent flow, Cambridge University Press, p. 50, ISBN 978-0-521-77538-0

- ISBN 978-0-387-95234-5, section 11.3

- ISBN 978-0-8218-3919-5, section 2.1

- ^ Durtschi, Cindy; Hillison, William; Pacini, Carl (2004), "The Effective Use of Benford's Law in Detecting Fraud in Accounting Data" (PDF), Journal of Forensic Accounting, V: 17–34, archived from the original (PDF) on 29 August 2017, retrieved 28 May 2018

- ISBN 978-3-540-21045-0, pp. 1–2

- ISBN 978-0-321-11784-7, p. 143

- ISBN 978-0-201-89685-5, section 6.2.1, pp. 409–26

- ^ Donald Knuth 1998, section 5.2.4, pp. 158–68

- ISBN 978-3-540-21045-0

- ISBN 978-3-540-58016-4, chapter 19, p. 298

- ISBN 978-0-674-63976-8, section III.I

- ISBN 978-981-283-881-0, section 1.9

- ISBN 978-3-11-019092-2

- ISBN 978-0-8218-4873-9, chapter 5

- OCLC 492669517, theorem 4.1

- ^ P. T. Bateman & Diamond 2004, Theorem 8.15

- ISBN 978-0-412-35370-3, chapter 4

- ISBN 978-81-87504-86-3, Definition 1.6.3

- ISBN 978-0-8218-4399-4, section 5.9

- ISBN 978-981-02-0246-0, section 1.2

- ISBN 978-1-86094-642-4, theorem 6.1.

- ISBN 978-0-89871-646-7, chapter 11.

- Zbl 0956.11021, section II.5.

- ISBN 978-3-642-03595-1

- ISBN 978-1-58488-508-5

- ISBN 978-0-521-39231-0

- S2CID 29028411, archived from the original (PDF) on 14 December 2010, retrieved 13 February 2011

- ISBN 978-0-471-68182-3, p. 357

- MR 1726872, section V.4.1

- ISBN 978-0-521-34535-4, section 1.4

- MR 1193913, section 2

- MR 2723248.

External links

- Media related to Logarithm at Wikimedia Commons

- The dictionary definition of logarithm at Wiktionary

- A lesson on logarithms can be found on Wikiversity

- Weisstein, Eric W., "Logarithm", MathWorld

- Khan Academy: Logarithms, free online micro lectures

- "Logarithmic function", Encyclopedia of Mathematics, EMS Press, 2001 [1994]

- Colin Byfleet, Educational video on logarithms, retrieved 12 October 2010

- Edward Wright, Translation of Napier's work on logarithms, archived from the original on 3 December 2002, retrieved 12 October 2010

- Glaisher, James Whitbread Lee (1911), in Chisholm, Hugh (ed.), Encyclopædia Britannica, vol. 16 (11th ed.), Cambridge University Press, pp. 868–77

