Outlier

In

Outliers can occur by chance in any distribution, but they can indicate novel behaviour or structures in the data-set,

In most larger samplings of data, some data points will be further away from the

Outliers, being the most extreme observations, may include the

Naive interpretation of statistics derived from data sets that include outliers may be misleading. For example, if one is calculating the

Estimators capable of coping with outliers are said to be robust: the median is a robust statistic of central tendency, while the mean is not.[5] However, the mean is generally a more precise estimator.[6]
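The difference in robustness can be seen on a small sample. The sketch below uses hypothetical readings (not taken from any cited source) in which the last value is a gross outlier, and compares how far the mean and the median move when it is included.

```python
from statistics import mean, median

# Hypothetical measurements; the last value is a gross outlier.
readings = [20.1, 19.8, 20.3, 20.0, 19.9, 20.2, 200.0]
clean = readings[:-1]

# Without the outlier, the two estimates of central tendency agree closely.
print(mean(clean), median(clean))

# With the outlier, the mean is dragged far upward; the median barely moves.
print(mean(readings), median(readings))
```

A single extreme value is enough to more than double the mean here, while the median shifts by only a fraction of a unit.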

Occurrence and causes

In the case of

In general, if the nature of the population distribution is known a priori, it is possible to test whether the number of outliers deviates significantly from what can be expected: for a given cutoff (so that samples fall beyond the cutoff with probability p) of a given distribution, the number of outliers will follow a binomial distribution with parameter p, which can generally be well approximated by the Poisson distribution with λ = pn. Thus if one takes a normal distribution with a cutoff of 3 standard deviations from the mean, p is approximately 0.3%, and so for 1000 trials one can approximate the number of samples whose deviation exceeds 3 sigmas by a Poisson distribution with λ = 3.
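The approximation can be checked numerically. The following sketch computes the two-sided tail probability of a normal distribution beyond 3 standard deviations and compares the exact binomial probabilities for the outlier count with their Poisson approximation. The function names are illustrative only.

```python
import math

def normal_tail_prob(k):
    """P(|Z| > k) for a standard normal variable Z."""
    return math.erfc(k / math.sqrt(2))

def binomial_pmf(j, n, p):
    """Probability of exactly j outliers among n independent samples."""
    return math.comb(n, j) * p**j * (1 - p) ** (n - j)

def poisson_pmf(j, lam):
    """Poisson probability of exactly j events with rate lam."""
    return math.exp(-lam) * lam**j / math.factorial(j)

n = 1000
p = normal_tail_prob(3)   # about 0.0027, which the text rounds to 0.3%
lam = n * p               # about 2.7, which the text rounds to 3

# Compare the two distributions for small outlier counts.
for j in range(7):
    print(j, round(binomial_pmf(j, n, p), 4), round(poisson_pmf(j, lam), 4))
```

An observed count far out in the tail of this distribution would suggest that the sample contains more outliers than the assumed normal model predicts.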

Causes

Outliers can have many anomalous causes. A physical apparatus for taking measurements may have suffered a transient malfunction. There may have been an error in data transmission or transcription. Outliers arise due to changes in system behaviour, fraudulent behaviour, human error, instrument error or simply through natural deviations in populations. A sample may have been contaminated with elements from outside the population being examined. Alternatively, an outlier could be the result of a flaw in the assumed theory, calling for further investigation by the researcher. Additionally, the pathological appearance of outliers of a certain form appears in a variety of datasets, indicating that the causative mechanism for the data might differ at the extreme end (King effect).

Definitions and detection

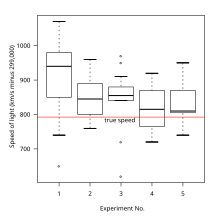

There is no rigid mathematical definition of what constitutes an outlier; determining whether or not an observation is an outlier is ultimately a subjective exercise.[8] There are various methods of outlier detection, some of which are treated as synonymous with novelty detection.[9][10][11][12][13] Some are graphical, such as normal probability plots. Others are model-based. Box plots are a hybrid.

Model-based methods which are commonly used for identification assume that the data are from a normal distribution, and identify observations which are deemed "unlikely" based on mean and standard deviation:

- Chauvenet's criterion

- Grubbs's test for outliers

- Dixon's Q test

- ASTM E178: Standard Practice for Dealing With Outlying Observations[14]

- Mahalanobis distance and leverage are often used to detect outliers, especially in the development of linear regression models.

- Subspace and correlation based techniques for high-dimensional numerical data[13]
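As one illustration of the normal-based methods listed above, Chauvenet's criterion can be sketched in a few lines. In its commonly stated form, an observation is flagged when the expected number of observations at least as extreme, under a normal model fitted to the sample, is below one half. The sketch assumes the sample standard deviation is used and applies the criterion once, without iterating; the data are hypothetical.

```python
import math
from statistics import mean, stdev

def chauvenet_flags(values):
    """Return one boolean per value: True if Chauvenet's criterion flags it."""
    n = len(values)
    m, s = mean(values), stdev(values)  # assumption: sample standard deviation
    flags = []
    for v in values:
        z = abs(v - m) / s
        # Two-sided normal tail probability of a deviation at least this large.
        tail = math.erfc(z / math.sqrt(2))
        # Flag when fewer than 0.5 such observations are expected among n draws.
        flags.append(n * tail < 0.5)
    return flags

# Hypothetical measurements; the last value is suspicious.
data = [9.8, 10.1, 10.0, 9.9, 10.2, 10.0, 9.7, 10.3, 10.1, 13.5]
print(chauvenet_flags(data))
```

Because the mean and standard deviation are themselves inflated by the suspect value, criteria of this kind can mask outliers in small samples, which is one reason the robust alternatives exist.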

Peirce's criterion

It is proposed to determine in a series of observations the limit of error, beyond which all observations involving so great an error may be rejected, provided there are as many as n such observations. The principle upon which it is proposed to solve this problem is, that the proposed observations should be rejected when the probability of the system of errors obtained by retaining them is less than that of the system of errors obtained by their rejection multiplied by the probability of making so many, and no more, abnormal observations. (Quoted in the editorial note on page 516 to Peirce (1982 edition) from A Manual of Astronomy 2:558 by Chauvenet.) [15][16][17][18]

Tukey's fences

Other methods flag observations based on measures such as the interquartile range. For example, if $Q_1$ and $Q_3$ are the lower and upper quartiles respectively, then one could define an outlier to be any observation outside the range:

$$\big[\,Q_1 - k(Q_3 - Q_1),\; Q_3 + k(Q_3 - Q_1)\,\big]$$

for some nonnegative constant $k$. John Tukey proposed this test, where $k = 1.5$ indicates an "outlier", and $k = 3$ indicates data that is "far out".[19]
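A minimal sketch of Tukey's fences follows, with the lower and upper quartiles written as `q1` and `q3` and the multiplier as `k`. Quartile conventions differ between software packages; the "inclusive" method of Python's `statistics.quantiles` is an assumption made here, and other conventions can give slightly different fences. The data are hypothetical.

```python
from statistics import quantiles

def tukey_fences(values, k=1.5):
    """Return (lower, upper) fences: q1 - k*IQR and q3 + k*IQR."""
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    return q1 - k * iqr, q3 + k * iqr

def outside_fences(values, k=1.5):
    """Return the observations that fall outside the fences."""
    lower, upper = tukey_fences(values, k)
    return [v for v in values if v < lower or v > upper]

data = [2, 3, 4, 5, 6, 7, 8, 9, 30]
print(tukey_fences(data))          # fences with k = 1.5
print(outside_fences(data))        # "outliers"
print(outside_fences(data, k=3))   # "far out" points
```

Since the fences depend only on quartiles, a single extreme value has little effect on where they fall, unlike limits based on the mean and standard deviation.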

In anomaly detection

In various domains such as, but not limited to,

Modified Thompson Tau test

The modified Thompson Tau test[citation needed] is a method used to determine whether an outlier exists in a data set. The strength of this method lies in the fact that it takes into account a data set's standard deviation and average and provides a statistically determined rejection zone, thus giving an objective method for deciding whether a data point is an outlier.[citation needed][24] It works as follows. First, the data set's average is determined. Next, the absolute deviation between each data point and the average is determined. Thirdly, a rejection region is determined using the formula:

$$\text{Rejection Region} = \frac{t_{\alpha/2}\,(n-1)}{\sqrt{n}\,\sqrt{n-2+t_{\alpha/2}^{2}}}$$

where $t_{\alpha/2}$ is the critical value from the Student t distribution with n-2 degrees of freedom, n is the sample size, and s is the sample standard deviation. To determine if a value is an outlier, calculate $\delta = |(X - \operatorname{mean}(X))/s|$. If δ > Rejection Region, the data point is an outlier. If δ ≤ Rejection Region, the data point is not an outlier.

The modified Thompson Tau test is used to find one outlier at a time (the point with the largest value of δ is removed if it is an outlier). That is, if a data point is found to be an outlier, it is removed from the data set and the test is applied again with a new average and rejection region. This process is continued until no outliers remain in the data set.
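The iterative procedure can be sketched as follows. The rejection region is taken as t(n−1) / (√n · √(n−2+t²)), where t is the two-sided Student t critical value with n−2 degrees of freedom. The small table `T_CRIT_05` of 5% critical values is included only to keep the sketch self-contained (it limits the sample to at most 12 points); in practice a statistics library would supply these values. The function names and data are hypothetical.

```python
import math
from statistics import mean, stdev

# Two-sided 5% critical values of Student's t, keyed by degrees of freedom.
# Illustrative table only; it covers samples of at most 12 points.
T_CRIT_05 = {1: 12.706, 2: 4.303, 3: 3.182, 4: 2.776, 5: 2.571,
             6: 2.447, 7: 2.365, 8: 2.306, 9: 2.262, 10: 2.228}

def rejection_region(n, t):
    """Modified Thompson tau threshold for sample size n and critical value t."""
    return t * (n - 1) / (math.sqrt(n) * math.sqrt(n - 2 + t * t))

def thompson_tau_filter(values, t_table=T_CRIT_05):
    """Remove one outlier at a time until none remain; return (kept, removed)."""
    kept, removed = list(values), []
    while len(kept) >= 3:                      # need n - 2 >= 1 degrees of freedom
        n = len(kept)
        m, s = mean(kept), stdev(kept)
        if s == 0:
            break                              # all values identical
        # Only the point with the largest deviation is tested in each pass.
        candidate = max(kept, key=lambda v: abs(v - m))
        delta = abs(candidate - m) / s
        if delta <= rejection_region(n, t_table[n - 2]):
            break                              # no outlier left
        kept.remove(candidate)
        removed.append(candidate)
    return kept, removed

kept, removed = thompson_tau_filter([10.0, 10.2, 9.9, 10.1, 10.0, 15.0])
print(kept, removed)
```

Recomputing the mean and standard deviation after each removal matters: an extreme point inflates both, so the threshold for the remaining points changes once it is gone.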

Some work has also examined outliers for nominal (or categorical) data. In the context of a set of examples (or instances) in a data set, instance hardness measures the probability that an instance will be misclassified ($1 - p(y \mid x)$, where y is the assigned class label and x represents the input attribute values for an instance in the training set t).[25] Ideally, instance hardness would be calculated by summing over the set of all possible hypotheses H:

$$IH(\langle x, y\rangle) = \sum_{h \in H} \big(1 - p(y \mid x, h)\big)\,p(h \mid t) = 1 - \sum_{h \in H} p(y \mid x, h)\,p(h \mid t)$$

Practically, this formulation is unfeasible as H is potentially infinite and $p(h \mid t)$ is unknown for many algorithms. Thus, instance hardness can be approximated using a diverse subset $L \subset H$:

$$IH_L(\langle x, y\rangle) = 1 - \frac{1}{|L|} \sum_{j=1}^{|L|} p\big(y \mid x, g_j(t, \alpha)\big)$$

where $g_j(t, \alpha)$ is the hypothesis induced by learning algorithm $g_j$ trained on training set t with hyperparameters $\alpha$. Instance hardness provides a continuous value for determining if an instance is an outlier instance.
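The approximation amounts to one minus the average probability that a set of trained models assigns to the instance's labelled class. The sketch below assumes those per-model probabilities have already been obtained; the function name and the numbers are hypothetical.

```python
def instance_hardness(probabilities):
    """Approximate instance hardness from per-model probabilities of the true label.

    `probabilities` holds, for one instance, the probability that each trained
    model assigns to the instance's assigned class label.
    """
    if not probabilities:
        raise ValueError("need at least one model")
    return 1 - sum(probabilities) / len(probabilities)

# Hypothetical outputs of four different learning algorithms for two instances.
easy = instance_hardness([0.95, 0.90, 0.99, 0.92])  # most models agree with the label
hard = instance_hardness([0.10, 0.30, 0.05, 0.20])  # most models disagree with it
print(easy, hard)
```

Values near 1 mark instances that a diverse set of models consistently misclassifies, which makes them candidates for being outliers or mislabelled.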

Working with outliers

The choice of how to deal with an outlier should depend on the cause. Some estimators are highly sensitive to outliers, notably estimation of covariance matrices.

Retention

Even when a normal distribution model is appropriate to the data being analyzed, outliers are expected for large sample sizes and should not automatically be discarded if that is the case.[26] Instead, one should use a method that is robust to outliers to model or analyze data with naturally occurring outliers.[26]

Exclusion

When deciding whether to remove an outlier, the cause has to be considered. As mentioned earlier, if the outlier's origin can be attributed to an experimental error, or if it can be otherwise determined that the outlying data point is erroneous, it is generally recommended to remove it.[26][27] However, it is more desirable to correct the erroneous value, if possible.

Removing a data point solely because it is an outlier, on the other hand, is a controversial practice, often frowned upon by many scientists and science instructors, as it typically invalidates statistical results.[26][27] While mathematical criteria provide an objective and quantitative method for data rejection, they do not make the practice more scientifically or methodologically sound, especially in small sets or where a normal distribution cannot be assumed. Rejection of outliers is more acceptable in areas of practice where the underlying model of the process being measured and the usual distribution of measurement error are confidently known.

The two common approaches to exclude outliers are

In regression problems, an alternative approach may be to only exclude points which exhibit a large degree of influence on the estimated coefficients, using a measure such as Cook's distance.[30]
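For a straight-line fit, Cook's distance has a closed form that needs only the residual and leverage of each point. The sketch below assumes ordinary least squares with an intercept and a single predictor (so p = 2 fitted coefficients); the function name and data are hypothetical.

```python
from statistics import mean

def cooks_distances(xs, ys):
    """Cook's distance of each point for the least-squares line y = a + b*x."""
    n, p = len(xs), 2                          # p = number of fitted coefficients
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    b = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sxx
    a = y_bar - b * x_bar
    residuals = [y - (a + b * x) for x, y in zip(xs, ys)]
    s2 = sum(e * e for e in residuals) / (n - p)   # residual variance estimate
    distances = []
    for x, e in zip(xs, residuals):
        h = 1 / n + (x - x_bar) ** 2 / sxx         # leverage of this point
        distances.append(e * e / (p * s2) * h / (1 - h) ** 2)
    return distances

xs = [1, 2, 3, 4, 5, 6, 7, 8]
ys = [2.1, 3.9, 6.2, 8.0, 9.8, 12.1, 14.0, 30.0]   # last point lies off the line
d = cooks_distances(xs, ys)
print([round(v, 3) for v in d])
```

The measure combines a large residual with high leverage, so a point at the edge of the x range that pulls the line toward itself scores much higher than an equally deviant point near the centre.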

If a data point (or points) is excluded from the data analysis, this should be clearly stated on any subsequent report.

Non-normal distributions

The possibility should be considered that the underlying distribution of the data is not approximately normal, having "

Set-membership uncertainties

A set membership approach considers that the uncertainty corresponding to the ith measurement of an unknown random vector x is represented by a set Xi (instead of a probability density function). If no outliers occur, x should belong to the intersection of all Xi's. When outliers occur, this intersection could be empty, and we should relax a small number of the sets Xi (as small as possible) in order to avoid any inconsistency.[32] This can be done using the notion of q-relaxed intersection. As illustrated by the figure, the q-relaxed intersection corresponds to the set of all x which belong to all sets except q of them. Sets Xi that do not intersect the q-relaxed intersection could be suspected to be outliers.
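In one dimension, where each set is a closed interval, the q-relaxed intersection can be computed with a sweep over the interval endpoints. The sketch below is limited to that one-dimensional case; the function names and the example intervals are hypothetical.

```python
def q_relaxed_intersection(intervals, q):
    """Points lying in all but at most q of the closed intervals (lo, hi)."""
    need = len(intervals) - q
    if need <= 0:
        raise ValueError("q must be smaller than the number of intervals")
    # Each interval opens (+1) at lo and closes (-1) at hi. Opens sort before
    # closes at equal coordinates so touching closed intervals count as overlapping.
    events = sorted([(lo, 0, +1) for lo, hi in intervals] +
                    [(hi, 1, -1) for lo, hi in intervals])
    result, depth, start = [], 0, None
    for point, _, step in events:
        previous = depth
        depth += step
        if previous < need <= depth:
            start = point                      # coverage just reached `need`
        elif depth < need <= previous:
            result.append((start, point))      # coverage just dropped below `need`
    return result

def suspected_outliers(intervals, relaxed):
    """Intervals that do not intersect any part of the relaxed intersection."""
    return [(lo, hi) for lo, hi in intervals
            if not any(lo <= b and a <= hi for a, b in relaxed)]

measurements = [(0, 5), (1, 6), (2, 7), (10, 12)]
print(q_relaxed_intersection(measurements, 0))   # plain intersection is empty
relaxed = q_relaxed_intersection(measurements, 1)
print(relaxed, suspected_outliers(measurements, relaxed))
```

With q = 0 the four intervals have no common point; relaxing a single set restores a non-empty result and singles out the interval that is inconsistent with the others.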

Alternative models

In cases where the cause of the outliers is known, it may be possible to incorporate this effect into the model structure, for example by using a

See also

- Anomaly (natural sciences)

- Novelty detection

- Anscombe's quartet

- Data transformation (statistics)

- Extreme value theory

- Influential observation

- Random sample consensus

- Robust regression

- Studentized residual

- Winsorizing

References

- .

An outlying observation, or "outlier," is one that appears to deviate markedly from other members of the sample in which it occurs.

- ISBN 978-0-02-374545-4.

An outlier is an observation that is far removed from the rest of the observations.

- Pimentel, M. A., Clifton, D. A., Clifton, L., & Tarassenko, L. (2014). A review of novelty detection. Signal Processing, 99, 215-249.

- Grubbs 1969, p. 1 stating "An outlying observation may be merely an extreme manifestation of the random variability inherent in the data. ... On the other hand, an outlying observation may be the result of gross deviation from prescribed experimental procedure or an error in calculating or recording the numerical value."

- Ripley, Brian D. 2004. Robust statistics.

- Chandan Mukherjee, Howard White, Marc Wuyts, 1998, "Econometrics and Data Analysis for Developing Countries Vol. 1"

- ISBN 978-3-540-26256-5.

- S2CID 53305944. Archived from the original (PDF) on 2021-11-14. Retrieved 2019-12-11.

- Pimentel, M. A., Clifton, D. A., Clifton, L., & Tarassenko, L. (2014). A review of novelty detection. Signal Processing, 99, 215-249.

- Rousseeuw, P; Leroy, A. (1996), Robust Regression and Outlier Detection (3rd ed.), John Wiley & Sons

- S2CID 3330313

- ISBN 978-0-471-93094-5

- S2CID 6724536.

- E178: Standard Practice for Dealing With Outlying Observations

- Benjamin Peirce, "Criterion for the Rejection of Doubtful Observations", Astronomical Journal II 45 (1852) and Errata to the original paper.

- JSTOR 25138498.

- Peirce, Charles Sanders (1873) [1870]. "Appendix No. 21. On the Theory of Errors of Observation". Report of the Superintendent of the United States Coast Survey Showing the Progress of the Survey During the Year 1870: 200–224. NOAA PDF Eprint (goes to Report p. 200, PDF's p. 215).

- ISBN 978-0-253-37201-7. – Appendix 21, according to the editorial note on page 515

- OCLC 3058187.

- S2CID 11707259.

- ISBN 1581132174.

- ISBN 1-58113-217-4.

- S2CID 19036098.

- Thompson, R. (1985). "A Note on Restricted Maximum Likelihood Estimation with an Alternative Outlier Model". Journal of the Royal Statistical Society. Series B (Methodological), Vol. 47, No. 1, pp. 53-55

- Smith, M.R.; Martinez, T.; Giraud-Carrier, C. (2014). "An Instance Level Analysis of Data Complexity". Machine Learning, 95(2): 225-256.

- S2CID 258376426.

- PMID 24773354.

- ISBN 9780202365350.

- .

- Cook, R. Dennis (Feb 1977). "Detection of Influential Observations in Linear Regression". Technometrics (American Statistical Association) 19 (1): 15–18.

- Weisstein, Eric W. Cauchy Distribution. From MathWorld, a Wolfram Web Resource

- S2CID 16500768.

- Roberts, S. and Tarassenko, L.: 1995, A probabilistic resource allocating network for novelty detection. Neural Computation 6, 270–284.

- .

External links

- Renze, John. "Outlier". MathWorld.

- Balakrishnan, N.; Childs, A. (2001) [1994], "Outlier", Encyclopedia of Mathematics, EMS Press

- Grubbs test described by NIST manual
