Reward system

The reward system (the mesocorticolimbic circuit) is a group of neural structures responsible for

The reward system motivates animals to approach stimuli or engage in behavior that increases fitness (sex, energy-dense foods, etc.). Survival for most animal species depends upon maximizing contact with beneficial stimuli and minimizing contact with harmful stimuli. Reward cognition serves to increase the likelihood of survival and reproduction by causing associative learning, eliciting approach and consummatory behavior, and triggering positively-valenced emotions.[3] Thus, reward is a mechanism that evolved to help increase the adaptive fitness of animals.[8] In drug addiction, certain substances over-activate the reward circuit, leading to compulsive substance-seeking behavior resulting from synaptic plasticity in the circuit.[9]

Primary rewards are a class of rewarding stimuli which facilitate the

Definition

In neuroscience, the reward system is a collection of brain structures and neural pathways that are responsible for reward-related cognition, including

Terms that are commonly used to describe behavior related to the "wanting" or desire component of reward include appetitive behavior, approach behavior, preparatory behavior, instrumental behavior, anticipatory behavior, and seeking.[12] Terms that are commonly used to describe behavior related to the "liking" or pleasure component of reward include consummatory behavior and taking behavior.[12]

The three primary functions of rewards are their capacity to:

- produce operant reinforcement;[3]

- affect decision-making and induce approach behavior (via the assignment of motivational salience to rewarding stimuli);[3]

- elicit positively-valenced emotions, particularly pleasure.[3]

Neuroanatomy

Overview

The brain structures that compose the reward system are located primarily within the

Most of the

Two theories exist with regard to the activity of the nucleus accumbens and the generation of liking and wanting. The inhibition (or hyperpolarization) hypothesis proposes that the nucleus accumbens exerts tonic inhibitory effects on downstream structures such as the ventral pallidum, hypothalamus, or ventral tegmental area, and that inhibiting medium spiny neurons (MSNs) in the nucleus accumbens (NAcc) excites these structures, "releasing" reward-related behavior. While GABA receptor agonists are capable of eliciting both "liking" and "wanting" reactions in the nucleus accumbens, glutamatergic inputs from the basolateral amygdala, ventral hippocampus, and medial prefrontal cortex can drive incentive salience. Furthermore, while most studies find that NAcc neurons reduce firing in response to reward, a number of studies find the opposite response. This has led to the proposal of the disinhibition (or depolarization) hypothesis, which proposes that excitation of NAcc neurons, or at least certain subsets of them, drives reward-related behavior.[2][27][28]

After nearly 50 years of research on brain-stimulation reward, experts have certified that dozens of sites in the brain will maintain

Key pathway

Ventral tegmental area

- The ventral tegmental area (VTA) is important in responding to stimuli and cues that indicate a reward is present. Rewarding stimuli (and all addictive drugs) act on the circuit by triggering the VTA to release dopamine signals to the nucleus accumbens, either directly or indirectly.[citation needed] The VTA has two important pathways: the mesolimbic pathway, which projects to limbic (striatal) regions and underpins motivational behaviors and processes, and the mesocortical pathway, which projects to the prefrontal cortex and underpins cognitive functions such as learning external cues.[31]

- Dopaminergic neurons in this region convert the amino acid tyrosine into DOPA using the enzyme tyrosine hydroxylase; DOPA is then converted to dopamine by the enzyme DOPA decarboxylase.[32]

Striatum (Nucleus Accumbens)

- The striatum is broadly involved in acquiring and eliciting learned behaviors in response to a rewarding cue. The VTA projects to the striatum and activates the GABAergic medium spiny neurons via D1 and D2 receptors within the ventral striatum (nucleus accumbens) and dorsal striatum.[33]

- The ventral striatum (the nucleus accumbens, NAc) is broadly involved in acquiring behavior when it receives input from the VTA, and in eliciting behavior when it receives input from the prefrontal cortex (PFC). The NAc shell projects to the pallidum and the VTA, regulating limbic and autonomic functions. This modulates the reinforcing properties of stimuli and the short-term aspects of reward. The NAc core projects to the substantia nigra and is involved in the development and expression of reward-seeking behaviors. It is involved in spatial learning, conditional response, and impulsive choice: the long-term elements of reward.[31]

- The dorsal striatum (DS) is involved in learning: the dorsomedial striatum in goal-directed learning, and the dorsolateral striatum in stimulus-response learning foundational to Pavlovian response.[34] On repeated activation by a stimulus, the nucleus accumbens can activate the dorsal striatum via an intrastriatal loop. The transfer of signals from the NAc to the DS allows reward-associated cues to activate the DS without the reward itself being present. This can activate cravings and reward-seeking behaviors (and is responsible for triggering relapse during abstinence in addiction).[35]

Prefrontal Cortex

- The VTA dopaminergic neurons project to the PFC, activating glutamatergic neurons that project to multiple other regions, including the dorsal striatum and NAc, ultimately allowing the PFC to mediate salience and conditional behaviors in response to stimuli.[35]

- Notably, abstinence from addictive drugs activates the PFC's glutamatergic projection to the NAc, which leads to strong cravings and modulates the reinstatement of addiction behaviors resulting from abstinence. The PFC also interacts with the VTA through the mesocortical pathway and helps associate environmental cues with the reward.[35]

Hippocampus

- The hippocampus has multiple functions, including the creation and storage of memories. In the reward circuit, it serves to store contextual memories and associated cues. It ultimately underpins the reinstatement of reward-seeking behaviors via cues and contextual triggers.[36]

Amygdala

- The AMY receives input from the VTA, and outputs to the NAc. The amygdala is important in creating powerful emotional

Pleasure centers

The reward system contains pleasure centers or hedonic hotspots, i.e., brain structures that mediate pleasure or "liking" reactions from intrinsic rewards. As of October 2017, hedonic hotspots have been identified in subcompartments within the

Hedonic hotspots are functionally linked, in that activation of one hotspot results in the recruitment of the others, as indexed by the

Wanting and liking

Activation of the dorsorostral region of the nucleus accumbens correlates with increases in wanting without concurrent increases in liking.[43] However, dopaminergic neurotransmission into the nucleus accumbens shell is responsible not only for appetitive motivational salience (i.e., incentive salience) towards rewarding stimuli, but also for aversive motivational salience, which directs behavior away from undesirable stimuli.[12][44][45] In the dorsal striatum, activation of D1-expressing MSNs produces appetitive incentive salience, while activation of D2-expressing MSNs produces aversion. In the NAcc, such a dichotomy is not as clear-cut, and activation of both D1 and D2 MSNs is sufficient to enhance motivation,[46][47] likely by inhibiting the ventral pallidum and thereby disinhibiting the VTA.[48][49]

Robinson and Berridge's 1993 incentive-sensitization theory proposed that reward contains separable psychological components: wanting (incentive) and liking (pleasure). To explain increasing contact with a certain stimulus such as chocolate, there are two separable factors at work: our desire to have the chocolate (wanting) and the pleasure effect of the chocolate (liking). According to Robinson and Berridge, wanting and liking are two aspects of the same process, so rewards are usually wanted and liked to the same degree. However, wanting and liking can also change independently under certain circumstances. For example, rats that stop eating after dopamine depletion (experiencing a loss of desire for food) act as though they still like food. In another example, activated self-stimulation electrodes in the lateral hypothalamus of rats increase appetite, but also cause more aversive reactions to tastes such as sugar and salt; apparently, the stimulation increases wanting but not liking. Such results demonstrate that the reward system of rats includes independent processes of wanting and liking. The wanting component is thought to be controlled by dopaminergic pathways, whereas the liking component is thought to be controlled by opioid-GABA-endocannabinoid systems.[8]

Anti-reward system

Koob and Le Moal proposed that there exists a separate circuit responsible for the attenuation of reward-pursuing behavior, which they termed the anti-reward circuit. This circuit acts as a brake on the reward circuit, thus preventing the overpursuit of food, sex, etc. It involves multiple parts of the amygdala (the bed nucleus of the stria terminalis and the central nucleus), the nucleus accumbens, and signaling molecules including norepinephrine, corticotropin-releasing factor, and dynorphin.[50] This circuit is also hypothesized to mediate the unpleasant components of stress, and is thus thought to be involved in addiction and withdrawal. While the reward circuit mediates the initial positive reinforcement involved in the development of addiction, it is the anti-reward circuit that later dominates, via negative reinforcement that motivates the pursuit of the rewarding stimuli.[51]

Learning

Rewarding stimuli can drive

Distinct neural systems are responsible for learning associations between stimuli and outcomes, actions and outcomes, and stimuli and responses. Although classical conditioning is not limited to the reward system, the enhancement of instrumental performance by stimuli (i.e., Pavlovian-instrumental transfer) requires the nucleus accumbens. Habitual and goal-directed instrumental learning depend upon the lateral striatum and the medial striatum, respectively.[52]

During instrumental learning, opposing changes in the ratio of

Disorders

Addiction

The

Motivation

Dysfunctional motivational salience appears in a number of psychiatric symptoms and disorders.

Neuroimaging studies across diagnoses associated with anhedonia have reported reduced activity in the OFC and ventral striatum.[76] One meta-analysis reported that anhedonia was associated with reduced neural response to reward anticipation in the caudate nucleus, putamen, nucleus accumbens, and medial prefrontal cortex (mPFC).[77]

Mood disorders

Certain types of depression are associated with reduced motivation, as assessed by willingness to expend effort for reward. These abnormalities have been tentatively linked to reduced activity in areas of the striatum, and while dopaminergic abnormalities are hypothesized to play a role, most studies probing dopamine function in depression have reported inconsistent results.[78][79] Although postmortem and neuroimaging studies have found abnormalities in numerous regions of the reward system, few findings are consistently replicated. Some studies have reported reduced NAcc, hippocampus, medial prefrontal cortex (mPFC), and orbitofrontal cortex (OFC) activity, as well as elevated basolateral amygdala and subgenual cingulate cortex (sgACC) activity, during tasks related to reward or positive stimuli. Postmortem research to complement these neuroimaging findings is sparse, but the little that has been done suggests a reduction in excitatory synapses in the mPFC.[80] Reduced activity in the mPFC during reward-related tasks appears to be localized to more dorsal regions (i.e., the pregenual cingulate cortex), while the more ventral sgACC is hyperactive in depression.[81]

Attempts to investigate the underlying neural circuitry in animal models have also yielded conflicting results. Although many paradigms exist, two are commonly used to simulate depression: chronic social defeat stress (CSDS) and chronic mild stress (CMS). CSDS produces reduced preference for sucrose, reduced social interactions, and increased immobility in the forced swim test. CMS similarly reduces sucrose preference and induces behavioral despair, as assessed by tail suspension and forced swim tests. Animals susceptible to CSDS exhibit increased phasic VTA firing, and inhibition of VTA-NAcc projections attenuates behavioral deficits induced by CSDS.[82] However, inhibition of VTA-mPFC projections exacerbates social withdrawal. On the other hand, CMS-associated deficits in sucrose preference and immobility were attenuated and exacerbated by VTA excitation and inhibition, respectively.[83][84] Although these differences may be attributable to different stimulation protocols or poor translational paradigms, the variable results may also reflect the heterogeneous functionality of reward-related regions.[85]

Schizophrenia

Attention deficit hyperactivity disorder

In those with

Impairments of dopaminergic and serotonergic function are said to be key factors in ADHD.[91] These impairments can lead to executive dysfunction such as dysregulation of reward processing and motivational dysfunction, including anhedonia.[92]

History

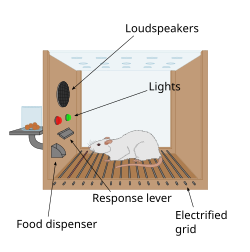

The first clue to the presence of a reward system in the brain came with an accidental discovery by James Olds and Peter Milner in 1954. They discovered that rats would perform behaviors, such as pressing a bar, to administer a brief burst of electrical stimulation to specific sites in their brains. This phenomenon is called

In a fundamental discovery made in 1954, researchers

Ivan Pavlov was a physiologist who used the reward system to study classical conditioning. Pavlov rewarded dogs with food after they had heard a bell or another stimulus, so that the dogs came to associate the food (the reward) with the bell (the stimulus).[97] Edward L. Thorndike used the reward system to study operant conditioning. He began by putting cats in a puzzle box and placing food outside of the box so that the cats wanted to escape. The cats worked to get out of the puzzle box to reach the food. Although the cats ate the food after they escaped the box, Thorndike learned that the cats attempted to escape the box even without the reward of food. Thorndike used the rewards of food and freedom to stimulate the reward system of the cats and to study how they learned to escape the box.[98] More recently, Ivan De Araujo and colleagues used nutrients inside the gut to stimulate the reward system via the vagus nerve.[99]

Other species

Animals quickly learn to press a bar to obtain an injection of opiates directly into the midbrain tegmentum or the nucleus accumbens. The same animals do not work to obtain the opiates if the dopaminergic neurons of the mesolimbic pathway are inactivated. In this perspective, animals, like humans, engage in behaviors that increase dopamine release.

Berridge developed the

See also

References

- ^ PMID 26109341.

- ^ PMID 25950633.

In the prefrontal cortex, recent evidence indicates that the [orbitofrontal cortex] OFC and insula cortex may each contain their own additional hot spots (D.C. Castro et al., Soc. Neurosci., abstract). In specific subregions of each area, either opioid-stimulating or orexin-stimulating microinjections appear to enhance the number of liking reactions elicited by sweetness, similar to the [nucleus accumbens] NAc and [ventral pallidum] VP hot spots. Successful confirmation of hedonic hot spots in the OFC or insula would be important and possibly relevant to the orbitofrontal mid-anterior site mentioned earlier that especially tracks the subjective pleasure of foods in humans (Georgiadis et al., 2012; Kringelbach, 2005; Kringelbach et al., 2003; Small et al., 2001; Veldhuizen et al., 2010). Finally, in the brainstem, a hindbrain site near the parabrachial nucleus of dorsal pons also appears able to contribute to hedonic gains of function (Söderpalm and Berridge, 2000). A brainstem mechanism for pleasure may seem more surprising than forebrain hot spots to anyone who views the brainstem as merely reflexive, but the pontine parabrachial nucleus contributes to taste, pain, and many visceral sensations from the body and has also been suggested to play an important role in motivation (Wu et al., 2012) and in human emotion (especially related to the somatic marker hypothesis) (Damasio, 2010).

- ^ PMID 26109341.

Rewards in operant conditioning are positive reinforcers. ... Operant behavior gives a good definition for rewards. Anything that makes an individual come back for more is a positive reinforcer and therefore a reward. Although it provides a good definition, positive reinforcement is only one of several reward functions. ... Rewards are attractive. They are motivating and make us exert an effort. ... Rewards induce approach behavior, also called appetitive or preparatory behavior, sexual behavior, and consummatory behavior. ... Thus any stimulus, object, event, activity, or situation that has the potential to make us approach and consume it is by definition a reward. ... Rewarding stimuli, objects, events, situations, and activities consist of several major components. First, rewards have basic sensory components (visual, auditory, somatosensory, gustatory, and olfactory) ... Second, rewards are salient and thus elicit attention, which are manifested as orienting responses. The salience of rewards derives from three principal factors, namely, their physical intensity and impact (physical salience), their novelty and surprise (novelty/surprise salience), and their general motivational impact shared with punishers (motivational salience). A separate form not included in this scheme, incentive salience, primarily addresses dopamine function in addiction and refers only to approach behavior (as opposed to learning) ... Third, rewards have a value component that determines the positively motivating effects of rewards and is not contained in, nor explained by, the sensory and attentional components. This component reflects behavioral preferences and thus is subjective and only partially determined by physical parameters. Only this component constitutes what we understand as a reward. 
It mediates the specific behavioral reinforcing, approach generating, and emotional effects of rewards that are crucial for the organism's survival and reproduction, whereas all other components are only supportive of these functions. ... Rewards can also be intrinsic to behavior. They contrast with extrinsic rewards that provide motivation for behavior and constitute the essence of operant behavior in laboratory tests. Intrinsic rewards are activities that are pleasurable on their own and are undertaken for their own sake, without being the means for getting extrinsic rewards. ... Intrinsic rewards are genuine rewards in their own right, as they induce learning, approach, and pleasure, like perfectioning, playing, and enjoying the piano. Although they can serve to condition higher order rewards, they are not conditioned, higher order rewards, as attaining their reward properties does not require pairing with an unconditioned reward. ... These emotions are also called liking (for pleasure) and wanting (for desire) in addiction research and strongly support the learning and approach generating functions of reward.

- PMID 27974615.

- ISBN 9780071481274.

- ^ PMID 24459410.

Despite the importance of numerous psychosocial factors, at its core, drug addiction involves a biological process: the ability of repeated exposure to a drug of abuse to induce changes in a vulnerable brain that drive the compulsive seeking and taking of drugs, and loss of control over drug use, that define a state of addiction. ... A large body of literature has demonstrated that such ΔFosB induction in D1-type [nucleus accumbens] neurons increases an animal's sensitivity to drug as well as natural rewards and promotes drug self-administration, presumably through a process of positive reinforcement ... Another ΔFosB target is cFos: as ΔFosB accumulates with repeated drug exposure it represses c-Fos and contributes to the molecular switch whereby ΔFosB is selectively induced in the chronic drug-treated state.41. ... Moreover, there is increasing evidence that, despite a range of genetic risks for addiction across the population, exposure to sufficiently high doses of a drug for long periods of time can transform someone who has relatively lower genetic loading into an addict.

- PMID 26816013.

Substance-use disorder: A diagnostic term in the fifth edition of the Diagnostic and Statistical Manual of Mental Disorders (DSM-5) referring to recurrent use of alcohol or other drugs that causes clinically and functionally significant impairment, such as health problems, disability, and failure to meet major responsibilities at work, school, or home. Depending on the level of severity, this disorder is classified as mild, moderate, or severe.

Addiction: A term used to indicate the most severe, chronic stage of substance-use disorder, in which there is a substantial loss of self-control, as indicated by compulsive drug taking despite the desire to stop taking the drug. In the DSM-5, the term addiction is synonymous with the classification of severe substance-use disorder.

- ^ ISBN 9780716751694.

- ^ Brain & Behavior Research Foundation (13 March 2019). "The Biology of Addiction". YouTube.

- ^ "Dopamine Involved In Aggression". Medical News Today. 15 January 2008. Archived from the original on 23 September 2010. Retrieved 14 November 2010.

- PMID 28338882.

- ^ PMID 23141060.

- ^ PMID 26116518.

[The striatum] receives dopaminergic inputs from the ventral tegmental area (VTA) and the substantia nigra (SNr) and glutamatergic inputs from several areas, including the cortex, hippocampus, amygdala, and thalamus (Swanson, 1982; Phillipson and Griffiths, 1985; Finch, 1996; Groenewegen et al., 1999; Britt et al., 2012). These glutamatergic inputs make contact on the heads of dendritic spines of the striatal GABAergic medium spiny projection neurons (MSNs) whereas dopaminergic inputs synapse onto the spine neck, allowing for an important and complex interaction between these two inputs in modulation of MSN activity ... It should also be noted that there is a small population of neurons in the [nucleus accumbens] NAc that coexpress both D1 and D2 receptors, though this is largely restricted to the NAc shell (Bertran- Gonzalez et al., 2008). ... Neurons in the NAc core and NAc shell subdivisions also differ functionally. The NAc core is involved in the processing of conditioned stimuli whereas the NAc shell is more important in the processing of unconditioned stimuli; Classically, these two striatal MSN populations are thought to have opposing effects on basal ganglia output. Activation of the dMSNs causes a net excitation of the thalamus resulting in a positive cortical feedback loop; thereby acting as a 'go' signal to initiate behavior. Activation of the iMSNs, however, causes a net inhibition of thalamic activity resulting in a negative cortical feedback loop and therefore serves as a 'brake' to inhibit behavior ... there is also mounting evidence that iMSNs play a role in motivation and addiction (Lobo and Nestler, 2011; Grueter et al., 2013). For example, optogenetic activation of NAc core and shell iMSNs suppressed the development of a cocaine CPP whereas selective ablation of NAc core and shell iMSNs ... enhanced the development and the persistence of an amphetamine CPP (Durieux et al., 2009; Lobo et al., 2010). 
These findings suggest that iMSNs can bidirectionally modulate drug reward. ... Together these data suggest that iMSNs normally act to restrain drug-taking behavior and recruitment of these neurons may in fact be protective against the development of compulsive drug use.

- PMID 24648786.

Regions of the basal ganglia, which include the dorsal and ventral striatum, internal and external segments of the globus pallidus, subthalamic nucleus, and dopaminergic cell bodies in the substantia nigra, are highly implicated not only in fine motor control but also in [prefrontal cortex] PFC function.43 Of these regions, the [nucleus accumbens] NAc (described above) and the [dorsal striatum] DS (described below) are most frequently examined with respect to addiction. Thus, only a brief description of the modulatory role of the basal ganglia in addiction-relevant circuits will be mentioned here. The overall output of the basal ganglia is predominantly via the thalamus, which then projects back to the PFC to form cortico-striatal-thalamo-cortical (CSTC) loops. Three CSTC loops are proposed to modulate executive function, action selection, and behavioral inhibition. In the dorsolateral prefrontal circuit, the basal ganglia primarily modulate the identification and selection of goals, including rewards.44 The [orbitofrontal cortex] OFC circuit modulates decision-making and impulsivity, and the anterior cingulate circuit modulates the assessment of consequences.44 These circuits are modulated by dopaminergic inputs from the [ventral tegmental area] VTA to ultimately guide behaviors relevant to addiction, including the persistence and narrowing of the behavioral repertoire toward drug seeking, and continued drug use despite negative consequences.43–45

- PMID 25454360.

Studies have shown that cravings are underpinned by activation of the reward and motivation circuits (McBride et al., 2006, Wang et al., 2007, Wing et al., 2012, Goldman et al., 2013, Jansen et al., 2013 and Volkow et al., 2013). According to these authors, the main neural structures involved are: the nucleus accumbens, dorsal striatum, orbitofrontal cortex, anterior cingulate cortex, dorsolateral prefrontal cortex (DLPFC), amygdala, hippocampus and insula.

- ^ ISBN 978-0-07-148127-4.

The neural substrates that underlie the perception of reward and the phenomenon of positive reinforcement are a set of interconnected forebrain structures called brain reward pathways; these include the nucleus accumbens (NAc; the major component of the ventral striatum), the basal forebrain (components of which have been termed the extended amygdala, as discussed later in this chapter), hippocampus, hypothalamus, and frontal regions of cerebral cortex. These structures receive rich dopaminergic innervation from the ventral tegmental area (VTA) of the midbrain. Addictive drugs are rewarding and reinforcing because they act in brain reward pathways to enhance either dopamine release or the effects of dopamine in the NAc or related structures, or because they produce effects similar to dopamine. ... A macrostructure postulated to integrate many of the functions of this circuit is described by some investigators as the extended amygdala. The extended amygdala is said to comprise several basal forebrain structures that share similar morphology, immunocytochemical features, and connectivity and that are well suited to mediating aspects of reward function; these include the bed nucleus of the stria terminalis, the central medial amygdala, the shell of the NAc, and the sublenticular substantia innominata.

- PMID 26286655.

- PMID 24851284.

- PMID 26873754.

- S2CID 5089422.

- S2CID 10311562.

- PMID 23578393.

- PMID 23142759.

- PMID 15564581.

- S2CID 33615497.

- ^ PMID 26124708.

- PMID 18675281.

- PMID 2648975.

- S2CID 16547037.

- ^ a b Kokane, S. S., & Perrotti, L. I. (2020). Sex Differences and the Role of Estradiol in Mesolimbic Reward Circuits and Vulnerability to Cocaine and Opiate Addiction. Frontiers in Behavioral Neuroscience, 14.

- ^ Becker, J. B., & Chartoff, E. (2019). Sex differences in neural mechanisms mediating reward and addiction. Neuropsychopharmacology, 44(1), 166-183.

- ^ Stoof, J. C., & Kebabian, J. W. (1984). Two dopamine receptors: biochemistry, physiology and pharmacology. Life sciences, 35(23), 2281-2296.

- ^ Yin, H. H., Knowlton, B. J., & Balleine, B. W. (2005). Blockade of NMDA receptors in the dorsomedial striatum prevents action–outcome learning in instrumental conditioning. European Journal of Neuroscience, 22(2), 505-512.

- ^ a b c Koob, G. F., & Volkow, N. D. (2016). Neurobiology of addiction: a neurocircuitry analysis. The Lancet Psychiatry, 3(8), 760-773.

- ^ Kutlu, M. G., & Gould, T. J. (2016). Effects of drugs of abuse on hippocampal plasticity and hippocampus-dependent learning and memory: contributions to development and maintenance of addiction. Learning & memory, 23(10), 515-533.

- ^ McGaugh, J. L. (July 2004). "The amygdala modulates the consolidation of memories of emotionally arousing experiences". Annual Review of Neuroscience. 27 (1): 1–28.

- ^ Koob, G. F., & Le Moal, M. (2008). Addiction and the brain antireward system. Annual Review of Psychology, 59, 29–53. doi:10.1146/annurev.psych.59.103006.093548. Koob, G. F., Sanna, P. P., & Bloom, F. E. (1998). Neuroscience of addiction. Neuron, 21, 467–476.

- ^ PMID 29073109.

Here, we show that opioid or orexin stimulations in orbitofrontal cortex and insula causally enhance hedonic "liking" reactions to sweetness and find a third cortical site where the same neurochemical stimulations reduce positive hedonic impact.

- PMID 22844850. Archived from the original (PDF) on 29 March 2017. Retrieved 17 January 2017.

So it makes sense that the real pleasure centers in the brain – those directly responsible for generating pleasurable sensations – turn out to lie within some of the structures previously identified as part of the reward circuit. One of these so-called hedonic hotspots lies in a subregion of the nucleus accumbens called the medial shell. A second is found within the ventral pallidum, a deep-seated structure near the base of the forebrain that receives most of its signals from the nucleus accumbens. ...

On the other hand, intense euphoria is harder to come by than everyday pleasures. The reason may be that strong enhancement of pleasure – like the chemically induced pleasure bump we produced in lab animals – seems to require activation of the entire network at once. Defection of any single component dampens the high.

Whether the pleasure circuit – and in particular, the ventral pallidum – works the same way in humans is unclear.

- ^ PMID 22487042.

Here I discuss how mesocorticolimbic mechanisms generate the motivation component of incentive salience. Incentive salience takes Pavlovian learning and memory as one input and as an equally important input takes neurobiological state factors (e.g. drug states, appetite states, satiety states) that can vary independently of learning. Neurobiological state changes can produce unlearned fluctuations or even reversals in the ability of a previously learned reward cue to trigger motivation. Such fluctuations in cue-triggered motivation can dramatically depart from all previously learned values about the associated reward outcome. ... Associative learning and prediction are important contributors to motivation for rewards. Learning gives incentive value to arbitrary cues such as a Pavlovian conditioned stimulus (CS) that is associated with a reward (unconditioned stimulus or UCS). Learned cues for reward are often potent triggers of desires. For example, learned cues can trigger normal appetites in everyone, and can sometimes trigger compulsive urges and relapse in addicts.

Cue-triggered 'wanting' for the UCS

A brief CS encounter (or brief UCS encounter) often primes a pulse of elevated motivation to obtain and consume more reward UCS. This is a signature feature of incentive salience.

Cue as attractive motivational magnets

When a Pavlovian CS+ is attributed with incentive salience it not only triggers 'wanting' for its UCS, but often the cue itself becomes highly attractive – even to an irrational degree. This cue attraction is another signature feature of incentive salience ... Two recognizable features of incentive salience are often visible that can be used in neuroscience experiments: (i) UCS-directed 'wanting' – CS-triggered pulses of intensified 'wanting' for the UCS reward; and (ii) CS-directed 'wanting' – motivated attraction to the Pavlovian cue, which makes the arbitrary CS stimulus into a motivational magnet. - ISBN 978-0-07-148127-4.

VTA DA neurons play a critical role in motivation, reward-related behavior (Chapter 15), attention, and multiple forms of memory. This organization of the DA system, wide projection from a limited number of cell bodies, permits coordinated responses to potent new rewards. Thus, acting in diverse terminal fields, dopamine confers motivational salience ("wanting") on the reward itself or associated cues (nucleus accumbens shell region), updates the value placed on different goals in light of this new experience (orbital prefrontal cortex), helps consolidate multiple forms of memory (amygdala and hippocampus), and encodes new motor programs that will facilitate obtaining this reward in the future (nucleus accumbens core region and dorsal striatum). In this example, dopamine modulates the processing of sensorimotor information in diverse neural circuits to maximize the ability of the organism to obtain future rewards. ...

The brain reward circuitry that is targeted by addictive drugs normally mediates the pleasure and strengthening of behaviors associated with natural reinforcers, such as food, water, and sexual contact. Dopamine neurons in the VTA are activated by food and water, and dopamine release in the NAc is stimulated by the presence of natural reinforcers, such as food, water, or a sexual partner. ...

The NAc and VTA are central components of the circuitry underlying reward and memory of reward. As previously mentioned, the activity of dopaminergic neurons in the VTA appears to be linked to reward prediction. The NAc is involved in learning associated with reinforcement and the modulation of motoric responses to stimuli that satisfy internal homeostatic needs. The shell of the NAc appears to be particularly important to initial drug actions within reward circuitry; addictive drugs appear to have a greater effect on dopamine release in the shell than in the core of the NAc. - PMID 23375169.

For instance, mesolimbic dopamine, probably the most popular brain neurotransmitter candidate for pleasure two decades ago, turns out not to cause pleasure or liking at all. Rather dopamine more selectively mediates a motivational process of incentive salience, which is a mechanism for wanting rewards but not for liking them .... Rather opioid stimulation has the special capacity to enhance liking only if the stimulation occurs within an anatomical hotspot

- PMID 26831103.

- PMID 24107968.

- S2CID 207092810.

- PMID 29420932.

- PMID 27337658.

- PMID 29780881.

- ^ Koob G. F., Le Moal M. (2008). Addiction and the brain antireward system. Annu. Rev. Psychol. 59, 29–53. doi:10.1146/annurev.psych.59.103006.093548. Koob G. F., Sanna P. P., Bloom F. E. (1998). Neuroscience of addiction. Neuron 21, 467–476.

- ^ Meyer, J. S., & Quenzer, L. F. (2013). Psychopharmacology: Drugs, the brain, and behavior. Sinauer Associates.

- ^ PMID 18793321.

- PMID 24647659.

- S2CID 11776683.

Importantly, we found evidence of increased activity in the direct pathway; both intracellular changes in the expression of the plasticity marker pERK and AMPA/NMDA ratios evoked by stimulating cortical afferents were increased in the D1-direct pathway neurons. In contrast, D2 neurons showed an opposing change in plasticity; stimulation of cortical afferents reduced AMPA/NMDA ratios on those neurons (Shan et al., 2014).

- S2CID 21652525.

- PMID 21704115.

- PMID 23267662.

- PMID 21147168.

- ^ S2CID 19157711.

The strong correlation between chronic drug exposure and ΔFosB provides novel opportunities for targeted therapies in addiction (118), and suggests methods to analyze their efficacy (119). Over the past two decades, research has progressed from identifying ΔFosB induction to investigating its subsequent action (38). It is likely that ΔFosB research will now progress into a new era – the use of ΔFosB as a biomarker. ...

Conclusions

ΔFosB is an essential transcription factor implicated in the molecular and behavioral pathways of addiction following repeated drug exposure. The formation of ΔFosB in multiple brain regions, and the molecular pathway leading to the formation of AP-1 complexes is well understood. The establishment of a functional purpose for ΔFosB has allowed further determination as to some of the key aspects of its molecular cascades, involving effectors such as GluR2 (87,88), Cdk5 (93) and NFkB (100). Moreover, many of these molecular changes identified are now directly linked to the structural, physiological and behavioral changes observed following chronic drug exposure (60,95,97,102). New frontiers of research investigating the molecular roles of ΔFosB have been opened by epigenetic studies, and recent advances have illustrated the role of ΔFosB acting on DNA and histones, truly as a molecular switch (34). As a consequence of our improved understanding of ΔFosB in addiction, it is possible to evaluate the addictive potential of current medications (119), as well as use it as a biomarker for assessing the efficacy of therapeutic interventions (121,122,124). Some of these proposed interventions have limitations (125) or are in their infancy (75). However, it is hoped that some of these preliminary findings may lead to innovative treatments, which are much needed in addiction. - ^ PMID 21459101.

Functional neuroimaging studies in humans have shown that gambling (Breiter et al, 2001), shopping (Knutson et al, 2007), orgasm (Komisaruk et al, 2004), playing video games (Koepp et al, 1998; Hoeft et al, 2008) and the sight of appetizing food (Wang et al, 2004a) activate many of the same brain regions (i.e., the mesocorticolimbic system and extended amygdala) as drugs of abuse (Volkow et al, 2004). ... Cross-sensitization is also bidirectional, as a history of amphetamine administration facilitates sexual behavior and enhances the associated increase in NAc DA ... As described for food reward, sexual experience can also lead to activation of plasticity-related signaling cascades. The transcription factor delta FosB is increased in the NAc, PFC, dorsal striatum, and VTA following repeated sexual behavior (Wallace et al., 2008; Pitchers et al., 2010b). This natural increase in delta FosB or viral overexpression of delta FosB within the NAc modulates sexual performance, and NAc blockade of delta FosB attenuates this behavior (Hedges et al, 2009; Pitchers et al., 2010b). Further, viral overexpression of delta FosB enhances the conditioned place preference for an environment paired with sexual experience (Hedges et al., 2009). ... In some people, there is a transition from "normal" to compulsive engagement in natural rewards (such as food or sex), a condition that some have termed behavioral or non-drug addictions (Holden, 2001; Grant et al., 2006a). ... In humans, the role of dopamine signaling in incentive-sensitization processes has recently been highlighted by the observation of a dopamine dysregulation syndrome in some patients taking dopaminergic drugs. This syndrome is characterized by a medication-induced increase in (or compulsive) engagement in non-drug rewards such as gambling, shopping, or sex (Evans et al, 2006; Aiken, 2007; Lader, 2008).

Table 1: Summary of plasticity observed following exposure to drug or natural reinforcers - ^ PMID 23020045.

For these reasons, ΔFosB is considered a primary and causative transcription factor in creating new neural connections in the reward centre, prefrontal cortex, and other regions of the limbic system. This is reflected in the increased, stable and long-lasting level of sensitivity to cocaine and other drugs, and tendency to relapse even after long periods of abstinence. These newly constructed networks function very efficiently via new pathways as soon as drugs of abuse are further taken ... In this way, the induction of CDK5 gene expression occurs together with suppression of the G9A gene coding for dimethyltransferase acting on the histone H3. A feedback mechanism can be observed in the regulation of these 2 crucial factors that determine the adaptive epigenetic response to cocaine. This depends on ΔFosB inhibiting G9a gene expression, i.e. H3K9me2 synthesis which in turn inhibits transcription factors for ΔFosB. For this reason, the observed hyper-expression of G9a, which ensures high levels of the dimethylated form of histone H3, eliminates the neuronal structural and plasticity effects caused by cocaine by means of this feedback which blocks ΔFosB transcription

- PMID 23426671.

Drugs of abuse induce neuroplasticity in the natural reward pathway, specifically the nucleus accumbens (NAc), thereby causing development and expression of addictive behavior. ... Together, these findings demonstrate that drugs of abuse and natural reward behaviors act on common molecular and cellular mechanisms of plasticity that control vulnerability to drug addiction, and that this increased vulnerability is mediated by ΔFosB and its downstream transcriptional targets. ... Sexual behavior is highly rewarding (Tenk et al., 2009), and sexual experience causes sensitized drug-related behaviors, including cross-sensitization to amphetamine (Amph)-induced locomotor activity (Bradley and Meisel, 2001; Pitchers et al., 2010a) and enhanced Amph reward (Pitchers et al., 2010a). Moreover, sexual experience induces neural plasticity in the NAc similar to that induced by psychostimulant exposure, including increased dendritic spine density (Meisel and Mullins, 2006; Pitchers et al., 2010a), altered glutamate receptor trafficking, and decreased synaptic strength in prefrontal cortex-responding NAc shell neurons (Pitchers et al., 2012). Finally, periods of abstinence from sexual experience were found to be critical for enhanced Amph reward, NAc spinogenesis (Pitchers et al., 2010a), and glutamate receptor trafficking (Pitchers et al., 2012). These findings suggest that natural and drug reward experiences share common mechanisms of neural plasticity

- S2CID 25317397.

- PMID 21989194.

ΔFosB serves as one of the master control proteins governing this structural plasticity. ... ΔFosB also represses G9a expression, leading to reduced repressive histone methylation at the cdk5 gene. The net result is gene activation and increased CDK5 expression. ... In contrast, ΔFosB binds to the c-fos gene and recruits several co-repressors, including HDAC1 (histone deacetylase 1) and SIRT 1 (sirtuin 1). ... The net result is c-fos gene repression.

Figure 4: Epigenetic basis of drug regulation of gene expression

- PMID 29478612.

- ISBN 978-0-443-07145-4.

- ^ a b Roy A. Wise, Drug-activation of brain reward pathways, Drug and Alcohol Dependence 1998; 51 13–22.

- PMID 6879176.

- PMID 8496810.

- PMID 4041681.

- PMID 6135645.

- PMID 25814941.

- PMID 26441781.

- PMID 29503841.

- ISBN 978-94-017-8590-7.

- PMID 26487590.

- ISBN 978-3-319-26933-7.

In a relatively recent literature, studies of motivation and reinforcement in depression have been largely consistent in detecting differences as compared to healthy controls (Whitton et al. 2015). In several studies using the effort expenditure for reward task (EEfRT), patients with MDD expended less effort for rewards when compared with controls (Treadway et al. 2012; Yang et al. 2014)

- PMID 27189581.

- PMID 23942470.

- PMID 20603146.

- PMID 24931766.

- PMID 23578393.

- PMID 29309799.

- ^ S2CID 18542868.

- S2CID 9923114.

They also provide a separate assessment of the consummatory anhedonia (reduced experience of pleasure derived from ongoing enjoyable activities) and anticipatory anhedonia (reduced ability to anticipate future pleasure). In fact, the former one seems to be relatively intact in schizophrenia, whereas the latter one seems to be impaired [32 – 34]. However, discrepant data have also been reported [35].

- ^ Young, Anticevic & Barch 2018, p. 215a, "Several recent reviews (e.g., Cohen and Minor, 2010) have found that individuals with schizophrenia show relatively intact self-reported emotional responses to affect-eliciting stimuli as well as other indicators of intact response ... A more mixed picture arises from functional neuroimaging studies examining brain responses to other types of pleasurable stimuli in schizophrenia (Paradiso et al., 2003)"

- ^ Young, Anticevic & Barch 2018, p. 215b, "As such it is surprising that behavioral studies have suggested that reinforcement learning is intact in schizophrenia when learning is relatively implicit (though, see Siegert et al., 2008 for evidence of impaired Serial Reaction Time task learning), but more impaired when explicit representations of stimulus-reward contingencies are needed (see Gold et al., 2008). This pattern has given rise to the theory that the striatally mediated gradual reinforcement learning system may be intact in schizophrenia, while more rapid, on-line, cortically mediated learning systems are impaired."

- ^ Young, Anticevic & Barch 2018, p. 216, "We have recently shown that individuals with schizophrenia can show improved cognitive control performance when information about rewards are externally presented but not when they must be internally maintained (Mann et al., 2013), with some evidence for impairments in DLPFC and striatal activation during internal maintenance of reward information being associated with individuals' differences in motivation (Chung and Barch, 2016)."

- ^ Littman, Ph.D., Ellen (February 2017). "Never Enough? Why ADHD Brains Crave Stimulation". ADDitude Magazine. New Hope Media LLC. Retrieved 27 May 2021.

- PMID 24904299.

- PMID 19183781.

- PMID 8833446.

- ^ a b "human nervous system | Description, Development, Anatomy, & Function". Encyclopedia Britannica. 23 January 2024.

- ^ PMID 13233369. Archived from the original on 5 February 2012. Retrieved 26 April 2011.

- PMID 20587348 – via www.discoverymedicine.com.

- ISBN 978-0-486-43093-5.

- ^ Fridlund, Alan and James Kalat. Mind and Brain, the Science of Psychology. California: Cengage Learning, 2014. Print.

- PMID 30245012.

- ^ PMID 18311558.

- ^ PMID 30670642.

Listening to pleasurable music is often accompanied by measurable bodily reactions such as goose bumps or shivers down the spine, commonly called 'chills' or 'frissons.' ... Overall, our results straightforwardly revealed that pharmacological interventions bidirectionally modulated the reward responses elicited by music. In particular, we found that risperidone impaired participants' ability to experience musical pleasure, whereas levodopa enhanced it. ... Here, in contrast, studying responses to abstract rewards in human subjects, we show that manipulation of dopaminergic transmission affects both the pleasure (i.e., amount of time reporting chills and emotional arousal measured by EDA) and the motivational components of musical reward (money willing to spend). These findings suggest that dopaminergic signaling is a sine qua non condition not only for motivational responses, as has been shown with primary and secondary rewards, but also for hedonic reactions to music. This result supports recent findings showing that dopamine also mediates the perceived pleasantness attained by other types of abstract rewards and challenges previous findings in animal models on primary rewards, such as food.

- ^ PMID 30770455.

In a pharmacological study published in PNAS, Ferreri et al. (1) present evidence that enhancing or inhibiting dopamine signaling using levodopa or risperidone modulates the pleasure experienced while listening to music. ... In a final salvo to establish not only the correlational but also the causal implication of dopamine in musical pleasure, the authors have turned to directly manipulating dopaminergic signaling in the striatum, first by applying excitatory and inhibitory transcranial magnetic stimulation over their participants' left dorsolateral prefrontal cortex, a region known to modulate striatal function (5), and finally, in the current study, by administrating pharmaceutical agents able to alter dopamine synaptic availability (1), both of which influenced perceived pleasure, physiological measures of arousal, and the monetary value assigned to music in the predicted direction. ... While the question of the musical expression of emotion has a long history of investigation, including in PNAS (6), and the 1990s psychophysiological strand of research had already established that musical pleasure could activate the autonomic nervous system (7), the authors' demonstration of the implication of the reward system in musical emotions was taken as inaugural proof that these were veridical emotions whose study has full legitimacy to inform the neurobiology of our everyday cognitive, social, and affective functions (8). Incidentally, this line of work, culminating in the article by Ferreri et al. (1), has plausibly done more to attract research funding for the field of music sciences than any other in this community. The evidence of Ferreri et al. (1) provides the latest support for a compelling neurobiological model in which musical pleasure arises from the interaction of ancient reward/valuation systems (striatal–limbic–paralimbic) with more phylogenetically advanced perception/predictions systems (temporofrontal).

- Young, Jared W.; Anticevic, Alan; Barch, Deanna M. (2018). "Cognitive and Motivational Neuroscience of Psychotic Disorders". In Charney, Dennis S.; Sklar, Pamela; Buxbaum, Joseph D.; Nestler, Eric J. (eds.). Charney & Nestler's Neurobiology of Mental Illness (5th ed.). New York: Oxford University Press. ISBN 9780190681425.