Stochastic process


In

Applications and the study of phenomena have in turn inspired the proposal of new stochastic processes. Examples of such stochastic processes include the

The term random function is also used to refer to a stochastic or random process,

Based on their mathematical properties, stochastic processes can be grouped into various categories, which include

Introduction

A stochastic or random process can be defined as a collection of random variables that is indexed by some mathematical set, meaning that each random variable of the stochastic process is uniquely associated with an element in the set.

Classifications

A stochastic process can be classified in different ways, for example, by its state space, its index set, or the dependence among the random variables. One common way of classification is by the cardinality of the index set and the state space.[51][52][53]

When interpreted as time, if the index set of a stochastic process has a finite or countable number of elements, such as a finite set of numbers, the set of integers, or the natural numbers, then the stochastic process is said to be in

If the state space is the integers or natural numbers, then the stochastic process is called a discrete or integer-valued stochastic process. If the state space is the real line, then the stochastic process is referred to as a real-valued stochastic process or a process with continuous state space. If the state space is $n$-dimensional Euclidean space, then the stochastic process is called an $n$-dimensional vector process or $n$-vector process.[51][52]

Etymology

The word stochastic in

According to the Oxford English Dictionary, early occurrences of the word random in English with its current meaning, which relates to chance or luck, date back to the 16th century, while earlier recorded usages started in the 14th century as a noun meaning "impetuosity, great speed, force, or violence (in riding, running, striking, etc.)". The word itself comes from a Middle French word meaning "speed, haste", and it is probably derived from a French verb meaning "to run" or "to gallop". The first written appearance of the term random process pre-dates stochastic process, which the Oxford English Dictionary also gives as a synonym, and was used in an article by

Terminology

The definition of a stochastic process varies,[67] but a stochastic process is traditionally defined as a collection of random variables indexed by some set.[68][69] The terms random process and stochastic process are considered synonyms and are used interchangeably, without the index set being precisely specified.[27][29][30][70][71][72] Both "collection"[28][70] and "family"[4][73] are used, while instead of "index set", sometimes the terms "parameter set"[28] or "parameter space"[30] are used.

The term random function is also used to refer to a stochastic or random process,[5][74][75] though sometimes it is only used when the stochastic process takes real values.[28][73] This term is also used when the index sets are mathematical spaces other than the real line,[5][76] while the terms stochastic process and random process are usually used when the index set is interpreted as time,[5][76][77] and other terms are used such as random field when the index set is $n$-dimensional Euclidean space or a manifold.[5][28][30]

Notation

A stochastic process can be denoted, among other ways, by $\{X(t)\}_{t\in T}$,[56] $\{X_t\}_{t\in T}$,[69] $\{X_t\}$,[78] $\{X(t)\}$ or simply as $X$. Some authors mistakenly write $X(t)$ even though it is an abuse of function notation.[79] For example, $X(t)$ or $X_t$ are used to refer to the random variable with the index $t$, and not the entire stochastic process.[78] If the index set is $T=[0,\infty)$, then one can write, for example, $(X_t, t\geq 0)$ to denote the stochastic process.[29]

Examples

Bernoulli process

One of the simplest stochastic processes is the

Random walk

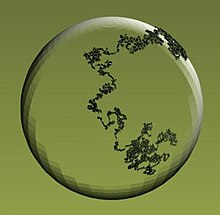

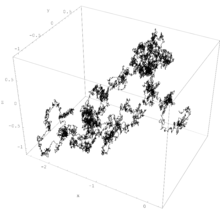

A classic example of a random walk is known as the simple random walk, which is a stochastic process in discrete time with the integers as the state space, and is based on a Bernoulli process, where each Bernoulli variable takes either the value positive one or negative one. In other words, the simple random walk takes place on the integers, and its value increases by one with probability, say, $p$, or decreases by one with probability $1-p$, so the index set of this random walk is the natural numbers, while its state space is the integers. If $p=0.5$, this random walk is called a symmetric random walk.[92][93]
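The simple random walk described above can be sketched in a few lines of Python. This is only an illustrative sketch: the function name `simple_random_walk`, the choice to start the walk at zero, and the `seed` parameter are assumptions made for the example, not part of the definition.

```python
import random


def simple_random_walk(n_steps, p=0.5, seed=None):
    """Return the path [S_0, S_1, ..., S_n] of a simple random walk.

    Assumption for this sketch: the walk starts at S_0 = 0.
    Each step is +1 with probability p and -1 with probability 1 - p.
    """
    rng = random.Random(seed)  # private generator so runs are reproducible
    path = [0]
    for _ in range(n_steps):
        step = 1 if rng.random() < p else -1
        path.append(path[-1] + step)
    return path


if __name__ == "__main__":
    # p = 0.5 gives the symmetric random walk
    print(simple_random_walk(10, p=0.5, seed=1))
```

The list index plays the role of the index set (the natural numbers), and the stored values lie in the state space (the integers).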

Wiener process

The Wiener process is a stochastic process with

Playing a central role in the theory of probability, the Wiener process is often considered the most important and studied stochastic process, with connections to other stochastic processes.[1][2][3][98][99][100][101] Its index set and state space are the non-negative numbers and real numbers, respectively, so it has both continuous index set and state space.[102] But the process can be defined more generally so its state space can be $n$-dimensional Euclidean space.[91][99][103] If the mean of any increment is zero, then the resulting Wiener or Brownian motion process is said to have zero drift. If the mean of the increment for any two points in time is equal to the time difference multiplied by some constant $\mu$, which is a real number, then the resulting stochastic process is said to have drift $\mu$.[104][105][106]
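The role of the drift can be illustrated with a discretized path. The sketch below assumes the standard characterization of the Wiener process (independent Gaussian increments whose variance equals the elapsed time) and approximates it on an evenly spaced time grid; the names `wiener_path`, `t_end`, `n_steps` and `drift` are hypothetical and chosen only for this example.

```python
import math
import random


def wiener_path(t_end, n_steps, drift=0.0, seed=None):
    """Approximate a Wiener process with drift on [0, t_end].

    Assumptions for this sketch: the process starts at 0, and each
    increment over a step of length dt is Gaussian with mean drift * dt
    and variance dt, independently of the other increments.
    """
    rng = random.Random(seed)
    dt = t_end / n_steps
    times = [0.0]
    values = [0.0]
    for k in range(1, n_steps + 1):
        increment = drift * dt + math.sqrt(dt) * rng.gauss(0.0, 1.0)
        times.append(k * dt)
        values.append(values[-1] + increment)
    return times, values


if __name__ == "__main__":
    times, values = wiener_path(t_end=1.0, n_steps=5, drift=2.0, seed=42)
    for t, w in zip(times, values):
        print(f"t={t:.1f}  W(t)={w:+.3f}")
```

With `drift=0.0` the mean of every increment is zero; with a non-zero drift the mean of an increment is the drift multiplied by the time difference, as described above.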

The Wiener process is a member of some important families of stochastic processes, including Markov processes, Lévy processes and Gaussian processes.[2][49] The process also has many applications and is the main stochastic process used in stochastic calculus.[112][113] It plays a central role in quantitative finance,[114][115] where it is used, for example, in the Black–Scholes–Merton model.[116] The process is also used in different fields, including the majority of natural sciences as well as some branches of social sciences, as a mathematical model for various random phenomena.[3][117][118]

Poisson process

The Poisson process is a stochastic process that has different forms and definitions.[119][120] It can be defined as a counting process, which is a stochastic process that represents the random number of points or events up to some time. The number of points of the process that are located in the interval from zero to some given time is a Poisson random variable that depends on that time and some parameter. This process has the natural numbers as its state space and the non-negative numbers as its index set. This process is also called the Poisson counting process, since it can be interpreted as an example of a counting process.[119]

If a Poisson process is defined with a single positive constant, then the process is called a homogeneous Poisson process.[119][121] The homogeneous Poisson process is a member of important classes of stochastic processes such as Markov processes and Lévy processes.[49]
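A homogeneous Poisson process on the non-negative numbers can be simulated in several ways. The sketch below uses one common construction, which is an assumption of this example rather than something stated above: the gaps between successive points are independent exponential random variables with the same rate. The names `poisson_arrival_times` and `count_up_to` are hypothetical.

```python
import random


def poisson_arrival_times(rate, t_end, seed=None):
    """Return the sorted points of a homogeneous Poisson process on (0, t_end].

    Assumption for this sketch: inter-arrival gaps are i.i.d. exponential
    random variables with parameter `rate` (a positive constant).
    """
    rng = random.Random(seed)
    arrivals = []
    t = rng.expovariate(rate)
    while t <= t_end:
        arrivals.append(t)
        t += rng.expovariate(rate)
    return arrivals


def count_up_to(arrivals, t):
    """Counting process N(t): the number of points located in [0, t]."""
    return sum(1 for a in arrivals if a <= t)


if __name__ == "__main__":
    points = poisson_arrival_times(rate=3.0, t_end=10.0, seed=7)
    for t in (1.0, 5.0, 10.0):
        print(f"N({t}) = {count_up_to(points, t)}")
```

Here `count_up_to` takes values in the natural numbers (the state space), while its argument `t` ranges over the non-negative numbers (the index set).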

The homogeneous Poisson process can be defined and generalized in different ways. It can be defined such that its index set is the real line, and this stochastic process is also called the stationary Poisson process.[122][123] If the parameter constant of the Poisson process is replaced with some non-negative integrable function of $t$, the resulting process is called an inhomogeneous or nonhomogeneous Poisson process, where the average density of points of the process is no longer constant.[124] Serving as a fundamental process in queueing theory, the Poisson process is an important process for mathematical models, where it finds applications for models of events randomly occurring in certain time windows.[125][126]

Defined on the real line, the Poisson process can be interpreted as a stochastic process,[49][127] among other random objects.[128][129] But then it can be defined on the $n$-dimensional Euclidean space or other mathematical spaces,[130] where it is often interpreted as a random set or a random counting measure, instead of a stochastic process.[128][129] In this setting, the Poisson process, also called the Poisson point process, is one of the most important objects in probability theory, both for applications and theoretical reasons.[22][131] But it has been remarked that the Poisson process does not receive as much attention as it should, partly due to it often being considered just on the real line, and not on other mathematical spaces.[131][132]

Definitions

Stochastic process

A stochastic process is defined as a collection of random variables defined on a common probability space $(\Omega, \mathcal{F}, P)$, where $\Omega$ is a sample space, $\mathcal{F}$ is a $\sigma$-algebra, and $P$ is a probability measure.

In other words, for a given probability space $(\Omega, \mathcal{F}, P)$ and a measurable space $(S, \Sigma)$, a stochastic process is a collection of $S$-valued random variables, which can be written as:[80]

$$\{X(t) : t \in T\}.$$

Historically, in many problems from the natural sciences a point $t\in T$ had the meaning of time, so $X(t)$ is a random variable representing a value observed at time $t$.[133] A stochastic process can also be written as $\{X(t,\omega) : t\in T\}$ to reflect that it is actually a function of two variables, $t\in T$ and $\omega\in\Omega$.[28][134]

There are other ways to consider a stochastic process, with the above definition being considered the traditional one.[68][69] For example, a stochastic process can be interpreted or defined as a $S^T$-valued random variable, where $S^T$ is the space of all the possible functions from the set $T$ into the space $S$.[27][68] However, this alternative definition as a "function-valued random variable" in general requires additional regularity assumptions to be well-defined.[135]

Index set

The set $T$ is called the index set

State space

The

Sample function

A sample function is a single outcome of a stochastic process, so it is formed by taking a single possible value of each random variable of the stochastic process.[28][139] More precisely, if $\{X(t,\omega) : t\in T\}$ is a stochastic process, then for any point $\omega\in\Omega$, the mapping

$$X(\cdot,\omega) : T \rightarrow S,$$

is called a sample function, a realization, or, particularly when $T$ is interpreted as time, a sample path of the stochastic process $\{X(t,\omega) : t\in T\}$.[50] This means that for a fixed $\omega\in\Omega$, there exists a sample function that maps the index set $T$ to the state space $S$.[28] Other names for a sample function of a stochastic process include trajectory, path function[140] or path.[141]
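The view of a stochastic process as a function of two variables can be made concrete with a small sketch. Here the outcome is represented by an integer seed for a pseudorandom generator, which is purely an assumption of this example, and the process is taken to be a symmetric simple random walk started at zero. The names `X` and `sample_function` are hypothetical.

```python
import random


def X(t, omega):
    """Value of the process at integer time t for the outcome omega.

    Assumption for this sketch: omega is an integer seed, and the process
    is a symmetric simple random walk started at 0. Re-seeding with the
    same omega replays the same steps, so fixing omega fixes the path.
    """
    rng = random.Random(omega)
    return sum(rng.choice((-1, 1)) for _ in range(t))


def sample_function(omega, index_set):
    """The mapping t -> X(t, omega) for one fixed outcome omega."""
    return [X(t, omega) for t in index_set]


if __name__ == "__main__":
    # Fixing omega gives one sample path over the index set {0, ..., 9}.
    print(sample_function(omega=7, index_set=range(10)))
    # Fixing t and varying omega gives draws of the random variable X(5, .).
    print([X(5, omega) for omega in range(8)])
```

Holding `omega` fixed and varying `t` yields a sample function, while holding `t` fixed and varying `omega` yields realizations of a single random variable of the process.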

Increment

An increment of a stochastic process is the difference between two random variables of the same stochastic process. For a stochastic process with an index set that can be interpreted as time, an increment is how much the stochastic process changes over a certain time period. For example, if $\{X(t) : t\in T\}$ is a stochastic process with state space $S$ and index set $T=[0,\infty)$, then for any two non-negative numbers $t_1\in[0,\infty)$ and $t_2\in[0,\infty)$ such that $t_1\leq t_2$, the difference $X_{t_2}-X_{t_1}$ is a $S$-valued random variable known as an increment.[48][49] When interested in the increments, often the state space $S$ is the real line or the natural numbers, but it can be $n$-dimensional Euclidean space or more abstract spaces such as Banach spaces.[49]

Further definitions

Law

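For a stochastic process $X\colon\Omega\rightarrow S^T$ defined on the probability space $(\Omega, \mathcal{F}, P)$, the law of stochastic process $X$ is defined as the image measure:

$$\mu = P\circ X^{-1},$$

where $P$ is a probability measure, the symbol $\circ$ denotes function composition and $X^{-1}$ is the pre-image of the measurable function or, equivalently, the $S^T$-valued random variable $X$, where $S^T$ is the space of all the possible $S$-valued functions of $t\in T$, so the law of a stochastic process is a probability measure.[27][68][142][143]

For a measurable subset $B$ of $S^T$, the pre-image of $B$ under $X$ is

$$X^{-1}(B) = \{\omega\in\Omega : X(\omega)\in B\},$$

so the law of $X$ can be written as:[28]

$$\mu(B) = P(\{\omega\in\Omega : X(\omega)\in B\}).$$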

The law of a stochastic process or a random variable is also called the probability law, probability distribution, or the distribution.[133][142][144][145][146]

Finite-dimensional probability distributions

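For a stochastic process $X$ with law $\mu$, its finite-dimensional distribution for $t_1,\dots,t_n\in T$ is defined as:

$$\mu_{t_1,\dots,t_n} = P\circ (X(t_1),\dots,X(t_n))^{-1}.$$

This measure $\mu_{t_1,\dots,t_n}$ is the joint distribution of the random vector $(X(t_1),\dots,X(t_n))$; it can be viewed as a "projection" of the law $\mu$ onto a finite subset of $T$.[27][147]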

For any measurable subset of the $n$-fold

The finite-dimensional distributions of a stochastic process satisfy two mathematical conditions known as consistency conditions.[57]

Stationarity

Stationarity is a mathematical property that a stochastic process has when all the random variables of that stochastic process are identically distributed. In other words, if $X$ is a stationary stochastic process, then for any $t\in T$ the random variable $X_t$ has the same distribution, which means that for any set of $n$ index set values $t_1,\dots,t_n$, the corresponding $n$ random variables

$$X_{t_1},\dots,X_{t_n},$$

all have the same probability distribution. The index set of a stationary stochastic process is usually interpreted as time, so it can be the integers or the real line.[148][149] But the concept of stationarity also exists for point processes and random fields, where the index set is not interpreted as time.[148][150][151]

When the index set can be interpreted as time, a stochastic process is said to be stationary if its finite-dimensional distributions are invariant under translations of time. This type of stochastic process can be used to describe a physical system that is in steady state, but still experiences random fluctuations.[148] The intuition behind stationarity is that as time passes the distribution of the stationary stochastic process remains the same.[152] A sequence of random variables forms a stationary stochastic process only if the random variables are identically distributed.[148]

A stochastic process with the above definition of stationarity is sometimes said to be strictly stationary, but there are other forms of stationarity. For example, a discrete-time or continuous-time stochastic process $X$ is said to be stationary in the wide sense if it has a finite second moment for all $t\in T$ and the covariance of the two random variables $X_t$ and $X_{t+h}$ depends only on the number $h$ for all $t\in T$.[152][153] Khinchin introduced the related concept of stationarity in the wide sense, which has other names including covariance stationarity or stationarity in the broad sense.[153][154]
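Wide-sense stationarity is a statement about covariances that depend only on the lag. As a rough numerical illustration, the sketch below estimates the covariance at a given lag from one observed sequence. The estimator `sample_autocovariance` and the choice of independent standard Gaussian noise as the test sequence are assumptions made for this example; such noise is wide-sense stationary, with covariance equal to the variance at lag zero and zero at every other lag.

```python
import random


def sample_autocovariance(xs, lag):
    """Estimate Cov(X_t, X_{t+lag}) from a single sequence xs.

    Assumption for this sketch: the sequence comes from a wide-sense
    stationary process, so averaging over t is meaningful.
    """
    n = len(xs)
    mean = sum(xs) / n
    products = [(xs[t] - mean) * (xs[t + lag] - mean) for t in range(n - lag)]
    return sum(products) / (n - lag)


if __name__ == "__main__":
    rng = random.Random(0)
    noise = [rng.gauss(0.0, 1.0) for _ in range(20000)]
    for h in (0, 1, 5):
        print(f"lag {h}: {sample_autocovariance(noise, h):+.3f}")
```

For the Gaussian noise used here, the printed estimates should be close to one at lag zero and close to zero at the other lags, up to sampling error.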

Filtration

A filtration is an increasing sequence of sigma-algebras defined in relation to some probability space and an index set that has some total order relation, such as in the case of the index set being some subset of the real numbers. More formally, if a stochastic process has an index set with a total order, then a filtration $\{\mathcal{F}_t\}_{t\in T}$ on a probability space $(\Omega, \mathcal{F}, P)$ is a family of sigma-algebras such that $\mathcal{F}_s\subseteq\mathcal{F}_t\subseteq\mathcal{F}$ for all $s\leq t$, where $t,s\in T$ and $\leq$ denotes the total order of the index set $T$.[51] With the concept of a filtration, it is possible to study the amount of information contained in a stochastic process $X_t$ at $t\in T$, which can be interpreted as time $t$.[51][155] The intuition behind a filtration $\mathcal{F}_t$ is that as time $t$ passes, more and more information on $X_t$ is known or available, which is captured in $\mathcal{F}_t$, resulting in finer and finer partitions of $\Omega$.[156][157]

Modification

A modification of a stochastic process is another stochastic process, which is closely related to the original stochastic process. More precisely, a stochastic process $X$ that has the same index set $T$, state space $S$, and probability space $(\Omega, \mathcal{F}, P)$ as another stochastic process $Y$ is said to be a modification of $Y$ if for all $t\in T$ the following

$$P(X_t = Y_t) = 1,$$

holds. Two stochastic processes that are modifications of each other have the same finite-dimensional law[158] and they are said to be stochastically equivalent or equivalent.[159]

Instead of modification, the term version is also used.[150][160][161][162] However, some authors use the term version when two stochastic processes have the same finite-dimensional distributions but may be defined on different probability spaces. Two processes that are modifications of each other are therefore also versions of each other in the latter sense, but the converse does not hold.[163][142]

If a continuous-time real-valued stochastic process meets certain moment conditions on its increments, then the Kolmogorov continuity theorem says that there exists a modification of this process that has continuous sample paths with probability one, so the stochastic process has a continuous modification or version.[161][162][164] The theorem can also be generalized to random fields so the index set is $n$-dimensional Euclidean space

Indistinguishable

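Two stochastic processes $X$ and $Y$ defined on the same probability space $(\Omega, \mathcal{F}, P)$ with the same index set $T$ and state space $S$ are said to be indistinguishable if the following

$$P(X_t = Y_t \text{ for all } t\in T) = 1,$$

holds.[142][158] If two processes $X$ and $Y$ are modifications of each other and are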

Separability

Separability is a property of a stochastic process based on its index set in relation to the probability measure. The property is assumed so that functionals of stochastic processes or random fields with uncountable index sets can form random variables. For a stochastic process to be separable, in addition to other conditions, its index set must be a separable space,[b] which means that the index set has a dense countable subset.[150][168]

More precisely, a real-valued continuous-time stochastic process $X$ with a probability space $(\Omega, \mathcal{F}, P)$ is separable if its index set $T$ has a dense countable subset $U\subset T$ and there is a set $\Omega_0\subset\Omega$ of probability zero, so $P(\Omega_0)=0$, such that for every open set $G\subset T$ and every closed set $F\subset\mathbb{R}=(-\infty,\infty)$, the two events $\{X_t\in F \text{ for all } t\in G\cap U\}$ and $\{X_t\in F \text{ for all } t\in G\}$ differ from each other at most on a subset of $\Omega_0$.[169][170][171] The definition of separability[c] can also be stated for other index sets and state spaces,[174] such as in the case of random fields, where the index set as well as the state space can be $n$-dimensional Euclidean space.[30][150]

The concept of separability of a stochastic process was introduced by

Independence

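Two stochastic processes $X$ and $Y$ defined on the same probability space $(\Omega, \mathcal{F}, P)$ with the same index set $T$ are said to be independent if for all $n\in\mathbb{N}$ and for every choice of epochs $t_1,\ldots,t_n\in T$, the random vectors $(X(t_1),\ldots,X(t_n))$ and $(Y(t_1),\ldots,Y(t_n))$ are independent.[177]: p. 515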

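Two stochastic processes $\{X_t\}$ and $\{Y_t\}$ are called uncorrelated if their cross-covariance $K_{XY}(t_1,t_2) = \operatorname{E}\left[(X(t_1)-\mu_X(t_1))(Y(t_2)-\mu_Y(t_2))\right]$ is zero for all times.[178]: p. 142 Formally:

- $\{X_t\},\{Y_t\}$ uncorrelated $\iff K_{XY}(t_1,t_2)=0 \quad \forall t_1,t_2$.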

If two stochastic processes $X$ and $Y$ are independent, then they are also uncorrelated.[178]: p. 151

Orthogonality

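Two stochastic processes $\{X_t\}$ and $\{Y_t\}$ are called orthogonal if their cross-correlation $R_{XY}(t_1,t_2) = \operatorname{E}\left[X(t_1)\overline{Y(t_2)}\right]$ is zero for all times.[178]: p. 142 Formally:

- $\{X_t\},\{Y_t\}$ orthogonal $\iff R_{XY}(t_1,t_2)=0 \quad \forall t_1,t_2$.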

Skorokhod space

A Skorokhod space, also written as Skorohod space, is a mathematical space of all the functions that are right-continuous with left limits, defined on some interval of the real line such as $[0,1]$ or $[0,\infty)$, and take values on the real line or on some metric space.[179][180][181] Such functions are known as càdlàg or cadlag functions, based on the acronym of the French phrase continue à droite, limite à gauche.[179][182] A Skorokhod function space, introduced by Anatoliy Skorokhod,[181] is often denoted with the letter $D$,[179][180][181][182] so the function space is also referred to as space $D$.[179][183][184] The notation of this function space can also include the interval on which all the càdlàg functions are defined, so, for example, $D[0,1]$ denotes the space of càdlàg functions defined on the unit interval $[0,1]$.[182][184][185]

Skorokhod function spaces are frequently used in the theory of stochastic processes because it is often assumed that the sample functions of continuous-time stochastic processes belong to a Skorokhod space.[181][183] Such spaces contain continuous functions, which correspond to sample functions of the Wiener process. But the space also has functions with discontinuities, which means that the sample functions of stochastic processes with jumps, such as the Poisson process (on the real line), are also members of this space.[184][186]

Regularity

In the context of mathematical construction of stochastic processes, the term regularity is used when discussing and assuming certain conditions for a stochastic process to resolve possible construction issues.[187][188] For example, to study stochastic processes with uncountable index sets, it is assumed that the stochastic process adheres to some type of regularity condition such as the sample functions being continuous.[189][190]

Further examples

Markov processes and chains

Markov processes are stochastic processes, traditionally in discrete or continuous time, that have the Markov property, which means the next value of the Markov process depends on the current value, but it is conditionally independent of the previous values of the stochastic process. In other words, the behavior of the process in the future is stochastically independent of its behavior in the past, given the current state of the process.[191][192]

The Brownian motion process and the Poisson process (in one dimension) are both examples of Markov processes[193] in continuous time, while random walks on the integers and the gambler's ruin problem are examples of Markov processes in discrete time.[194][195]
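The Markov property in the gambler's ruin example can be seen directly in a simulation: each new state is computed from the current state alone, without looking at earlier values. The sketch below is a minimal illustration under assumed conventions (the gambler wins or loses one unit per round, and play stops at zero or at a target fortune); the function name `gamblers_ruin` and its parameters are hypothetical.

```python
import random


def gamblers_ruin(start, target, p=0.5, seed=None):
    """Simulate one gambler's ruin path until it hits 0 or target.

    Assumptions for this sketch: the fortune moves by +1 with probability p
    and by -1 otherwise, and the states 0 and target are absorbing.
    The update uses only the current state, which is the Markov property.
    """
    rng = random.Random(seed)
    state = start
    path = [state]
    while 0 < state < target:
        state += 1 if rng.random() < p else -1
        path.append(state)
    return path


if __name__ == "__main__":
    path = gamblers_ruin(start=3, target=10, p=0.5, seed=11)
    outcome = "reached target" if path[-1] == 10 else "ruined"
    print(f"{len(path) - 1} rounds, {outcome}")
```

Because the loop body never inspects `path`, the history is recorded only for output and plays no part in generating the next value.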

A Markov chain is a type of Markov process that has either discrete

Markov processes form an important class of stochastic processes and have applications in many areas.[39][202] For example, they are the basis for a general stochastic simulation method known as Markov chain Monte Carlo, which is used for simulating random objects with specific probability distributions, and has found application in Bayesian statistics.[203][204]

The concept of the Markov property was originally for stochastic processes in continuous and discrete time, but the property has been adapted for other index sets such as $n$-dimensional Euclidean space, which results in collections of random variables known as Markov random fields.[205][206][207]

Martingale

A martingale is a discrete-time or continuous-time stochastic process with the property that, at every instant, given the current value and all the past values of the process, the conditional expectation of every future value is equal to the current value. In discrete time, if this property holds for the next value, then it holds for all future values. The exact mathematical definition of a martingale requires two other conditions coupled with the mathematical concept of a filtration, which is related to the intuition of increasing available information as time passes. Martingales are usually defined to be real-valued,[208][209][155] but they can also be complex-valued[210] or even more general.[211]

A symmetric random walk and a Wiener process (with zero drift) are both examples of martingales, respectively, in discrete and continuous time.

Martingales can also be created from stochastic processes by applying some suitable transformations, which is the case for the homogeneous Poisson process (on the real line) resulting in a martingale called the compensated Poisson process.[209] Martingales can also be built from other martingales.[212] For example, there are martingales based on the Wiener process, which is itself a martingale, forming continuous-time martingales.[208][214]
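As a rough numerical illustration of the compensated Poisson process, the sketch below subtracts the expected count from a simulated Poisson count. It assumes the usual form of the compensator, namely the rate multiplied by the elapsed time, and it simulates counts from exponential inter-arrival gaps; both are assumptions of this example, and the names `poisson_count` and `compensated_poisson_value` are hypothetical. A sample mean near zero is consistent with the martingale property but does not prove it.

```python
import random


def poisson_count(rate, t, rng):
    """Number of points of a homogeneous Poisson process in [0, t].

    Assumption for this sketch: gaps between points are i.i.d.
    exponential random variables with parameter `rate`.
    """
    count = 0
    elapsed = rng.expovariate(rate)
    while elapsed <= t:
        count += 1
        elapsed += rng.expovariate(rate)
    return count


def compensated_poisson_value(rate, t, rng):
    """Value N(t) - rate * t of the compensated process at time t."""
    return poisson_count(rate, t, rng) - rate * t


if __name__ == "__main__":
    rng = random.Random(3)
    draws = [compensated_poisson_value(2.0, 5.0, rng) for _ in range(4000)]
    print(f"sample mean of N(t) - rate*t: {sum(draws) / len(draws):+.3f}")
```

Subtracting `rate * t` removes the upward trend of the counting process, which is what makes the centered process a candidate for a martingale.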

Martingales mathematically formalize the idea of a 'fair game' where it is possible to form reasonable expectations for payoffs,

Martingales have many applications in statistics, but it has been remarked that their use and application are not as widespread as they could be in the field of statistics, particularly statistical inference.[221] They have found applications in areas in probability theory such as queueing theory and Palm calculus[222] and other fields such as economics[223] and finance.[17]

Lévy process

Lévy processes are types of stochastic processes that can be considered as generalizations of random walks in continuous time.[49][224] These processes have many applications in fields such as finance, fluid mechanics, physics and biology.[225][226] The main defining characteristics of these processes are their stationarity and independence properties, so they were known as processes with stationary and independent increments. In other words, a stochastic process $X$ is a Lévy process if for $n$ non-negative numbers, $0\leq t_1\leq\dots\leq t_n$, the corresponding $n-1$ increments

$$X_{t_2}-X_{t_1},\dots,X_{t_n}-X_{t_{n-1}},$$

are all independent of each other, and the distribution of each increment only depends on the difference in time.[49]

A Lévy process can be defined such that its state space is some abstract mathematical space, such as a Banach space, but the processes are often defined so that they take values in Euclidean space. The index set is the non-negative numbers, so $T=[0,\infty)$, which gives the interpretation of time. Important stochastic processes such as the Wiener process, the homogeneous Poisson process (in one dimension), and subordinators are all Lévy processes.[49][224]

Random field

A random field is a collection of random variables indexed by an $n$-dimensional Euclidean space or some manifold. In general, a random field can be considered an example of a stochastic or random process, where the index set is not necessarily a subset of the real line.[30] But there is a convention that an indexed collection of random variables is called a random field when the index has two or more dimensions.[5][28][227] If the specific definition of a stochastic process requires the index set to be a subset of the real line, then the random field can be considered as a generalization of stochastic process.[228]

Point process

A point process is a collection of points randomly located on some mathematical space such as the real line, $n$-dimensional Euclidean space, or more abstract spaces. Sometimes the term point process is not preferred, as historically the word process denoted an evolution of some system in time, so a point process is also called a random point field.[229] There are different interpretations of a point process, such as a random counting measure or a random set.[230][231] Some authors regard a point process and stochastic process as two different objects such that a point process is a random object that arises from or is associated with a stochastic process,[232][233] though it has been remarked that the difference between point processes and stochastic processes is not clear.[233]

Other authors consider a point process as a stochastic process, where the process is indexed by sets of the underlying space[d] on which it is defined, such as the real line or $n$-dimensional Euclidean space.[236][237] Other stochastic processes such as renewal and counting processes are studied in the theory of point processes.[238][233]

History

Early probability theory

Probability theory has its origins in games of chance, which have a long history, with some games being played thousands of years ago,

After Cardano,

Statistical mechanics

In the physical sciences, scientists developed in the 19th century the discipline of statistical mechanics, where physical systems, such as containers filled with gases, are regarded or treated mathematically as collections of many moving particles. Although there were attempts to incorporate randomness into statistical physics by some scientists, such as Rudolf Clausius, most of the work had little or no randomness.[253][254] This changed in 1859 when

Measure theory and probability theory

At the International Congress of Mathematicians in Paris in 1900, David Hilbert presented a list of mathematical problems, where his sixth problem asked for a mathematical treatment of physics and probability involving axioms.[249] Around the start of the 20th century, mathematicians developed measure theory, a branch of mathematics for studying integrals of mathematical functions, where two of the founders were French mathematicians, Henri Lebesgue and Émile Borel. In 1925, another French mathematician Paul Lévy published the first probability book that used ideas from measure theory.[249]

In the 1920s, fundamental contributions to probability theory were made in the Soviet Union by mathematicians such as

Birth of modern probability theory

In 1933, Andrei Kolmogorov published, in German, his book on the foundations of probability theory titled Grundbegriffe der Wahrscheinlichkeitsrechnung,[i] where Kolmogorov used measure theory to develop an axiomatic framework for probability theory. The publication of this book is now widely considered to be the birth of modern probability theory, when the theories of probability and stochastic processes became parts of mathematics.[249][252]

After the publication of Kolmogorov's book, further fundamental work on probability theory and stochastic processes was done by Khinchin and Kolmogorov as well as other mathematicians such as

Decades later, Cramér referred to the 1930s as the "heroic period of mathematical probability theory".[252] World War II greatly interrupted the development of probability theory, causing, for example, the migration of Feller from Sweden to the United States of America[252] and the death of Doeblin, considered now a pioneer in stochastic processes.[262]

Stochastic processes after World War II

After World War II, the study of probability theory and stochastic processes gained more attention from mathematicians, with significant contributions made in many areas of probability and mathematics as well as the creation of new areas.

Also starting in the 1940s, connections were made between stochastic processes, particularly martingales, and the mathematical field of potential theory, with early ideas by Shizuo Kakutani and then later work by Joseph Doob.[265] Further work, considered pioneering, was done by Gilbert Hunt in the 1950s, connecting Markov processes and potential theory, which had a significant effect on the theory of Lévy processes and led to more interest in studying Markov processes with methods developed by Itô.[21][267][268]

In 1953, Doob published his book Stochastic processes, which had a strong influence on the theory of stochastic processes and stressed the importance of measure theory in probability.[265][264] Doob also chiefly developed the theory of martingales, with later substantial contributions by Paul-André Meyer. Earlier work had been carried out by Sergei Bernstein, Paul Lévy and Jean Ville, the latter adopting the term martingale for the stochastic process.[269][270] Methods from the theory of martingales became popular for solving various probability problems. Techniques and theory were developed to study Markov processes and then applied to martingales. Conversely, methods from the theory of martingales were established to treat Markov processes.[265]

Other fields of probability were developed and used to study stochastic processes, with one main approach being the theory of large deviations.

The theory of stochastic processes still continues to be a focus of research, with yearly international conferences on the topic of stochastic processes.[45][225]

Discoveries of specific stochastic processes

Although Khinchin gave mathematical definitions of stochastic processes in the 1930s,[63][260] specific stochastic processes had already been discovered in different settings, such as the Brownian motion process and the Poisson process.[21][24] Some families of stochastic processes such as point processes or renewal processes have long and complex histories, stretching back centuries.[276]

Bernoulli process

The Bernoulli process, which can serve as a mathematical model for flipping a biased coin, is possibly the first stochastic process to have been studied.

Random walks

In 1905, Karl Pearson coined the term random walk while posing a problem describing a random walk on the plane, which was motivated by an application in biology, but such problems involving random walks had already been studied in other fields. Certain gambling problems that were studied centuries earlier can be considered as problems involving random walks.[89][278] For example, the problem known as the Gambler's ruin is based on a simple random walk,[195][279] and is an example of a random walk with absorbing barriers.[242][280] Pascal, Fermat and Huygens all gave numerical solutions to this problem without detailing their methods,[281] and then more detailed solutions were presented by Jakob Bernoulli and Abraham de Moivre.[282]

For random walks in $n$-dimensional integer lattices, George Pólya published, in 1919 and 1921, work where he studied the probability of a symmetric random walk returning to a previous position in the lattice. Pólya showed that a symmetric random walk, which has an equal probability to advance in any direction in the lattice, will return to a previous position in the lattice an infinite number of times with probability one in one and two dimensions, but with probability zero in three or higher dimensions.[283][284]

Wiener process

The

The French mathematician

It is commonly thought that Bachelier's work gained little attention and was forgotten for decades until it was rediscovered in the 1950s by the

In 1905, Albert Einstein published a paper where he studied the physical observation of Brownian motion or movement to explain the seemingly random movements of particles in liquids by using ideas from the kinetic theory of gases. Einstein derived a differential equation, known as a diffusion equation, for describing the probability of finding a particle in a certain region of space. Shortly after Einstein's first paper on Brownian movement, Marian Smoluchowski published work where he cited Einstein, but wrote that he had independently derived the equivalent results by using a different method.[292]

Einstein's work, as well as experimental results obtained by

Poisson process

The Poisson process is named after

Another discovery occurred in

In 1910, Ernest Rutherford and Hans Geiger published experimental results on counting alpha particles. Motivated by their work, Harry Bateman studied the counting problem and derived Poisson probabilities as a solution to a family of differential equations, resulting in the independent discovery of the Poisson process.[22] Many studies and applications of the Poisson process followed, but its early history is complicated, which has been attributed to the use of the process in numerous fields by biologists, ecologists, engineers and various physical scientists.[22]
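The link between a family of differential equations and Poisson probabilities can be checked numerically. The text does not reproduce Bateman's equations, so the sketch below assumes the standard textbook system for the probability P_n(t) of having counted n events by time t, namely dP_n/dt = -rate·P_n + rate·P_(n-1) with P_0(0) = 1. It integrates that system with a hand-written Runge–Kutta scheme and compares the result with the Poisson probability mass function. All function names are illustrative.

```python
import math


def poisson_ode_rhs(p, rate):
    """Right-hand side of dP_n/dt = -rate*P_n + rate*P_(n-1), with P_(-1) = 0."""
    return [
        -rate * p[n] + (rate * p[n - 1] if n > 0 else 0.0)
        for n in range(len(p))
    ]


def integrate_counts(rate, t_end, n_max, steps=1000):
    """Integrate P_0..P_n_max from time 0 to t_end with classical RK4."""
    p = [1.0] + [0.0] * n_max  # no events have been counted at time 0
    h = t_end / steps
    for _ in range(steps):
        k1 = poisson_ode_rhs(p, rate)
        k2 = poisson_ode_rhs([x + 0.5 * h * k for x, k in zip(p, k1)], rate)
        k3 = poisson_ode_rhs([x + 0.5 * h * k for x, k in zip(p, k2)], rate)
        k4 = poisson_ode_rhs([x + h * k for x, k in zip(p, k3)], rate)
        p = [
            x + h / 6.0 * (a + 2.0 * b + 2.0 * c + d)
            for x, a, b, c, d in zip(p, k1, k2, k3, k4)
        ]
    return p


def poisson_pmf(n, mean):
    """Poisson probability of observing exactly n events."""
    return math.exp(-mean) * mean**n / math.factorial(n)
```

Truncating the system at `n_max` does not affect the lower-order equations, since each P_n depends only on P_(n-1); the numerical solution at time t should agree with `poisson_pmf(n, rate * t)`.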

Markov processes

Markov processes and Markov chains are named after

In 1912, Poincaré studied Markov chains on

Lévy processes

Lévy processes such as the Wiener process and the Poisson process (on the real line) are named after Paul Lévy, who started studying them in the 1930s.

Mathematical construction

In mathematics, an object is constructed in order to prove that it exists, and stochastic processes are no exception.[57] There are two main approaches for constructing a stochastic process. One approach involves considering a measurable space of functions, defining a suitable measurable mapping from a probability space to this measurable space of functions, and then deriving the corresponding finite-dimensional distributions.[309]

Another approach involves defining a collection of random variables to have specific finite-dimensional distributions, and then using Kolmogorov's existence theorem[j] to prove a corresponding stochastic process exists.[57][309] This theorem, which is an existence theorem for measures on infinite product spaces,[313] says that if a family of finite-dimensional distributions satisfies two conditions, known as consistency conditions, then there exists a stochastic process with those finite-dimensional distributions.[57]
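The two consistency conditions are commonly stated as follows for a real-valued process. The notation is an assumption of this sketch: the measure with subscripts t_1, ..., t_k denotes the proposed joint distribution of the process at those times, and F_1, ..., F_k are Borel sets of the real line.

```latex
% (1) Permutation invariance: for every permutation \pi of \{1,\dots,k\},
\[
  \mu_{t_{\pi(1)},\dots,t_{\pi(k)}}\bigl(F_{\pi(1)}\times\dots\times F_{\pi(k)}\bigr)
  = \mu_{t_1,\dots,t_k}\bigl(F_1\times\dots\times F_k\bigr)
\]

% (2) Marginalization: for every m \ge 1 and additional times t_{k+1},\dots,t_{k+m},
\[
  \mu_{t_1,\dots,t_k}\bigl(F_1\times\dots\times F_k\bigr)
  = \mu_{t_1,\dots,t_k,t_{k+1},\dots,t_{k+m}}
    \bigl(F_1\times\dots\times F_k\times\mathbb{R}\times\dots\times\mathbb{R}\bigr)
\]
```

The first condition says that relabelling the time points does not change the probabilities, and the second says that ignoring some time points amounts to integrating them out.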

Construction issues

When constructing continuous-time stochastic processes, certain mathematical difficulties arise due to the uncountable index sets, which do not occur with discrete-time processes.[58][59] One problem is that it is possible to have more than one stochastic process with the same finite-dimensional distributions. For example, both the left-continuous modification and the right-continuous modification of a Poisson process have the same finite-dimensional distributions.[314] This means that the distribution of the stochastic process does not necessarily specify uniquely the properties of the sample functions of the stochastic process.[309][315]
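A standard textbook illustration of this phenomenon, different from the Poisson example and using notation assumed here, compares a process that is identically zero with one that equals one at a single uniformly distributed random time:

```latex
% U is uniformly distributed on [0,1]; both processes are indexed by t in [0,1].
\[
  X_t = 0, \qquad
  Y_t = \begin{cases} 1, & t = U, \\ 0, & t \neq U. \end{cases}
\]
% For each fixed t the two processes agree almost surely:
\[
  P(X_t \neq Y_t) = P(U = t) = 0 ,
\]
% so all finite-dimensional distributions coincide, and yet
\[
  \sup_{t\in[0,1]} X_t = 0
  \quad\text{while}\quad
  \sup_{t\in[0,1]} Y_t = 1 .
\]
```

Every sample function of the first process is continuous, whereas every sample function of the second has a discontinuity, even though the two processes cannot be distinguished by looking at finitely many time points.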

Another problem is that functionals of a continuous-time process that rely upon an uncountable number of points of the index set may not be measurable, so the probabilities of certain events may not be well-defined.[168] For example, the supremum of a stochastic process or random field is not necessarily a well-defined random variable.[30][59] For a continuous-time stochastic process X, other characteristics that depend on an uncountable number of points of the index set include:[168]

- a sample function of the stochastic process X is a continuous function of t;

- a sample function of the stochastic process X is a bounded function of t; and

- a sample function of the stochastic process X is an increasing function of t.
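The measurability difficulty can be made concrete for the supremum. In the sketch below, the process X_t indexed by the unit interval and the threshold a are assumed notation:

```latex
% The event that the supremum stays below a is an uncountable intersection,
% which a sigma-algebra is not guaranteed to contain:
\[
  \Bigl\{ \sup_{t\in[0,1]} X_t \le a \Bigr\}
  = \bigcap_{t\in[0,1]} \{ X_t \le a \} .
\]
% Restricting to the rationals gives a countable intersection of events,
% which is always an event:
\[
  \Bigl\{ \sup_{t\in\mathbb{Q}\cap[0,1]} X_t \le a \Bigr\}
  = \bigcap_{t\in\mathbb{Q}\cap[0,1]} \{ X_t \le a \} .
\]
```

When the sample functions are continuous, the two suprema coincide, so the supremum over the whole interval is then a well-defined random variable.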

To overcome these two difficulties, different assumptions and approaches are possible.[69]

Resolving construction issues

One approach for avoiding mathematical construction issues of stochastic processes, proposed by

Another approach is possible, originally developed by

Although less used, the separability assumption is considered more general because every stochastic process has a separable version.[263] It is also used when it is not possible to construct a stochastic process in a Skorokhod space.[173] For example, separability is assumed when constructing and studying random fields, where the collection of random variables is indexed by sets other than the real line, such as n-dimensional Euclidean space.[30][320]

See also

- List of stochastic processes topics

- Covariance function

- Deterministic system

- Dynamics of Markovian particles

- Entropy rate (for a stochastic process)

- Ergodic process

- Gillespie algorithm

- Interacting particle system

- Law (stochastic processes)

- Markov chain

- Stochastic cellular automaton

- Random field

- Randomness

- Stationary process

- Statistical model

- Stochastic calculus

- Stochastic control

- Stochastic parrot

- Stochastic processes and boundary value problems

Notes

- ^ The term Brownian motion can refer to the physical process, also known as Brownian movement, and the stochastic process, a mathematical object, but to avoid ambiguity this article uses the terms Brownian motion process or Wiener process for the latter in a style similar to, for example, Gikhman and Skorokhod[19] or Rosenblatt.[20]

- ^ The term "separable" appears twice here with two different meanings, where the first meaning is from probability and the second from topology and analysis. For a stochastic process to be separable (in a probabilistic sense), its index set must be a separable space (in a topological or analytic sense), in addition to other conditions.[136]

- ^ The definition of separability for a continuous-time real-valued stochastic process can be stated in other ways.[172][173]

- ^ In the context of point processes, the term "state space" can mean the space on which the point process is defined such as the real line,[234][235] which corresponds to the index set in stochastic process terminology.

- ^ Also known as James or Jacques Bernoulli.[245]

- ^ It has been remarked that a notable exception was the St Petersburg School in Russia, where mathematicians led by Chebyshev studied probability theory.[250]

- ^ The name Khinchin is also written in (or transliterated into) English as Khintchine.[63]

- ^ Doob, when citing Khinchin, uses the term 'chance variable', which used to be an alternative term for 'random variable'.[261]

- ^ Later translated into English and published in 1950 as Foundations of the Theory of Probability[249]

- ^ The theorem has other names including Kolmogorov's consistency theorem,[310] Kolmogorov's extension theorem[311] or the Daniell–Kolmogorov theorem.[312]

References

- ^ a b c d e f g h i Joseph L. Doob (1990). Stochastic processes. Wiley. pp. 46, 47.

- ^ ISBN 978-1-107-71749-7.

- ^ ISBN 978-1-4684-9305-4.

- ^ ISBN 978-0-486-79688-8.

- ^ ISBN 978-0-486-69387-3.

- ISBN 978-3-319-08488-6.

- ISBN 978-0-08-047536-3.

- ISBN 978-0-19-852525-7.

- ISBN 978-0-19-923507-0.

- ISBN 978-3-319-00327-6.

- ISBN 978-0-8194-2513-3.

- ISBN 1-886529-03-5.

- ISBN 978-1-118-58577-1.

- ISBN 978-1-4987-6060-7.

- ISBN 978-1-60198-264-3.

- ISBN 978-0-387-95016-7.

- ^ ISBN 978-3-540-26653-2.

- ISBN 978-0-387-40101-0.

- ISBN 978-0-486-69387-3.

- ^ Murray Rosenblatt (1962). Random Processes. Oxford University Press.

- ^ ISSN 0749-2170.

- ^ S2CID 125163415.

- ISBN 978-1-4612-3166-0.

- ^ S2CID 80836.

- ISBN 978-0-387-87862-1.

- ISBN 978-3-540-26312-8.

- ^ ISBN 978-0-387-95313-7.

- ^ ISBN 978-3-540-90275-1.

- ^ ISBN 978-1-107-60655-5.

- ^ ISBN 978-0-387-48116-6.

- ISBN 978-1-139-48876-1.

- ISBN 978-0-521-40605-5.

- ISBN 978-1-107-71749-7.

- ISBN 978-0-521-83263-2.

- ISBN 978-3-642-24939-6.

- ISBN 978-0-89871-693-1.

- ISBN 978-0-08-057041-9.

- ISBN 978-1-316-24124-0.

- ^ ISBN 978-0-89871-425-8.

- ISBN 978-0-387-21337-8.

- ISBN 978-81-265-1771-8.

- ISBN 978-3-319-09590-5.

- ISBN 978-0-521-83166-6.

- ^ Applebaum, David (2004). "Lévy processes: From probability to finance and quantum groups". Notices of the AMS. 51 (11): 1336–1347.

- ^ ISBN 978-3-03719-072-2.

- ISBN 978-3-642-54075-2.

- ISBN 978-3-319-08488-6.

- ^ ISBN 978-0-08-057041-9.

- ^ a b c d e f g h i j Applebaum, David (2004). "Lévy processes: From probability to finance and quantum groups". Notices of the AMS. 51 (11): 1337.

- ^ ISBN 978-1-107-71749-7.

- ^ ISBN 978-1-118-59320-2.

- ^ ISBN 978-0-08-057041-9.

- ISBN 978-1-4612-3166-0.

- ^ ISBN 978-81-265-1771-8.

- ^ ISBN 978-1-4471-5201-9.

- ^ ISBN 978-3-319-09590-5.

- ^ ISBN 978-981-310-165-4.

- ^ ISBN 978-3-662-12616-5.

- ^ ISBN 978-0-387-21631-7.

- ^ a b "Stochastic". Oxford English Dictionary (Online ed.). Oxford University Press. (Subscription or participating institution membership required.)

- ISBN 978-3-938417-40-9.

- ISBN 978-3-89971-812-6.

- ^ PMID 16587907.

- S2CID 122842868.

- S2CID 119439925.

- ^ "Random". Oxford English Dictionary (Online ed.). Oxford University Press. (Subscription or participating institution membership required.)

- ISBN 978-1-4899-2837-5.

- ^ ISBN 978-1-107-71749-7.

- ^ ISBN 978-0-387-00211-8.

- ^ ISBN 978-0-19-856814-8.

- ^ Murray Rosenblatt (1962). Random Processes. Oxford University Press. p. 91.

- ISBN 978-1-139-45717-0.

- ^ ISBN 978-0-8218-3898-3.

- ISBN 978-0-387-90262-3.

- ISBN 978-3-319-09590-5.

- ^ a b Gusak et al. (2010), p. 1

- ISBN 978-1-139-50147-7.

- ^ ISBN 978-3-540-90275-1.

- ISBN 978-1-86094-555-7.

- ^ ISBN 978-1-118-59320-2.

- ^ ISBN 978-1-118-59320-2.

- ^ ISBN 978-1-886529-40-3.

- ISBN 978-1-118-61793-9.

- ISBN 978-1-4471-5362-7.

- ISBN 978-1-139-48876-1.

- ISBN 978-0-387-95313-7.

- ISBN 978-1-118-59320-2.

- ISBN 978-1-139-49113-6.

- ^ ISBN 978-0471667193.

- ISBN 978-0-521-42408-0.

- ^ ISBN 978-1-86094-555-7.

- ISBN 978-1-4614-4708-5.

- ISBN 978-0-19-857222-0.

- ISBN 978-1-86094-555-7.

- S2CID 117623580.

- ^ Applebaum, David (2004). "Lévy processes: From probability to finance and quantum groups". Notices of the AMS. 51 (11): 1338.

- ISBN 978-0-486-69387-3.

- ISBN 978-1-118-59320-2.

- ^ ISBN 978-0-08-057041-9.

- ISBN 978-1-4612-0949-2.

- ISBN 978-3-662-06400-9.

- ISBN 978-981-310-165-4.

- ISBN 978-1-4612-3166-0.

- ISBN 978-1-4684-9305-4.

- ^ ISBN 978-1-139-48657-6.

- ISBN 978-1-4612-0949-2.

- ISBN 978-1-4612-0949-2.

- ISBN 978-0-387-40101-0.

- ISBN 978-0-387-95313-7.

- ISBN 978-1-4612-0949-2.

- ISBN 978-1-139-48657-6.

- ISBN 978-1-86094-555-7.

- ISBN 978-1-4612-0949-2.

- ^ Applebaum, David (2004). "Lévy processes: From probability to finance and quantum groups". Notices of the AMS. 51 (11): 1341.

- ISBN 978-0-08-057041-9.

- ISBN 978-1-86094-555-7.

- ISBN 978-1-4612-0949-2.

- ISBN 978-0-470-02171-2.

- ^ ISBN 978-0-471-49881-0.

- ISBN 978-0-387-21564-8.

- ISBN 978-0-12-381416-6.

- ISBN 978-0-19-159124-2.

- ISBN 978-0-387-21564-8.

- ISBN 978-0-19-159124-2.

- ISBN 978-0-08-057041-9.

- ISBN 978-0-471-49110-1.

- ^ Murray Rosenblatt (1962). Random Processes. Oxford University Press. p. 94.

- ^ ISBN 978-1-107-01469-5.

- ^ ISBN 978-1-118-65825-3.

- ISBN 978-0-19-159124-2.

- ^ ISBN 978-1-4419-6923-1.

- ISBN 978-0-19-159124-2.

- ^ ISBN 978-1-4471-5201-9.

- ISBN 978-1-4665-8618-5.

- S2CID 117171116.

- ^ ISBN 978-3-540-26312-8.

- ISBN 978-1-4612-3166-0.

- ISBN 978-3-540-26312-8.

- ISBN 978-1-118-59320-2.

- ISBN 978-81-265-1771-8.

- ISBN 978-3-540-04758-2.

- ^ ISBN 978-1-139-48721-4.

- ISBN 978-1-4612-0387-2.

- ISBN 978-0-387-21748-2.

- ISBN 978-0-521-83263-2.

- ISBN 978-3-662-06400-9.

- ISBN 978-1-107-71749-7.

- ^ ISBN 978-3-540-90275-1.

- ISBN 978-0-486-69387-3.

- ^ ISBN 978-0-89871-693-1.

- ISBN 978-1-118-65825-3.

- ^ a b Joseph L. Doob (1990). Stochastic processes. Wiley. pp. 94–96.

- ^ ISBN 978-1-118-59320-2.

- ISBN 978-0-486-69387-3.

- ^ ISBN 978-0-521-40605-5.

- ISBN 978-1-86094-555-7.

- ISBN 978-1-139-48657-6.

- ^ ISBN 978-1-107-71749-7.

- ISBN 978-1-4471-5201-9.

- ISBN 978-1-86094-555-7.

- ^ ISBN 978-3-540-04758-2.

- ^ ISBN 978-1-118-59320-2.

- ISBN 978-3-662-06400-9.

- ISBN 978-0-521-83263-2.

- ISBN 978-0-521-59925-2.

- ISBN 978-0-387-95313-7.

- ISBN 978-1-85233-376-8.

- ^ ISBN 978-0-8218-3898-3.

- ISBN 978-0-486-69387-3.

- ^ ISBN 978-1-4613-9742-7.

- ISBN 978-1-85233-892-3.

- ^ ISBN 978-81-265-1771-8.

- ^ ISBN 978-1-4471-5201-9.

- ^ Gusak et al. (2010), p. 22

- ^ Joseph L. Doob (1990). Stochastic processes. Wiley. p. 56.

- ISBN 978-0-387-21631-7.

- ^ Lapidoth, Amos, A Foundation in Digital Communication, Cambridge University Press, 2009.

- ^ a b c Kun Il Park, Fundamentals of Probability and Stochastic Processes with Applications to Communications, Springer, 2018, 978-3-319-68074-3

- ^ ISBN 978-0-387-21748-2.

- ^ a b Gusak et al. (2010), p. 24

- ^ ISBN 978-3-540-34514-5.

- ^ ISBN 978-1-86094-555-7.

- ^ ISBN 978-0-387-00211-8.

- ^ ISBN 978-1-118-62596-5.

- ISBN 978-1-139-50147-7.

- ISBN 978-1-4471-3856-3.

- ISBN 978-1-4471-5201-9.

- ISBN 978-0-387-21631-7.

- ISBN 978-1-4613-9742-7.

- ISBN 978-0-387-21748-2.

- ISBN 978-3-540-89332-5.

- ISBN 978-1-4613-8190-7.

- ISBN 978-0-471-12062-9.

- ISBN 978-1-118-59320-2.

- ^ ISBN 978-0-08-057041-9.

- ^ ISBN 978-0-387-00211-8.

- ISBN 978-0-486-79688-8.

- ISBN 978-0-08-057041-9.

- ISBN 978-3-540-90275-1.

- ISBN 978-0-471-12062-9.

- ISBN 978-0-521-73182-9.

- ISBN 978-0-08-057041-9.

- ISBN 978-1-118-21052-9.

- ISBN 978-1-58488-587-0.

- ISBN 978-1-4613-8190-7.

- ISBN 978-1-4612-3166-0.

- ISBN 978-1-4757-3124-8.

- ^ ISBN 978-1-86094-555-7.

- ^ ISBN 978-1-4612-0949-2.

- ^ Joseph L. Doob (1990). Stochastic processes. Wiley. pp. 292, 293.

- ISBN 978-1-316-67946-3.

- ^ ISBN 978-1-4684-9305-4.

- ^ ISBN 978-1-4832-6322-9.

- ISBN 978-1-4684-9305-4.

- ISBN 978-0-471-12062-9.

- ^ ISBN 978-1-4684-9305-4.

- ISBN 978-0-387-95313-7.

- ISBN 978-1-4684-9305-4.

- ISBN 978-1-4684-9305-4.

- ISBN 978-0-19-857222-0.

- S2CID 62552177.

- ISBN 978-3-662-11657-9.

- ISBN 978-1-4832-6322-9.

- ^ ISBN 978-0-521-64632-1.

- ^ a b c Applebaum, David (2004). "Lévy processes: From probability to finance and quantum groups". Notices of the AMS. 51 (11): 1336.

- ISBN 978-0-521-83263-2.

- ISBN 978-3-540-68829-7.

- ISBN 978-0-521-83263-2.

- ISBN 978-1-118-65825-3.

- ISBN 978-1-118-65825-3.

- ISBN 978-1-107-01469-5.

- ISBN 978-0-387-21564-8.

- ^ ISBN 978-0-412-21910-8.

- ISBN 978-0-19-159124-2.

- ISBN 978-0-203-49693-0.

- ISBN 978-0-08-057041-9.

- ISBN 978-3-319-10064-7.

- ISBN 978-0-387-21564-8.

- ^ ISBN 978-1-119-38755-8.

- JSTOR 2333419.

- ISBN 978-1-4832-1863-2.

- ^ ISBN 978-0471667193.

- ISBN 978-0-8160-6873-9.

- ISSN 0315-0860.

- ISBN 978-0-471-72517-6.

- ISBN 978-1-4832-1863-2.

- ISBN 978-0-8160-6873-9.

- ^ JSTOR 2589523.

- ^ ISSN 0006-3444.

- ^ S2CID 118011380.

- JSTOR 2974673.

- ^ ISSN 0091-1798.

- S2CID 189764116.

- S2CID 120059181.

- S2CID 189764116.

- ISSN 0003-3790.

- S2CID 117623580.

- ^ ISSN 0024-6093.

- ISBN 978-0471667193.

- ^ ISBN 978-0471667193.

- ^ ISSN 0021-9002.

- ISSN 0091-1798.

- ^ S2CID 17288507.

- ^ ISSN 0021-9002.

- ^ a b c d e Meyer, Paul-André (2009). "Stochastic Processes from 1950 to the Present". Electronic Journal for History of Probability and Statistics. 5 (1): 1–42.

- ^ "Kiyosi Itô receives Kyoto Prize". Notices of the AMS. 45 (8): 981–982. 1998.

- ISBN 978-0-521-64632-1.

- ISBN 978-1-4684-9305-4.

- ISBN 978-1-4832-6322-9.

- ISSN 0091-1798.

- ISSN 0346-1238.

- ^ Raussen, Martin; Skau, Christian (2008). "Interview with Srinivasa Varadhan". Notices of the AMS. 55 (2): 238–246.

- ISBN 978-3-642-27933-1.

- ^ "2006 Fields Medals Awarded". Notices of the AMS. 53 (9): 1041–1044. 2006.

- ^ Quastel, Jeremy (2015). "The Work of the 2014 Fields Medalists". Notices of the AMS. 62 (11): 1341–1344.

- ISBN 978-0-387-21564-8.

- ISBN 978-0-471-72517-6.

- ^ ISBN 978-0-444-86806-0.

- ISBN 978-1-118-59320-2.

- ISBN 978-1-118-61793-9.

- ISBN 978-0-471-72517-6.

- ISBN 978-0-471-72517-6.

- ISBN 978-1-118-59320-2.

- ISBN 978-0-19-853788-5.

- ^ Thiele, Thorwald N. (1880). "Om Anvendelse af mindste Kvadraters Methode i nogle Tilfælde, hvor en Komplikation af visse Slags uensartede tilfældige Fejlkilder giver Fejlene en "systematisk" Karakter". Kongelige Danske Videnskabernes Selskabs Skrifter. Series 5 (12): 381–408.

- JSTOR 1403034.

- ^ JSTOR 1402616.

- (PDF) from the original on 2011-06-05.

- .

- ^ (PDF) from the original on 2018-07-21.

- ^ (PDF) from the original on 2018-07-21.

- S2CID 117623580.

- S2CID 117623580.

- ISBN 978-0-387-21564-8.

- ISSN 0169-7161.

- ISSN 0346-1238.

- ^ ISBN 978-0-8218-0749-1.

- ^ ISBN 978-1-4757-3124-8.

- ^ .

- ISBN 978-1-119-38755-8.

- JSTOR 1403518.

- ISBN 978-0-387-95283-3.

- ISBN 978-1-4471-7262-8.

- ISBN 978-3-540-26312-8.

- ISSN 0002-9505.

- ISBN 978-1-4612-3038-0.

- ISSN 0024-6093.

- ISBN 978-0-521-83263-2.

- ^ ISBN 978-0-89871-693-1.

- ISBN 978-0-387-32903-1.

- ISBN 978-3-540-04758-2.

- ISBN 978-0-521-40605-5.

- ISBN 978-1-139-49113-6.

- ISBN 978-81-265-1771-8.

- ISBN 978-1-4471-5201-9.

- ISBN 978-0-387-32903-1.

- ^ ISBN 978-0-387-48116-6.

- ISBN 978-0-387-32903-1.

- ISBN 978-1-4471-5201-9.

- ISBN 978-1-118-72062-2.

Further reading

Articles

- Applebaum, David (2004). "Lévy processes: From probability to finance and quantum groups". Notices of the AMS. 51 (11): 1336–1347.

- Cramer, Harald (1976). "Half a Century with Probability Theory: Some Personal Recollections". The Annals of Probability. 4 (4): 509–546. ISSN 0091-1798.

- Guttorp, Peter; Thorarinsdottir, Thordis L. (2012). "What Happened to Discrete Chaos, the Quenouille Process, and the Sharp Markov Property? Some History of Stochastic Point Processes". International Statistical Review. 80 (2): 253–268. S2CID 80836.

- Jarrow, Robert; Protter, Philip (2004). "A short history of stochastic integration and mathematical finance: the early years, 1880–1970". A Festschrift for Herman Rubin. Institute of Mathematical Statistics Lecture Notes - Monograph Series. pp. 75–91. ISSN 0749-2170.

- Meyer, Paul-André (2009). "Stochastic Processes from 1950 to the Present". Electronic Journal for History of Probability and Statistics. 5 (1): 1–42.

Books

- Robert J. Adler (2010). The Geometry of Random Fields. SIAM. ISBN 978-0-89871-693-1.

- Robert J. Adler; Jonathan E. Taylor (2009). Random Fields and Geometry. Springer Science & Business Media. ISBN 978-0-387-48116-6.

- Pierre Brémaud (2013). Markov Chains: Gibbs Fields, Monte Carlo Simulation, and Queues. Springer Science & Business Media. ISBN 978-1-4757-3124-8.

- Joseph L. Doob (1990). Stochastic processes. Wiley.

- Anders Hald (2005). A History of Probability and Statistics and Their Applications before 1750. John Wiley & Sons. ISBN 978-0-471-72517-6.

- ISBN 978-3-540-70712-7.

- Iosif I. Gikhman; Anatoly Vladimirovich Skorokhod (1996). Introduction to the Theory of Random Processes. Courier Corporation. ISBN 978-0-486-69387-3.

- ISBN 978-0-486-79688-8.

- Murray Rosenblatt (1962). Random Processes. Oxford University Press.

External links

Media related to Stochastic processes at Wikimedia Commons
