Transpose

In linear algebra, the transpose of a matrix is an operator which flips a matrix over its diagonal; that is, it switches the row and column indices of the matrix A by producing another matrix, often denoted by $A^\mathsf{T}$ (among other notations).[1]

The transpose of a matrix was introduced in 1858 by the British mathematician Arthur Cayley.[2] In the case of a logical matrix representing a binary relation R, the transpose corresponds to the converse relation $R^\mathsf{T}$.

Transpose of a matrix

Definition

The transpose of a matrix A, denoted by $A^\mathsf{T}$,[3] ${}^{\top}\!A$, $A^{\top}$, $A^{\intercal}$,[4][5] $A'$,[6] $A^{\mathrm{tr}}$, ${}^{t}\!A$ or $A^{t}$, may be constructed by any one of the following methods:

- Reflect A over its main diagonal (which runs from top-left to bottom-right) to obtain $A^\mathsf{T}$

- Write the rows of A as the columns of $A^\mathsf{T}$

- Write the columns of A as the rows of $A^\mathsf{T}$

Formally, the i-th row, j-th column element of $A^\mathsf{T}$ is the j-th row, i-th column element of A:

$$\left[A^\mathsf{T}\right]_{ij} = \left[A\right]_{ji}.$$

If A is an m × n matrix, then $A^\mathsf{T}$ is an n × m matrix.
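As a minimal sketch, assuming a matrix is represented as a Python list of equal-length rows (a representation chosen here for illustration; the function name `transpose` is hypothetical), the entrywise rule translates directly into code:

```python
def transpose(a):
    """Return the transpose of a matrix given as a list of rows.

    Entry (i, j) of the result is entry (j, i) of the input, so an
    m x n input yields an n x m output.
    """
    if not a:
        return []
    m, n = len(a), len(a[0])
    return [[a[j][i] for j in range(m)] for i in range(n)]


a = [[1, 2, 3],
     [4, 5, 6]]          # a 2 x 3 matrix
print(transpose(a))      # [[1, 4], [2, 5], [3, 6]], a 3 x 2 matrix
```

An equivalent idiom is `[list(row) for row in zip(*a)]`: `zip(*a)` groups the j-th entries of every row together, which is exactly the j-th column of A.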

In the case of square matrices, $A^\mathsf{T}$ may also denote the Tth power of the matrix A. To avoid possible confusion, many authors use left superscripts, that is, they denote the transpose as ${}^\mathsf{T}\!A$. An advantage of this notation is that no parentheses are needed when exponents are involved: since $\left({}^\mathsf{T}\!A\right)^n = {}^\mathsf{T}\!\left(A^n\right)$, the notation ${}^\mathsf{T}\!A^n$ is not ambiguous.

In this article this confusion is avoided by never using the symbol T as a variable name.

Matrix definitions involving transposition

A square matrix whose transpose is equal to itself is called a symmetric matrix; that is, A is symmetric if

$$A^\mathsf{T} = A.$$

A square matrix whose transpose is equal to its negative is called a skew-symmetric matrix; that is, A is skew-symmetric if

$$A^\mathsf{T} = -A.$$

A square complex matrix whose transpose is equal to the matrix with every entry replaced by its complex conjugate (denoted here with an overline) is called a Hermitian matrix (equivalent to the matrix being equal to its conjugate transpose); that is, A is Hermitian if

$$A^\mathsf{T} = \overline{A}.$$

A square complex matrix whose transpose is equal to the negation of its complex conjugate is called a skew-Hermitian matrix; that is, A is skew-Hermitian if

$$A^\mathsf{T} = -\overline{A}.$$

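A square matrix whose transpose is equal to its inverse is called an orthogonal matrix; that is, A is orthogonal if

$$A^\mathsf{T} = A^{-1}.$$

A square complex matrix whose transpose is equal to its conjugate inverse is called a unitary matrix; that is, A is unitary if

$$A^\mathsf{T} = \overline{A^{-1}}.$$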
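These definitions can be tested mechanically. The sketch below (helper names hypothetical, matrices as lists of rows) compares a matrix's transpose with the expression each definition requires. The orthogonal and unitary tests use the equivalent conditions $A^\mathsf{T}A = I$ and $\overline{A}^\mathsf{T}A = I$, so no inverse has to be computed. It uses exact equality, which suits the small integer and Gaussian-integer examples here; floating-point data would need a tolerance.

```python
def transpose(a):
    return [list(row) for row in zip(*a)]


def conjugate(a):
    return [[complex(x).conjugate() for x in row] for row in a]


def negate(a):
    return [[-x for x in row] for row in a]


def matmul(a, b):
    cols = transpose(b)
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]


def identity(n):
    return [[1 if i == j else 0 for j in range(n)] for i in range(n)]


def is_symmetric(a):
    return transpose(a) == a


def is_skew_symmetric(a):
    return transpose(a) == negate(a)


def is_hermitian(a):
    return transpose(a) == conjugate(a)


def is_skew_hermitian(a):
    return transpose(a) == negate(conjugate(a))


def is_orthogonal(a):
    # For a square matrix, A^T = A^-1 is equivalent to A^T A = I.
    return matmul(transpose(a), a) == identity(len(a))


def is_unitary(a):
    # A^T = conj(A^-1) is equivalent to conj(A)^T A = I.
    return matmul(transpose(conjugate(a)), a) == identity(len(a))


R = [[0, -1], [1, 0]]            # rotation by 90 degrees
S = [[2, 1 - 1j], [1 + 1j, 3]]
U = [[0, 1j], [1j, 0]]

print(is_orthogonal(R), is_skew_symmetric(R), is_symmetric(R))  # True True False
print(is_hermitian(S))                                           # True
print(is_unitary(U), is_skew_hermitian(U), is_hermitian(U))      # True True False
```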

Examples
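For instance, transposing a row vector, a square matrix and a rectangular matrix (values chosen here purely for illustration) turns each row into the corresponding column:

$$\begin{bmatrix} 1 & 2 \end{bmatrix}^\mathsf{T} = \begin{bmatrix} 1 \\ 2 \end{bmatrix}, \qquad \begin{bmatrix} 1 & 2 \\ 3 & 4 \end{bmatrix}^\mathsf{T} = \begin{bmatrix} 1 & 3 \\ 2 & 4 \end{bmatrix}, \qquad \begin{bmatrix} 1 & 2 \\ 3 & 4 \\ 5 & 6 \end{bmatrix}^\mathsf{T} = \begin{bmatrix} 1 & 3 & 5 \\ 2 & 4 & 6 \end{bmatrix}$$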

Properties

Let A and B be matrices and c be a scalar.

- $\left(A^\mathsf{T}\right)^\mathsf{T} = A$. The operation of taking the transpose is an involution (self-inverse).

- $(A + B)^\mathsf{T} = A^\mathsf{T} + B^\mathsf{T}$. The transpose respects addition.

- $(cA)^\mathsf{T} = cA^\mathsf{T}$. The transpose of a scalar is the same scalar. Together with the preceding property, this implies that the transpose is a linear map from the space of m × n matrices to the space of n × m matrices.

- $(AB)^\mathsf{T} = B^\mathsf{T}A^\mathsf{T}$. The order of the factors reverses. By induction, this result extends to the general case of multiple matrices: $(A_1A_2\cdots A_{k-1}A_k)^\mathsf{T} = A_k^\mathsf{T}A_{k-1}^\mathsf{T}\cdots A_2^\mathsf{T}A_1^\mathsf{T}$.

- $\det\left(A^\mathsf{T}\right) = \det(A)$. The determinant of a square matrix is the same as the determinant of its transpose.

- The dot product of two column vectors a and b can be computed as the single entry of the matrix product $a \cdot b = a^\mathsf{T}b$.

- If A has only real entries, then $A^\mathsf{T}A$ is a positive-semidefinite matrix.

- $\left(A^\mathsf{T}\right)^{-1} = \left(A^{-1}\right)^\mathsf{T}$. The transpose of an invertible matrix is also invertible, and its inverse is the transpose of the inverse of the original matrix: applying the product rule to $AA^{-1} = I$ gives $\left(A^{-1}\right)^\mathsf{T}A^\mathsf{T} = I$. Consequently, a square matrix A is invertible if and only if $A^\mathsf{T}$ is invertible. The notation $A^{-\mathsf{T}}$ is sometimes used to represent either of these equivalent expressions.

- If A is a square matrix, then its eigenvalues are equal to the eigenvalues of its transpose, since they share the same characteristic polynomial.

- Over any field k, a square matrix A is similar to $A^\mathsf{T}$.

- This implies that A and $A^\mathsf{T}$ have the same invariant factors, which implies they share the same minimal polynomial, characteristic polynomial, and eigenvalues, among other properties.

- A proof of this property uses the following two observations.

- Let A and B be square matrices of the same size over some base field k, and let L be a field extension of k. If A and B are similar as matrices over L, then they are similar over k. In particular this applies when L is the algebraic closure of k.

- If A is a matrix over an algebraically closed field in Jordan normal form with respect to some basis, then A is similar to $A^\mathsf{T}$. This further reduces to proving the same fact when A is a single Jordan block, which is a straightforward exercise.
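The identities above can be spot-checked on small examples. The sketch below assumes the list-of-rows representation and hand-picked matrices; the helper names are hypothetical, the determinant uses naive cofactor expansion that is only suitable for tiny matrices, and passing checks on examples are of course not a proof. The last check illustrates the Jordan-block step: a single Jordan block J satisfies $PJP = J^\mathsf{T}$, where P is the reversal permutation (its own inverse).

```python
from fractions import Fraction


def transpose(a):
    return [list(row) for row in zip(*a)]


def add(a, b):
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def scale(c, a):
    return [[c * x for x in row] for row in a]


def matmul(a, b):
    cols = transpose(b)
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]


def det(a):
    # Cofactor expansion along the first row (exponential time; tiny matrices only).
    if len(a) == 1:
        return a[0][0]
    return sum((-1) ** j * a[0][j] * det([row[:j] + row[j + 1:] for row in a[1:]])
               for j in range(len(a)))


def inverse_2x2(a):
    # Exact inverse of an invertible 2 x 2 matrix using Fractions.
    (p, q), (r, s) = a
    d = Fraction(det(a))
    return [[s / d, -q / d], [-r / d, p / d]]


A = [[1, 2], [3, 4]]
B = [[0, 1], [5, -2]]
c = 7

assert transpose(transpose(A)) == A
assert transpose(add(A, B)) == add(transpose(A), transpose(B))
assert transpose(scale(c, A)) == scale(c, transpose(A))
assert transpose(matmul(A, B)) == matmul(transpose(B), transpose(A))
assert det(transpose(A)) == det(A)
assert transpose(inverse_2x2(A)) == inverse_2x2(transpose(A))

# Dot product as a 1 x 1 matrix product a^T b.
a_col, b_col = [[1], [2], [3]], [[4], [5], [6]]
assert matmul(transpose(a_col), b_col) == [[32]]

# Spot check (not a proof) that x^T (A^T A) x >= 0 for real A.
G = matmul(transpose(A), A)
for x in ([[1], [0]], [[1], [-1]], [[-3], [2]]):
    assert matmul(matmul(transpose(x), G), x)[0][0] >= 0

# A Jordan block is similar to its transpose via the reversal permutation P.
lam = 5
J = [[lam, 1, 0], [0, lam, 1], [0, 0, lam]]
P = [[0, 0, 1], [0, 1, 0], [1, 0, 0]]
assert matmul(matmul(P, J), P) == transpose(J)
```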

Products

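If A is an m × n matrix and $A^\mathsf{T}$ is its transpose, then multiplying these two matrices gives two square matrices: $AA^\mathsf{T}$ is m × m and $A^\mathsf{T}A$ is n × n. Furthermore, both products are symmetric matrices.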

A quick proof of the symmetry of A AT results from the fact that it is its own transpose:

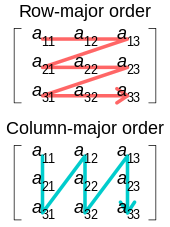
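The symmetry is also visible entrywise: entry (i, j) of $AA^\mathsf{T}$ is the dot product of rows i and j of A, and entry (i, j) of $A^\mathsf{T}A$ is the dot product of columns i and j, so swapping i and j leaves each entry unchanged. A minimal sketch with an illustrative 2 × 3 matrix (helper names hypothetical):

```python
def transpose(a):
    return [list(row) for row in zip(*a)]


def matmul(a, b):
    cols = transpose(b)
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]


A = [[1, 2, 3],
     [4, 5, 6]]                     # 2 x 3

AAt = matmul(A, transpose(A))       # 2 x 2: dot products of pairs of rows of A
AtA = matmul(transpose(A), A)       # 3 x 3: dot products of pairs of columns of A

print(AAt)   # [[14, 32], [32, 77]]
print(AtA)   # [[17, 22, 27], [22, 29, 36], [27, 36, 45]]
```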

Implementation of matrix transposition on computers

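On a computer, one can often avoid explicitly transposing a matrix in memory by simply accessing the same data in a different order.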

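However, there remain a number of circumstances in which it is necessary or desirable to physically reorder a matrix in memory to its transposed ordering. For example, with a matrix stored in row-major order, the rows of the matrix are contiguous in memory and the columns are not; if many operations must be performed on the columns, transposing the matrix in memory (so that the columns become contiguous) can improve performance by increasing memory locality.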
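The difference between reinterpreting and physically reordering can be sketched with a flat, row-major Python list standing in for memory (all function names here are hypothetical). Reading the transpose only changes the index formula, from i·n + j to j·n + i, while an explicit out-of-place transpose copies every element to the position it occupies in the n × m row-major layout.

```python
def get(data, n_cols, i, j):
    """Element (i, j) of a matrix stored row-major with n_cols columns."""
    return data[i * n_cols + j]


def get_transposed(data, n_cols, i, j):
    """Element (i, j) of the transpose, read from the original buffer without moving data."""
    return data[j * n_cols + i]


def transpose_copy(data, m, n):
    """Physically reorder an m x n row-major buffer into its n x m transpose (out of place)."""
    out = [None] * (m * n)
    for i in range(m):
        for j in range(n):
            out[j * m + i] = data[i * n + j]
    return out


data = [1, 2, 3, 4, 5, 6]        # the 2 x 3 matrix [[1, 2, 3], [4, 5, 6]]
t = transpose_copy(data, 2, 3)
print(t)                         # [1, 4, 2, 5, 3, 6]: former columns are now contiguous
```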

Ideally, one might hope to transpose a matrix with minimal additional storage. This leads to the problem of transposing an n × m matrix in place, using additional storage much smaller than the matrix itself.
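One classic approach is cycle following, sketched below under the assumption that the matrix is a row-major flat Python list (the function name is hypothetical). In an m × n row-major array of length mn, the element (i, j) at index k = in + j belongs at index jm + i in the transposed n × m layout, and since km = i(mn) + jm ≡ jm + i (mod mn − 1), every index 0 < k < mn − 1 moves to km mod (mn − 1). This permutation splits into cycles, each of which can be rotated with a single temporary value. To avoid a "visited" array, this version rotates a cycle only from its smallest index, which keeps extra storage O(1) but may take time up to quadratic in mn; more elaborate algorithms reduce this cost.

```python
def transpose_in_place(data, m, n):
    """Transpose an m x n row-major matrix stored in the flat list `data`, in place."""
    size = m * n
    if size <= 2:
        return  # 1 x 1, 1 x 2, 2 x 1 (or empty): the row-major layout is unchanged
    modulus = size - 1
    for start in range(1, modulus):
        # Walk the cycle containing `start`; only process it if `start` is its smallest index.
        k = (start * m) % modulus
        while k > start:
            k = (k * m) % modulus
        if k < start:
            continue
        # Rotate the cycle: each element moves from index k to index (k * m) % modulus.
        value = data[start]
        k = start
        while True:
            dest = (k * m) % modulus
            data[dest], value = value, data[dest]
            k = dest
            if k == start:
                break


data = [1, 2, 3, 4, 5, 6]        # 2 x 3 matrix [[1, 2, 3], [4, 5, 6]], row-major
transpose_in_place(data, 2, 3)
print(data)                      # [1, 4, 2, 5, 3, 6], i.e. [[1, 4], [2, 5], [3, 6]]
```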

Transposes of linear maps and bilinear forms

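As the main use of matrices is to represent linear maps between finite-dimensional vector spaces, the transpose of a matrix may be seen as the representation of some operation on linear maps.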

This leads to a much more general definition of the transpose that works on every linear map, even when linear maps cannot be represented by matrices (such as in the case of infinite-dimensional vector spaces). In the finite-dimensional case, the matrix representing the transpose of a linear map is the transpose of the matrix representing the linear map, independently of the basis choice.

Transpose of a linear map

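Let X# denote the algebraic dual space of a module X over a ring R. If u : X → Y is a linear map between R-modules X and Y, then its transpose (also called its algebraic adjoint or dual) is the map u# : Y# → X# defined by f ↦ f ∘ u; the functional u#(f) is called the pullback of f by u. The transpose is characterized by the relation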

- ⟨u#(f), x⟩ = ⟨f, u(x)⟩ for all f ∈ Y# and x ∈ X

where ⟨•, •⟩ is the natural pairing, defined by ⟨h, z⟩ := h(z).

The definition of the transpose may be seen to be independent of any bilinear form on the modules, unlike the adjoint (below).

The

If the matrix A describes a linear map with respect to bases of X and Y, then the matrix $A^\mathsf{T}$ describes the transpose of that linear map with respect to the dual bases.
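In coordinates this can be checked directly. A minimal sketch, assuming finite-dimensional spaces with fixed bases, vectors given by coordinate lists, and functionals given by their coefficients in the dual basis, so that the pairing ⟨f, v⟩ is the ordinary sum of products; the helper names and values are hypothetical. Multiplying the coefficients of f by $A^\mathsf{T}$ yields the coefficients of the pullback u#(f) = f ∘ u.

```python
def transpose(a):
    return [list(row) for row in zip(*a)]


def apply(a, v):
    """Coordinates of u(v) when u has matrix a."""
    return [sum(x * y for x, y in zip(row, v)) for row in a]


def pair(f, v):
    """Natural pairing <f, v> = f(v), with f given by its dual-basis coefficients."""
    return sum(fi * vi for fi, vi in zip(f, v))


A = [[1, 2, 0],
     [-1, 3, 4]]      # u : X -> Y with dim X = 3, dim Y = 2
f = [5, -2]           # a functional on Y
x = [1, 0, 2]         # a vector in X

pullback = apply(transpose(A), f)                 # coefficients of u#(f) = f ∘ u
print(pullback)                                   # [7, 4, -8]
print(pair(pullback, x), pair(f, apply(A, x)))    # -9 -9
```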

Transpose of a bilinear form

Every linear map to the dual space u : X → X# defines a bilinear form B : X × X → F, with the relation B(x, y) = u(x)(y). By defining the transpose of this bilinear form as the bilinear form tB defined by the transpose tu : X## → X#, that is, tB(y, x) = tu(Ψ(y))(x), we find that B(x, y) = tB(y, x). Here, Ψ is the natural homomorphism X → X## into the double dual.
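In coordinates, suppose B(x, y) = xᵀMy for some square matrix M (which holds once a basis is fixed in the finite-dimensional case; M and the values below are illustrative assumptions). Then tB has matrix Mᵀ, because yᵀMᵀx is a scalar and therefore equals its own transpose xᵀMy. A minimal check (helper names hypothetical):

```python
def transpose(a):
    return [list(row) for row in zip(*a)]


def bilinear(m, x, y):
    """B(x, y) = x^T M y for the bilinear form with matrix M."""
    return sum(x[i] * m[i][j] * y[j] for i in range(len(x)) for j in range(len(y)))


M = [[1, 2], [0, 3]]     # matrix of B
Mt = transpose(M)        # matrix of the transposed form tB

x, y = [1, -1], [2, 5]
print(bilinear(M, x, y), bilinear(Mt, y, x))   # -3 -3
```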

Adjoint

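If the vector spaces X and Y have respectively nondegenerate bilinear forms $B_X$ and $B_Y$, a concept closely related to the transpose, called the adjoint, may be defined.

If u : X → Y is a linear map between vector spaces X and Y, we define g as the adjoint of u if g : Y → X satisfies

$$B_X\big(x, g(y)\big) = B_Y\big(u(x), y\big)$$

for all x ∈ X and y ∈ Y.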

These bilinear forms define an isomorphism between X and X#, and between Y and Y#, resulting in an isomorphism between the transpose and adjoint of u. The matrix of the adjoint of a map is the transposed matrix only if the bases are orthonormal with respect to their bilinear forms. In this context, however, many authors use the term transpose to refer to the adjoint as defined here.
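The role of orthonormality can be made concrete. Assume coordinates in which $B_X(x, x') = x^\mathsf{T}Gx'$ and $B_Y(y, y') = y^\mathsf{T}Hy'$ for invertible Gram matrices G and H, and let A be the matrix of u. Requiring $B_X(x, Cy) = B_Y(Ax, y)$ for all x and y gives $GC = A^\mathsf{T}H$, so the adjoint has matrix $C = G^{-1}A^\mathsf{T}H$, which reduces to $A^\mathsf{T}$ when G and H are identity matrices (orthonormal bases). A minimal sketch with illustrative values and hypothetical helper names:

```python
from fractions import Fraction


def transpose(a):
    return [list(row) for row in zip(*a)]


def matmul(a, b):
    cols = transpose(b)
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]


def apply(a, v):
    return [sum(x * y for x, y in zip(row, v)) for row in a]


def form(gram, x, y):
    """Bilinear form x^T G y given by the Gram matrix G."""
    return sum(x[i] * gram[i][j] * y[j] for i in range(len(x)) for j in range(len(y)))


def inverse_2x2(a):
    (p, q), (r, s) = a
    d = Fraction(p * s - q * r)
    return [[s / d, -q / d], [-r / d, p / d]]


G = [[2, 1], [1, 1]]                     # Gram matrix of B_X on the 2-dimensional X (basis not orthonormal)
H = [[1, 0, 0], [0, 2, 0], [0, 0, 3]]    # Gram matrix of B_Y on the 3-dimensional Y
A = [[1, 2], [0, -1], [3, 1]]            # matrix of u : X -> Y

C = matmul(matmul(inverse_2x2(G), transpose(A)), H)   # matrix of the adjoint g : Y -> X

for x in ([1, 0], [0, 1], [2, -3]):
    for y in ([1, 0, 0], [0, 1, 0], [0, 0, 1], [1, -1, 2]):
        assert form(G, x, apply(C, y)) == form(H, apply(A, x), y)

print(C == transpose(A))   # False: with these forms the adjoint's matrix is not A^T

# With identity Gram matrices (orthonormal bases) the same formula gives A^T.
I2 = [[1, 0], [0, 1]]
I3 = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
assert matmul(matmul(inverse_2x2(I2), transpose(A)), I3) == transpose(A)
```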

The adjoint allows us to consider whether g : Y → X is equal to $u^{-1}$ : Y → X. In particular, this allows the orthogonal group over a vector space X with a quadratic form to be defined without reference to matrices (nor the components thereof) as the set of all linear maps X → X for which the adjoint equals the inverse.

Over a complex vector space, one often works with sesquilinear forms (conjugate-linear in one argument) instead of bilinear forms. The Hermitian adjoint of a map between such spaces is defined similarly, and the matrix of the Hermitian adjoint is given by the conjugate transpose matrix if the bases are orthonormal.
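As an illustration, assume the standard inner product on ℂⁿ, linear in the first argument and conjugate-linear in the second; with respect to the standard (orthonormal) basis, the Hermitian adjoint of a matrix A is its conjugate transpose, so ⟨Ax, y⟩ = ⟨x, Aᴴy⟩. The values and helper names below are hypothetical; small Gaussian-integer entries keep the floating-point arithmetic exact.

```python
def conj_transpose(a):
    """Conjugate transpose: transpose, then conjugate every entry."""
    return [[z.conjugate() for z in col] for col in zip(*a)]


def apply(a, v):
    return [sum(x * y for x, y in zip(row, v)) for row in a]


def inner(u, v):
    """Standard inner product on C^n: linear in u, conjugate-linear in v."""
    return sum(ui * vi.conjugate() for ui, vi in zip(u, v))


A = [[1 + 2j, 3],
     [-1j, 2 - 1j]]
x = [1 - 1j, 2]
y = [3j, 1 + 1j]

lhs = inner(apply(A, x), y)                   # <A x, y>
rhs = inner(x, apply(conj_transpose(A), y))   # <x, A^H y>
print(lhs, rhs)   # (3-33j) (3-33j)
```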

See also

- Cofactor matrix

- Conjugate transpose

- Moore–Penrose pseudoinverse

- Projection (linear algebra)

References

- ^ Nykamp, Duane. "The transpose of a matrix". Math Insight. Retrieved September 8, 2020.

- ^ Arthur Cayley (1858) "A memoir on the theory of matrices", Philosophical Transactions of the Royal Society of London, 148 : 17–37. The transpose (or "transposition") is defined on page 31.

- ISBN 978-0-7514-0159-2.

- ^ "Transpose of a Matrix Product (ProofWiki)". ProofWiki. Retrieved 4 Feb 2021.

- ^ "What is the best symbol for vector/matrix transpose?". Stack Exchange. Retrieved 4 Feb 2021.

- ^ Weisstein, Eric W. "Transpose". mathworld.wolfram.com. Retrieved 2020-09-08.

- ISBN 0-03-010567-6

- ^ Schaefer & Wolff 1999, p. 128.

- ^ Halmos 1974, §44

- ^ Bourbaki 1989, II §2.5

- ^ Trèves 2006, p. 240.


External links

- Gilbert Strang (Spring 2010) Linear Algebra from MIT Open Courseware
