IEEE 754-1985


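IEEE 754-1985 represents numbers in binary, providing definitions for four levels of precision, of which the two most commonly used are: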

| Level | Width | Range at full precision | Precision[a] |
|---|---|---|---|
| Single precision | 32 bits | $\pm 1.18 \times 10^{-38}$ to $\pm 3.4 \times 10^{38}$ | Approximately 7 decimal digits |
| Double precision | 64 bits | $\pm 2.23 \times 10^{-308}$ to $\pm 1.80 \times 10^{308}$ | Approximately 16 decimal digits |
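The ranges in this table follow from the field widths: 8 exponent bits and 23 stored fraction bits for single precision, 11 and 52 for double precision. As a rough sketch (the function name format_limits is hypothetical), the limits can be derived from those widths in Python, whose float type is an IEEE 754 double on the platforms CPython commonly runs on. The decimal-digit figure uses the same significand-bits × log10(2) rule as the note on precision.

```python
import math
import sys


def format_limits(fraction_bits: int, exponent_bits: int) -> dict:
    """Derive key limits of an IEEE 754 binary format from its field widths."""
    bias = 2 ** (exponent_bits - 1) - 1       # 127 for single, 1023 for double
    emax = bias                               # largest exponent of a finite number
    emin = 1 - bias                           # smallest exponent of a normal number
    return {
        "bias": bias,
        "min_normal": 2.0 ** emin,
        "min_denormal": 2.0 ** (emin - fraction_bits),
        "max_finite": (2.0 - 2.0 ** -fraction_bits) * 2.0 ** emax,
        # The significand has one more bit than the stored fraction (the implicit 1).
        "decimal_digits": (fraction_bits + 1) * math.log10(2),
    }


single = format_limits(fraction_bits=23, exponent_bits=8)
double = format_limits(fraction_bits=52, exponent_bits=11)

# Cross-check the double-precision results against the host's own float limits.
assert double["max_finite"] == sys.float_info.max
assert double["min_normal"] == sys.float_info.min
print(f"{single['min_normal']:.2e} {single['max_finite']:.2e}")  # 1.18e-38 3.40e+38
```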

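The standard also defines representations for positive and negative infinity, a "negative zero", special values called NaNs, and denormal numbers that represent numbers smaller than those shown above. It further specifies five exceptions for handling invalid results such as division by zero, and four rounding modes.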

Representation of numbers

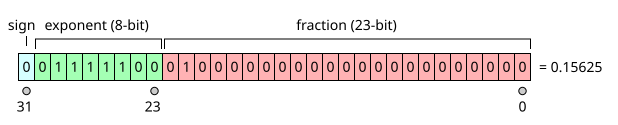

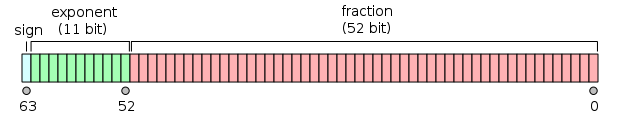

Floating-point numbers in IEEE 754 format consist of three fields: a sign bit, a biased exponent, and a fraction. The following example illustrates the meaning of each.

The decimal number $0.15625_{10}$ represented in binary is $0.00101_2$ (that is, 1/8 + 1/32). (Subscripts indicate the number base.) Analogous to scientific notation, where numbers are written to have a single non-zero digit to the left of the decimal point, we rewrite this number so it has a single 1 bit to the left of the "binary point". We simply multiply by the appropriate power of 2 to compensate for shifting the bits left by three positions:

$$0.00101_2 = 1.01_2 \times 2^{-3}$$

Now we can read off the fraction and the exponent: the fraction is $.01_2$ and the exponent is −3.

As illustrated in the pictures, the three fields in the IEEE 754 representation of this number are:

- sign = 0, because the number is positive. (1 indicates negative.)

- biased exponent = −3 + the "bias". In single precision, the bias is 127, so in this example the biased exponent is 124; in double precision, the bias is 1023, so the biased exponent in this example is 1020.

- fraction = $.01000\ldots_2$.

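IEEE 754 adds a bias to the exponent so that numbers can in many cases be compared conveniently by the same hardware that compares signed 2's-complement integers.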

The leading 1 bit is omitted: every normalized number starts with a leading 1 (zero and the denormalized numbers described below are the exceptions), so that 1 is implicit and does not need to be stored, which gives an extra bit of precision for "free".
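The worked example can be checked mechanically. The Python sketch below (the helper name single_fields is illustrative, not from any library) uses the standard struct module to round a Python float, which is a double, to single precision, reinterpret its four bytes as an unsigned integer, and mask out the three fields.

```python
import struct


def single_fields(x: float) -> tuple:
    """Return (sign, biased exponent, fraction) of x rounded to single precision."""
    (bits,) = struct.unpack(">I", struct.pack(">f", x))  # the 4 bytes as an unsigned int
    sign = bits >> 31                      # 1 bit
    biased_exponent = (bits >> 23) & 0xFF  # 8 bits
    fraction = bits & 0x7FFFFF             # 23 bits; the implicit leading 1 is not stored
    return sign, biased_exponent, fraction


sign, exp, frac = single_fields(0.15625)
print(sign, exp, exp - 127, hex(frac))  # 0 124 -3 0x200000

# Restore the implicit leading 1 to rebuild the value: 1.01 (binary) x 2**-3.
assert (1 + frac / 2**23) * 2.0 ** (exp - 127) == 0.15625
```

The fraction 0x200000 is the 23-bit pattern 01 followed by 21 zeros, matching the fraction field given above.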

Zero

The number zero is represented specially:

- sign = 0 for positive zero, 1 for negative zero.

- biased exponent = 0.

- fraction = 0.
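Because only the sign bit differs, the two zeros have distinct encodings yet compare equal. A small Python sketch using the standard struct and math modules (the helper single_bits is illustrative) shows both facts:

```python
import math
import struct


def single_bits(x: float) -> int:
    """Bit pattern of x after rounding to single precision."""
    return struct.unpack(">I", struct.pack(">f", x))[0]


print(hex(single_bits(0.0)), hex(single_bits(-0.0)))  # 0x0 0x80000000
print(0.0 == -0.0)                 # True: the comparison ignores the sign of zero
print(math.copysign(1.0, -0.0))    # -1.0: but the sign bit is really there
```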

Denormalized numbers

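The number representations described above are called normalized, meaning that the implicit leading binary digit is a 1. To reduce the loss of precision when an underflow occurs, IEEE 754 can also represent numbers smaller than the smallest normalized number by making the implicit leading digit a 0. Such numbers are called denormal. They carry fewer significant digits than normalized numbers, but they allow precision to be lost gradually when the result of an arithmetic operation is not exactly zero yet is too close to zero to be represented as a normalized number.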

A denormal number is represented with a biased exponent of all 0 bits, which represents an exponent of −126 in single precision (not −127), or −1022 in double precision (not −1023).[3] In contrast, the smallest biased exponent representing a normal number is 1 (see examples below).
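The normalized and denormalized decoding rules differ only in the implicit leading digit and in how the exponent is obtained. A hedged Python sketch (decode_single and via_struct are hypothetical helpers) applies the two rules by hand for finite single-precision patterns and cross-checks the results against the standard struct module:

```python
import struct


def decode_single(bits: int) -> float:
    """Value of a finite 32-bit pattern, applying the normal/denormal rules by hand."""
    sign = -1.0 if bits >> 31 else 1.0
    biased_exponent = (bits >> 23) & 0xFF
    fraction = bits & 0x7FFFFF
    if biased_exponent == 0xFF:
        raise ValueError("infinity or NaN: not a finite number")
    if biased_exponent == 0:
        # Denormal (or zero): implicit digit 0, exponent fixed at -126 (not -127).
        return sign * (fraction / 2**23) * 2.0 ** -126
    # Normal: implicit digit 1, exponent = biased exponent - 127.
    return sign * (1 + fraction / 2**23) * 2.0 ** (biased_exponent - 127)


def via_struct(bits: int) -> float:
    """Let the platform decode the same pattern, for comparison."""
    return struct.unpack(">f", struct.pack(">I", bits))[0]


# Smallest denormal, largest denormal, smallest normal.
for bits in (0x00000001, 0x007FFFFF, 0x00800000):
    assert decode_single(bits) == via_struct(bits)
```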

Representation of non-numbers

The biased-exponent field is filled with all 1 bits to indicate either infinity or an invalid result of a computation.

Positive and negative infinity

- sign = 0 for positive infinity, 1 for negative infinity.

- biased exponent = all 1 bits.

- fraction = all 0 bits.

NaN

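Some operations of floating-point arithmetic are invalid, such as taking the square root of a negative number. The act of reaching an invalid result is called a floating-point exception. An exceptional result is represented by a special code called a NaN, for "Not a Number". All NaNs in IEEE 754-1985 have this format: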

- sign = either 0 or 1.

- biased exponent = all 1 bits.

- fraction = anything except all 0 bits (since all 0 bits represents infinity).
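Both kinds of non-number arise from ordinary arithmetic. The Python sketch below produces an infinity by overflow and a NaN from ∞ − ∞, then inspects their fields. Python's own arithmetic is in double precision; struct is used only to view the single-precision encoding, and the helper single_bits is illustrative. The sign bit of the generated NaN is not checked, since it can vary by platform.

```python
import math
import struct


def single_bits(x: float) -> int:
    """Bit pattern of x after rounding to single precision."""
    return struct.unpack(">I", struct.pack(">f", x))[0]


overflow = 1e308 * 10            # too large for a double: the result is +infinity
nan = math.inf - math.inf        # an invalid operation produces a NaN

print(overflow == math.inf)          # True
print(hex(single_bits(math.inf)))    # 0x7f800000: exponent all 1s, fraction 0
print(hex(single_bits(-math.inf)))   # 0xff800000: same, with the sign bit set
bits = single_bits(nan)
print((bits >> 23) & 0xFF == 0xFF, bits & 0x7FFFFF != 0)  # True True
```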

Range and precision

Precision is defined as the minimum difference between two successive mantissa representations, so it depends only on the mantissa; the gap, by contrast, is the difference between two successive representable numbers.[4]

Single precision

- The positive and negative numbers closest to zero (represented by the denormalized value with all 0s in the exponent field and the binary value 1 in the fraction field) are

  - $\pm 2^{-23} \times 2^{-126} \approx \pm 1.40130 \times 10^{-45}$

- The positive and negative normalized numbers closest to zero (represented with the binary value 1 in the exponent field and 0 in the fraction field) are

  - $\pm 1 \times 2^{-126} \approx \pm 1.17549 \times 10^{-38}$

- The finite positive and finite negative numbers furthest from zero (represented by the value with 254 in the exponent field and all 1s in the fraction field) are

  - $\pm (2 - 2^{-23}) \times 2^{127} \approx \pm 3.40282 \times 10^{38}$[5]

Some example range and gap values for given exponents in single precision:

| Actual Exponent (unbiased) | Exp (biased) | Minimum | Maximum | Gap |
|---|---|---|---|---|
| −1 | 126 | 0.5 | ≈ 0.999999940395 | ≈ 5.96046e-8 |
| 0 | 127 | 1 | ≈ 1.999999880791 | ≈ 1.19209e-7 |
| 1 | 128 | 2 | ≈ 3.999999761581 | ≈ 2.38419e-7 |
| 2 | 129 | 4 | ≈ 7.999999523163 | ≈ 4.76837e-7 |
| 10 | 137 | 1024 | ≈ 2047.999877930 | ≈ 1.22070e-4 |
| 11 | 138 | 2048 | ≈ 4095.999755859 | ≈ 2.44141e-4 |
| 23 | 150 | 8388608 | 16777215 | 1 |
| 24 | 151 | 16777216 | 33554430 | 2 |
| 127 | 254 | ≈ 1.70141e38 | ≈ 3.40282e38 | ≈ 2.02824e31 |

As an example, 16,777,217 ($2^{24} + 1$) cannot be encoded as a 32-bit float: it lies in the range for exponent 24, where the gap between representable values is 2, so it is rounded to 16,777,216. However, all integers within the representable range that are a power of 2 can be stored in a 32-bit float without rounding.
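The gap column and the 16,777,217 example can be reproduced in Python. This is a sketch under two assumptions: struct's single-precision packing rounds with the platform's default round-to-nearest, ties-to-even mode, and the argument to single_gap is a positive normal number. Both helper names are hypothetical.

```python
import math
import struct


def to_single(x: float) -> float:
    """Round x to single precision (default rounding mode) and widen it back."""
    return struct.unpack(">f", struct.pack(">f", x))[0]


def single_gap(x: float) -> float:
    """Gap between consecutive single-precision values in the binade of a normal x."""
    _, e = math.frexp(x)          # x = m * 2**e with 0.5 <= |m| < 1
    return 2.0 ** (e - 1 - 23)    # unbiased exponent is e - 1; 23 fraction bits


print(to_single(16777217.0))                # 16777216.0
print(single_gap(16777216.0))               # 2.0
print(to_single(2.0 ** 100) == 2.0 ** 100)  # True: powers of two are exact
```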

Double precision

- The positive and negative numbers closest to zero (represented by the denormalized value with all 0s in the exponent field and the binary value 1 in the fraction field) are

  - $\pm 2^{-52} \times 2^{-1022} \approx \pm 4.94066 \times 10^{-324}$

- The positive and negative normalized numbers closest to zero (represented with the binary value 1 in the exponent field and 0 in the fraction field) are

  - $\pm 1 \times 2^{-1022} \approx \pm 2.22507 \times 10^{-308}$

- The finite positive and finite negative numbers furthest from zero (represented by the value with 2046 in the exponent field and all 1s in the fraction field) are

  - $\pm (2 - 2^{-52}) \times 2^{1023} \approx \pm 1.79769 \times 10^{308}$[5]

Some example range and gap values for given exponents in double precision:

| Actual Exponent (unbiased) | Exp (biased) | Minimum | Maximum | Gap |
|---|---|---|---|---|
| −1 | 1022 | 0.5 | ≈ 0.999999999999999888978 | ≈ 1.11022e-16 |
| 0 | 1023 | 1 | ≈ 1.999999999999999777955 | ≈ 2.22045e-16 |
| 1 | 1024 | 2 | ≈ 3.999999999999999555911 | ≈ 4.44089e-16 |
| 2 | 1025 | 4 | ≈ 7.999999999999999111822 | ≈ 8.88178e-16 |
| 10 | 1033 | 1024 | ≈ 2047.999999999999772626 | ≈ 2.27374e-13 |
| 11 | 1034 | 2048 | ≈ 4095.999999999999545253 | ≈ 4.54747e-13 |
| 52 | 1075 | 4503599627370496 | 9007199254740991 | 1 |
| 53 | 1076 | 9007199254740992 | 18014398509481982 | 2 |
| 1023 | 2046 | ≈ 8.98847e307 | ≈ 1.79769e308 | ≈ 1.99584e292 |
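For double precision, Python 3.9 and later expose the gap directly as math.ulp, and math.nextafter steps to the adjacent representable value. A minimal check of several rows of this table, and of the point where consecutive integers stop being representable:

```python
import math

# math.ulp(x) is the gap from a positive x to the next larger double.
for x in (0.5, 1.0, 1024.0, 2.0 ** 52, 2.0 ** 53):
    print(f"{x:>20.1f}  gap = {math.ulp(x):.5e}")

# Within exponent 53 the gap is 2, so 2**53 + 1 rounds (ties to even) back to 2**53.
assert 2.0 ** 53 + 1 == 2.0 ** 53
assert math.nextafter(2.0 ** 53, math.inf) == 2.0 ** 53 + 2
```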

Extended formats

The standard also recommends extended format(s) to be used to perform internal computations at a higher precision than that required for the final result, to minimise round-off errors: the standard only specifies minimum precision and exponent requirements for such formats. The x87 80-bit extended format is the most commonly implemented extended format that meets these requirements.

Examples

Here are some examples of single-precision IEEE 754 representations:

| Type | Sign | Actual Exponent | Exp (biased) | Exponent field | Fraction field | Value |
|---|---|---|---|---|---|---|
| Zero | 0 | −126 | 0 | 0000 0000 | 000 0000 0000 0000 0000 0000 | 0.0 |
| Negative zero | 1 | −126 | 0 | 0000 0000 | 000 0000 0000 0000 0000 0000 | −0.0 |
| One | 0 | 0 | 127 | 0111 1111 | 000 0000 0000 0000 0000 0000 | 1.0 |
| Minus One | 1 | 0 | 127 | 0111 1111 | 000 0000 0000 0000 0000 0000 | −1.0 |
| Smallest denormalized number | * | −126 | 0 | 0000 0000 | 000 0000 0000 0000 0000 0001 | $\pm 2^{-23} \times 2^{-126} = \pm 2^{-149} \approx \pm 1.4 \times 10^{-45}$ |
| "Middle" denormalized number | * | −126 | 0 | 0000 0000 | 100 0000 0000 0000 0000 0000 | $\pm 2^{-1} \times 2^{-126} = \pm 2^{-127} \approx \pm 5.88 \times 10^{-39}$ |
| Largest denormalized number | * | −126 | 0 | 0000 0000 | 111 1111 1111 1111 1111 1111 | $\pm (1 - 2^{-23}) \times 2^{-126} \approx \pm 1.18 \times 10^{-38}$ |
| Smallest normalized number | * | −126 | 1 | 0000 0001 | 000 0000 0000 0000 0000 0000 | $\pm 2^{-126} \approx \pm 1.18 \times 10^{-38}$ |
| Largest normalized number | * | 127 | 254 | 1111 1110 | 111 1111 1111 1111 1111 1111 | $\pm (2 - 2^{-23}) \times 2^{127} \approx \pm 3.4 \times 10^{38}$ |
| Positive infinity | 0 | 128 | 255 | 1111 1111 | 000 0000 0000 0000 0000 0000 | +∞ |
| Negative infinity | 1 | 128 | 255 | 1111 1111 | 000 0000 0000 0000 0000 0000 | −∞ |
| Not a number | * | 128 | 255 | 1111 1111 | nonzero | NaN |

In rows marked *, the sign bit can be either 0 or 1.
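The Type column of the examples table can be reproduced from the exponent and fraction fields alone, in the spirit of the class(x) function among the recommended functions later in this article. The sketch below is a simplified, hypothetical classifier: unlike the full class(x), it does not distinguish signaling from quiet NaNs. It checks one bit pattern per table row.

```python
import struct


def classify_single(bits: int) -> str:
    """Categorise a 32-bit pattern as in the examples table (sign shown as + or -)."""
    sign = "-" if bits >> 31 else "+"
    exponent = (bits >> 23) & 0xFF
    fraction = bits & 0x7FFFFF
    if exponent == 0xFF:
        return "NaN" if fraction else sign + "infinity"
    if exponent == 0:
        return sign + ("denormalized" if fraction else "zero")
    return sign + "normalized"


def pattern(x: float) -> int:
    """Bit pattern of x after rounding to single precision."""
    return struct.unpack(">I", struct.pack(">f", x))[0]


table_rows = {
    pattern(0.0): "+zero",
    pattern(-0.0): "-zero",
    pattern(1.0): "+normalized",
    pattern(-1.0): "-normalized",
    0x00000001: "+denormalized",   # smallest denormalized number
    0x00400000: "+denormalized",   # "middle" denormalized number
    0x007FFFFF: "+denormalized",   # largest denormalized number
    0x00800000: "+normalized",     # smallest normalized number
    0x7F7FFFFF: "+normalized",     # largest normalized number
    0x7F800000: "+infinity",
    0xFF800000: "-infinity",
    0x7FC00000: "NaN",
}
for bits, expected in table_rows.items():
    assert classify_single(bits) == expected, hex(bits)
```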

Comparing floating-point numbers

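Every possible bit combination is either a NaN or a number with a unique value in the affinely extended real number system with its associated order, except for the two combinations of bits for negative zero and positive zero, which sometimes require special attention (see below).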

Rounding errors inherent to floating-point calculations may limit the use of comparisons for checking the exact equality of results. Choosing an acceptable range is a complex topic. A common technique is to use a comparison epsilon value to perform approximate comparisons.[6] Depending on how lenient the comparisons are, common values include 1e-6 or 1e-5 for single precision, and 1e-14 for double precision.[7][8] Another common technique is to measure the difference in units in the last place (ULP), which effectively counts how many representable steps apart the two values are.[9]
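Both techniques are easy to sketch for double precision in Python. The absolute-epsilon test uses the 1e-14 figure quoted above and so only makes sense for values of roughly unit magnitude (the standard math.isclose offers a relative tolerance instead). The ULP distance relies on the property that, NaNs aside, the bit patterns read as sign-magnitude integers are ordered like the values they encode. The function names are hypothetical.

```python
import math
import struct


def nearly_equal_abs(a: float, b: float, eps: float = 1e-14) -> bool:
    """Absolute-epsilon comparison (assumes values of roughly unit magnitude)."""
    return abs(a - b) <= eps


def ordered_key(x: float) -> int:
    """Map a non-NaN double to an integer that increases with its value."""
    (i,) = struct.unpack("<q", struct.pack("<d", x))  # signed 64-bit view of the bits
    # Negative doubles are sign-magnitude; mirror them so ordering matches two's complement.
    # Both -0.0 and +0.0 map to 0.
    return i if i >= 0 else -(i & 0x7FFF_FFFF_FFFF_FFFF)


def ulp_distance(a: float, b: float) -> int:
    """How many representable steps separate a and b (0 if they are equal)."""
    if math.isnan(a) or math.isnan(b):
        raise ValueError("NaN has no place in the ordering")
    return abs(ordered_key(a) - ordered_key(b))


x, y = 0.1 + 0.2, 0.3
print(x == y)                  # False
print(nearly_equal_abs(x, y))  # True
print(ulp_distance(x, y))      # 1
```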

Although negative zero and positive zero are generally considered equal for comparison purposes, some programming language relational operators and similar constructs treat them as distinct. According to the Java Language Specification,[10] comparison and equality operators treat them as equal, but Math.min() and Math.max() distinguish them (officially starting with Java version 1.1 but actually with 1.1.1), as do the comparison methods equals(), compareTo() and even compare() of classes Float and Double.

Rounding floating-point numbers

The IEEE standard has four different rounding modes; the first is the default; the others are called directed roundings.

- Round to Nearest – rounds to the nearest value; if the number falls midway, it is rounded to the nearest value with an even (zero) least significant bit, which means ties are rounded up half of the time (in IEEE 754-2008 this mode is called roundTiesToEven to distinguish it from another round-to-nearest mode)

- Round toward 0 – directed rounding towards zero

- Round toward +∞ – directed rounding towards positive infinity

- Round toward −∞ – directed rounding towards negative infinity.
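The four modes differ only in which of the two neighbouring representable values is chosen. Python's standard library does not expose the hardware's binary rounding mode, so the sketch below models the choice with exact rational arithmetic from the fractions module instead. It assumes the value lies in a range where representable numbers are evenly spaced by a known gap (for example the gap of 2 for single-precision numbers between 2^24 and 2^25); the function name round_to_grid and the mode strings are hypothetical.

```python
import math
from fractions import Fraction


def round_to_grid(x, quantum, mode: str) -> Fraction:
    """Round x exactly to a multiple of quantum (the gap between representable
    values) using one of the four IEEE 754 rounding directions."""
    q = Fraction(x) / Fraction(quantum)    # exact position measured in gaps
    lo, hi = math.floor(q), math.ceil(q)   # neighbouring representable multiples
    if mode == "nearest_even":
        diff = q - lo
        if diff != Fraction(1, 2):
            k = lo if diff < Fraction(1, 2) else hi
        else:
            k = lo if lo % 2 == 0 else hi  # tie: pick the multiple with LSB 0
    elif mode == "toward_zero":
        k = lo if q >= 0 else hi
    elif mode == "toward_pos_inf":
        k = hi
    elif mode == "toward_neg_inf":
        k = lo
    else:
        raise ValueError(f"unknown mode {mode!r}")
    return k * Fraction(quantum)


for mode in ("nearest_even", "toward_zero", "toward_pos_inf", "toward_neg_inf"):
    print(mode, round_to_grid(16777217, 2, mode))
# Only toward_pos_inf gives 16777218; the other three give 16777216.
```

Because every intermediate value is an exact Fraction, the sketch avoids the double rounding a float-based version could introduce.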

Extending the real numbers

The IEEE standard employs (and extends) the affinely extended real number system, with separate positive and negative infinities.

Functions and predicates

Standard operations

The following functions must be provided:

- Add, subtract, multiply, divide

- Square root

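- Floating-point remainder. This is not like a normal modulo operation: it can be negative even when both operands are positive. It returns the exact value of $x - \operatorname{round}(x/y) \cdot y$, where round rounds to the nearest integer and chooses the even integer on a tie.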

- Round to nearest integer. For undirected rounding when halfway between two integers the even integer is chosen.

- Comparison operations. Besides the more obvious results, IEEE 754 defines that −∞ = −∞, +∞ = +∞ and x ≠ NaN for any x (including NaN).
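Python's math.remainder (Python 3.7 and later) is documented as the IEEE 754-style remainder, and the built-in round breaks ties to even, so a short sketch can illustrate three of the required operations on double-precision values:

```python
import math

# IEEE remainder: x - n*y where n is x/y rounded to the nearest integer (ties to even).
print(math.remainder(5.0, 3.0))  # -1.0  (5/3 is about 1.67, so n = 2)
print(math.remainder(7.0, 2.0))  # -1.0  (7/2 = 3.5 is a tie, so n = 4, the even choice)
print(5.0 % 3.0)                 # 2.0   (Python's % is a different, floor-based modulo)

# Round to nearest integer: halfway cases go to the even neighbour.
print([round(v) for v in (0.5, 1.5, 2.5, -2.5)])  # [0, 2, 2, -2]

# Comparisons with infinities and NaN.
print(math.inf == math.inf, -math.inf == -math.inf)  # True True
print(math.nan == math.nan, math.nan != math.nan)    # False True
```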

Recommended functions and predicates

- copysign(x, y) returns x with the sign of y, so abs(x) equals copysign(x, 1.0). This is one of the few operations which operates on a NaN in a way resembling arithmetic. The function copysign was introduced in the C99 standard.

- −x returns x with the sign reversed. This is different from 0−x in some cases, notably when x is 0. So −(0) is −0, but the sign of 0−0 depends on the rounding mode: it is +0 in every mode except round toward −∞, where it is −0.

- scalb(y, N) returns $y \times 2^N$ for an integer N.

- logb(x) returns the unbiased exponent of x.

- finite(x), a predicate for "x is a finite value", equivalent to −Inf < x < Inf.

- isnan(x), a predicate for "x is a NaN", equivalent to "x ≠ x".

- x <> y, which turns out to have different behavior than NOT(x = y) due to NaN.

- unordered(x, y) is true when "x is unordered with y", i.e., either x or y is a NaN.

- class(x) reports which class x belongs to (such as signaling or quiet NaN, infinity, normalized, denormalized, or zero, together with its sign).

- nextafter(x, y) returns the next representable value from x in the direction towards y.
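Several of these functions have close counterparts in Python's standard math module: copysign, isfinite, isnan, nextafter (Python 3.9 and later), and ldexp, which performs the scaling of scalb. The sketch below uses those and defines hypothetical helpers for the rest. Its logb is derived from math.frexp and is only meant for normal, nonzero, finite x; it does not reproduce the standard's special cases for zero, infinities, NaN or denormals.

```python
import math


def lessgreater(x: float, y: float) -> bool:
    """IEEE 'x <> y': true only if x < y or x > y, hence false when either is NaN."""
    return x < y or x > y


def unordered(x: float, y: float) -> bool:
    """True when x and y cannot be ordered, i.e. at least one is a NaN."""
    return math.isnan(x) or math.isnan(y)


def logb(x: float) -> int:
    """Unbiased exponent of a normal, nonzero, finite x (frexp gives 0.5 <= |m| < 1)."""
    return math.frexp(x)[1] - 1


nan = math.nan
print(math.copysign(3.0, -0.0))                                    # -3.0
print(math.copysign(1.0, -(0.0)), math.copysign(1.0, 0.0 - 0.0))  # -1.0 1.0
print(math.ldexp(0.75, 4), logb(0.15625))                          # 12.0 -3
print(math.isfinite(math.inf), math.isnan(nan))                    # False True
print(lessgreater(nan, 1.0), nan != 1.0)                           # False True
print(unordered(nan, 1.0))                                         # True
print(math.nextafter(1.0, 2.0) - 1.0 == 2.0 ** -52)                # True
```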

History

In 1976, Intel was starting the development of a floating-point coprocessor.[14][15] Intel hoped to be able to sell a chip containing good implementations of all the operations found in the widely varying maths software libraries.[14][16]

John Palmer, who managed the project, believed the effort should be backed by a standard unifying floating point operations across disparate processors. He contacted William Kahan of the University of California, Berkeley.

The work within Intel worried other vendors, who set up a standardization effort to ensure a "level playing field". Kahan attended the second IEEE 754 standards working group meeting, held in November 1977. He subsequently received permission from Intel to put forward a draft proposal based on his work for their coprocessor; he was allowed to explain details of the format and its rationale, but not anything related to Intel's implementation architecture. The draft was co-written with Jerome Coonen and Harold Stone, and was initially known as the "Kahan-Coonen-Stone proposal" or "K-C-S format".[14][15][16][18]

As an 8-bit exponent was not wide enough for some operations desired for double-precision numbers, e.g. to store the product of two 32-bit numbers,

Even before it was approved, the draft standard had been implemented by a number of manufacturers.[25][26] The Intel 8087, which was announced in 1980, was the first chip to implement the draft standard.

In 1980, the Intel 8087 chip was already released,[27] but DEC remained opposed, in particular to denormal numbers, because of performance concerns and because standardising on DEC's format would give DEC a competitive advantage.

The arguments over

See also

- IEEE 754

- Minifloat for simple examples of properties of IEEE 754 floating point numbers

- Fixed-point arithmetic

Notes

- Precision: the number of decimal digits of precision is calculated as (number of significand bits, including the implicit leading bit) × log10(2), giving about 7.22 for single precision (24 bits) and about 15.95 for double precision (53 bits).

References

- ISBN 0-7381-1165-1.

- "ANSI/IEEE Std 754-2019". 754r.ucbtest.org. Retrieved 2019-08-06.

- ISBN 9780123744937.

- Hossam A. H. Fahmy; Shlomo Waser; Michael J. Flynn. Computer Arithmetic (PDF). Archived from the original (PDF) on 2010-10-08. Retrieved 2011-01-02.

- William Kahan (October 1, 1997). "Lecture Notes on the Status of IEEE 754" (PDF). University of California, Berkeley. Retrieved 2007-04-12.

- "Godot math_funcs.h". GitHub.com. 30 July 2022.

- "Godot math_defs.h". GitHub.com. 30 July 2022.

- "Godot MathfEx.cs". GitHub.com.

- "Comparing Floating Point Numbers, 2012 Edition". randomascii.wordpress.com. 26 February 2012.

- "Java Language and Virtual Machine Specifications". Java Documentation.

- S2CID 9820157.

- S2CID 15523399.

- S2CID 16981715.

- "Intel and Floating-Point - Updating One of the Industry's Most Successful Standards - The Technology Vision for the Floating-Point Standard" (PDF). Intel. 2016. Archived from the original (PDF) on 2016-03-04. Retrieved 2016-05-30. (11 pages)

- "An Interview with the Old Man of Floating-Point". cs.berkeley.edu. 1998-02-20. Retrieved 2016-05-30.

- Dr. Dobb's. drdobbs.com. Retrieved 2016-05-30.

- W. Kahan 2003, pers. comm. to Mike Cowlishaw and others after an IEEE 754 meeting.

- "IEEE 754: An Interview with William Kahan" (PDF). dr-chuck.com. Retrieved 2016-06-02.

- "IEEE vs. Microsoft Binary Format; Rounding Issues (Complete)". Microsoft Support. Microsoft. 2006-11-21. Article ID KB35826, Q35826. Archived from the original on 2020-08-28. Retrieved 2010-02-24.

- (PDF) from the original on 2020-08-28. Retrieved 2016-06-02. (1+13+181+2+2 pages)

- Kahan, William Morton. "Why do we need a floating-point arithmetic standard?" (PDF). cs.berkeley.edu. Retrieved 2016-06-02.

- Kahan, William Morton; Darcy, Joseph D. "How Java's Floating-Point Hurts Everyone Everywhere" (PDF). cs.berkeley.edu. Retrieved 2016-06-02.

- ISBN 978-3-66239812-8. Retrieved 2016-05-30.

- "Names for Standardized Floating-Point Formats" (PDF). cs.berkeley.edu. Retrieved 2016-06-02.

- Charles Severance (20 February 1998). "An Interview with the Old Man of Floating-Point".

- Charles Severance. "History of IEEE Floating-Point Format". Connexions. Archived from the original on 2009-11-20.

- "Molecular Expressions: Science, Optics & You - Olympus MIC-D: Integrated Circuit Gallery - Intel 8087 Math Coprocessor". micro.magnet.fsu.edu. Retrieved 2016-05-30.

Further reading

- S2CID 33291145. Archived from the original (PDF) on 2009-08-23. Retrieved 2008-04-28.

- David Goldberg (March 1991). "What Every Computer Scientist Should Know About Floating-Point Arithmetic" (PDF). S2CID 222008826. Retrieved 2008-04-28.

- Chris Hecker (February 1996). "Let's Get To The (Floating) Point" (PDF). Game Developer Magazine: 19–24. ISSN 1073-922X. Archived from the original (PDF) on 2007-02-03.

- David Monniaux (May 2008). "The pitfalls of verifying floating-point computations". S2CID 218578808. A compendium of non-intuitive behaviours of floating-point on popular architectures, with implications for program verification and testing.