Recursive neural network

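A recursive neural network is a kind of deep neural network created by applying the same set of weights recursively over a structured input, such as a tree, to produce a structured prediction over variable-size input structures, or a scalar prediction on it, by traversing the structure in topological order.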

Architectures

Basic

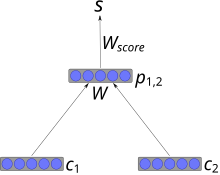

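In the simplest architecture, nodes are combined into parents using a weight matrix that is shared across the whole network and a non-linearity such as tanh. If $c_1$ and $c_2$ are $n$-dimensional vector representations of nodes, their parent is also an $n$-dimensional vector, calculated as

$$p_{1,2} = \tanh\left(W[c_{1}; c_{2}]\right)$$

where $W$ is a learned $n \times 2n$ weight matrix.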
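The composition p = tanh(W[c1; c2]) can be applied bottom-up over a whole tree. The following is a minimal sketch in plain Python, assuming a binary tree whose leaves are already n-dimensional vectors; the names `compose` and `encode`, the tuple-based tree encoding, and the random initial values are illustrative assumptions, not part of any particular library.

```python
import math
import random

def compose(W, c1, c2):
    """Parent vector p = tanh(W [c1; c2]) for two n-dimensional children."""
    x = c1 + c2  # list concatenation plays the role of [c1; c2]
    return [math.tanh(sum(w * v for w, v in zip(row, x))) for row in W]

def encode(tree, W):
    """Encode a binary tree bottom-up with one shared weight matrix W.

    A leaf is a list of floats; an internal node is a (left, right) tuple.
    """
    if isinstance(tree, tuple):
        left, right = tree
        return compose(W, encode(left, W), encode(right, W))
    return tree

n = 3
rng = random.Random(0)
# W has n rows and 2n columns, so every parent is again n-dimensional.
W = [[rng.uniform(-0.5, 0.5) for _ in range(2 * n)] for _ in range(n)]
leaves = [[rng.uniform(-1.0, 1.0) for _ in range(n)] for _ in range(3)]
tree = ((leaves[0], leaves[1]), leaves[2])
root = encode(tree, W)
print(len(root))
```

Because every parent has the same dimension as its children, the same `W` can be reused at every level of the tree, whatever its shape or depth.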

This architecture, with a few improvements, has been used successfully for parsing natural scenes, for syntactic parsing of natural language sentences,[4] and for recursive autoencoding and generative modeling of 3D shape structures in the form of cuboid abstractions.[5]

Recursive cascade correlation (RecCC)

RecCC is a constructive neural network approach for dealing with tree domains,[2] with pioneering applications to chemistry[6] and an extension to directed acyclic graphs.[7]

Unsupervised recursive neural networks

A framework for unsupervised recursive neural networks was introduced in 2004.[8][9]

Tensor

Recursive neural tensor networks use a single tensor-based composition function for all nodes in the tree.[10]
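A tensor-based composition can be sketched as follows. This assumes the form commonly given for recursive neural tensor networks, in which each output dimension k adds a bilinear term x^T V_k x to the linear term of the basic model, with x = [c1; c2]. The names `tensor_compose`, `V`, and `W` and the random values are illustrative assumptions.

```python
import math
import random

def tensor_compose(V, W, c1, c2):
    """Parent p_k = tanh(x^T V[k] x + W[k] . x), where x = [c1; c2].

    V holds one (2n x 2n) slice per output dimension; W is n x 2n.
    """
    x = c1 + c2  # concatenation [c1; c2]
    m = len(x)
    parent = []
    for V_k, w_k in zip(V, W):
        bilinear = sum(x[i] * V_k[i][j] * x[j] for i in range(m) for j in range(m))
        linear = sum(w * v for w, v in zip(w_k, x))
        parent.append(math.tanh(bilinear + linear))
    return parent

n = 2
rng = random.Random(1)
W = [[rng.uniform(-0.5, 0.5) for _ in range(2 * n)] for _ in range(n)]
V = [[[rng.uniform(-0.1, 0.1) for _ in range(2 * n)] for _ in range(2 * n)]
     for _ in range(n)]
c1, c2 = [0.3, -0.6], [0.8, 0.1]
parent = tensor_compose(V, W, c1, c2)
print(len(parent))
```

Setting every entry of `V` to zero reduces this function to the basic composition tanh(W[c1; c2]), so the tensor term can be read as an added multiplicative interaction between the two children.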

Training

Stochastic gradient descent

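Typically, stochastic gradient descent (SGD) is used to train the network. The gradient is computed using backpropagation through structure (BPTS), a variant of backpropagation through time used for recurrent neural networks.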
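Training with stochastic gradient descent needs the gradient of a loss with respect to the shared weights. The sketch below assumes the basic composition p = tanh(W[c1; c2]), fixed leaf vectors, and a squared-error loss at the root; these choices, and all function names, are illustrative assumptions. Errors are propagated from the root down to the children, and the contributions to W from every internal node are summed because the matrix is shared.

```python
import math

def forward(tree, W):
    """Bottom-up pass that caches each node's output and input."""
    if isinstance(tree, tuple):
        left, right = forward(tree[0], W), forward(tree[1], W)
        x = left["p"] + right["p"]  # concatenation [c1; c2]
        p = [math.tanh(sum(w * v for w, v in zip(row, x))) for row in W]
        return {"p": p, "x": x, "kids": (left, right)}
    return {"p": list(tree), "x": None, "kids": None}

def backward(node, grad_p, W, grad_W):
    """Top-down pass: accumulate dLoss/dW given dLoss/dp at this node."""
    if node["kids"] is None:
        return  # leaves are fixed inputs in this sketch
    n = len(W)
    # Chain rule through tanh: d tanh(z)/dz = 1 - tanh(z)^2
    delta = [g * (1.0 - p * p) for g, p in zip(grad_p, node["p"])]
    x = node["x"]
    for i in range(n):
        for j in range(2 * n):
            grad_W[i][j] += delta[i] * x[j]
    # Gradient with respect to the concatenated children is W^T delta.
    grad_x = [sum(W[i][j] * delta[i] for i in range(n)) for j in range(2 * n)]
    left, right = node["kids"]
    backward(left, grad_x[:n], W, grad_W)
    backward(right, grad_x[n:], W, grad_W)

def loss_and_grad(tree, target, W):
    root = forward(tree, W)
    diff = [p - t for p, t in zip(root["p"], target)]
    loss = 0.5 * sum(d * d for d in diff)
    grad_W = [[0.0] * len(W[0]) for _ in W]
    backward(root, diff, W, grad_W)
    return loss, grad_W

def sgd_step(tree, target, W, lr=0.1):
    """One gradient step on a single example; updates W in place."""
    loss, grad_W = loss_and_grad(tree, target, W)
    for i, row in enumerate(grad_W):
        for j, g in enumerate(row):
            W[i][j] -= lr * g
    return loss

W = [[0.1, -0.2, 0.3, 0.05], [-0.15, 0.25, 0.1, -0.3]]
tree = (([0.5, -0.4], [0.2, 0.9]), [-0.7, 0.3])
target = [0.3, -0.2]
losses = [sgd_step(tree, target, W) for _ in range(200)]
print(losses[0] > losses[-1])
```

In practice each step would draw a different training tree, and the leaf vectors are often learned as well; both are left out here to keep the gradient flow through the structure visible.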

Properties

The universal approximation capability of recursive neural networks over trees has been proved in the literature.[11][12]

Related models

Recurrent neural networks

Tree Echo State Networks

An efficient approach to implementing recursive neural networks is given by the Tree Echo State Network,[13] within the reservoir computing paradigm.

Extension to graphs

Extensions to graphs include the graph neural network (GNN),[14] the Neural Network for Graphs (NN4G),[15] and, more recently, convolutional neural networks for graphs.

References

- S2CID 6536466.

- PMID 18255672.

- PMID 18255765.

- Socher, Richard; Lin, Cliff; Ng, Andrew Y.; Manning, Christopher D. "Parsing Natural Scenes and Natural Language with Recursive Neural Networks" (PDF). The 28th International Conference on Machine Learning (ICML 2011).

- S2CID 20432407.

- S2CID 10031212.

- S2CID 12370239.

- PMID 15555852.

- .

- Socher, Richard; Perelygin, Alex; Wu, Jean Y.; Chuang, Jason; Manning, Christopher D.; Ng, Andrew Y.; Potts, Christopher. "Recursive Deep Models for Semantic Compositionality Over a Sentiment Treebank" (PDF). EMNLP 2013.

- ISBN 9781846285677.

- S2CID 10845957.

- hdl:11568/158480.

- S2CID 206756462.

- S2CID 17486263.
