Boosting (machine learning)


In

The concept of boosting is based on the question posed by Kearns and Valiant: whether weak and strong learnability are equivalent.

Robert Schapire answered the question posed by Kearns and Valiant in the affirmative in a paper published in 1990.[5] This result has had significant ramifications in machine learning and statistics, most notably leading to the development of boosting.[6]

When first introduced, the hypothesis boosting problem simply referred to the process of turning a weak learner into a strong learner. "Informally, [the hypothesis boosting] problem asks whether an efficient learning algorithm […] that outputs a hypothesis whose performance is only slightly better than random guessing [i.e. a weak learner] implies the existence of an efficient algorithm that outputs a hypothesis of arbitrary accuracy [i.e. a strong learner]."[3] Algorithms that achieve hypothesis boosting quickly became simply known as "boosting". Freund and Schapire's arcing (Adapt[at]ive Resampling and Combining),[7] as a general technique, is more or less synonymous with boosting.[8]

Boosting algorithms

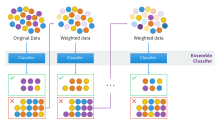

While boosting is not algorithmically constrained, most boosting algorithms consist of iteratively learning weak classifiers with respect to a distribution and adding them to a final strong classifier. When they are added, they are weighted in a way that is related to the weak learners' accuracy. After a weak learner is added, the data weights are readjusted, a step known as "re-weighting". Misclassified input data gain weight, and examples that are classified correctly lose weight.[note 1] Thus, future weak learners focus more on the examples that previous weak learners misclassified.
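The re-weighting step can be illustrated with a minimal sketch. It assumes the AdaBoost-style update (learner weight `alpha = 0.5 * ln((1 - err) / err)`, multiplicative exponential update of the example weights); other boosting algorithms use different rules, and the function name `reweight` is hypothetical.

```python
import math

def reweight(weights, correct):
    """One AdaBoost-style re-weighting round (illustrative sketch).

    weights: current example weights, assumed to sum to 1
    correct: booleans, True where the weak learner classified the example correctly
    Returns (alpha, new_weights).
    """
    # Weighted training error of the weak learner
    err = sum(w for w, c in zip(weights, correct) if not c)
    if not 0.0 < err < 0.5:
        raise ValueError("sketch assumes a weak learner with 0 < error < 0.5")
    # Weight of this weak learner in the final combination (larger if error is small)
    alpha = 0.5 * math.log((1.0 - err) / err)
    # Misclassified examples are scaled up, correctly classified ones scaled down
    updated = [w * math.exp(-alpha if c else alpha) for w, c in zip(weights, correct)]
    total = sum(updated)
    return alpha, [w / total for w in updated]

weights = [0.25, 0.25, 0.25, 0.25]
correct = [True, True, True, False]
alpha, new_weights = reweight(weights, correct)
```

In this example the single misclassified example ends up with half of the total weight, so the next weak learner is pushed to get it right.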

There are many boosting algorithms. The original ones, proposed by Robert Schapire (a recursive majority gate formulation),[5] and Yoav Freund (boost by majority),[9] were not adaptive and could not take full advantage of the weak learners. Schapire and Freund then developed AdaBoost, an adaptive boosting algorithm that won the prestigious Gödel Prize.

Only algorithms that are provable boosting algorithms in the probably approximately correct learning formulation can accurately be called boosting algorithms. Other algorithms that are similar in spirit[clarification needed] to boosting algorithms are sometimes called "leveraging algorithms", although they are also sometimes incorrectly called boosting algorithms.[9]

The main variation between many boosting algorithms is their method of

Object categorization in computer vision

Given images containing various known objects in the world, a classifier can be learned from them to automatically classify the objects in future images. Simple classifiers built on a single image feature of the object tend to perform weakly at categorization. Boosting methods for object categorization combine such weak classifiers so that the overall categorization ability improves.[citation needed]

Problem of object categorization

Status quo for object categorization

The recognition of object categories in images is a challenging problem in

Boosting for binary categorization

AdaBoost can be used for face detection as an example of

- Form a large set of simple features

- Initialize weights for training images

- For T rounds:

  - Normalize the weights

  - For available features from the set, train a classifier using a single feature and evaluate the training error

  - Choose the classifier with the lowest error

  - Update the weights of the training images: increase if classified wrongly by this classifier, decrease if correctly

- Form the final strong classifier as the linear combination of the T classifiers (coefficient larger if training error is small)
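The steps above can be sketched in a few lines. This is a minimal illustration, not the face detector from the cited work: it assumes labels in {+1, -1}, uses threshold "stumps" on one feature as the single-feature classifiers, and uses the standard AdaBoost coefficient. The names `train_stump`, `stump_predict`, `adaboost` and `predict`, and the toy data, are hypothetical.

```python
import math

def stump_predict(row, j, thr, pol):
    # Single-feature classifier: predicts pol if feature j >= thr, otherwise -pol
    return pol if row[j] >= thr else -pol

def train_stump(X, y, w, j):
    # Try every observed threshold and both polarities on feature j,
    # keeping the stump with the lowest weighted training error
    best = (float("inf"), 0.0, 1)
    for thr in sorted({row[j] for row in X}):
        for pol in (1, -1):
            err = sum(wi for row, yi, wi in zip(X, y, w)
                      if stump_predict(row, j, thr, pol) != yi)
            if err < best[0]:
                best = (err, thr, pol)
    return best

def adaboost(X, y, T):
    n = len(X)
    w = [1.0 / n] * n                       # initialize weights for training examples
    ensemble = []
    for _ in range(T):                      # for T rounds
        total = sum(w)
        w = [wi / total for wi in w]        # normalize the weights
        candidates = []
        for j in range(len(X[0])):          # one classifier per available feature
            err, thr, pol = train_stump(X, y, w, j)
            candidates.append((err, j, thr, pol))
        err, j, thr, pol = min(candidates)  # choose the classifier with the lowest error
        err = min(max(err, 1e-10), 1 - 1e-10)  # clamp to avoid log(0) or division by zero
        alpha = 0.5 * math.log((1 - err) / err)  # coefficient larger if error is small
        ensemble.append((alpha, j, thr, pol))
        # Increase weights of misclassified examples, decrease the others
        w = [wi * math.exp(-alpha * yi * stump_predict(row, j, thr, pol))
             for row, yi, wi in zip(X, y, w)]
    return ensemble

def predict(ensemble, row):
    # Final strong classifier: sign of the weighted vote of the chosen stumps
    score = sum(a * stump_predict(row, j, thr, pol) for a, j, thr, pol in ensemble)
    return 1 if score >= 0 else -1

# Toy data: no single threshold separates the classes, but three stumps do
X = [[0], [1], [2], [3], [4], [5]]
y = [1, 1, -1, -1, 1, 1]
ensemble = adaboost(X, y, T=3)
predictions = [predict(ensemble, row) for row in X]
```

On this toy set the best single stump still misclassifies two of the six points, while the weighted vote of three stumps classifies all of them correctly.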

After boosting, a classifier constructed from 200 features could yield a 95% detection rate under a false positive rate.[15]

Another application of boosting for binary categorization is a system that detects pedestrians using

Boosting for multi-class categorization

Compared with binary categorization,

The main flow of the algorithm is similar to the binary case. What is different is that a measure of the joint training error shall be defined in advance. During each iteration the algorithm chooses a classifier of a single feature (features that can be shared by more categories shall be encouraged). This can be done via converting

In the paper "Sharing visual features for multiclass and multiview object detection", A. Torralba et al. used

Convex vs. non-convex boosting algorithms

Boosting algorithms can be based on convex or non-convex optimization algorithms. Convex algorithms, such as AdaBoost and LogitBoost, can be "defeated" by random noise such that they cannot learn basic and learnable combinations of weak hypotheses.[19][20] This limitation was pointed out by Long & Servedio in 2008. However, by 2009, multiple authors demonstrated that boosting algorithms based on non-convex optimization, such as BrownBoost, can learn from noisy datasets and can specifically learn the underlying classifier of the Long–Servedio dataset.
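The difference can be made concrete by comparing loss functions of the margin m (label times ensemble score). The sketch below uses the exponential loss minimized by AdaBoost and the logistic loss minimized by LogitBoost. The bounded loss is only an illustrative stand-in for a non-convex potential and is an assumption of this example; it is not BrownBoost's actual potential function.

```python
import math

def exponential_loss(m):
    # Convex loss associated with AdaBoost
    return math.exp(-m)

def logistic_loss(m):
    # Convex loss associated with LogitBoost
    return math.log1p(math.exp(-m))

def bounded_loss(m):
    # Illustrative non-convex loss (assumption, not BrownBoost's potential):
    # it saturates at 1, so a badly misclassified point has limited influence
    return 1.0 / (1.0 + math.exp(m))

def violates_midpoint_convexity(f, a, b):
    # A convex f satisfies f((a + b) / 2) <= (f(a) + f(b)) / 2 for all a, b
    return f((a + b) / 2.0) > (f(a) + f(b)) / 2.0

# A mislabeled (noisy) example that the ensemble confidently gets "wrong"
noisy_margin = -5.0
losses = {
    "exponential": exponential_loss(noisy_margin),
    "logistic": logistic_loss(noisy_margin),
    "bounded": bounded_loss(noisy_margin),
}
```

Both convex losses keep growing as the margin of a mislabeled example becomes more negative, so such examples can dominate training; the bounded loss never exceeds 1, which is the intuition behind the noise tolerance of non-convex methods.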

See also

- Random forest

- Alternating decision tree

- Bootstrap aggregating (bagging)

- Cascading

- CoBoosting

- Logistic regression

- Maximum entropy methods

- Gradient boosting

- Margin classifiers

- Cross-validation

- List of datasets for machine learning research

Implementations

- scikit-learn, an open source machine learning library for Python

- Orange, a free data mining software suite, module Orange.ensemble

- Weka, a machine learning toolkit that offers a variety of implementations of boosting algorithms such as AdaBoost and LogitBoost

- R package GBM (Generalized Boosted Regression Models) implements extensions to Freund and Schapire's AdaBoost algorithm and Friedman's gradient boosting machine.

- jboost; AdaBoost, LogitBoost, RobustBoost, Boostexter and alternating decision trees

- R package adabag: Applies Multiclass AdaBoost.M1, AdaBoost-SAMME and Bagging

- R package xgboost: An implementation of gradient boosting for linear and tree-based models.

Notes

- ^ Some boosting-based classification algorithms actually decrease the weight of repeatedly misclassified examples; for example boost by majority and BrownBoost.

References

- ^ Leo Breiman (1996). "BIAS, VARIANCE, AND ARCING CLASSIFIERS" (PDF). TECHNICAL REPORT. Archived from the original (PDF) on 2015-01-19. Retrieved 19 January 2015.

Arcing [Boosting] is more successful than bagging in variance reduction

- ISBN 978-1439830031.

The term boosting refers to a family of algorithms that are able to convert weak learners to strong learners

- ^ a b Michael Kearns (1988); Thoughts on Hypothesis Boosting, Unpublished manuscript (Machine Learning class project, December 1988)

- S2CID 536357.

- ^ S2CID 53304535. Archived from the original (PDF) on 2012-10-10. Retrieved 2012-08-23.

- .

Schapire (1990) proved that boosting is possible. (Page 823)

- ^ Yoav Freund and Robert E. Schapire (1997); A Decision-Theoretic Generalization of On-Line Learning and an Application to Boosting, Journal of Computer and System Sciences, 55(1):119-139

- ^ Leo Breiman (1998); Arcing Classifier (with Discussion and a Rejoinder by the Author), Annals of Statistics, vol. 26, no. 3, pp. 801-849: "The concept of weak learning was introduced by Kearns and Valiant (1988, 1989), who left open the question of whether weak and strong learnability are equivalent. The question was termed the boosting problem since [a solution must] boost the low accuracy of a weak learner to the high accuracy of a strong learner. Schapire (1990) proved that boosting is possible. A boosting algorithm is a method that takes a weak learner and converts it into a strong learner. Freund and Schapire (1997) proved that an algorithm similar to arc-fs is boosting."

- ^ a b c Llew Mason, Jonathan Baxter, Peter Bartlett, and Marcus Frean (2000); Boosting Algorithms as Gradient Descent, in S. A. Solla, T. K. Leen, and K.-R. Muller, editors, Advances in Neural Information Processing Systems 12, pp. 512-518, MIT Press

- ^ Emer, Eric. "Boosting (AdaBoost algorithm)" (PDF). MIT. Archived (PDF) from the original on 2022-10-09. Retrieved 2018-10-10.

- ^ Sivic, Russell, Efros, Freeman & Zisserman, "Discovering objects and their location in images", ICCV 2005

- ^ A. Opelt, A. Pinz, et al., "Generic Object Recognition with Boosting", IEEE Transactions on PAMI 2006

- ^ M. Marszalek, "Semantic Hierarchies for Visual Object Recognition", 2007

- ^ "Large Scale Visual Recognition Challenge". December 2017.

- ^ P. Viola, M. Jones, "Robust Real-time Object Detection", 2001

- ^ Viola, P.; Jones, M.; Snow, D. (2003). Detecting Pedestrians Using Patterns of Motion and Appearance (PDF). ICCV. Archived (PDF) from the original on 2022-10-09.

- ^ A. Torralba, K. P. Murphy, et al., "Sharing visual features for multiclass and multiview object detection", IEEE Transactions on PAMI 2006

- ^ A. Opelt, et al., "Incremental learning of object detectors using a visual shape alphabet", CVPR 2006

- ^ P. Long and R. Servedio. 25th International Conference on Machine Learning (ICML), 2008, pp. 608–615.

- (PDF) from the original on 2022-10-09. Retrieved 2015-11-17.

Further reading

- Freund, Yoav; Schapire, Robert E. (1997). "A Decision-Theoretic Generalization of On-line Learning and an Application to Boosting" (PDF). Journal of Computer and System Sciences. 55 (1): 119–139.

- Schapire, Robert E. (1990). "The strength of weak learnability". Machine Learning. 5 (2): 197–227. S2CID 6207294.

- Schapire, Robert E.; Singer, Yoram (1999). "Improved Boosting Algorithms Using Confidence-Rated Predictors". Machine Learning. 37 (3): 297–336. S2CID 2329907.

- Zhou, Zhihua (2008). "On the margin explanation of boosting algorithm" (PDF). In: Proceedings of the 21st Annual Conference on Learning Theory (COLT'08): 479–490.

- Zhou, Zhihua (2013). "On the doubt about margin explanation of boosting" (PDF). Artificial Intelligence. 203: 1–18. S2CID 2828847.

External links

- Robert E. Schapire (2003); The Boosting Approach to Machine Learning: An Overview, MSRI (Mathematical Sciences Research Institute) Workshop on Nonlinear Estimation and Classification

- , CCL 2014 Keynote.