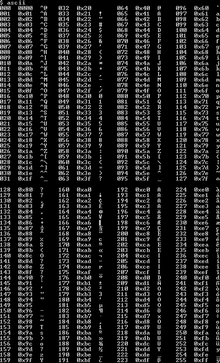

Extended ASCII

Extended ASCII is a repertoire of

The ISO standard

All modern operating systems use Unicode, which supports more than 100,000 characters. However, extended ASCII remains important in the history of computing, and supporting multiple extended ASCII character sets required software to be written in ways that made it much easier to support the UTF-8 encoding method later on.

History

ASCII was designed in the 1960s for

Seven-bit ASCII improved over prior five- and six-bit codes. Of the 2⁷ = 128 codes, 33 were used for controls, and 95 carefully selected

The ASCII character set is barely large enough for US English use, lacks many glyphs common in typesetting, and is far too small for universal use. Many more letters and symbols are desirable, useful, or required to directly represent letters of alphabets other than English, more kinds of punctuation and spacing, more mathematical operators and symbols (× ÷ ⋅ ≠ ≥ ≈ π etc.), some unique symbols used by some programming languages, ideograms, logograms, box-drawing characters, etc.

The biggest problem for computer users around the world was other alphabets. ASCII's English alphabet almost accommodates European languages, if accented letters are replaced by non-accented letters or two-character approximations such as ss for ß. Modified variants of 7-bit ASCII appeared promptly, trading some lesser-used symbols for highly desired symbols or letters, such as replacing "#" with "£" on UK Teletypes, or "\" with "¥" in Japan or "₩" in Korea. At least 29 variant sets resulted. 12 code points were modified by at least one variant set, leaving only 82 "invariant" codes. Programming languages, however, had assigned meaning to many of the replaced characters, so work-arounds were devised, such as the C three-character sequences (trigraphs) "??<" and "??>" to represent "{" and "}".[4] Languages with dissimilar basic alphabets could use transliteration, such as replacing all the Latin letters with the closest-matching Cyrillic letters (resulting in odd but somewhat readable text when English was printed in Cyrillic or vice versa). Schemes were also devised so that two letters could be overprinted (often with the backspace control between them) to produce accented letters. Users were not comfortable with any of these compromises, which were often poorly supported.

When computers and peripherals standardized on eight-bit bytes in the 1970s, it became obvious that computers and software could handle text that uses 256-character sets at almost no additional cost in programming, and no additional cost for storage (assuming that the unused 8th bit of each byte was not reused in some way, such as for error checking, Boolean fields, or packing 8 characters into 7 bytes). This would allow ASCII to be used unchanged and provide 128 more characters. Many manufacturers devised 8-bit character sets consisting of ASCII plus up to 128 of the unused codes. Encodings could thus be made that covered all the more widely used Western European (and Latin American) languages, such as Danish, Dutch, French, German, Portuguese, Spanish, and Swedish.
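The remark about packing 8 characters into 7 bytes can be illustrated with a short Python sketch. It is not taken from any historical system: the names `pack7` and `unpack7` are hypothetical, and the most-significant-bit-first layout is an assumption, since real implementations differed.

```python
def pack7(text: str) -> bytes:
    """Pack 7-bit ASCII characters into a contiguous bit stream."""
    data = text.encode("ascii")          # raises UnicodeEncodeError on non-ASCII input
    bits = 0
    for byte in data:
        bits = (bits << 7) | byte        # append 7 bits per character
    nbits = 7 * len(data)
    pad = (-nbits) % 8                   # zero bits needed to fill the last byte
    return (bits << pad).to_bytes((nbits + pad) // 8, "big")


def unpack7(packed: bytes, count: int) -> str:
    """Recover `count` characters from a stream produced by pack7."""
    bits = int.from_bytes(packed, "big")
    bits >>= len(packed) * 8 - 7 * count     # drop the padding bits
    chars = [(bits >> (7 * (count - 1 - i))) & 0x7F for i in range(count)]
    return bytes(chars).decode("ascii")


packed = pack7("EXTENDED")               # 8 characters
print(len(packed))                       # 7
print(unpack7(packed, 8))                # EXTENDED
```

A system that stored text this way had no spare eighth bit per character, so it could not adopt a 256-character set without changing its storage format.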

128 additional characters are still not enough to cover all purposes, all languages, or even all European languages, so the emergence of many proprietary and national ASCII-derived 8-bit character sets was inevitable. Translating between these sets (transcoding) is complex, especially if a character is not in both sets, and was often not done, producing mojibake (semi-readable text that users often learned to decode manually). There were eventually attempts at cooperation or coordination by national and international standards bodies in the late 1990s, but manufacturer-proprietary sets remained the most popular by far, primarily because the international standards excluded characters popular in or peculiar to specific cultures.
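As a rough illustration of transcoding, the following Python sketch converts bytes between two 8-bit sets using the standard codecs. The helper name `transcode` is hypothetical, and the choice of code page 437 and ISO 8859-1 (Python's `latin-1`) as the pair is only an example.

```python
def transcode(data: bytes, src: str, dst: str) -> bytes:
    """Re-encode bytes from one 8-bit character set to another.

    Characters missing from the target set become '?' (errors="replace").
    """
    return data.decode(src).encode(dst, errors="replace")


# "é" exists in both sets, but at different code points.
print(transcode(b"\x82", "cp437", "latin-1"))   # b'\xe9'

# The box-drawing character at 0xCD in code page 437 has no Latin-1 equivalent.
print(transcode(b"\xcd", "cp437", "latin-1"))   # b'?'
```

The second call shows why transcoding is lossy when a character is not in both sets: some substitute has to be chosen, and the original cannot be recovered afterwards.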

Proprietary extensions

Various proprietary modifications and extensions of ASCII appeared on non-EBCDIC mainframe computers and minicomputers, especially in universities.

Atari and Commodore home computers added many graphic symbols to their non-standard ASCII variants (ATASCII and PETSCII respectively, based on the original ASCII standard of 1963).

The TRS-80 character set for the TRS-80 home computer added 64 semigraphics characters (0x80 through 0xBF) that implemented low-resolution block graphics. (Each block-graphic character displayed as a 2x3 grid of pixels, with each block pixel effectively controlled by one of the lower 6 bits.)[5]
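The way six bits select cells in a 2×3 grid can be sketched in Python. The function name `render_semigraphic` is hypothetical, and the bit-to-cell mapping used here (bit 0 top left, bit 1 top right, continuing row by row) is an assumption that should be checked against TRS-80 documentation.

```python
def render_semigraphic(code: int) -> list[str]:
    """Return three text rows (two cells each) for a code in 0x80..0xBF."""
    if not 0x80 <= code <= 0xBF:
        raise ValueError("not a semigraphics code")
    pattern = code & 0x3F                        # lower 6 bits select the lit cells
    rows = []
    for row in range(3):
        left = (pattern >> (2 * row)) & 1        # assumed: even bits are left cells
        right = (pattern >> (2 * row + 1)) & 1   # assumed: odd bits are right cells
        rows.append(("#" if left else ".") + ("#" if right else "."))
    return rows


print("\n".join(render_semigraphic(0x89)))
# #.
# .#
# ..
```

Under this mapping, 0x80 is an empty cell and 0xBF is fully lit, which accounts for all 64 combinations.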

ISO 8859

In 1987, the

One notable way in which the ISO standards differ from some vendor-specific extended ASCII is that the 32 character positions 0x80 to 0x9F, which correspond to the ASCII control characters with the high-order bit set, are reserved by ISO for control use and unused for printable characters (they are also reserved in Unicode[6]). This convention was almost universally ignored by other extended ASCII sets.
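The difference can be observed with Python's bundled codecs. This sketch counts how many of the 32 positions decode to something other than a control character. The function name `printable_in_c1_range` is hypothetical, and the result reflects Python's codec tables rather than the standards documents themselves.

```python
import unicodedata


def printable_in_c1_range(encoding: str) -> int:
    """Count bytes 0x80..0x9F that decode to a non-control character."""
    count = 0
    for value in range(0x80, 0xA0):
        try:
            char = bytes([value]).decode(encoding)
        except UnicodeDecodeError:
            continue                      # position left undefined by this code page
        if unicodedata.category(char) != "Cc":
            count += 1
    return count


print(printable_in_c1_range("latin-1"))   # 0: all 32 positions are controls
print(printable_in_c1_range("cp437"))     # 32: every position holds a glyph
print(printable_in_c1_range("cp1252"))    # nonzero: glyphs such as the euro sign
```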

Windows-1252

Microsoft intended to use ISO 8859 standards in Windows,[

Character set confusion

The meaning of each extended code point can be different in every encoding. In order to correctly interpret and display text data (sequences of characters) that includes extended codes, hardware and software that reads or receives the text must use the specific extended ASCII encoding that applies to it. Applying the wrong encoding causes irrational substitution of many or all extended characters in the text.
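A single byte is enough to show the effect. The Python sketch below decodes the same extended code point under four encodings. The helper name `interpretations` is hypothetical, and the four encodings are an arbitrary sample of those shipped with Python.

```python
def interpretations(raw: bytes, encodings: tuple[str, ...]) -> dict[str, str]:
    """Decode the same bytes under each named encoding."""
    return {name: raw.decode(name) for name in encodings}


result = interpretations(b"\xe9", ("latin-1", "cp437", "koi8-r", "mac-roman"))
for name, char in result.items():
    print(f"{name:10} {char}")
# latin-1    é
# cp437      Θ
# koi8-r     И
# mac-roman  È
```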

Software can use a fixed encoding selection, or it can select from a palette of encodings by defaulting, checking the computer's nation and language settings, reading a declaration in the text, analyzing the text, asking the user, letting the user select or override, and/or defaulting to last selection. When text is transferred between computers that use different operating systems, software, and encodings, applying the wrong encoding can be commonplace.
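A few of these strategies can be combined into a simple priority order, sketched below in Python. The function `choose_encoding`, its parameters, and the `cp1252` default are all hypothetical. Real software typically uses more elaborate analysis, and a successful decode does not prove that the label is right.

```python
from typing import Optional


def choose_encoding(data: bytes, declared: Optional[str] = None,
                    fallback: str = "cp1252") -> str:
    """Pick an encoding name for `data` using a fixed priority order."""
    candidates = []
    if declared:
        candidates.append(declared)        # 1. a declaration in the text comes first
    candidates.append("ascii")             # 2. analysis: pure 7-bit text needs no guess
    for name in candidates:
        try:
            data.decode(name)
            return name
        except (UnicodeDecodeError, LookupError):
            continue                       # wrong or unknown label: keep looking
    return fallback                        # 3. configured or locale-derived default


print(choose_encoding(b"hello"))                         # ascii
print(choose_encoding(b"caf\xe9"))                       # cp1252
print(choose_encoding(b"caf\xe9", declared="latin-1"))   # latin-1
```

Because almost every byte sequence decodes without error in most 8-bit encodings, a declaration or user override carries more weight here than any check the code can perform.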

Because the full English alphabet and the most-used characters in English are included in the seven-bit code points of ASCII, which are common to all encodings (even most proprietary encodings), English-language text is less damaged by interpreting it with the wrong encoding, but text in other languages can display as mojibake (complete nonsense). Because many Internet standards use ISO 8859-1, and because Microsoft Windows (using the code page 1252 superset of ISO 8859-1) is the dominant operating system for personal computers, unannounced use of ISO 8859-1 is quite commonplace, and may generally be assumed unless there are indications otherwise.
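The difference in damage can be measured directly. The Python sketch below counts how many characters change when text written in one 8-bit encoding is read in another. The function name `damaged` and the sample strings are illustrative assumptions.

```python
def damaged(text: str, written_as: str, read_as: str) -> int:
    """Count characters that change when bytes are read with the wrong encoding."""
    misread = text.encode(written_as).decode(read_as, errors="replace")
    return sum(a != b for a, b in zip(text, misread))


# Pure ASCII text survives the mismatch untouched.
print(damaged("The quick brown fox", "cp437", "latin-1"))   # 0

# Every non-ASCII letter (ü, ß, ö) is misread.
print(damaged("Grüße aus Köln", "cp437", "latin-1"))        # 3
```

The position-by-position comparison works only because both encodings use exactly one byte per character.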

Many

-assigned character set identifiers.

See also

References

1. Benjamin Riefenstahl (26 February 2001). "Re: Cygwin Termcap information involving extended ascii charicters". cygwin (Mailing list). Archived from the original on 11 July 2013. Retrieved 2 December 2012.

2. S. Wolicki (23 March 2012). "Print Extended ASCII Codes in sql*plus". Retrieved 17 May 2022.

3. Mark J. Reed (28 March 2004). "vim: how to type extended-ascii?". Newsgroup: comp.editors. Retrieved 17 May 2022.

4. "2.2.1.1 Trigraph sequences". Rationale for American National Standard for Information Systems - Programming Language - C. Archived from the original on 29 September 2018. Retrieved 8 February 2019.

5. Goldklang, Ira (2015). "Graphic Tips & Tricks". Archived from the original on 29 July 2017. Retrieved 29 July 2017.

6. "C1 Controls and Latin-1 Supplement | Range: 0080–00FF" (PDF). The Unicode Standard, Version 15.1. Unicode Consortium.

7. "HTML Character Sets". W3 Schools. Quote: "When a browser detects ISO-8859-1 it normally defaults to Windows-1252, because Windows-1252 has 32 more international characters."

8. "Encoding". WHATWG. 27 January 2015. sec. 5.2 Names and labels. Archived from the original on 4 February 2015. Retrieved 4 February 2015.