Brain-reading

Brain-reading or thought identification uses the responses of multiple

In 2007, professor of neuropsychology Barbara Sahakian qualified, "A lot of neuroscientists in the field are very cautious and say we can't talk about reading individuals' minds, and right now that is very true, but we're moving ahead so rapidly, it's not going to be that long before we will be able to tell whether someone's making up a story, or whether someone intended to do a crime with a certain degree of certainty."[2]

Applications

Natural images

Complex natural images can be identified using voxels from early visual cortex and from the anterior visual areas forward of it (visual areas V3A, V3B, V4, and the lateral occipital area), together with Bayesian inference. This brain-reading approach uses three components: a structural encoding model that characterizes responses in early visual areas; a semantic encoding model that characterizes responses in anterior visual areas; and a Bayesian prior that describes the distribution of structural and semantic scene statistics.[3]
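The way the three components combine can be illustrated with a toy example. The following is a minimal sketch, not the published method: the candidate images, the linear structural model (`W_STRUCT`), the per-category semantic model (`SEM_MEANS`), the Gaussian noise assumption, and the uniform prior are all hypothetical stand-ins chosen for illustration. It scores each candidate image by the sum of two log-likelihoods (one per encoding model) and a log-prior, then returns the candidate with the highest posterior.

```python
import math
import random

random.seed(0)

CATEGORIES = ["animal", "building", "text"]

# Hypothetical candidate images: a few structural features plus a semantic label.
candidates = [
    {"id": i,
     "structure": [random.gauss(0, 1) for _ in range(4)],
     "category": random.choice(CATEGORIES)}
    for i in range(50)
]

# Assumed structural encoding model: linear map from 4 features to 6 early-visual voxels.
W_STRUCT = [[random.gauss(0, 1) for _ in range(4)] for _ in range(6)]
# Assumed semantic encoding model: each category has a mean response in 3 anterior voxels.
SEM_MEANS = {c: [random.gauss(0, 1) for _ in range(3)] for c in CATEGORIES}


def predict_early(img):
    """Predicted early-visual voxel responses from structural features."""
    return [sum(w * f for w, f in zip(row, img["structure"])) for row in W_STRUCT]


def predict_anterior(img):
    """Predicted anterior voxel responses from the semantic category."""
    return SEM_MEANS[img["category"]]


def gaussian_loglik(observed, predicted, sd):
    """Gaussian log-likelihood, up to an additive constant."""
    return -sum((o - p) ** 2 for o, p in zip(observed, predicted)) / (2 * sd ** 2)


def log_prior(img):
    """Uniform prior over candidates (an assumption; a real prior would
    reflect structural and semantic scene statistics)."""
    return -math.log(len(candidates))


def log_posterior(img, early, anterior, sd=0.5):
    """Unnormalized log posterior: two likelihood terms plus the prior."""
    return (gaussian_loglik(early, predict_early(img), sd)
            + gaussian_loglik(anterior, predict_anterior(img), sd)
            + log_prior(img))


def decode(early, anterior):
    """Return the candidate image with the highest posterior."""
    return max(candidates, key=lambda img: log_posterior(img, early, anterior))


# Simulate noisy voxel responses to one candidate and try to recover it.
target = candidates[17]
early = [r + random.gauss(0, 0.1) for r in predict_early(target)]
anterior = [r + random.gauss(0, 0.1) for r in predict_anterior(target)]
print("decoded image id:", decode(early, anterior)["id"])
```

In this sketch the prior is flat, so decoding reduces to maximum likelihood; replacing `log_prior` with a non-uniform function is where knowledge about natural scenes would enter.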

Experimentally the procedure is for subjects to view 1750

In 2008 IBM applied for a patent on how to extract mental images of human faces from the human brain. It uses a feedback loop based on brain measurements of the fusiform gyrus, an area of the brain which activates in proportion to the degree of facial recognition.[4]

In 2011, a team led by Shinji Nishimoto used only brain recordings to partially reconstruct what volunteers were seeing. The researchers applied a new model of how moving-object information is processed in the human brain while volunteers watched clips from several videos. An algorithm then searched through thousands of hours of external YouTube footage (none of it the same as the videos the volunteers watched) to select the clips that were most similar.[5][6] The authors have uploaded demos comparing the watched and the computer-estimated videos.[7][8]

In 2017 a face perception study in monkeys reported the reconstruction of human faces by analyzing electrical activity from 205 neurons.[9][10]

In 2023 image reconstruction was reported utilizing Stable Diffusion on human brain activity obtained via fMRI.[11][12]

Lie detector

Brain-reading has been suggested as an alternative to

A number of concerns have been raised about the accuracy and ethical implications of brain-reading for this purpose. Laboratory studies have found accuracy rates of up to 85%; however, there are concerns about what this means for false-positive results among non-criminal populations: "If the prevalence of 'prevaricators' in the group being examined is low, the test will yield far more false-positive than true-positive results; about one person in five will be incorrectly identified by the test."[13] Ethical problems involved in the use of brain-reading as lie detection include misapplication due to adoption of the technology before its reliability and validity can be properly assessed, misapplication due to misunderstanding of the technology, and privacy concerns due to unprecedented access to individuals' private thoughts.[13] However, it has been noted that polygraph lie detection carries similar concerns about the reliability of the results[13] and violation of privacy.[15]
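The base-rate concern can be made concrete with a short calculation. The figures below are illustrative assumptions, not values from the cited source: the test is assumed to have both sensitivity and specificity of 85% (the source gives only an accuracy of "up to 85%"), and the prevalence of liars in the screened group is set to a hypothetical 1%.

```python
def screening_outcomes(population, prevalence, sensitivity, specificity):
    """Expected true positives, false positives, and positive predictive value."""
    liars = population * prevalence
    truthful = population - liars
    true_pos = liars * sensitivity            # liars correctly flagged
    false_pos = truthful * (1 - specificity)  # truthful people wrongly flagged
    ppv = true_pos / (true_pos + false_pos)   # share of flagged people who are liars
    return true_pos, false_pos, ppv


# Hypothetical scenario: 10,000 people screened, 1% of whom are lying.
tp, fp, ppv = screening_outcomes(10_000, 0.01, 0.85, 0.85)
print(f"true positives: {tp:.0f}, false positives: {fp:.0f}, PPV: {ppv:.1%}")
# prints "true positives: 85, false positives: 1485, PPV: 5.4%"
```

Under these assumptions, false positives outnumber true positives by more than seventeen to one, so only about one flagged person in twenty is actually lying.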

Human–machine interfaces

Brain-reading has also been proposed as a method of improving

Detecting attention

It is possible to track from fMRI signals which of two forms of rivalrous binocular illusion a person is subjectively experiencing.[19]

When humans think of an object, such as a screwdriver, many different areas of the brain activate. Marcel Just and his colleague, Tom Mitchell, have used fMRI brain scans to teach a computer to identify the various parts of the brain associated with specific thoughts.[20] This technology also yielded a discovery: similar thoughts in different human brains are surprisingly similar neurologically. To illustrate this, Just and Mitchell used their computer to predict, based on nothing but fMRI data, which of several images a volunteer was thinking about. The computer was 100% accurate, but so far the machine is only distinguishing between 10 images.[20]

Detecting thoughts

The category of event which a person freely recalls can be identified from fMRI before they say what they remembered.[21]

December 16, 2015, a study conducted by Toshimasa Yamazaki at

In 2023, the

Detecting language

Statistical analysis of

On 31 January 2012 Brian Pasley and colleagues of University of California Berkeley published their paper in

Predicting intentions

Some researchers in 2008 were able to predict, with 60% accuracy, whether a subject was going to push a button with their left or right hand. This is notable, not just because the accuracy is better than chance, but also because the scientists were able to make these predictions up to 10 seconds before the subject acted – well before the subject felt they had decided.[32] This data is even more striking in light of other research suggesting that the decision to move, and possibly the ability to cancel that movement at the last second,[33] may be the results of unconscious processing.[34]

John-Dylan Haynes has also demonstrated that fMRI can be used to identify whether a volunteer is about to add or subtract two numbers in their head.[20]

Predictive processing in the brain

Neural decoding techniques have been used to test theories about the

Virtual environments

It has also been shown that brain-reading can be achieved in a complex virtual environment.[37]

Emotions

Just and Mitchell also claim they are beginning to be able to identify kindness, hypocrisy, and love in the brain.[20]

Security

In 2013 a project led by University of California Berkeley professor John Chuang published findings on the feasibility of brainwave-based computer authentication as a substitute for passwords. The use of biometrics for computer authentication has continually improved since the 1980s, but this research team was looking for a method faster and less intrusive than today's retina scans, fingerprinting, and voice recognition. The technology chosen to improve security measures is an

John-Dylan Haynes states that fMRI can also be used to identify recognition in the brain. He provides the example of a criminal being interrogated about whether he recognizes the scene of the crime or murder weapons.[20]

Methods of analysis

Classification

In classification, a pattern of activity across multiple voxels is used to determine the particular class from which the stimulus was drawn.[39] Many studies have classified visual stimuli, but this approach has also been used to classify cognitive states.[citation needed]
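As a minimal sketch of the idea, the example below uses a nearest-centroid classifier, which is only one of many possible classifiers and is not claimed to be the one used in the cited studies. The class labels and the three-voxel activity patterns are made-up values for illustration.

```python
def centroid(patterns):
    """Mean activity pattern across a list of equal-length voxel patterns."""
    n = len(patterns)
    return [sum(column) / n for column in zip(*patterns)]


def squared_distance(a, b):
    """Squared Euclidean distance between two voxel patterns."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def train(labelled_patterns):
    """Compute one centroid per stimulus class."""
    return {label: centroid(patterns) for label, patterns in labelled_patterns.items()}


def classify(model, pattern):
    """Assign a new pattern to the class with the nearest centroid."""
    return min(model, key=lambda label: squared_distance(model[label], pattern))


# Hypothetical training data: activity in three voxels for two stimulus classes.
training = {
    "face": [[1.0, 0.2, 0.1], [0.9, 0.1, 0.3]],
    "house": [[0.1, 0.8, 0.9], [0.2, 1.0, 0.7]],
}
model = train(training)
print(classify(model, [0.8, 0.3, 0.2]))  # prints "face"
```

Real analyses involve many more voxels and trials, and are typically evaluated on held-out data so that the reported accuracy reflects generalization rather than memorization of the training patterns.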

Reconstruction

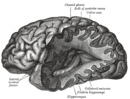

In reconstruction brain reading the aim is to create a literal picture of the image that was presented. Early studies used voxels from early visual cortex areas (V1, V2, and V3) to reconstruct geometric stimuli made up of flickering checkerboard patterns.[40][41]

EEG

EEG has also been used to identify recognition of specific information or memories by the P300 event related potential, which has been dubbed 'brain fingerprinting'.[42]

Accuracy

Brain-reading accuracy is increasing steadily as the quality of the data and the complexity of the decoding algorithms improve. In one recent experiment it was possible to identify which single image was being seen from a set of 120.[43] In another it was possible to correctly identify 90% of the time which of two categories the stimulus came from, and the specific semantic category (out of 23) of the target image 40% of the time.[3]
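These figures are easier to interpret against the accuracy expected from guessing. The short calculation below assumes that all options are equally likely, which is an assumption made here for illustration and not something the cited studies state.

```python
def chance_level(n_options):
    """Expected accuracy of random guessing among equally likely options."""
    return 1 / n_options


# (task, number of options, reported accuracy from the text above)
tasks = [
    ("two-category decision", 2, 0.90),
    ("semantic category out of 23", 23, 0.40),
]

for name, n_options, reported in tasks:
    chance = chance_level(n_options)
    print(f"{name}: chance {chance:.1%}, reported {reported:.0%}, "
          f"{reported / chance:.1f} times chance")

# No accuracy is quoted above for the 120-image task, so only chance is shown.
print(f"single image out of 120: chance {chance_level(120):.2%}")
```

On this basis the 40% figure for 23 categories is further above chance, in relative terms, than the 90% figure for two categories.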

Limitations

It has been noted that so far brain-reading is limited. "In practice, exact reconstructions are impossible to achieve by any reconstruction algorithm on the basis of brain activity signals acquired by fMRI. This is because all reconstructions will inevitably be limited by inaccuracies in the encoding models and noise in the measured signals. Our results [who?] demonstrate that the natural image prior is a powerful (if unconventional) tool for mitigating the effects of these fundamental limitations. A natural image prior with only six million images is sufficient to produce reconstructions that are structurally and semantically similar to a target image."[3]

Ethical issues

With

In countries outside the United States, thought identification has already been used in criminal law. In 2008 an Indian woman was convicted of murder after an EEG of her brain allegedly revealed that she was familiar with the circumstances surrounding the poisoning of her ex-fiancé.[46] Some neuroscientists and legal scholars doubt the validity of using thought identification as a whole for anything past research on the nature of deception and the brain.[47]

The Economist cautioned people to be "afraid" of the future impact, and some ethicists argue that privacy laws should protect private thoughts. Legal scholar Hank Greely argues that the court systems could benefit from such technology, and neuroethicist Julian Savulescu states that brain data is not fundamentally different from other types of evidence.[48] In Nature, journalist Liam Drew writes about emerging projects to attach brain-reading devices to speech synthesizers or other output devices for the benefit of tetraplegics. Such devices could create concerns of accidentally broadcasting the patient's "inner thoughts" rather than merely conscious speech.[49]

History

Psychologist

fMRI has allowed research to expand significantly because it can track activity in an individual's brain by measuring blood flow. It is currently thought to be the best method for measuring brain activity, which is why it has been used in many experiments to improve understanding of how doctors and psychologists can identify thoughts.[50]

In a 2020 study, AI using implanted electrodes could correctly transcribe a sentence read aloud from a fifty-sentence test set 97% of the time, given 40 minutes of training data per participant.[51]

Future research

Experts are unsure of how far thought identification can expand, but Marcel Just believed in 2014 that within 3–5 years there would be a machine able to read complex thoughts such as 'I hate so-and-so'.[46]

Donald Marks, founder and chief science officer of MMT, is working on playing back individuals' thoughts after they have been recorded.[52]

Researchers at the University of California Berkeley have already been successful in forming, erasing, and reactivating memories in rats. Marks says they are working on applying the same techniques to humans. This discovery could be monumental for war veterans who suffer from

Further research is also being done on analyzing brain activity during video games to detect criminals, on neuromarketing, and on using brain scans in government security checks.[46][50]

In popular culture

Episode Black Hole of American medical drama

See also

- Bayesian approaches to brain function

- Cyberware

- Mind uploading

- Minority Report (film)

- Neural decoding

- Neuroinformatics

- Thoughtcrime

- Thought recording and reproduction device

References

- ^ S2CID 16025026. Retrieved 8 December 2014.

- ^ a b Sample, Ian (9 February 2007). "The brain scan that can read people's intentions". The Guardian.

- ^ PMID 19778517.

- ^ "IBM Patent Application US 2010/0049076: Retrieving mental images of faces from the human brain" (PDF). 25 February 2010.

- ^ PMID 21945275

- ^ a b "Breakthrough Could Enable Others to Watch Your Dreams and Memories [Video], Philip Yam". Archived from the original on 6 May 2017. Retrieved 6 May 2021.

- ^ a b Nishimoto et al. 2011 uploaded video 1, "Movie reconstruction from human brain activity", on YouTube

- ^ a b Nishimoto et al. 2011 uploaded video 2, "Movie reconstructions from human brain activity: 3 subjects" ("Nishimoto.etal.2011.3Subjects.mpeg"), on YouTube

- S2CID 32432231.

- ISSN 0261-3077. Retrieved 26 March 2023.

- )

- ^ "AI re-creates what people see by reading their brain scans". www.science.org. Retrieved 26 March 2023.

- ^ S2CID 219640810.

- PMID 23869200.

- .

- PMID 24358125.

- ^ "Surge in U.S. 'brain-reading' patents". BBC News. 7 May 2015.

- ^ Le, Tan (2010). "A headset that reads your brainwaves". TEDGlobal.

- S2CID 6456352.

- ^ a b c d e "'60 Minutes' video: Tech that reads your mind". CNET News. 5 January 2009.

- PMID 16373577.

- ^ Ito, Takashi; Yamaguchi, Hiromi; Yamaguchi, Ayaka; Yamazaki, Toshimasa; Fukuzumi, Shin'Ichi; Yamanoi, Takahiro (16 December 2015). "Silent Speech BCI – An investigation for practical problems". IEICE Technical Report. 114 (514). IEICE Technical Committee: 81–84. Retrieved 17 January 2016.

- ^ Danigelis, Alyssa (7 January 2016). "Mind-Reading Computer Knows What You're About to Say". Discovery News. Retrieved 17 January 2016.

- ^ "頭の中の言葉 解読 障害者と意思疎通、ロボット操作も 九工大・山崎教授ら" (in Japanese). Nishinippon Shimbun. 4 January 2016. Archived from the original on 17 January 2016. Retrieved 17 January 2016.

- ^ O'Sullivan, Donie (23 May 2023). "How the technology behind ChatGPT could make mind-reading a reality". CNN. Retrieved 17 June 2023.

- S2CID 252684880.

- S2CID 18097705.

- PMID 10588761.

- PMID 22303281.

- ^ Palmer, Jason (31 January 2012). "Science decodes 'internal voices'". BBC News.

- ^ a b "Secrets of the inner voice unlocked". NHS. 1 February 2012. Archived from the original on 3 February 2012.

- S2CID 2652613.

- S2CID 9086887.

- PMID 19046374.

- PMID 22841311.

- PMID 30327503.

- PMID 20348000.

- ^ "New Research: Computers That Can Identify You by Your Thoughts". UC Berkeley School of Information. UC Berkeley. 3 April 2013. Retrieved 8 December 2014.

- PMID 15852014.

- S2CID 17327816.

- S2CID 13361917.

- PMID 23869200.

- PMID 18322462.

- .

- ^ Brennan-Marquez, Kiel (2012). "A modest defense of mind reading". Yale Journal of Law & Technology. Yale University. p. 214.

Ronald Allen and Kristen Mace discern 'universal agreement' that the (Mind Reader Machine) is unacceptable.

- ^ a b c d "How Technology May Soon "Read" Your Mind". CBS News. 31 December 2008. Retrieved 8 December 2014.

- ^ Stix, Gary (1 August 2008). "Can fMRI Really Tell If You're Lying?". Scientific American. Retrieved 8 December 2014.

- PMID 24153277.

- PMID 31341310.

- ^ a b Saenz, Aaron (17 March 2010). "fMRI Reads the Images in Your Brain – We Know What You're Looking At". SingularityHUB. Singularity University. Retrieved 8 December 2014.

- ^ Davis, Nicola (30 March 2020). "Scientists develop AI that can turn brain activity into text". The Guardian. Retrieved 31 March 2020.

- ^ a b Cuthbertson, Anthony (29 August 2014). "Mind Reader: Meet The Man Who Records and Stores Your Thoughts, Dreams and Memories". International Business Times. Retrieved 8 December 2014.

External links

- Brain scanners can tell what you're thinking about New Scientist article on brain-reading 28 October 2009

- 2007 Pittsburgh Brain Activity Interpretation Competition:Interpreting subject-driven actions and sensory experience in a rigorously characterized virtual world

- Mind-reading tech is closer than you think, 2022 BBC video