History of statistics

In early times, the meaning was restricted to information about states, particularly

Introduction

By the 18th century, the term "

The term "

Applied statistics can be regarded as not a field of

Etymology

The term statistics is ultimately derived from the

Origins in probability theory

Basic forms of statistics have been used since the beginning of civilization. Early empires often collated censuses of the population or recorded the trade in various commodities. The Han dynasty and the Roman Empire were some of the first states to extensively gather data on the size of the empire's population, geographical area and wealth.

The use of statistical methods dates back to at least the 5th century BCE. The historian Thucydides in his History of the Peloponnesian War[2] describes how the Athenians calculated the height of the wall of Plataea by counting the number of bricks in an unplastered section of the wall that was near enough for the bricks to be counted. The count was repeated several times by a number of soldiers. The most frequent value so determined (in modern terminology, the mode) was taken to be the most likely value of the number of bricks. Multiplying this value by the height of the bricks used in the wall allowed the Athenians to determine the height of the ladders necessary to scale the walls.[citation needed]
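The Athenians' procedure can be sketched in a few lines of Python. This is only an illustration: the brick counts and the brick height below are hypothetical values chosen for the example, not figures from Thucydides, and `ladder_height` is a name invented here.

```python
from statistics import mode

def ladder_height(brick_counts, brick_height):
    """Estimate wall height as (most frequent count) x (height of one brick)."""
    likely_count = mode(brick_counts)  # most frequent value among the repeated counts
    return likely_count * brick_height

# Hypothetical counts reported by several soldiers (not from the source).
counts = [41, 42, 42, 43, 42, 41, 42]

# Hypothetical brick height in metres (not from the source).
estimate = ladder_height(counts, 0.25)
print(estimate)
```

Taking the mode rather than the mean means that a single badly miscounted tally does not shift the estimate at all.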

The Trial of the Pyx is a test of the purity of the coinage of the Royal Mint that has been held on a regular basis since the 12th century. The Trial itself is based on statistical sampling methods. After minting a series of coins (originally from ten pounds of silver), a single coin was placed in the Pyx, a box in Westminster Abbey. After a given period (now once a year), the coins are removed and weighed. A sample of coins removed from the box is then tested for purity.

The Nuova Cronica, a 14th-century history of Florence by the Florentine banker and official Giovanni Villani, includes much statistical information on population, ordinances, commerce and trade, education, and religious facilities and has been described as the first introduction of statistics as a positive element in history,[3] though neither the term nor the concept of statistics as a specific field yet existed.

The arithmetic mean, although a concept known to the Greeks, was not generalised to more than two values until the 16th century. The introduction of decimal fractions by Simon Stevin in 1585 seems likely to have facilitated these calculations. The mean was first adopted in astronomy by Tycho Brahe, who was attempting to reduce the errors in his estimates of the locations of various celestial bodies.

The idea of the median originated in Edward Wright's book on navigation (Certaine Errors in Navigation) in 1599, in a section concerning the determination of location with a compass. Wright felt that this value was the most likely to be the correct value in a series of observations. The difference between the mean and the median was noticed in 1669 by Christiaan Huygens in the context of using Graunt's tables.[4]

The term 'statistic' was introduced by the Italian scholar

Although the original scope of statistics was limited to data useful for governance, the approach was extended to many fields of a scientific or commercial nature during the 19th century. The mathematical foundations for the subject heavily drew on the new

A key early application of statistics in the 18th century was to the

The formal study of

In 1755 Roger Joseph Boscovich, drawing on his work on the shape of the earth, proposed in his book De Litteraria expeditione per pontificiam ditionem ad dimetiendos duos meridiani gradus a PP. Maire et Boscovich that the true value of a series of observations would be that which minimises the sum of absolute errors. In modern terminology this value is the median. The first example of what later became known as the normal curve was studied by Abraham de Moivre, who plotted this curve on November 12, 1733.[14] De Moivre was studying the number of heads that occurred when a 'fair' coin was tossed.
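The statement that the value minimising the sum of absolute errors is the median can be checked numerically. The sketch below uses a made-up set of observations and a simple grid search; both the data and the helper name `sum_abs_errors` are assumptions for illustration only.

```python
from statistics import mean, median

def sum_abs_errors(candidate, observations):
    """Total absolute error if `candidate` is taken as the true value."""
    return sum(abs(x - candidate) for x in observations)

# Hypothetical observations, including one large outlier.
observations = [2.0, 3.0, 5.0, 9.0, 40.0]

# Search candidate values 0.0, 0.1, ..., 40.0 for the smallest total absolute error.
grid = [i / 10 for i in range(0, 401)]
best = min(grid, key=lambda c: sum_abs_errors(c, observations))

print(best, median(observations), mean(observations))
```

With an odd number of distinct observations the minimiser is unique and coincides with the median; the mean, pulled upward by the outlier, gives a larger total absolute error.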

In 1763 Richard Price transmitted to the Royal Society Thomas Bayes's proof of a rule for using a binomial distribution to calculate a posterior probability on a prior event.

In 1765 Joseph Priestley invented the first timeline charts.

Johann Heinrich Lambert in his 1765 book Anlage zur Architectonic proposed the semicircle as a distribution of errors:

with -1 < x < 1.

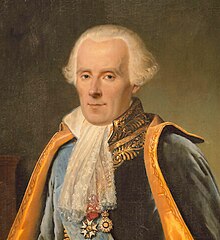
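As a sketch only: if a semicircle error law on this interval is normalised so that its total probability is one (an assumption made here, since the constant Lambert used is not shown above), the density takes the form

$$
f(x) = \frac{2}{\pi}\sqrt{1 - x^{2}}, \qquad -1 < x < 1,
$$

because the area under $\sqrt{1 - x^{2}}$ over $(-1, 1)$ is that of a half disc of radius one, $\pi/2$.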

Pierre-Simon Laplace (1774) made the first attempt to deduce a rule for the combination of observations from the principles of the theory of probabilities. He represented the law of probability of errors by a curve and deduced a formula for the mean of three observations.

Laplace in 1774 noted that the frequency of an error could be expressed as an exponential function of its magnitude once its sign was disregarded.[15][16] This distribution is now known as the Laplace distribution. Lagrange proposed a parabolic fractal distribution of errors in 1776.

Laplace in 1778 published his second law of errors, wherein he noted that the frequency of an error was proportional to the exponential of the negative square of its magnitude. This was subsequently rediscovered by

Lagrange also suggested in 1781 two other distributions for errors - a raised cosine distribution and a logarithmic distribution.

Laplace gave (1781) a formula for the law of facility of error (a term due to

In 1786 William Playfair (1759-1823) introduced the idea of graphical representation into statistics. He invented the line chart, bar chart and histogram and incorporated them into his works on economics, the Commercial and Political Atlas. This was followed in 1795 by his invention of the pie chart and circle chart which he used to display the evolution of England's imports and exports. These latter charts came to general attention when he published examples in his Statistical Breviary in 1801.

Laplace, in an investigation of the motions of Saturn and Jupiter in 1787, generalized Mayer's method by using different linear combinations of a single group of equations.

In 1791 Sir John Sinclair introduced the term 'statistics' into English in his Statistical Accounts of Scotland.

In 1802 Laplace estimated the population of France to be 28,328,612.[19] He calculated this figure using the number of births in the previous year and census data for three communities. The census data of these communities showed that they had 2,037,615 persons and that the number of births was 71,866. Assuming that these samples were representative of France, Laplace produced his estimate for the entire population.
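The reasoning behind this kind of estimate is a ratio calculation: the number of persons per birth observed in the sampled communities is multiplied by the number of births in the whole country. The sketch below uses the two sample figures quoted above; the national birth count is not given in the text, so the value passed in is a hypothetical placeholder, and `ratio_estimate` is a name invented for this example.

```python
def ratio_estimate(sample_population, sample_births, total_births):
    """Scale total births by the persons-per-birth ratio seen in the sample."""
    persons_per_birth = sample_population / sample_births
    return persons_per_birth * total_births

# Sample figures quoted in the text.
sample_population = 2_037_615
sample_births = 71_866

# Persons per birth in the sampled communities (roughly 28.35).
persons_per_birth = sample_population / sample_births

# Hypothetical national birth count, for illustration only (not from the source).
hypothetical_total_births = 1_000_000
print(round(ratio_estimate(sample_population, sample_births, hypothetical_total_births)))
```

The estimate is only as good as the assumption that the sampled communities have the same persons-per-birth ratio as the country as a whole.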

The

The method of least squares was preceded by the use of a median regression slope, a method that minimizes the sum of the absolute deviations. A way of estimating this slope was invented by Roger Joseph Boscovich in 1760, who applied it to astronomy.

The term probable error (der wahrscheinliche Fehler), the median deviation from the mean, was introduced in 1815 by the German astronomer Friedrich Wilhelm Bessel. Antoine Augustin Cournot in 1843 was the first to use the term median (valeur médiane) for the value that divides a probability distribution into two equal halves.

Other contributors to the theory of errors were Ellis (1844), De Morgan (1864), Glaisher (1872), and Giovanni Schiaparelli (1875).[citation needed] Peters's (1856) formula for the "probable error" of a single observation was widely used and inspired early robust statistics (resistant to outliers: see Peirce's criterion).

In the 19th century authors on

The first tests of the normal distribution were invented by the German statistician Wilhelm Lexis in the 1870s. The only data sets available to him that he was able to show were normally distributed were birth rates.

Development of modern statistics

Although the origins of statistical theory lie in the 18th-century advances in probability, the modern field of statistics only emerged in the late 19th and early 20th centuries, in three stages. The first wave, at the turn of the century, was led by the work of Francis Galton and Karl Pearson, who transformed statistics into a rigorous mathematical discipline used for analysis, not just in science, but in industry and politics as well. The second wave, in the 1910s and 1920s, was initiated by William Sealy Gosset and reached its culmination in the insights of Ronald Fisher. This involved the development of better models for the design of experiments, hypothesis testing and techniques for use with small data samples. The final wave, which mainly saw the refinement and expansion of earlier developments, emerged from the collaborative work between Egon Pearson and Jerzy Neyman in the 1930s.[23] Today, statistical methods are applied in all fields that involve decision making, both for drawing accurate inferences from a collated body of data and for making decisions in the face of uncertainty.

The first statistical bodies were established in the early 19th century. The Royal Statistical Society was founded in 1834, and Florence Nightingale, its first female member, pioneered the application of statistical analysis to health problems for the furtherance of epidemiological understanding and public health practice. However, the methods then used would not be considered modern statistics today.

The

The Norwegian

Francis Galton is credited as one of the principal founders of statistical theory. His contributions to the field included introducing the concepts of standard deviation, correlation and regression, and applying these methods to the study of a variety of human characteristics: height, weight and eyelash length, among others. He found that many of these could be fitted to a normal distribution.[27]

Galton submitted a paper to Nature in 1907 on the usefulness of the median.[28] He examined the accuracy of 787 guesses of the weight of an ox at a country fair. The actual weight was 1198 pounds; the median guess was 1207. The guesses were markedly non-normally distributed (cf. Wisdom of the Crowd).

Galton's publication of Natural Inheritance in 1889 sparked the interest of a brilliant mathematician,

His work, and that of Galton, underpins many of the 'classical' statistical methods which are in common use today, including the

He also founded the

The second wave of mathematical statistics was pioneered by

Over the next seven years, he pioneered the principles of the design of experiments (see below) and elaborated his studies of analysis of variance. He furthered his studies of the statistics of small samples. Perhaps even more important, he began his systematic approach of the analysis of real data as the springboard for the development of new statistical methods. He developed computational algorithms for analyzing data from his balanced experimental designs. In 1925, this work resulted in the publication of his first book, Statistical Methods for Research Workers.[35] This book went through many editions and translations in later years, and it became the standard reference work for scientists in many disciplines. In 1935, this book was followed by The Design of Experiments, which was also widely used.

In addition to analysis of variance, Fisher named and promoted the method of

Other important contributions at this time included Charles Spearman's rank correlation coefficient, which was a useful extension of the Pearson correlation coefficient. William Sealy Gosset, the English statistician better known under his pseudonym of Student, introduced Student's t-distribution, a continuous probability distribution useful in situations where the sample size is small and the population standard deviation is unknown.
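One way to see how the rank correlation coefficient extends the Pearson coefficient is to compute the Pearson coefficient on the ranks of the data rather than on the raw values. The sketch below does this in plain Python; it assumes there are no tied values, and the data and function names are invented for the example.

```python
from math import sqrt

def ranks(values):
    """Rank values from 1 (smallest) upward; assumes no ties."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0] * len(values)
    for rank, index in enumerate(order, start=1):
        result[index] = rank
    return result

def pearson(xs, ys):
    """Pearson product-moment correlation of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)

def spearman(xs, ys):
    """Rank correlation: the Pearson coefficient applied to ranks (no ties)."""
    return pearson(ranks(xs), ranks(ys))

# Hypothetical data: y increases with x, but not linearly (y = x ** 3).
xs = [1, 2, 3, 4, 5]
ys = [1, 8, 27, 64, 125]

print(pearson(xs, ys), spearman(xs, ys))
```

For this monotonic but non-linear relationship the rank coefficient is exactly 1, while the Pearson coefficient on the raw values falls short of 1.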

Egon Pearson (Karl's son) and Jerzy Neyman introduced the concepts of "Type II" error, power of a test and confidence intervals. Jerzy Neyman in 1934 showed that stratified random sampling was in general a better method of estimation than purposive (quota) sampling.[38]

Design of experiments

In 1747, while serving as surgeon on HM Bark Salisbury,

Lind is today often described as a one-factor-at-a-time experimenter.

A theory of statistical inference was developed by

Peirce's experiment inspired other researchers in psychology and education, who developed a research tradition of randomized experiments in laboratories and specialized textbooks in the 1800s.

The use of a sequence of experiments, where the design of each may depend on the results of previous experiments, including the possible decision to stop experimenting, was pioneered[47] by Abraham Wald in the context of sequential tests of statistical hypotheses.[48] Surveys are available of optimal sequential designs,[49] and of adaptive designs.[50] One specific type of sequential design is the "two-armed bandit", generalized to the multi-armed bandit, on which early work was done by Herbert Robbins in 1952.[51]

The term "design of experiments" (DOE) derives from early statistical work performed by Sir Ronald Fisher. He was described by Anders Hald as "a genius who almost single-handedly created the foundations for modern statistical science."[52] Fisher initiated the principles of design of experiments and elaborated on his studies of "analysis of variance". Perhaps even more important, Fisher began his systematic approach to the analysis of real data as the springboard for the development of new statistical methods. He began to pay particular attention to the labour involved in the necessary computations performed by hand, and developed methods that were as practical as they were founded in rigour. In 1925, this work culminated in the publication of his first book, Statistical Methods for Research Workers.[53] This went into many editions and translations in later years, and became a standard reference work for scientists in many disciplines.[54]

A methodology for designing experiments was proposed by Ronald A. Fisher, in his innovative book The Design of Experiments (1935) which also became a standard.[55][56][57][58] As an example, he described how to test the hypothesis that a certain lady could distinguish by flavour alone whether the milk or the tea was first placed in the cup. While this sounds like a frivolous application, it allowed him to illustrate the most important ideas of experimental design: see Lady tasting tea.
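The arithmetic behind the tea-tasting example can be sketched as follows. The text above does not state how many cups were used; the code assumes the commonly described setup of eight cups, four with milk poured first, with the taster told to pick out exactly four. Under pure guessing, the number of correct picks then follows a hypergeometric distribution. The function name and defaults are illustrative assumptions.

```python
from math import comb

def prob_correct(k, cups=8, milk_first=4):
    """Chance of exactly k correct milk-first picks when guessing at random.

    Assumes the taster selects exactly `milk_first` cups out of `cups`
    (an assumed design, not stated in the text).
    """
    ways_right = comb(milk_first, k)                      # correct cups chosen
    ways_wrong = comb(cups - milk_first, milk_first - k)  # incorrect cups chosen
    return ways_right * ways_wrong / comb(cups, milk_first)

# Probability of identifying all four milk-first cups by luck alone.
p_all_correct = prob_correct(4)
print(p_all_correct)
```

Under these assumptions a perfect score by chance has probability 1 in 70, which is why a perfect performance would count as evidence against the hypothesis of pure guessing.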

Advances in agricultural science served to meet the combination of larger city populations and fewer farms. But for crop scientists to take due account of widely differing geographical growing climates and needs, it was important to differentiate local growing conditions. To extrapolate experiments on local crops to a national scale, they had to extend crop sample testing economically to overall populations. As statistical methods advanced (primarily the efficacy of designed experiments instead of one-factor-at-a-time experimentation), representative factorial design of experiments began to enable the meaningful extension, by inference, of experimental sampling results to the population as a whole.[citation needed] It remained hard, however, to decide how representative the chosen crop sample was.[citation needed] Factorial design methodology showed how to estimate and correct for any random variation within the sample and also in the data collection procedures.

Bayesian statistics

The term Bayesian refers to

After the 1920s,

In the 20th century, the ideas of Laplace were further developed in two different directions, giving rise to objective and subjective currents in Bayesian practice. In the objectivist stream, the statistical analysis depends on only the model assumed and the data analysed.[66] No subjective decisions need to be involved. In contrast, "subjectivist" statisticians deny the possibility of fully objective analysis for the general case.

In the further development of Laplace's ideas, subjective ideas predate objectivist positions. The idea that 'probability' should be interpreted as 'subjective degree of belief in a proposition' was proposed, for example, by

Objective Bayesian inference was further developed by

In the 1980s, there was a dramatic growth in research and applications of Bayesian methods, mostly attributed to the discovery of Markov chain Monte Carlo methods, which removed many of the computational problems, and to an increasing interest in nonstandard, complex applications.[71] Despite the growth of Bayesian research, most undergraduate teaching is still based on frequentist statistics.[72] Nonetheless, Bayesian methods are widely accepted and used, for example in the field of machine learning.[73]

References

- ISBN 978-0-374-53041-9.

- Thucydides (1985). History of the Peloponnesian War. New York: Penguin Books, Ltd. p. 204.

- Encyclopædia Britannica 2006 Ultimate Reference Suite DVD. Retrieved on 2008-03-04.

- ISSN 1573-0816.

- .

- ISBN 978-0231555647.

- ISBN 978-1-4020-6036-6.

- S2CID 186209819.

- ISBN 978-0-471-16068-7

- ISBN 978-0-412-44980-2

- ISBN 978-0-67440341-3.

- ISBN 978-0-387-95329-8

- Hald, Anders (1998), "Chapter 4. Chance or Design: Tests of Significance", A History of Mathematical Statistics from 1750 to 1930, Wiley, p. 65

- de Moivre, A. (1738) The doctrine of chances. Woodfall

- Laplace, P-S (1774). "Mémoire sur la probabilité des causes par les évènements". Mémoires de l'Académie Royale des Sciences Présentés par Divers Savants. 6: 621–656.

- JSTOR 2965467

- Havil J (2003) Gamma: Exploring Euler's Constant. Princeton, NJ: Princeton University Press, p. 157

- C. S. Peirce (1873) Theory of errors of observations. Report of the Superintendent US Coast Survey, Washington, Government Printing Office. Appendix no. 21: 200-224

- ISBN 978-1483237930

- Keynes, JM (1921) A treatise on probability. Pt II Ch XVII §5 (p 201)

- Galton F (1881) Report of the Anthropometric Committee pp 245-260. Report of the 51st Meeting of the British Association for the Advancement of Science

- Stigler (1986, Chapter 5: Quetelet's Two Attempts)

- ISBN 9780405066283.

- Stigler (1986, Chapter 9: The Next Generation: Edgeworth)

- Bellhouse DR (1988) A brief history of random sampling methods. Handbook of statistics. Vol 6 pp 1-14 Elsevier

- JSTOR 2339344.

- doi:10.1038/015492a0.

- S2CID 4053860.

- Stigler (1986, Chapter 10: Pearson and Yule)

- JSTOR 27956805.

- .

- .

- .

- Jolliffe, I. T. (2002). Principal Component Analysis, 2nd ed. New York: Springer-Verlag.

- Box, R. A. Fisher, pp 93–166

- S2CID 18896230.

- Fisher RA (1925) Statistical methods for research workers, Edinburgh: Oliver & Boyd

- JSTOR 2342192

- PMID 9059193.

- ISBN 9780470530689.

- Charles Sanders Peirce and Joseph Jastrow (1885). "On Small Differences in Sensation". Memoirs of the National Academy of Sciences. 3: 73–83.

- S2CID 52201011.

- S2CID 143685203.

- S2CID 23526321.

- JSTOR 168276.

- JSTOR 2331929.

- Johnson, N.L. (1961). "Sequential analysis: a survey." Journal of the Royal Statistical Society, Series A. Vol. 124 (3), 372–411. (pages 375–376)

- Wald, A. (1945) "Sequential Tests of Statistical Hypotheses", Annals of Mathematical Statistics, 16 (2), 117–186.

- ISBN 978-0898710069

- ISBN 0-444-82061-2. (pages 151–180)

- .

- Hald, Anders (1998) A History of Mathematical Statistics. New York: Wiley. [page needed]

- ISBN 0-471-09300-9 (pp 93–166)

- ISBN 9780444508713.

- S2CID 145725524.

- JSTOR 2682986.

- JSTOR 2528399.

- S2CID 145725524.

- Stigler (1986, Chapter 3: Inverse Probability)

- Hald (1998)[page needed]

- Lucien Le Cam (1986) Asymptotic Methods in Statistical Decision Theory: Pages 336 and 618–621 (von Mises and Bernstein).

- Stephen E. Fienberg (2006) When did Bayesian Inference become "Bayesian"? Bayesian Analysis, 1 (1), 1–40. See page 5.

- doi:10.1214/08-ba306.

- S2CID 46968744.

- Jeff Miller, "Earliest Known Uses of Some of the Words of Mathematics (B)" "The term Bayesian entered circulation around 1950. R. A. Fisher used it in the notes he wrote to accompany the papers in his Contributions to Mathematical Statistics (1950). Fisher thought Bayes's argument was all but extinct for the only recent work to take it seriously was Harold Jeffreys's Theory of Probability (1939). In 1951 L. J. Savage, reviewing Wald's Statistical Decisions Functions, referred to "modern, or unBayesian, statistical theory" ("The Theory of Statistical Decision," Journal of the American Statistical Association, 46, p. 58.). Soon after, however, Savage changed from being an unBayesian to being a Bayesian."

- ISBN 9780444515391.

- ISBN 0-415-18276-X, pp 50–1

- ISBN 0-521-59271-2

- O'Connor, John J.; Robertson, Edmund F., "History of statistics", MacTutor History of Mathematics Archive, University of St Andrews

- Bernardo, J. M. and Smith, A. F. M. (1994). "Bayesian Theory". Chichester: Wiley.

- MR 2082155.

- Bernardo, J. M. (2006). "A Bayesian Mathematical Statistics Primer" (PDF). Proceedings of the Seventh International Conference on Teaching Statistics [CDROM]. Salvador (Bahia), Brazil: International Association for Statistical Education.

- ISBN 978-0387310732

Bibliography

- Freedman, D. (1999). "From association to causation: Some remarks on the history of statistics". Statistical Science. 14 (3): 243–258.

- ISBN 978-0-471-47129-5.

- ISBN 978-0-471-17912-2.

- Kotz, S., Johnson, N.L. (1992, 1992, 1997). Breakthroughs in Statistics, Vols I, II, III. Springer. ISBN 0-387-94989-5

- ISBN 978-0-02-850120-8.

- ISBN 0-7167-4106-7

- ISBN 978-0-674-40341-3.

- Stigler, Stephen M. (1999) Statistics on the Table: The History of Statistical Concepts and Methods. Harvard University Press. ISBN 0-674-83601-4

- David, H. A. (1995). "First (?) Occurrence of Common Terms in Mathematical Statistics". JSTOR 2684625.

External links

- JEHPS: Recent publications in the history of probability and statistics

- Electronic Journ@l for History of Probability and Statistics/Journ@l Electronique d'Histoire des Probabilités et de la Statistique

- Figures from the History of Probability and Statistics (Univ. of Southampton)

- Materials for the History of Statistics (Univ. of York)

- Probability and Statistics on the Earliest Uses Pages (Univ. of Southampton)

- Earliest Uses of Symbols in Probability and Statistics on Earliest Uses of Various Mathematical Symbols