Statistics: Difference between revisions

Spikeylegs (talk | contribs) →Statistical computing: added examples of computing software. |

→Statistical computing: re-ordered software examples to be listed alphabetically. |


Line 239:

The rapid and sustained increases in computing power starting from the second half of the 20th century have had a substantial impact on the practice of statistical science. Early statistical models were almost always from the class of [[linear model]]s, but powerful computers, coupled with suitable numerical [[algorithms]], caused an increased interest in [[Nonlinear regression|nonlinear models]] (such as [[neural networks]]) as well as the creation of new types, such as [[generalized linear model]]s and [[multilevel model]]s. |

The rapid and sustained increases in computing power starting from the second half of the 20th century have had a substantial impact on the practice of statistical science. Early statistical models were almost always from the class of [[linear model]]s, but powerful computers, coupled with suitable numerical [[algorithms]], caused an increased interest in [[Nonlinear regression|nonlinear models]] (such as [[neural networks]]) as well as the creation of new types, such as [[generalized linear model]]s and [[multilevel model]]s. |

||

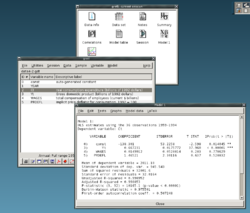

Increased computing power has also led to the growing popularity of computationally intensive methods based on [[Resampling (statistics)|resampling]], such as permutation tests and the [[Bootstrapping (statistics)|bootstrap]], while techniques such as [[Gibbs sampling]] have made use of Bayesian models more feasible. The computer revolution has implications for the future of statistics with new emphasis on "experimental" and "empirical" statistics. A large number of both general and special purpose [[List of statistical packages|statistical software]] are now available. Examples of available software capable of complex statistical computation include programs such as [[SAS (software) | SAS]], [[SPSS]], [[R (programming language) | R |

Increased computing power has also led to the growing popularity of computationally intensive methods based on [[Resampling (statistics)|resampling]], such as permutation tests and the [[Bootstrapping (statistics)|bootstrap]], while techniques such as [[Gibbs sampling]] have made use of Bayesian models more feasible. The computer revolution has implications for the future of statistics with new emphasis on "experimental" and "empirical" statistics. A large number of both general and special purpose [[List of statistical packages|statistical software]] are now available. Examples of available software capable of complex statistical computation include programs such as [[Mathematica]], [[SAS (software) | SAS]], [[SPSS]], and [[R (programming language) | R]]. |


===Statistics applied to mathematics or the arts=== |


Revision as of 15:45, 22 January 2018

Statistics is a branch of

When

Two main statistical methods are used in data analysis: descriptive statistics, which summarize data from a sample using indexes such as the mean or standard deviation, and inferential statistics, which draw conclusions from data that are subject to random variation (e.g., observational errors, sampling variation).[3] Descriptive statistics are most often concerned with two sets of properties of a distribution (sample or population): central tendency (or location) seeks to characterize the distribution's central or typical value, while dispersion (or variability) characterizes the extent to which members of the distribution depart from its center and each other. Inferences on mathematical statistics are made under the framework of probability theory, which deals with the analysis of random phenomena.
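As a small illustration of the descriptive measures named above, the following sketch computes two measures of central tendency and two of dispersion with Python's standard `statistics` module. The sample values are hypothetical and chosen only to make the arithmetic easy to follow.

```python
import statistics

# Hypothetical sample of eight measurements (illustrative values only).
sample = [2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0]

# Central tendency: the arithmetic mean and the median.
mean = statistics.mean(sample)
median = statistics.median(sample)

# Dispersion: the sample standard deviation divides by n - 1,
# the population standard deviation divides by n.
sample_sd = statistics.stdev(sample)
population_sd = statistics.pstdev(sample)

print(mean, median, sample_sd, population_sd)
```

Which standard deviation is appropriate depends on whether the data are treated as a sample or as the whole population.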

A standard statistical procedure involves the

Measurement processes that generate statistical data are also subject to error. Many of these errors are classified as random (noise) or systematic (bias), but other types of errors (e.g., blunder, such as when an analyst reports incorrect units) can also be important. The presence of missing data or censoring may result in biased estimates and specific techniques have been developed to address these problems.

Statistics can be said to have begun in ancient civilization, going back at least to the 5th century BC, but it was not until the 18th century that it started to draw more heavily from calculus and probability theory.

Scope

Some definitions are:

- Merriam-Webster dictionary defines statistics as "a branch of mathematics dealing with the collection, analysis, interpretation, and presentation of masses of numerical data."[5]

- Statistician Sir Arthur Lyon Bowley defines statistics as "Numerical statements of facts in any department of inquiry placed in relation to each other."[6]

Statistics is described either as a mathematical body of science that pertains to the collection, analysis, interpretation or explanation, and presentation of data,[7] or as a branch of mathematics.[8] Some consider statistics to be a distinct mathematical science rather than a branch of mathematics. While many scientific investigations make use of data, statistics is concerned with the use of data in the context of uncertainty and decision making in the face of uncertainty.[9][10]

Mathematical statistics

Mathematical statistics is the application of

Overview

In applying statistics to a problem, it is common practice to start with a population or process to be studied. Populations can be diverse topics such as "all persons living in a country" or "every atom composing a crystal".

Ideally, statisticians compile data about the entire population (an operation called

When a census is not feasible, a chosen subset of the population called a

Data collection

Sampling

When full census data cannot be collected, statisticians collect sample data by developing specific experiment designs and survey samples. Statistics itself also provides tools for prediction and forecasting through statistical models.

To use a sample as a guide to an entire population, it is important that it truly represents the overall population. Representative sampling assures that inferences and conclusions can safely extend from the sample to the population as a whole. A major problem lies in determining the extent that the sample chosen is actually representative. Statistics offers methods to estimate and correct for any bias within the sample and data collection procedures. There are also methods of experimental design for experiments that can lessen these issues at the outset of a study, strengthening its capability to discern truths about the population.
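A minimal sketch of drawing a simple random sample and comparing it with the population it came from is given below. The population here is simulated and entirely hypothetical; in practice the population mean is unknown, which is why the sampling error can only be estimated.

```python
import random
import statistics

rng = random.Random(0)  # fixed seed so the sketch is reproducible

# Hypothetical population: 10,000 simulated values (an assumption for illustration).
population = [rng.gauss(50.0, 10.0) for _ in range(10_000)]

# Simple random sample without replacement: every unit has the same
# chance of being selected, which is one way to aim for representativeness.
sample = rng.sample(population, 200)

population_mean = statistics.mean(population)
sample_mean = statistics.mean(sample)

# Difference between the sample estimate and the population value.
sampling_error = sample_mean - population_mean
print(round(population_mean, 2), round(sample_mean, 2), round(sampling_error, 2))
```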

Sampling theory is part of the

Experimental and observational studies

A common goal for a statistical research project is to investigate causality, and in particular to draw a conclusion on the effect of changes in the values of predictors or independent variables on dependent variables. There are two major types of causal statistical studies: experimental studies and observational studies. In both types of studies, the effect of differences of an independent variable (or variables) on the behavior of the dependent variable is observed. The difference between the two types lies in how the study is actually conducted. Each can be very effective. An experimental study involves taking measurements of the system under study, manipulating the system, and then taking additional measurements using the same procedure to determine if the manipulation has modified the values of the measurements. In contrast, an observational study does not involve experimental manipulation. Instead, data are gathered and correlations between predictors and response are investigated. While the tools of data analysis work best on data from

Experiments

The basic steps of a statistical experiment are:

- Planning the research, including finding the number of replicates of the study, using the following information: preliminary estimates regarding the size of experimental variability. Consideration of the selection of experimental subjects and the ethics of research is necessary. Statisticians recommend that experiments compare (at least) one new treatment with a standard treatment or control, to allow an unbiased estimate of the difference in treatment effects.

- Writing the experimental protocol that will guide the performance of the experiment and which specifies the primary analysis of the experimental data.

- Performing the experiment following the experimental protocol and analyzing the data following the experimental protocol.

- Further examining the data set in secondary analyses, to suggest new hypotheses for future study.

- Documenting and presenting the results of the study.

Experiments on human behavior have special concerns. The famous

Observational study

An example of an observational study is one that explores the association between smoking and lung cancer. This type of study typically uses a survey to collect observations about the area of interest and then performs statistical analysis. In this case, the researchers would collect observations of both smokers and non-smokers, perhaps through a

Types of data

Various attempts have been made to produce a taxonomy of levels of measurement. The psychophysicist Stanley Smith Stevens defined nominal, ordinal, interval, and ratio scales. Nominal measurements do not have meaningful rank order among values, and permit any one-to-one transformation. Ordinal measurements have imprecise differences between consecutive values, but have a meaningful order to those values, and permit any order-preserving transformation. Interval measurements have meaningful distances between measurements defined, but the zero value is arbitrary (as in the case with longitude and temperature measurements in Celsius or Fahrenheit), and permit any linear transformation. Ratio measurements have both a meaningful zero value and the distances between different measurements defined, and permit any rescaling transformation.

Because variables conforming only to nominal or ordinal measurements cannot be reasonably measured numerically, sometimes they are grouped together as

Other categorizations have been proposed. For example, Mosteller and Tukey (1977)[16] distinguished grades, ranks, counted fractions, counts, amounts, and balances. Nelder (1990)[17] described continuous counts, continuous ratios, count ratios, and categorical modes of data. See also Chrisman (1998),[18] van den Berg (1991).[19]

The issue of whether or not it is appropriate to apply different kinds of statistical methods to data obtained from different kinds of measurement procedures is complicated by issues concerning the transformation of variables and the precise interpretation of research questions. "The relationship between the data and what they describe merely reflects the fact that certain kinds of statistical statements may have truth values which are not invariant under some transformations. Whether or not a transformation is sensible to contemplate depends on the question one is trying to answer" (Hand, 2004, p. 82).[20]

Terminology and theory of inferential statistics

Statistics, estimators and pivotal quantities

Consider

A statistic is a random variable that is a function of the random sample, but not a function of unknown parameters. The probability distribution of the statistic, though, may have unknown parameters.

Consider now a function of the unknown parameter: an

A random variable that is a function of the random sample and of the unknown parameter, but whose probability distribution does not depend on the unknown parameter is called a

Between two estimators of a given parameter, the one with lower

Other desirable properties for estimators include:

This still leaves the question of how to obtain estimators in a given situation and carry out the computation. Several methods have been proposed: the

Null hypothesis and alternative hypothesis

Interpretation of statistical information can often involve the development of a null hypothesis which is usually (but not necessarily) that no relationship exists among variables or that no change occurred over time.[22][23]

The best illustration for a novice is the predicament encountered in a criminal trial. The null hypothesis, H0, asserts that the defendant is innocent, whereas the alternative hypothesis, H1, asserts that the defendant is guilty. The indictment comes because of suspicion of the guilt. The H0 (status quo) stands in opposition to H1 and is maintained unless H1 is supported by evidence "beyond a reasonable doubt". However, "failure to reject H0" in this case does not imply innocence, but merely that the evidence was insufficient to convict. So the jury does not necessarily accept H0 but fails to reject H0. While one cannot "prove" a null hypothesis, one can test how close it is to being true with a

What

Error

Working from a null hypothesis, two basic forms of error are recognized:

- Type I errors where the null hypothesis is falsely rejected giving a "false positive".

- Type II errors where the null hypothesis fails to be rejected and an actual difference between populations is missed giving a "false negative".
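The trade-off between the two error types can be sketched numerically. The example below assumes a one-sided z-test with known standard deviation, which is a simplification chosen for illustration and not a method named in the text; the function name `type_ii_error` and all parameter values are hypothetical.

```python
from statistics import NormalDist


def type_ii_error(effect, sigma, n, alpha=0.05):
    """Type II error rate of a one-sided z-test of H0: mean = 0
    against H1: mean = effect (assumed positive), with known sigma."""
    std_normal = NormalDist()
    # Reject H0 when the standardized sample mean exceeds this value,
    # which fixes the type I error rate at alpha.
    z_crit = std_normal.inv_cdf(1 - alpha)
    # Under H1 the standardized sample mean is shifted by this amount.
    shift = effect * n ** 0.5 / sigma
    # Probability of failing to reject H0 although H1 is true.
    return std_normal.cdf(z_crit - shift)


beta_small_n = type_ii_error(effect=0.5, sigma=1.0, n=10)
beta_large_n = type_ii_error(effect=0.5, sigma=1.0, n=40)
print(round(beta_small_n, 3), round(beta_large_n, 3))
```

With the type I error rate held fixed, a larger sample lowers the type II error rate in this setting.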

A

Many statistical methods seek to minimize the

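Measurement processes that generate statistical data are also subject to error. Many of these errors are classified as random (noise) or systematic (bias), but other types of errors (e.g., blunder, such as when an analyst reports incorrect units) can also be important. The presence of missing data or censoring may result in biased estimates and specific techniques have been developed to address these problems.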

Interval estimation

Most studies only sample part of a population, so results don't fully represent the whole population. Any estimates obtained from the sample only approximate the population value.

In principle confidence intervals can be symmetrical or asymmetrical. An interval can be asymmetrical because it works as lower or upper bound for a parameter (left-sided interval or right-sided interval), but it can also be asymmetrical because the two-sided interval is built violating symmetry around the estimate. Sometimes the bounds for a confidence interval are reached asymptotically and these are used to approximate the true bounds.
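A two-sided interval that is symmetric around the estimate can be sketched as follows. This is a minimal example assuming a normal approximation for the sample mean; the helper name `mean_confidence_interval` and the data are hypothetical, and for small samples a t-based interval would usually be preferred.

```python
import statistics
from statistics import NormalDist


def mean_confidence_interval(sample, level=0.95):
    """Two-sided, symmetric normal-approximation interval for the mean."""
    n = len(sample)
    estimate = statistics.mean(sample)
    # Estimated standard error of the sample mean.
    standard_error = statistics.stdev(sample) / n ** 0.5
    # Standard normal quantile that leaves (1 - level) / 2 in each tail.
    z = NormalDist().inv_cdf(0.5 + level / 2)
    return estimate - z * standard_error, estimate + z * standard_error


# Hypothetical sample (illustrative values only).
sample = [2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0]
low, high = mean_confidence_interval(sample)
print(round(low, 2), round(high, 2))
```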

Significance

Statistics rarely give a simple Yes/No type answer to the question under analysis. Interpretation often comes down to the level of statistical significance applied to the numbers, which is usually judged through the p-value: the probability, assuming the null hypothesis is true, of observing a result at least as extreme as the one obtained.

The standard approach

Referring to statistical significance does not necessarily mean that the overall result is significant in real world terms. For example, in a large study of a drug it may be shown that the drug has a statistically significant but very small beneficial effect, such that the drug is unlikely to help the patient noticeably.

While in principle the acceptable level of statistical significance may be subject to debate, the p-value is the smallest significance level that allows the test to reject the null hypothesis. This is logically equivalent to saying that the p-value is the probability, assuming the null hypothesis is true, of observing a result at least as extreme as the test statistic. Therefore, the smaller the p-value, the lower the probability of committing type I error.
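The definition of the p-value as a tail probability under the null hypothesis can be made concrete with an exact binomial calculation. The scenario (9 successes in 10 trials under a null success probability of 0.5) and the function name are hypothetical, chosen only to keep the arithmetic small.

```python
from math import comb


def binomial_upper_p_value(successes, trials, p_null=0.5):
    """P(X >= successes) when X ~ Binomial(trials, p_null), i.e. the
    one-sided p-value for observing at least this many successes."""
    return sum(
        comb(trials, k) * p_null ** k * (1 - p_null) ** (trials - k)
        for k in range(successes, trials + 1)
    )


# Outcomes at least as extreme as 9 successes: 9 or 10 successes,
# which is (10 + 1) of the 1024 equally likely sequences under H0.
p_value = binomial_upper_p_value(9, 10)
print(p_value)  # 11/1024, about 0.0107
```

At a significance level of 0.05 this result would lead to rejecting the null hypothesis; at 0.01 it would not.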

Some problems are usually associated with this framework (See

- A difference that is highly statistically significant can still be of no practical significance, but it is possible to properly formulate tests to account for this. One response involves going beyond reporting only the significance level to include the p-value when reporting whether a hypothesis is rejected or accepted. The p-value, however, does not indicate the size or importance of the observed effect and can also seem to exaggerate the importance of minor differences in large studies. A better and increasingly common approach is to report confidence intervals. Although these are produced from the same calculations as those of hypothesis tests or p-values, they describe both the size of the effect and the uncertainty surrounding it.

- Fallacy of the transposed conditional, aka prosecutor's fallacy: criticisms arise because the hypothesis testing approach forces one hypothesis (the null hypothesis) to be favored, since what is being evaluated is probability of the observed result given the null hypothesis and not probability of the null hypothesis given the observed result. An alternative to this approach is offered by Bayesian inference, although it requires establishing a prior probability.[25]

- Rejecting the null hypothesis does not automatically prove the alternative hypothesis.

- Under fat-tailed distributions, p-values may be seriously miscomputed.[clarification needed]
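The transposed-conditional point in the list above can be illustrated with Bayes' rule. In this sketch the prior probability and the two likelihoods are invented numbers, and the function name `posterior_null` is hypothetical; the aim is only to show that the probability of the data given the null hypothesis and the probability of the null hypothesis given the data can differ widely.

```python
from fractions import Fraction


def posterior_null(prior_null, p_data_given_null, p_data_given_alt):
    """P(H0 | data) by Bayes' rule for two exhaustive hypotheses."""
    prior_alt = 1 - prior_null
    # Total probability of the data under both hypotheses.
    evidence = prior_null * p_data_given_null + prior_alt * p_data_given_alt
    return prior_null * p_data_given_null / evidence


# Assumed inputs: P(H0) = 9/10, P(data | H0) = 1/20, P(data | H1) = 4/5.
posterior = posterior_null(Fraction(9, 10), Fraction(1, 20), Fraction(4, 5))
print(posterior)  # 9/25
```

Here the data have probability 0.05 under the null hypothesis, yet the null hypothesis still has posterior probability 0.36 because of the prior that was assumed.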

Examples

Some well-known statistical

Misuse

Misuse of statistics can produce subtle, but serious errors in description and interpretation—subtle in the sense that even experienced professionals make such errors, and serious in the sense that they can lead to devastating decision errors. For instance, social policy, medical practice, and the reliability of structures like bridges all rely on the proper use of statistics.

Even when statistical techniques are correctly applied, the results can be difficult to interpret for those lacking expertise. The statistical significance of a trend in the data—which measures the extent to which a trend could be caused by random variation in the sample—may or may not agree with an intuitive sense of its significance. The set of basic statistical skills (and skepticism) that people need to deal with information in their everyday lives properly is referred to as statistical literacy.

There is a general perception that statistical knowledge is all-too-frequently intentionally misused by finding ways to interpret only the data that are favorable to the presenter.[26] A mistrust and misunderstanding of statistics is associated with the quotation, "There are three kinds of lies: lies, damned lies, and statistics". Misuse of statistics can be both inadvertent and intentional, and the book How to Lie with Statistics[26] outlines a range of considerations. In an attempt to shed light on the use and misuse of statistics, reviews of statistical techniques used in particular fields are conducted (e.g. Warne, Lazo, Ramos, and Ritter (2012)).[27]

Ways to avoid misuse of statistics include using proper diagrams and avoiding

To assist in the understanding of statistics Huff proposed a series of questions to be asked in each case:[32]

- Who says so? (Does he/she have an axe to grind?)

- How does he/she know? (Does he/she have the resources to know the facts?)

- What’s missing? (Does he/she give us a complete picture?)

- Did someone change the subject? (Does he/she offer us the right answer to the wrong problem?)

- Does it make sense? (Is his/her conclusion logical and consistent with what we already know?)

Misinterpretation: correlation

The concept of

History of statistical science

Statistical methods date back at least to the 5th century BC.[citation needed]

Some scholars pinpoint the origin of statistics to 1663, with the publication of Natural and Political Observations upon the Bills of Mortality by John Graunt.[33] Early applications of statistical thinking revolved around the needs of states to base policy on demographic and economic data, hence its stat- etymology. The scope of the discipline of statistics broadened in the early 19th century to include the collection and analysis of data in general. Today, statistics is widely employed in government, business, and natural and social sciences.

Its mathematical foundations were laid in the 17th century with the development of the

The modern field of statistics emerged in the late 19th and early 20th century in three stages.

Ronald Fisher coined the term null hypothesis during the Lady tasting tea experiment; such a hypothesis "is never proved or established, but is possibly disproved, in the course of experimentation".[40][41]

The second wave of the 1910s and 20s was initiated by

The final wave, which mainly saw the refinement and expansion of earlier developments, emerged from the collaborative work between Egon Pearson and Jerzy Neyman in the 1930s. They introduced the concepts of "Type II" error, power of a test and confidence intervals. Jerzy Neyman in 1934 showed that stratified random sampling was in general a better method of estimation than purposive (quota) sampling.[54]

Today, statistical methods are applied in all fields that involve decision making, for making accurate inferences from a collated body of data and for making decisions in the face of uncertainty based on statistical methodology. The use of modern computers has expedited large-scale statistical computations, and has also made possible new methods that are impractical to perform manually. Statistics continues to be an area of active research, for example on the problem of how to analyze big data.[55]

Applications

Applied statistics, theoretical statistics and mathematical statistics

"Applied statistics" comprises descriptive statistics and the application of inferential statistics.[56][57] Theoretical statistics concerns both the logical arguments underlying justification of approaches to statistical inference, as well as encompassing mathematical statistics. Mathematical statistics includes not only the manipulation of probability distributions necessary for deriving results related to methods of estimation and inference, but also various aspects of computational statistics and the design of experiments.

Machine learning and data mining

There are two applications for machine learning and data mining: data management and data analysis. Statistical tools are necessary for the data analysis.

Statistics in society

Statistics is applicable to a wide variety of

Statistical computing

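The rapid and sustained increases in computing power starting from the second half of the 20th century have had a substantial impact on the practice of statistical science. Early statistical models were almost always from the class of linear models, but powerful computers, coupled with suitable numerical algorithms, caused an increased interest in nonlinear models (such as neural networks) as well as the creation of new types, such as generalized linear models and multilevel models.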

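Increased computing power has also led to the growing popularity of computationally intensive methods based on resampling, such as permutation tests and the bootstrap, while techniques such as Gibbs sampling have made use of Bayesian models more feasible. The computer revolution has implications for the future of statistics with new emphasis on "experimental" and "empirical" statistics. A large number of both general and special purpose statistical software packages are now available. Examples of available software capable of complex statistical computation include programs such as Mathematica, SAS, SPSS, and R.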
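One computationally intensive method of this kind, the bootstrap, can be sketched in a few lines. The example below is a minimal percentile-bootstrap interval for a sample mean; the function name, the number of resamples, the seed, and the data are all assumptions made for illustration, and refinements such as bias correction are left out.

```python
import random
import statistics


def bootstrap_percentile_interval(sample, stat=statistics.mean,
                                  n_resamples=2000, level=0.95, seed=0):
    """Percentile bootstrap interval for a statistic of the sample."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    n = len(sample)
    # Resample with replacement, recompute the statistic each time,
    # and sort the resulting estimates.
    estimates = sorted(
        stat(rng.choices(sample, k=n)) for _ in range(n_resamples)
    )
    # Read the interval bounds off the empirical distribution.
    lower_index = int((1 - level) / 2 * n_resamples)
    upper_index = int((1 + level) / 2 * n_resamples) - 1
    return estimates[lower_index], estimates[upper_index]


# Hypothetical sample (illustrative values only).
sample = [2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0]
low, high = bootstrap_percentile_interval(sample)
print(round(low, 2), round(high, 2))
```

The approach replaces an analytical formula for the sampling distribution with repeated computation, which is why it became practical only with inexpensive computing power.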

Statistics applied to mathematics or the arts

Traditionally, statistics was concerned with drawing inferences using a semi-standardized methodology that was "required learning" in most sciences. This has changed with use of statistics in non-inferential contexts. What was once considered a dry subject, taken in many fields as a degree-requirement, is now viewed enthusiastically.[according to whom?] Initially derided by some mathematical purists, it is now considered essential methodology in certain areas.

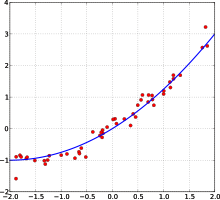

- In number theory, scatter plots of data generated by a distribution function may be transformed with familiar tools used in statistics to reveal underlying patterns, which may then lead to hypotheses.

- Methods of statistics, including predictive methods, are used in fractal geometry to create video works that are considered to have great beauty.[citation needed]

- The process art of Jackson Pollock relied on artistic experiments whereby underlying distributions in nature were artistically revealed.[citation needed] With the advent of computers, statistical methods were applied to formalize such distribution-driven natural processes to make and analyze moving video art.[citation needed]

- Methods of statistics may be used predictively in a Markov process that only works some of the time, the occasion of which can be predicted using statistical methodology.

- Statistics can be used to predictively create art, as in the statistical or stochastic music invented by Iannis Xenakis, where the music is performance-specific. Though this type of artistry does not always come out as expected, it does behave in ways that are predictable and tunable using statistics.

Specialized disciplines

Statistical techniques are used in a wide range of types of scientific and social research, including:

In addition, there are particular types of statistical analysis that have also developed their own specialised terminology and methodology:

Statistics forms a key basic tool in business and manufacturing as well. It is used to understand measurement systems variability, to control processes (as in statistical process control or SPC), to summarize data, and to make data-driven decisions. In these roles, it is a key tool, and perhaps the only reliable tool.

See also

- Abundance estimation

- Data science

- Glossary of probability and statistics

- List of academic statistical associations

- List of important publications in statistics

- List of national and international statistical services

- List of statistical packages (software)

- List of statistics articles

- List of university statistical consulting centers

- Notation in probability and statistics

- Foundations and major areas of statistics

References

- ^ ISBN 0-19-920613-9

- ^ Romijn, Jan-Willem (2014). "Philosophy of statistics". Stanford Encyclopedia of Philosophy.

- ^ Lund Research Ltd. "Descriptive and Inferential Statistics". statistics.laerd.com. Retrieved 2014-03-23.

- ^ "What Is the Difference Between Type I and Type II Hypothesis Testing Errors?". About.com Education. Retrieved 2015-11-27.

- ^ "Definition of STATISTICS". www.merriam-webster.com. Retrieved 2016-05-28.

- ^ "Essay on Statistics: Meaning and Definition of Statistics". Economics Discussion. 2014-12-02. Retrieved 2016-05-28.

- ISBN 978-0-201-15619-5. pp. 1–3

- ISBN 978-0-03-077945-9

- ISBN 978-0-88385-078-7.

- ISBN 978-0-495-05064-3.

- ISBN 0824706609.

- ISBN 0387945466.

- ISBN 978-0-521-67105-7

- PMID 17608932.

- ^ Rothman, Kenneth J; Greenland, Sander; Lash, Timothy, eds. (2008). "7". Modern Epidemiology (3rd ed.). Lippincott Williams & Wilkins. p. 100.

- ^ Mosteller, F., & Tukey, J. W. (1977). Data analysis and regression. Boston: Addison-Wesley.

- ^ Nelder, J. A. (1990). The knowledge needed to computerise the analysis and interpretation of statistical information. In Expert systems and artificial intelligence: the need for information about data. Library Association Report, London, March, 23–27.

- .

- ^ van den Berg, G. (1991). Choosing an analysis method. Leiden: DSWO Press

- ^ Hand, D. J. (2004). Measurement theory and practice: The world through quantification. London, UK: Arnold.

- ^ a b Piazza Elio, Probabilità e Statistica, Esculapio 2007

- ISBN 0521593468.

- ^ "Cohen (1994) The Earth Is Round (p < .05)". YourStatsGuru.com.

- ^ Rubin, Donald B.; Little, Roderick J. A., Statistical analysis with missing data, New York: Wiley 2002

- PMID 16060722.

- ^ ISBN 0-393-31072-8

- .

- ^ ISBN 978-0-12-373962-9.

- ^ .

- ^ Freund, J. E. (1988). "Modern Elementary Statistics". Credo Reference.

- ^ Huff, Darrell; Irving Geis (1954). How to Lie with Statistics. New York: Norton.

The dependability of a sample can be destroyed by [bias]... allow yourself some degree of skepticism.

- ^ Huff, Darrell; Irving Geis (1954). How to Lie with Statistics. New York: Norton.

- JSTOR 1400906

- ^ J. Franklin, The Science of Conjecture: Evidence and Probability before Pascal, Johns Hopkins Univ Pr 2002

- ^ Helen Mary Walker (1975). Studies in the history of statistical method. Arno Press.

- doi:10.1038/015492a0.

- .

- .

- ^ "Karl Pearson (1857–1936)". Department of Statistical Science – University College London.

- ^ Fisher|1971|loc=Chapter II. The Principles of Experimentation, Illustrated by a Psycho-physical Experiment, Section 8. The Null Hypothesis

- ^ OED quote: 1935 R. A. Fisher, The Design of Experiments ii. 19, "We may speak of this hypothesis as the 'null hypothesis', and the null hypothesis is never proved or established, but is possibly disproved, in the course of experimentation."

- .

- JSTOR 2682986.

- JSTOR 2528399.

- JSTOR 1161806.

- .

- ^ PMID 18811377.

- ^ Fisher, R.A. (1915) The evolution of sexual preference. Eugenics Review (7) 184:192

- ISBN 0-19-850440-3

- ^ Edwards, A.W.F. (2000) Perspectives: Anecdotal, Historial and Critical Commentaries on Genetics. The Genetics Society of America (154) 1419:1426

- ISBN 0-691-00057-3

- ^ Andersson, M. and Simmons, L.W. (2006) Sexual selection and mate choice. Trends, Ecology and Evolution (21) 296:302

- ^ Gayon, J. (2010) Sexual selection: Another Darwinian process. Comptes Rendus Biologies (333) 134:144

- JSTOR 2342192.

- ^ "Science in a Complex World - Big Data: Opportunity or Threat?". Santa Fe Institute.

- ISBN 978-1500815684

- ISBN 978-0-314-03309-3

Further reading

- Barbara Illowsky; Susan Dean (2014). Introductory Statistics. OpenStax CNX. ISBN 9781938168208.

- David W. Stockburger, Introductory Statistics: Concepts, Models, and Applications, 3rd Web Ed. Missouri State University.

- Stephen Jones, 2010. Statistics in Psychology: Explanations without Equations. Palgrave Macmillan. ISBN 9781137282392.

- Cohen, J. (1990). Things I have learned (so far). American Psychologist, 45, 1304-1312.

- Gigerenzer, G. (2004). Mindless statistics. Journal of Socio-Economics, 33, 587–606.

- Ioannidis, J. P. A. (2005). Why most published research findings are false. PLoS Medicine, 2, 696–701.

External links

- (Electronic Version): StatSoft, Inc. (2013). Electronic Statistics Textbook. Tulsa, OK: StatSoft.

- Online Statistics Education: An Interactive Multimedia Course of Study. Developed by Rice University (Lead Developer), University of Houston Clear Lake, Tufts University, and National Science Foundation.

- UCLA Statistical Computing Resources